Broader test for “obviousness” promises far-reaching consequences.

Joseph E. Gortych, Opticus IP Law PLLC

The increasing prominence of intellectual property (IP) in society has prompted the US Supreme Court to take on more cases related to this topic, particularly those involving high-tech business. On April 30, the Supreme Court handed down decisions in two patent-related cases. One case, KSR v. Teleflex, involved the question of what constitutes the proper analysis for determining whether an invention is “obvious” and thus unpatentable.

In the KSR case, the court unanimously rejected the narrow application of the so-called “teaching, suggestion or motivation” (TSM) test often used by the Court of Appeals for the Federal Circuit (the highest patent court below the Supreme Court), and it re-established the more expansive and flexible approach of its precedents, which makes it easier to find an invention obvious. Although the invention at issue was an automobile accelerator pedal, the decision affects all existing patents and pending patent applications. Thus, the decision likely will affect patenting in high-tech business.

Legal background

The nation’s first Patent Act, enacted in 1790, did not include a requirement that an invention be “non-obvious.” This requirement was added through court decisions involving disputes over patent validity. The Supreme Court first addressed the obviousness issue in Hotchkiss v. Greenwood in 1851. The patented invention at issue was a method of making knobs (e.g., doorknobs) out of clay or porcelain using the same methods used to make knobs out of wood and metal. The court invalidated the patent as being “destitute of ingenuity or invention” and “the work of a skillful mechanic, not that of the inventor.”

The nonobviousness requirement ultimately was incorporated into statutory patent law in 1952. In 1966, the Supreme Court granted certiorari in three patent cases involving obviousness and set forth its obviousness analysis in Graham v. John Deere. In this case, the court looked to its analysis in the Hotchkiss case and set forth three main factors (the “Graham factors”) to be considered in determining obviousness: i) the scope and content of the prior art, ii) the differences between the prior art and the claimed invention, and iii) the level of ordinary skill in the pertinent art.

When these factors are applied to an invention made up of a combination of pre-existing elements (which encompasses virtually all inventions), the combination must show “some change in their respective functions” or produce a “new and different function” or “an effect greater than the sum of the several effects taken separately.”

The court also stated that such “secondary considerations as commercial success, long-felt but unsolved needs, failure of others, etc.” may be useful in shedding light on the question of obviousness. The court realized that even with the Graham factors, questions of obviousness would be difficult to address uniformly in all situations; the law would need to develop over time as more and more cases were decided based on the Graham factors.

Origins of the TSM test

The TSM test appears nowhere in the Supreme Court’s precedents. The test requires that the prior art include a “teaching, suggestion or motivation” that would lead a person skilled in the particular art to combine the references to arrive at the claimed invention. Its rationale is that, because most, if not all, inventions are formed from known elements, an invention can be made to seem obvious in hindsight by piecing it together from disparate prior art references.

The TSM test as an analytical tool is designed to avoid such hindsight reconstruction of the invention by requiring a nexus between the prior art and the invention. It was first introduced by the Court of Customs and Patent Appeals (the predecessor to the Court of Appeals for the Federal Circuit) as early as 1938 but was applied with increasing regularity (though not exclusively) starting in the late 1960s and early 1970s.

As the test grew in prominence and focus, obviousness became increasingly hard to prove, which in effect raised the bar for holding an invention obvious. This provided a window of opportunity for corporations to add patents based on minor improvements to existing technologies to their portfolios. The window also was exploited by those seeking unduly broad claims to inventions to extract licensing royalties. These are the same folks whom the prescient Supreme Court slammed over a century ago as “a class of speculative schemers … [who] lay a heavy tax upon the industry of the country, without contributing anything to the real advancement of the art.”

However, not every obviousness case decided by the district courts and the Court of Appeals for the Federal Circuit, and not every patent application in the US Patent and Trademark Office where obviousness was in play, involved a narrow application of the TSM test. When the test was employed, the conclusion, in many instances, was the same as it would have been if the Graham factors had been the determinants.

Patenting in high-tech business

The Supreme Court’s 2007 decision to restore the obviousness bar to its industrial revolution level stands in contrast to patent modernity and evolution in the information age. In the days of yore, the IP strategy was simple: Patents protected products and provided “freedom of action” to make and sell them. Today, patents are high-tech business tools obtained and leveraged for an intended purpose according to an IP strategy defined by a company’s business plans. Patents often now are the products in that they are produced (even mass-produced by many companies), bought and sold (assigned), leased (licensed), traded (cross-licensed) and sued over. They can, and do, vary widely in quality and value, and IP licensing and tech transfer efforts resemble an intellectual property Turkish bazaar.

Although an ad hoc intellectual property strategy worked in the past, today sophisticated high-tech companies use a well-thought-out strategy designed to optimize IP business value. This often means creating patent portfolios that cover tracts of IP space and that typically exceed the technological breadth of the company’s product line. The IP portfolio represents a stockpile of trading chips to be used for cross-licensing rather than paying royalties or litigating patent disputes. These portfolios — not individual patents — are now the way most savvy companies establish freedom of action.

All inventions exist in an IP space that also contains their related predecessor inventions and knowledge, collectively called “prior art.” Inventions that fall entirely within a single prior art reference or that are, in their entirety, known in the art lack novelty and are unpatentable because they are considered “anticipated.” Other inventions may not fall squarely within a single prior art reference but, in the words of the Supreme Court, offer nothing more than “the predictable use of prior art elements according to their established functions.” Such inventions can be thought of as falling within a “sphere of obviousness” in IP space, wherein the invention lies so close to certain prior art that it falls within its influence and renders the invention unpatentable.

Obviousness wormhole

In the Graham case, the court enunciated “secondary considerations” to be weighed along with the three Graham factors when assessing obviousness. Secondary considerations include evidence of commercial success, fulfillment of a long-felt need and the failure of others to solve a problem that the invention solves. The Court of Appeals for the Federal Circuit has since held that secondary considerations are not limited to cases where patentability is a “close question.” It also has observed that the secondary considerations are not secondary in importance and that they often are the most probative objective evidence in assessing obviousness.

Of the secondary considerations, commercial success is perhaps the most interesting in that an invention can start out as “obvious” but can morph into something that is nonobvious while the patent examination process is ongoing. This is because typically it takes years to get a patent issued, and during that time a product embodying an invention can be designed, manufactured, marketed and sold in huge numbers.

Secondary considerations are an important aspect of the obviousness analysis in that any one of them, when backed up by the right evidence, can be a wormhole in IP space that transports an invention from inside the obviousness sphere to outside of it with the surprising effect of making an otherwise unpatentable invention patentable. As more inventions are challenged as being unpatentable for obviousness under the Graham factors, one can expect to see more frequent and clever uses of the secondary considerations to argue against obviousness.

Section 101 of the patent law (35 USC § 101) states: “Whoever invents or discovers any new and useful process, machine, manufacture, or composition of matter, or any new and useful improvement thereof, may obtain a patent therefor.…” Most patented inventions fall into the “useful improvement thereof” category.

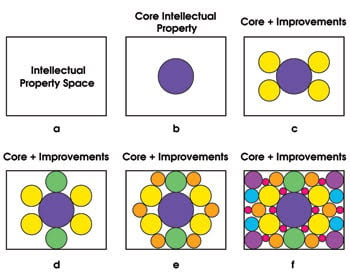

But how can so many patents on similar inventions be possible? The answer is explained in large part by the fractal nature of IP space. Figure 1 illustrates the IP space associated with a truly “new” groundbreaking invention, such as the laser. The space starts out with “core intellectual property” that occupies a central position. However, improvements to the core (e.g., tunable lasers, solid-state lasers, different lasing media) show up quickly and start filling up the space (Figure 1 c-d). But these improvements open their own IP subspaces that, in turn, get filled with further improvements. For example, the semiconductor laser IP subspace contains vertical-cavity surface-emitting laser (VCSEL) inventions, lasers using different materials, etc. And then the VCSEL subspace opens and begins to be filled with VCSELs of different geometries, materials, output powers, output wavelengths, etc. Thus, the process illustrated by Figure 1 is repeated for all of the IP subspaces.

Figure 1. Intellectual property space (a) begins with the “core” intellectual property (b), and as improvements to the core take place, they fill up the space (c-f).

The theoretical maximum density of a given IP space is reached when the patents therein are spaced apart by the very limits of obviousness. For commercially valuable technologies, the profit motive serves to compress the associated IP spaces toward this density limit. The TSM test seems to have played a role in allowing certain IP spaces (nanotechnology and holography, to name two) to be packed with patents to black-hole density. Many hope that the KSR case will prevent the formation of such warped IP spaces.

Balancing validity and infringeability

Imbalances in the infringeability-invalidity equation tend to breed dissatisfaction with the patent system. The KSR case holds the promise that it will be easier to invalidate over-reaching patents and restore what many believe is the proper balance between claim infringeability and claim validity.

What constitutes a patented “invention” is not the relatively lengthy “detailed description” of the invention but rather the relatively terse “claims” at the end of the patent document. It is important to understand that an invention can be inherently patentable but claimed in a manner that renders it unpatentable. This happens frequently because many inventors (or more often in high-tech business, their assignees) seek to capture all the IP space they can, right up to the limits of the prior art — which is to say, to the limits of patentability — regardless of how the patent is ultimately intended to be leveraged. When those filing patent applications do not know the full scope and content of all the prior art (which often is the case), this claiming approach makes for many potential infringers, but also makes it easy to wander into the sphere of obviousness.

At the other extreme, virtually any invention is patentable if enough claim limitations are added. However, this may require such an exquisitely detailed claim that even intentional infringement is nearly impossible.

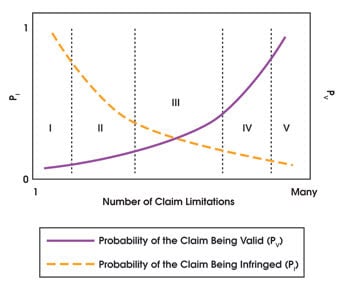

Figure 2. This plot represents a simplified view of the relationship between claim validity and claim infringeability. The number of limitations in a given claim is plotted against the probability of the claim being valid and the probability of the claim being infringed. Literal patent infringement occurs when an apparatus or method includes all of a claim’s limitations.

The figure is divided into Zones I through V. As an example of how these zones might apply to a real invention, let us consider a zoom lens with enough unique features to make it patentable over prior art zoom lenses. In Zone I, though the invention per se is inherently patentable, it is claimed with so few claim limitations (e.g., “A lens comprising: three lens elements.”) that the claim certainly is infringed by many, but it is also certainly invalid as being anticipated. Zone I, in essence, reinvents the wheel.

In Zone II, there are more claim limitations (“A zoom lens comprising: four lens elements with two moving elements to effectuate zooming”), which slightly improves the probability of the claim’s being valid to “probably invalid” and captures fewer infringers, but still more than is reasonable to expect.

In Zone III, the number of claim limitations increases (e.g., adds a holographic lens element specially designed to effectuate image stabilization), which moves the probability of the claim being valid to “more likely valid than invalid.” Although infringement is not certain, the number of potential infringers is within reason. The increased number of claim limitations also provides some room for avoiding infringement by “designing around” the claims (e.g., using a nonholographic element for image stabilization), but perhaps at a nontrivial cost.

In Zone IV, the number of claim limitations further increases (e.g., adds two aspheres and a 20× zooming range), which moves the probability of the claim being valid to “very probably valid,” while the probability of infringement diminishes to a relatively small number. The ability to avoid the claim by low-cost “design arounds” expands.

In Zone V, the number of claim limitations is very large (e.g., adds more lens elements as well as specific conditions on all of the lens curvatures, relative focal lengths, etc.), making the probability of the claim’s being valid “almost certainly valid,” whereas the probability of infringement is essentially nil, and designing around, if necessary, is trivial.
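The all-limitations rule behind this walkthrough can be sketched in a few lines of Python. This is only a toy illustration, not a legal test: it treats a claim as a set of limitations and a product or prior art reference as a set of features, so that a claim is literally infringed (or anticipated) when every one of its limitations is present. The limitation names, the five products and the single prior art reference are all hypothetical, and obviousness, which turns on the Graham factors rather than on a simple subset check, is deliberately left out.

```python
# Toy model only: every name below is a hypothetical illustration.
# A claim is a set of limitations; a product or a prior art reference
# is a set of features.

def reads_on(claim: frozenset, features: frozenset) -> bool:
    """True if every limitation of the claim is found in the features."""
    return claim <= features

# Limitations added cumulatively, loosely following the zoom lens example.
LIMITATIONS = [
    "multiple_lens_elements",
    "moving_zoom_elements",
    "holographic_stabilizer",
    "two_aspheres",
    "zoom_20x",
    "specific_curvatures",
]

claims = {
    "I": frozenset(LIMITATIONS[:1]),
    "II": frozenset(LIMITATIONS[:2]),
    "III": frozenset(LIMITATIONS[:3]),
    "IV": frozenset(LIMITATIONS[:5]),
    "V": frozenset(LIMITATIONS[:6]),
}

# Hypothetical competing products.
products = {
    "fixed_lens": frozenset({"multiple_lens_elements"}),
    "basic_zoom": frozenset({"multiple_lens_elements", "moving_zoom_elements"}),
    # A design-around: stabilization without a holographic element.
    "gyro_zoom": frozenset({"multiple_lens_elements", "moving_zoom_elements",
                            "gyro_stabilizer"}),
    "holo_zoom": frozenset({"multiple_lens_elements", "moving_zoom_elements",
                            "holographic_stabilizer"}),
    "premium_zoom": frozenset({"multiple_lens_elements", "moving_zoom_elements",
                               "holographic_stabilizer", "two_aspheres",
                               "zoom_20x"}),
}

# Hypothetical prior art: a single reference describing a multi-element lens.
prior_art = {
    "old_lens_reference": frozenset({"multiple_lens_elements"}),
}

# Literal infringement: count products containing all of a claim's limitations.
infringer_counts = {
    zone: sum(reads_on(claim, feats) for feats in products.values())
    for zone, claim in claims.items()
}

# Anticipation: a single reference contains all of a claim's limitations.
anticipated = {
    zone: any(reads_on(claim, ref) for ref in prior_art.values())
    for zone, claim in claims.items()
}

for zone in claims:
    print(f"Zone {zone}: {len(claims[zone])} limitation(s), "
          f"{infringer_counts[zone]} literal infringer(s), "
          f"anticipated={anticipated[zone]}")
```

In this sketch, each added limitation can only shrink the set of products that the claim reads on, while also moving the claim away from the prior art reference; that is the trade-off the zones describe. The gyro_zoom entry shows a design-around: it escapes the Zone III claim simply by omitting one limitation.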

Many patents include a number of independent claims, each of which falls into a different zone. This was the situation in the KSR case. One of the claims of the Teleflex patent had fewer key limitations than the others, so it was a “Zone II” claim, broad enough to capture KSR as an infringer. When challenged by Teleflex on the issue of infringement, KSR countered by successfully invalidating the patent claim for obviousness.

Watching the horizon

As in the moments immediately after an undersea earthquake, it is not yet clear whether the KSR decision will result in a legal tsunami of patent invalidity decisions by the US Patent and Trademark Office and the courts. The degree of impact of the KSR case can be measured by watching a number of signs, such as fewer patents allowed by the Patent and Trademark Office, increasing narrowness of patent claims (i.e., more patents having claims in Zones III, IV and V and fewer patents with claims in Zones I and II), an increase in obviousness rejections of patent applications, harder pushback by the Patent and Trademark Office during patent prosecution, an increased number of patents held invalid for obviousness by the courts and fewer brazen attempts to litigate patents of dubious validity.

The KSR case may eventually lead companies to adjust their intellectual property tactics: for example, seeking narrower patent claims, challenging the validity of dubious patent claims through litigation or re-examination at the Patent and Trademark Office rather than licensing, and/or being more selective about filing patent applications.

However, the larger strategic intellectual property goal of generating and protecting intellectual property in a manner that optimizes business value will remain unchanged for savvy high-tech businesses.

Meet the author

Joseph E. Gortych is president of Opticus IP Law PLLC; e-mail: [email protected]. Based in Sarasota, Fla., he specializes in optics, photonics and semiconductor technology. The opinions expressed herein are solely those of the author.