High-Res Images Captured Superfast

Researchers Ala Hijazi and Vis Madhavan at Wichita State University in Kansas wanted to study the high-strain-rate deformation that occurs in high-speed machining by recording a sequence of microscopic images at correspondingly high frame rates. The year was 2003, and the duo had money in hand, courtesy of a National Science Foundation grant, to buy an ultrahigh-speed camera for their research. Because commercially available cameras were deemed too slow, too blurry, too dark or too difficult to interface with microscopes, the pair created their own system. The result was a camera that records microscopic images at higher resolutions than ever and at frame rates of up to 200 MHz (one frame every 5 ns).

Mapping the velocity and strain rate fields in the primary shear zone – the area where chips are cut from a workpiece material, which lies slightly in front of the cutting tool – during high-speed machining requires frame rates of 100 kHz to 20 MHz.

“The field of view is very small, on the order of two- or three-hundred micrometers,” said Hijazi, who is now a researcher at Hashemite University in Zarqa, Jordan, and who collaborates long-distance with Madhavan. “The images needed to be sharp, with little motion blur or intensity noise, for accurately mapping the velocity and strain rate fields using digital image correlation [an optical method for measuring deformation on a surface].”

Because dual-frame cameras were being coupled with dual-cavity pulsed lasers for illumination and used in particle image velocimetry (PIV) applications to capture two high-speed frames, “I thought of combining several of those cameras together in order to capture a sequence of high-speed frames,” Hijazi said.

He and Madhavan began asking PIV system vendors to make them an eight-frame system by combining four dual-frame cameras.

“The response was that it is generally not possible to combine these cameras together because that will cause multiple exposures by the laser pulses,” Hijazi said. “However, it might be possible to do a four-frame system using two different polarizations of light. Vis thought of a way to overcome this constraint using different colors of laser light,” which would work with any number of frames.

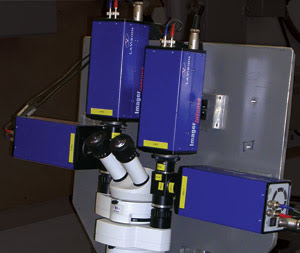

The duo applied for a patent on the system in 2004. LaVision, a spin-off from Max Planck Institute and Laser Laboratory in Göttingen, Germany, custom-built an eight-frame system for the researchers (four dual-frame cameras and four dual-cavity lasers built around a stereomicroscope) that was delivered in early 2005. The camera is housed in Madhavan’s Advanced Manufacturing Process Lab at Wichita State.

Camera concept

Hijazi said that their system works in a way similar to how one might capture high-speed motion – such as a golfer’s swing or a bee’s flight – using two simple cameras and two high-speed flashes. To avoid a messy, double-exposed image, each flash must be filtered so that it registers only on images taken with its corresponding camera.

Instead of flashes, Hijazi and Madhavan’s method uses light pulses of different wavelengths and selectively routes the light of a particular wavelength to only the designated camera using wavelength-selective beamsplitters (or bandpass filters) so that the exposure can be controlled by the timing and duration of the light pulses, without crosstalk between the pulses.
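The routing idea can be illustrated with a minimal Python sketch. It is not the researchers' control software: the camera names, passbands, wavelengths, pulse spacing and round-robin firing order below are all hypothetical values chosen for illustration. The sketch only shows that when each camera's filter passes a single color, a train of eight pulses lands as exactly two exposures per dual-frame camera, with no pulse seen by more than one camera.

```python
from collections import defaultdict


def route_pulses(pulses, passbands):
    """Assign each light pulse to every camera whose filter passes its wavelength.

    pulses:    list of (time_ns, wavelength_nm) tuples
    passbands: dict mapping camera name -> (low_nm, high_nm)
    Returns a dict mapping camera name -> list of pulse times it records.
    """
    seen = defaultdict(list)
    for time_ns, wavelength_nm in sorted(pulses):
        for camera, (low_nm, high_nm) in passbands.items():
            if low_nm <= wavelength_nm <= high_nm:
                seen[camera].append(time_ns)
    return dict(seen)


# Hypothetical, non-overlapping filter passbands for four dual-frame cameras.
passbands = {
    "cam_A": (430, 450),
    "cam_B": (520, 540),
    "cam_C": (600, 620),
    "cam_D": (650, 670),
}

# Hypothetical laser colors, one per camera, and an assumed 10 ns pulse spacing.
wavelengths_nm = [440, 532, 610, 660]
interframe_ns = 10.0

# Eight pulses: each color fires twice, in an assumed round-robin order.
pulses = [(i * interframe_ns, wavelengths_nm[i % 4]) for i in range(8)]

exposures = route_pulses(pulses, passbands)
for camera, times in exposures.items():
    print(camera, times)
```

Because the passbands do not overlap, the time between consecutive frames is set by the pulse timing alone rather than by how quickly any single sensor can be read out.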

“This concept allows the time separation between each image that is captured to be infinitesimally small, which results in a camera system that can capture a sequence of high-resolution images at ultrahigh speeds,” Hijazi said.

The system is being used mainly for imaging the chip formation process during high-speed machining, but Hijazi said it would benefit most research and educational applications involving high-speed phenomena.

“Events that happen within a microsecond need to be recorded at ultrahigh speeds (>1 MHz). … In particular, such a camera would be very useful in applications requiring high-quality, high-resolution images such as DIC or PIV, where quantitative data are to be obtained by analyzing the recorded images,” he said. Those fields include biomechanics, microscopy, crash testing, ballistics, turbulent flows, cell biology, materials science and medicine.

Comparing configurations

Although some other camera systems are comparable speedwise to their system, “the concept our camera system is based on is quite general and can be applied to convert a variety of combinations of cameras and pulsed illumination sources into high-speed cameras,” Hijazi said.

In particular, he said, cameras based on the concept could be used with picosecond lasers to image highly reflective objects at gigahertz rates, something not yet achieved.

“In the current configuration of the prototype camera we have, gigahertz rates are not possible, since the pulse duration of the nanosecond pulsed lasers being used for illumination is five nanoseconds. It would be possible if the camera system were retrofitted with picosecond lasers and higher speed electronics for triggering,” he said.
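The arithmetic behind that limit can be checked with a short Python sketch. It assumes, as a simplification, that successive pulses are fired back to back without overlapping, so the shortest possible frame interval equals the pulse duration. The 100 ps figure is a hypothetical picosecond-laser value, not one quoted by the researchers.

```python
def max_frame_rate_hz(pulse_duration_s):
    """Upper bound on frame rate when frame spacing cannot be shorter
    than one pulse duration (assumed back-to-back, non-overlapping pulses)."""
    if pulse_duration_s <= 0:
        raise ValueError("pulse duration must be positive")
    return 1.0 / pulse_duration_s


# 5 ns pulses, as in the prototype: roughly 200 MHz.
nanosecond_limit_hz = max_frame_rate_hz(5e-9)

# Hypothetical 100 ps pulses: roughly 10 GHz.
picosecond_limit_hz = max_frame_rate_hz(100e-12)

print(f"{nanosecond_limit_hz / 1e6:.0f} MHz")
print(f"{picosecond_limit_hz / 1e9:.0f} GHz")
```

Under this assumption, the 5 ns pulses cap the prototype near the 200 MHz figure cited for it, and shorter pulses move the bound into the gigahertz range, provided the triggering electronics can keep up.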

Electronic high-speed cameras can obtain sequences of thousands of frames at rates of several thousand frames per second, but the resolution or the field of view of the images decreases as the frame rate increases, making the maximum attainable rate on the order of 100 kHz, he said.

“Our camera can produce multiple megapixel-resolution images at frame rates that can go into the gigahertz range, but the number of frames is limited. We believe that systems that can produce image sequences with up to 100 frames are feasible with our approach,” Hijazi said.

The Wichita State system also offers advantages over rotating mirror or drum cameras and multichannel gated-intensified ultrahigh-speed cameras, he noted.

Although rotating mirror or drum cameras can record sequences of up to 100 images at frame rates of up to 20 MHz, they are expensive and difficult to operate and maintain, and their resolution is limited by the rotation of the mirror or drum.

Multichannel gated-intensified superfast systems can record at frame rates of up to 200 MHz, but they, too, are expensive, the number of frames is limited to 16 and the image quality is poor, he said.

In contrast, the image resolution and quality produced by a multichannel camera system built according to their concept “is limited only by the native resolution afforded by the imaging optics and the imaging sensors being used,” Hijazi said.

The system will be commercialized through the researchers’ company, Spectrum Optical Systems, a spinout of Wichita State.

“The company is working on developing low-cost, compact LED- and diode laser-based illumination systems, and on optical configurations to increase the number of frames,” Hijazi said. “Our goal is to begin marketing inexpensive three-frame cameras that can provide high-resolution images at frame rates of up to one megahertz using the Spectral Shuttering™ technique.”

The goal also is to have a three-frame camera system available for sale in 2009, and the company is seeking industry partners. “We are also open to licensing the technology to high-volume commercial manufacturers,” he said.

A three-frame system could cost from a couple of thousand dollars to several thousand, Hijazi said, depending upon the base camera model chosen.

“Eventually we envision a suite of commercial solutions at different price point ranges that satisfy the needs of scientists, industry, enthusiasts, etcetera,” Hijazi said.