Dr. Lutz Kreutzer, MVTec Software GmbH

The first humanoid robot aboard the International Space Station performs demanding visual and tactile tasks in support of the human crew.

The first humanoid robot astronaut arrived at the International Space Station (ISS) in February 2011 and was first powered up there in August 2011.

Mission STS-133 was one of the last flights of NASA’s space shuttle program. On board that Discovery flight was Robonaut R2, a humanoid robot developed by General Motors (GM) and NASA with more skills than any similar device tested before. One of those skills is decision-making, which relies on sophisticated imaging algorithms built on MVTec’s software library Halcon.

The crew of Discovery during mission STS-133: six humans and one robot astronaut. Courtesy of NASA.

The robot worked precisely and reliably, realizing a 15-year dream of NASA’s. Yet that mission was only the beginning of a new era: robot astronauts that can support human activity in the zero gravity of space.

Robonaut 2 looks like an astronaut, with its gold-colored head and metallized visor. The proportions of its white torso, arms and head are close to those of a human body. But Robonaut 2 has no legs: its body is mounted on a special rack, so it cannot walk or run.

Robonaut R2 was developed by NASA and General Motors as a humanoid machine with more skills than any similar robot tested before. Photo courtesy of NASA.

Its development began in 2007. The robot is meant not only to support astronauts in their daily work aboard the space station, but also to take over tedious, repetitive tasks. Like the robots in automobile production, and unlike a human worker, Robonaut 2 does not get tired. That also makes it a promising candidate for dangerous work such as extravehicular activity (EVA) outside the spacecraft.

Robonaut 2’s flexible vision system combines a stereo pair of Prosilica GC2450 cameras from Allied Vision Technologies GmbH of Stadtroda, Germany, with infrared distance measurements and structured lighting. This enables robust, automatic recognition and pose estimation of objects on the ISS. Recognition this robust requires complex patterns measured from the environment with multiple sensor types.

Pattern recognition over an entire scene can be computationally prohibitive. NASA and GM’s solution was to apply pattern recognition only to small segmented regions of the scene, using image information such as color, intensity or texture to segment the regions scanned by the robot’s imagers.
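Here is a minimal sketch of this segment-first strategy. It assumes a grayscale image stored as a list of rows and a plain intensity threshold. The article does not say which segmentation criteria NASA used, and color or texture measures could replace the threshold. The function groups bright pixels into 4-connected regions and returns each region's bounding box, so later recognition steps only need to examine those boxes instead of the full scene. The function name and test image are hypothetical, and this is plain Python, not Halcon code.

```python
from collections import deque


def segment_regions(image, threshold):
    """Split pixels brighter than `threshold` into 4-connected regions.

    Returns one inclusive bounding box (row0, col0, row1, col1) per region,
    in the order in which a row-by-row scan first reaches each region."""
    rows, cols = len(image), len(image[0])
    seen = [[False] * cols for _ in range(rows)]
    boxes = []
    for r in range(rows):
        for c in range(cols):
            if seen[r][c] or image[r][c] <= threshold:
                continue
            # Breadth-first flood fill of one region, tracking its extent.
            seen[r][c] = True
            queue = deque([(r, c)])
            r0, c0, r1, c1 = r, c, r, c
            while queue:
                y, x = queue.popleft()
                r0, c0, r1, c1 = min(r0, y), min(c0, x), max(r1, y), max(c1, x)
                for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1)):
                    if (0 <= ny < rows and 0 <= nx < cols
                            and not seen[ny][nx] and image[ny][nx] > threshold):
                        seen[ny][nx] = True
                        queue.append((ny, nx))
            boxes.append((r0, c0, r1, c1))
    return boxes


# Hypothetical 4 x 6 test image with two bright blobs.
image = [
    [0, 0, 0, 0, 0, 0],
    [0, 9, 9, 0, 0, 0],
    [0, 9, 9, 0, 0, 8],
    [0, 0, 0, 0, 0, 8],
]
print(segment_regions(image, threshold=5))  # [(1, 1, 2, 2), (2, 5, 3, 5)]
```

Raising the threshold to 8 would keep only the brighter blob. This shows how the segmentation criterion decides which regions ever reach the more expensive recognition stage.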

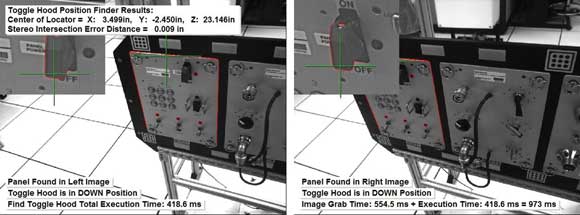

Through the robonaut’s eyes: a left and right stereo view acquired while checking the position of a toggle hood. The views show that the hood is closed. Courtesy of NASA.

Myron Diftler, Robonaut project manager at NASA, said MVTec’s Halcon 9.0 was chosen to integrate the various sensor data types and to perform all the complex computations in a single development environment.

“Halcon supports GigE Vision and allows for a quick setup of each camera in software via its automatic code-generation feature,” he said. The rangefinder is a Swiss Ranger SR4000 from Mesa Imaging AG of Zurich.

The robot’s tactile force and finger position sensors are custom-designed and -fabricated. Its rangefinder, force sensors and finger position sensors use custom C/C++ code to perform depth measurements and tactile object recognition.

“Halcon’s Extension Package Programming feature allows us to import all custom code into a single development environment, such as HDevelop, which we use for rapid prototyping of our applications,” Diftler said. “The stereo camera calibration methods inside Halcon are used to calibrate the stereo pair and will eventually also be used to calibrate the Swiss Ranger.”
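Calibration is what turns pixel coordinates into metric measurements. The minimal sketch below projects a point into both images and recovers its depth from the horizontal disparity d. It assumes ideal pinhole cameras with no lens distortion and an already rectified pair, in which the right camera differs from the left only by a shift of one baseline B along x. The focal length, principal point, baseline and point are illustrative assumptions, not Robonaut 2's calibration, and this is not Halcon's calibration API. Estimating those parameters is the job the calibration itself performs.

```python
def project(point, f, cx, cy, shift_x=0.0):
    """Ideal pinhole projection (no lens distortion) of a 3D point (X, Y, Z),
    given in metres in the left-camera frame, to pixel coordinates (u, v).
    shift_x moves the camera centre along x, which models the right camera
    of a rectified stereo pair."""
    x, y, z = point
    return f * (x - shift_x) / z + cx, f * y / z + cy


def depth_from_disparity(u_left, u_right, f, baseline):
    """Rectified stereo: depth Z = f * B / d, with disparity d = u_left - u_right."""
    d = u_left - u_right
    if d <= 0:
        raise ValueError("disparity must be positive for a point in front of the rig")
    return f * baseline / d


# Illustrative values only; these are not Robonaut 2's calibration data.
f, cx, cy = 1000.0, 640.0, 480.0  # focal length and principal point, in pixels
B = 0.1                           # baseline between the cameras, in metres
point = (0.2, -0.05, 1.25)

ul, vl = project(point, f, cx, cy)
ur, vr = project(point, f, cx, cy, shift_x=B)
print(ul, ur, vl == vr)                    # 800.0 720.0 True
print(depth_from_disparity(ul, ur, f, B))  # 1.25
```

In a rectified pair, a point appears on the same image row in both views, so only the column difference carries depth. An error in the calibrated f or B therefore scales every depth estimate.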

The Robonaut R2 manipulates a space blanket. Handling soft goods such as this is a major challenge for the robot’s visual and tactile sensors. Courtesy of NASA.

Of the many ISS tasks planned for Robonaut 2, sensing and manipulating soft materials are among the most challenging, requiring a very high degree of coordinated actuation. For example, a soft-goods box made of a flexible ortho fabric holds a set of EVA tools. To remove a tool, the robot must identify the box, open it and close it again. The difficulty is that the fabric lid tends to float around in zero-g even though the base is secured, and it can fold in on itself in unpredictable ways. Lid state estimation and grasp planning will be the hardest functions in this task, which calls for a mix of standard computer vision and motion planning methods along with new techniques that bridge the two fields.

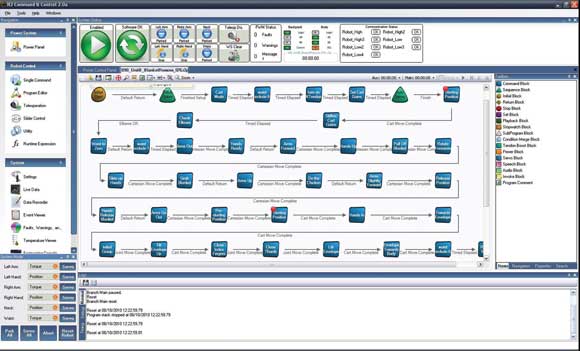

“For all these purposes, Halcon has many features that will allow for the successful execution of the soft-goods-box task described,” Diftler said. A single laptop computer connected to the robonaut will run both the R2 Command and Control and the Flexible Vision System software. Processing power will therefore be limited, which calls for careful use of small, focused image processing regions of interest (ROIs) in Halcon.
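For a pixel-wise operator, processing cost grows roughly with the number of pixels visited, which is why small ROIs help on a single laptop. Halcon expresses the same idea by restricting an image's domain, for example with its reduce_domain operator. The plain-Python sketch below uses a hypothetical helper, apply_in_rois, that simply skips everything outside a list of rectangles. The frame size used in the budget estimate (2448 × 2050 pixels, about 5 megapixels) and the ROI sizes are assumptions for illustration.

```python
def apply_in_rois(image, rois, op):
    """Apply a per-pixel operator only inside rectangular ROIs.

    rois: (row0, col0, row1, col1) boxes with inclusive bounds, assumed not
    to overlap. Returns a processed copy of the image and the number of
    pixels touched; pixels outside every ROI are copied unchanged."""
    out = [row[:] for row in image]
    touched = 0
    for r0, c0, r1, c1 in rois:
        for r in range(r0, r1 + 1):
            for c in range(c0, c1 + 1):
                out[r][c] = op(image[r][c])
                touched += 1
    return out, touched


# Small demonstration: invert one 2 x 3 ROI of a 6-row by 8-column image.
img = [[10] * 8 for _ in range(6)]
out, touched = apply_in_rois(img, [(1, 1, 2, 3)], lambda v: 255 - v)
print(touched, out[1][1], out[0][0])  # 6 245 10

# Budget estimate with hypothetical sizes: two 200 x 150 ROIs in a
# 2448 x 2050 frame cover only about 1.2 % of the pixels.
full_frame = 2448 * 2050
roi_pixels = 2 * 200 * 150
print(f"{roi_pixels / full_frame:.1%}")  # 1.2%
```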

This screen shot shows the robonaut’s command and control screen. Courtesy of NASA.

Diftler said Halcon’s texture segmentation functions will be used to locate the soft-goods-box ROI. The box lid fasteners, which consist of latches and grommets, will be identified in the stereo images with Halcon’s shape-based matching techniques.
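Halcon itself provides shape-based matching through operators such as create_shape_model and find_shape_model. The plain-Python sketch below is not that API. It assumes grayscale images stored as lists of rows and illustrates, as we understand it, the general idea behind gradient-direction matching. Each template edge point stores its unit gradient direction, and a candidate position scores the mean cosine between those directions and the image gradients. Normalizing by the image gradient length is what makes the score insensitive to contrast. Unlike a production matcher, this sketch searches translations only. Finding grommets in arbitrary orientations would also require searching over rotated models, and real implementations use image pyramids for speed. The template, scene and function names are hypothetical.

```python
import math


def gradients(image):
    """Central-difference gradients (gx, gy); left at zero on the 1-pixel border."""
    rows, cols = len(image), len(image[0])
    gx = [[0.0] * cols for _ in range(rows)]
    gy = [[0.0] * cols for _ in range(rows)]
    for r in range(1, rows - 1):
        for c in range(1, cols - 1):
            gx[r][c] = (image[r][c + 1] - image[r][c - 1]) / 2.0
            gy[r][c] = (image[r + 1][c] - image[r - 1][c]) / 2.0
    return gx, gy


def build_model(template, min_magnitude=1.0):
    """Keep the template's edge points as (row, col, unit grad x, unit grad y)."""
    gx, gy = gradients(template)
    model = []
    for r in range(len(template)):
        for c in range(len(template[0])):
            mag = math.hypot(gx[r][c], gy[r][c])
            if mag >= min_magnitude:
                model.append((r, c, gx[r][c] / mag, gy[r][c] / mag))
    if not model:
        raise ValueError("template has no edge points above min_magnitude")
    return model


def score_at(model, gx, gy, row, col):
    """Mean cosine between model and image gradient directions at offset
    (row, col); dividing by the image gradient length gives contrast invariance."""
    total = 0.0
    for dr, dc, mx, my in model:
        ix, iy = gx[row + dr][col + dc], gy[row + dr][col + dc]
        mag = math.hypot(ix, iy)
        if mag > 0.0:
            total += (mx * ix + my * iy) / mag
    return total / len(model)


def find_best_match(template, image, min_magnitude=1.0):
    """Exhaustive translation-only search; returns (score, row, col)."""
    model = build_model(template, min_magnitude)
    gx, gy = gradients(image)
    best = None
    for r in range(len(image) - len(template) + 1):
        for c in range(len(image[0]) - len(template[0]) + 1):
            s = score_at(model, gx, gy, r, c)
            if best is None or s > best[0]:
                best = (s, r, c)
    return best


# Template: a bright 3 x 3 square (standing in for a latch) on a dark background.
template = [[9 if 1 <= r <= 3 and 1 <= c <= 3 else 0 for c in range(5)] for r in range(5)]
# Scene: the same shape at lower contrast, at rows 4-6 and columns 5-7.
scene = [[5 if 4 <= r <= 6 and 5 <= c <= 7 else 0 for c in range(10)] for r in range(10)]
score, row, col = find_best_match(template, scene)
print(round(score, 3), row, col)  # 1.0 3 4
```

The match is found with a perfect score even though the scene square is dimmer than the template. This is the practical benefit of comparing directions rather than raw intensities.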

Shape-based matching will also be used to search for grommets, which can take any orientation once the lid is open and floating around. Point-based stereo vision will provide fast computation of the lid fastener poses. Halcon’s “intersect lines of sight” function will use the stereo-pair calibration information and the location of each fastener component in both images to triangulate its 3D position. The component’s six-degree-of-freedom pose can then be computed from those positions.
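Each fastener detection defines a line of sight from its camera's optical center through the detected pixel. Because of noise, the two lines from a stereo pair rarely meet exactly. A common approach is the midpoint method: find the closest points on the two lines, return their midpoint as the 3D estimate, and report the gap between them as a quality check. We do not claim this is how Halcon's operator works internally. The sketch assumes ideal pinhole cameras without lens distortion and a rectified rig whose right camera sits 0.1 m along x from the left one with the same orientation. The pixel coordinates and camera parameters are illustrative.

```python
import math


def _dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def ray_through_pixel(u, v, f, cx, cy):
    """Direction of the line of sight through pixel (u, v) of an ideal
    pinhole camera (no lens distortion), in that camera's frame."""
    return ((u - cx) / f, (v - cy) / f, 1.0)


def triangulate_midpoint(o1, d1, o2, d2):
    """Midpoint method: find the closest points of the lines o1 + s*d1 and
    o2 + t*d2, and return (midpoint, gap), where gap is the distance between
    the two lines of sight at their closest approach."""
    w0 = tuple(p - q for p, q in zip(o1, o2))
    a, b, c = _dot(d1, d1), _dot(d1, d2), _dot(d2, d2)
    d, e = _dot(d1, w0), _dot(d2, w0)
    denom = a * c - b * b
    if abs(denom) < 1e-12:
        raise ValueError("lines of sight are (nearly) parallel")
    s = (b * e - c * d) / denom
    t = (a * e - b * d) / denom
    p1 = tuple(o + s * k for o, k in zip(o1, d1))
    p2 = tuple(o + t * k for o, k in zip(o2, d2))
    mid = tuple((x + y) / 2 for x, y in zip(p1, p2))
    return mid, math.dist(p1, p2)


f, cx, cy = 1000.0, 640.0, 480.0  # illustrative intrinsics, in pixels
left_origin, right_origin = (0.0, 0.0, 0.0), (0.1, 0.0, 0.0)

# Matching detections of one fastener point in the left and right images.
point, gap = triangulate_midpoint(
    left_origin, ray_through_pixel(800.0, 440.0, f, cx, cy),
    right_origin, ray_through_pixel(720.0, 440.0, f, cx, cy),
)
print([round(x, 6) for x in point], round(gap, 9))  # [0.2, -0.05, 1.25] 0.0

# A one-pixel detection error makes the lines skew, so the gap becomes > 0.
_, noisy_gap = triangulate_midpoint(
    left_origin, ray_through_pixel(800.0, 440.0, f, cx, cy),
    right_origin, ray_through_pixel(720.0, 441.0, f, cx, cy),
)
```

A large gap flags a bad correspondence between the two images. Triangulating three or more non-collinear points on a fastener would then allow its full six-degree-of-freedom pose to be fitted. That step is not shown.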

“As our own understanding of the task advances, Halcon’s built-in classification techniques and functions will be used for complex pattern recognition, such as using fused image, tactile and finger-position data within the Halcon development environment to predict a feasible grasp location for unfolding the lid to close the box,” Diftler said.
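As a toy illustration of feature fusion followed by classification, the sketch below concatenates image, tactile and finger-position features into one vector. It then standardizes each feature, so that quantities in different units (here a unitless edge score, newtons and degrees) carry comparable weight, and predicts a label with a k-nearest-neighbor vote. The features, training values and labels are invented for illustration, and k-nearest neighbors is a stand-in chosen for brevity. Halcon ships its own classifiers, and nothing here reflects NASA's actual grasp-prediction design.

```python
import math
from statistics import mean, pstdev


def fuse(image_feats, tactile_feats, finger_feats):
    """Concatenate per-sensor feature lists into one feature vector."""
    return [*image_feats, *tactile_feats, *finger_feats]


def fit_scaler(vectors):
    """Per-feature mean and standard deviation, so that features measured in
    different units contribute comparably to distances."""
    columns = list(zip(*vectors))
    mus = [mean(col) for col in columns]
    sds = [pstdev(col) or 1.0 for col in columns]  # constant feature: avoid /0
    return mus, sds


def standardize(vector, mus, sds):
    return [(v - m) / s for v, m, s in zip(vector, mus, sds)]


def knn_predict(train_x, train_y, query, k=3):
    """Majority vote among the k nearest training vectors (use odd k for two classes)."""
    mus, sds = fit_scaler(train_x)
    q = standardize(query, mus, sds)
    nearest = sorted(
        (math.dist(standardize(x, mus, sds), q), y) for x, y in zip(train_x, train_y)
    )[:k]
    votes = [label for _, label in nearest]
    return max(set(votes), key=votes.count)


# Hypothetical features: image edge score, contact force (N), mean finger joint angle (deg).
train_x = [
    fuse([0.90], [2.0], [30.0]), fuse([0.80], [2.5], [35.0]), fuse([0.85], [1.8], [28.0]),
    fuse([0.20], [0.1], [80.0]), fuse([0.30], [0.3], [75.0]), fuse([0.25], [0.2], [85.0]),
]
train_y = ["feasible"] * 3 + ["infeasible"] * 3
print(knn_predict(train_x, train_y, fuse([0.82], [2.1], [33.0])))  # feasible
```

Without standardization, the joint angle in degrees would dominate the distance simply because its numbers are larger. This is why the fused vector is rescaled before any comparison.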

Robonaut R2 marks the first step into a new era of humanoid robots in astronautics and a hopeful beginning for future space flights.

Meet the author

Dr. Lutz Kreutzer is manager of PR and marketing at MVTec Software GmbH in Munich.