Paula M. Powell, Senior Editor

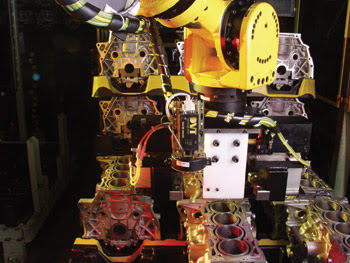

Take the robot — basically a blind beast of burden — add vision capability, and you now have an incredibly flexible fixture. It can load machine tools, inspect weld seams on an automobile, distinguish between the palest yellow and white on car doors or perform a variety of other complex tasks accurately and repeatably — all at fairly high production-line speeds.

Something as simple as robotic guidance can be accurate to within 1 mm or less, even in environments that are less than ideal from a vision standpoint. Backlighting, for example, may be infeasible or simply cost-prohibitive, and there may be tight space constraints on the inspection task or even on the robot's movement. Smart cameras or sensors fit well in these applications because they pack imaging capability into a compact package. A typical system weighs 170 g and measures 112 × 60 × 30 mm, not including the lens.

Smart sensors can also handle applications of varying complexity that were once possible only with frame-grabber-based vision technology. Sometimes the smart sensor must perform a simple function, such as repeatedly guiding a robot to a set point in an X-Y coordinate plane.


Increasingly, however, vision is required to enable high-speed robot control combined with another task such as optical character recognition, bar-code reading or parts inspection. From a guidance standpoint, according to Michael Schreiber, applied engineering director for DVT Corp., the sensor must then give the robot real-time feedback so that it can identify a location in 3-D space in X, Y, Z and angular coordinates. These updates often must be fast and frequent.

No matter how simple or complex the application is, though, successful robot guidance requires that two basic issues be addressed. The first, said Ed Roney, manager of vision product and application development for Fanuc Robotics America Inc., is the need to establish communication between sensor and robot. There is no standard interface, so setting up a line of communication often requires both sensor and robot vendors to write code to establish a communications protocol. Roney noted that, although camera manufacturers have access to drivers for standard robot models, establishing the communications link is rarely a simple plug-and-play process when working with vision sensors and robots from different vendors.

Most often, data pass from the vision sensor to the robot over a serial connection or Ethernet. In either case, it may be beneficial to keep the link between sensor and robot fairly private and to avoid adding too many nodes to it, because disruptions on a busy network can degrade real-time data transfer.
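Because there is no standard interface, the two vendors typically agree on a message format of their own. As a purely hypothetical illustration (the comma-separated ASCII layout, field order, units and the names `PoseMessage`, `encode_pose` and `decode_pose` are assumptions, not any vendor's actual protocol), a sensor might report one X, Y, Z and rotation result per line, as bytes that could be written to either a serial port or a TCP socket:

```python
from dataclasses import dataclass


@dataclass
class PoseMessage:
    """Hypothetical vision result: position in mm, rotation about Z in degrees."""
    x: float
    y: float
    z: float
    r: float


def encode_pose(p: PoseMessage) -> bytes:
    # Sensor side: one comma-separated ASCII line per result, terminated by CR LF.
    # Three decimal places keep every message short and easy to parse.
    return f"{p.x:.3f},{p.y:.3f},{p.z:.3f},{p.r:.3f}\r\n".encode("ascii")


def decode_pose(line: bytes) -> PoseMessage:
    # Robot side: the reverse of encode_pose.
    # Raises ValueError on a malformed line rather than acting on bad data.
    fields = line.decode("ascii").strip().split(",")
    if len(fields) != 4:
        raise ValueError(f"expected 4 fields, got {len(fields)}")
    x, y, z, r = (float(f) for f in fields)
    return PoseMessage(x, y, z, r)


if __name__ == "__main__":
    msg = encode_pose(PoseMessage(412.5, -88.25, 120.0, 15.5))
    print(msg)  # b'412.500,-88.250,120.000,15.500\r\n'
    print(decode_pose(msg))
```

A fixed, line-terminated text format is easy to inspect on either transport; a production protocol would also need handshaking, error reporting and timeouts, which this sketch leaves out.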

The second guidance issue involves calibration. Robots and vision systems use separate coordinate spaces, so they must learn to speak each other’s positioning language. “Robots are normally programmed to go to the same repeatable positions and repeat their operation,” Roney said. “When you add vision, you do so because something is going to change. You must be able to provide data about that change to the robot in the coordinate system it understands.”
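One common way to relate the two coordinate spaces, for a fixed camera looking at a flat work surface, is a 2-D affine transform fitted from calibration targets whose positions are known in both pixel and robot coordinates. The sketch below assumes a planar scene, ignores lens distortion and height (Z), and uses hypothetical function names and data; it illustrates the idea and is not the calibration method any particular vendor uses:

```python
def _det3(m):
    # Determinant of a 3x3 matrix stored as nested lists.
    return (m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
            - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
            + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]))


def _solve3(a, b):
    # Solve a @ p = b for p using Cramer's rule (adequate for a 3x3 system).
    d = _det3(a)
    if abs(d) < 1e-9:
        raise ValueError("calibration points are degenerate (e.g. collinear)")
    solution = []
    for col in range(3):
        m = [row[:] for row in a]
        for i in range(3):
            m[i][col] = b[i]
        solution.append(_det3(m) / d)
    return solution


def fit_affine(pixels, robot):
    """Least-squares fit of X = a*u + b*v + c and Y = d*u + e*v + f.

    pixels: (u, v) image coordinates of the calibration targets.
    robot:  (X, Y) robot coordinates in mm of the same targets.
    """
    if len(pixels) != len(robot) or len(pixels) < 3:
        raise ValueError("need at least three matching point pairs")
    # Accumulate the normal equations A^T A p = A^T t; each row of A is [u, v, 1].
    ata = [[0.0] * 3 for _ in range(3)]
    atx = [0.0] * 3
    aty = [0.0] * 3
    for (u, v), (x, y) in zip(pixels, robot):
        row = (u, v, 1.0)
        for i in range(3):
            for j in range(3):
                ata[i][j] += row[i] * row[j]
            atx[i] += row[i] * x
            aty[i] += row[i] * y
    return _solve3(ata, atx), _solve3(ata, aty)


def pixel_to_robot(params, u, v):
    # Convert a new image measurement into robot coordinates (mm).
    (a, b, c), (d, e, f) = params
    return a * u + b * v + c, d * u + e * v + f


if __name__ == "__main__":
    # Four targets at the image corners; hypothetical robot positions,
    # e.g. recorded by jogging the tool to each target.
    pixels = [(0, 0), (640, 0), (0, 480), (640, 480)]
    robot = [(100.0, 300.0), (420.0, 300.0), (100.0, 60.0), (420.0, 60.0)]
    params = fit_affine(pixels, robot)
    print(pixel_to_robot(params, 320, 240))  # approximately (260.0, 180.0)
```

With more than three well-spread targets, the least-squares fit averages out small measurement errors, and the residuals at the targets give a rough check on whether the calibration is good enough for the application.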

He said that calibration can pose significant challenges, depending on the application. If the object being located is a bagged product, for example, the robot can probably do the job even if its guidance is accurate only to within a few millimeters. But if it is picking parts off a pallet or loading a machine tool, it may need positioning accuracy of a millimeter or less to perform the task accurately and without collisions.

Once the communication and calibration issues are resolved, the next step is to develop the application, and that is where smart camera functionality can really make a difference. Sophisticated color recognition is no longer limited to frame-grabber-based vision systems, which can be too bulky for space-constrained robotic applications.

One of DVT’s smart cameras, for example, has a built-in spectrograph that spreads the incoming light into its constituent wavelengths along the X-axis while preserving spatial information along the Y-axis. The inspection system can learn the complete spectrum (380 to 900 nm) of a sample and detect small deviations from it, which gives it virtually unlimited color-selection capability.
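How the camera compares spectra is not described, so the following is only one possible approach, not DVT's algorithm. Treating each spectrum as a vector of intensities sampled across 380 to 900 nm (the 10 nm spacing, the tolerance value and the function names are hypothetical), the spectral angle between a learned reference and a new sample gives a brightness-insensitive measure of color difference:

```python
import math

# Assumed sampling: 380 to 900 nm in 10 nm steps (53 bins).
WAVELENGTHS_NM = list(range(380, 901, 10))


def spectral_angle(reference, sample):
    """Angle in radians between two spectra treated as vectors.

    Scaling a spectrum (a brighter or dimmer part) does not change the angle,
    so it responds to differences in spectral shape, i.e. color.
    """
    if len(reference) != len(sample):
        raise ValueError("spectra must use the same wavelength bins")
    dot = sum(r * s for r, s in zip(reference, sample))
    norm = math.sqrt(sum(r * r for r in reference)) * math.sqrt(sum(s * s for s in sample))
    if norm == 0.0:
        raise ValueError("spectrum has no energy")
    # Clamp before acos so rounding cannot push the cosine outside [-1, 1].
    return math.acos(max(-1.0, min(1.0, dot / norm)))


def matches_reference(reference, sample, tolerance_rad=0.02):
    # tolerance_rad is a hypothetical threshold that would be tuned per application.
    return spectral_angle(reference, sample) <= tolerance_rad


if __name__ == "__main__":
    # Hypothetical learned spectrum: a broad peak near 580 nm.
    reference = [math.exp(-((w - 580) / 120) ** 2) for w in WAVELENGTHS_NM]
    dimmer = [0.7 * v for v in reference]  # same color, less light
    shifted = [math.exp(-((w - 600) / 120) ** 2) for w in WAVELENGTHS_NM]
    print(matches_reference(reference, dimmer))  # True
    print(spectral_angle(reference, shifted))
```

Using the angle rather than a raw intensity difference means lighting changes that scale the whole spectrum do not trigger false rejects, while a shift in where the spectrum peaks does.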

Sensors also offer a range of resolutions, such as 640 × 480 to 1280 × 1024 pixels, with higher resolution benefiting both positioning and inspection. Schreiber noted that this is also an area where establishing good communication among the vision system manufacturer, the robot vendor and the end user early in the development process can make a significant difference in cost.
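A quick way to see why resolution matters for positioning is to compute how much of the part a single pixel covers. Assuming, hypothetically, that both sensors view the same 200 × 150 mm field of view, doubling the pixel count along X halves the millimeters per pixel; the helper names here are illustrative only:

```python
import math


def mm_per_pixel(field_of_view_mm, pixels):
    # Width of the part covered by one pixel along one image axis.
    return field_of_view_mm / pixels


def pixels_needed(field_of_view_mm, target_mm_per_pixel):
    # Smallest pixel count along an axis that achieves the target pixel size.
    return math.ceil(field_of_view_mm / target_mm_per_pixel)


if __name__ == "__main__":
    # Hypothetical field of view, assumed identical for both sensors.
    fov_x_mm, fov_y_mm = 200.0, 150.0
    for cols, rows in [(640, 480), (1280, 1024)]:
        sx = mm_per_pixel(fov_x_mm, cols)
        sy = mm_per_pixel(fov_y_mm, rows)
        print(f"{cols} x {rows}: {sx:.3f} mm/pixel in X, {sy:.3f} mm/pixel in Y")
```

Sub-pixel measurement algorithms can locate features more finely than one pixel, so these figures are a starting point for choosing a sensor rather than a hard limit on accuracy.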

For instance, it may be possible to design a robotic assembly process that achieves highly accurate positioning without the highest-resolution vision system. This could be as simple as widening the entrance of a hole into which a pin must be inserted, so that the hole narrows progressively as the pin is guided into it.
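The pin-and-hole example reduces to simple arithmetic: a widened entrance tolerates a positioning error of up to half the difference between its diameter and the pin's. The dimensions and function names below are hypothetical, and the sketch assumes the gripper or robot has enough compliance for the taper to guide the pin the rest of the way:

```python
def radial_clearance(entry_diameter_mm, pin_diameter_mm):
    # Largest off-center error at which the pin tip still lands inside the opening.
    return (entry_diameter_mm - pin_diameter_mm) / 2.0


def can_start_insertion(position_error_mm, entry_diameter_mm, pin_diameter_mm):
    # True if the vision-guided position error falls within the opening's clearance.
    return position_error_mm <= radial_clearance(entry_diameter_mm, pin_diameter_mm)


if __name__ == "__main__":
    pin = 10.0    # hypothetical pin diameter, mm
    error = 0.3   # hypothetical guidance error, mm
    print(can_start_insertion(error, 10.1, pin))  # False: plain hole
    print(can_start_insertion(error, 12.0, pin))  # True: widened entrance
```

With a 10 mm pin, a plain 10.1 mm hole tolerates only about 0.05 mm of error, while a 12 mm entrance tolerates 1 mm, a level a lower-resolution sensor may be able to deliver.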

What’s next?

According to Schreiber, end users can expect sensor manufacturers to continue adding new tools and drivers. They also should expect more efforts on the communications side to tighten the integration between sensors and robots.

Roney sees the same trend. Speaking from the robot vendor’s perspective, he questioned the need for separate communications processors for the vision system and the robot. “To simplify development,” he said, “it would help for end users to work with just one interface for the integrated vision sensor and robot system, whether the vision system is a smart sensor or one developed internally by the robot vendor. One benefit will be the capability to program both the robot’s motion and the vision system’s task at the same time, using one teach pendant with a graphical user interface.”

The Fanuc engineer also predicted that, within a few years, end users will begin moving away from static calibration (take a picture, get a position coordinate) toward visual servoing. Here, the vision system continuously watches the robot as it moves toward the target, providing guidance through either a series of images or streaming video.
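In its simplest form, visual servoing is a feedback loop: measure the remaining offset between tool and target in each new image, move part of the way, and repeat until the error is within tolerance. The sketch below is a simplified 2-D proportional loop with a simulated camera; the gain, tolerance and function names are assumptions, and a real system would work in the robot's full pose space and account for image-processing latency:

```python
import math


def make_simulated_camera(target):
    # Stand-in for image processing: reports the remaining (dx, dy) offset
    # from the tool to the target, in mm. A real system would measure this.
    def measure(tool):
        return target[0] - tool[0], target[1] - tool[1]
    return measure


def servo_to_target(tool, measure, gain=0.5, tolerance_mm=0.1, max_cycles=50):
    """Proportional visual-servoing loop in the X-Y plane.

    Each cycle takes a fresh measurement, stops if the error is within
    tolerance, and otherwise moves the tool by gain * error.
    Returns the final tool position and the number of moves made.
    """
    x, y = tool
    for cycle in range(max_cycles):
        ex, ey = measure((x, y))
        if math.hypot(ex, ey) <= tolerance_mm:
            return (x, y), cycle
        x += gain * ex
        y += gain * ey
    raise RuntimeError("did not converge within max_cycles")


if __name__ == "__main__":
    final, moves = servo_to_target((0.0, 0.0), make_simulated_camera((10.0, 0.0)))
    print(final, moves)  # (9.921875, 0.0) 7
```

Because the target is re-measured on every cycle, the same loop can follow a part that shifts while the robot is moving, provided the part moves slowly relative to the update rate; a single take-a-picture position cannot.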


Contact: Michael Schreiber, DVT Corp., Duluth, Ga.; +1 (770) 814-7920. Ed Roney, Fanuc Robotics America Inc., Rochester Hills, Mich.; +1 (248) 377-7739.