Image-acquisition software guides surveillance cameras without operator intervention.

Paul Simpson, PL E-Communications

The use of video surveillance as a security tool has historically been limited by cross-platform incompatibility, poor performance at night, and human fatigue and error.

Software developed by researchers at the University of Rochester in New York gives surveillance cameras a rudimentary brain. According to Randal Nelson, associate professor of computer science at the university and developer of the software, the idea is to have intelligent machines do the observing and reacting in ongoing tasks, removing the need for continuous human monitoring.

The research has been licensed to PL E-Communications, also of Rochester, which developed software, dubbed AVT234, that performs automatic detection and object discovery to interpret video feeds continuously. It works with any sensing device, is compatible with multiple computer platforms and can be used with Internet Protocol cameras that have all application software embedded, eliminating the need for integration with a separate computer.

The software can also be used for nighttime surveillance. Visual motion is a particularly effective cue for recognition at night because detecting motion is less affected by illumination and shading than appearance-based cues are. Automating surveillance at night does not require complete knowledge of the scene, so low-level automatic detection works well. Although the software was initially developed for military, law enforcement, commercial facility and border surveillance applications, it can also be used in nonsurveillance applications such as factory automation and noninvasive medical diagnostics, including MRI.

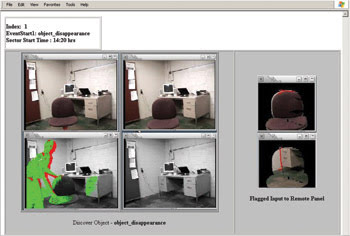

A key advantage of the software in video surveillance is its ability to automatically distinguish multiple types of objects and motion within one frame, so it identifies changes in the scene more accurately and greatly reduces the distraction caused by false alarms (see figure).

AVT234 software maintains constant surveillance on any still or video images run through it. The upper left frame is the normalized scene; the lower left frame shows someone entering the scene and grasping the chair. Because the chair is removed, a second set of frames (upper and lower center) is generated to show the “before and after,” in this case an object disappearance. Note that the person in the scene is recorded but does not generate another event, illustrating the technique’s resistance to clutter and false alarms. The real change, the chair’s removal, is detected immediately, and an object-disappearance event is logged and filed for forensic purposes. At far right, a before-and-after pair of frames showing the change is sent to alert an operator in case action needs to be taken.
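One way to approximate the behavior in the figure, ignoring a person who walks through while reporting the chair's removal, is a per-pixel persistence test. The following is a minimal sketch under stated assumptions, not AVT234's actual method: frames are flattened grayscale lists, the first frame stands in for the normalized scene, and the function `detect_persistent_changes` with its `threshold` and `persist_frames` parameters is hypothetical. A pixel triggers an event only after it differs from the background and then holds steady for several frames, so transient motion never does.

```python
from typing import List, Tuple

Frame = List[float]  # one flattened grayscale image, one value per pixel


def detect_persistent_changes(
    frames: List[Frame], threshold: float = 30.0, persist_frames: int = 3
) -> List[Tuple[int, List[int]]]:
    """Return (frame_index, pixel_indices) for each persistent-change event."""
    background = list(frames[0])        # assumption: first frame is the normalized scene
    previous = list(frames[0])
    run = [0] * len(background)         # consecutive changed-and-stable frames per pixel
    events = []
    for t, frame in enumerate(frames[1:], start=1):
        settled = []
        for i, value in enumerate(frame):
            differs = abs(value - background[i]) > threshold   # unlike the reference scene
            stable = abs(value - previous[i]) <= threshold     # no longer in motion
            run[i] = run[i] + 1 if differs and stable else 0
            if run[i] >= persist_frames:
                settled.append(i)
        for i in settled:
            background[i] = frame[i]    # absorb the lasting change into the reference
            run[i] = 0
        if settled:
            events.append((t, settled))
        previous = list(frame)
    return events


# Four-pixel toy scene: pixel 2 is the dark "chair" on a bright background.
scene = [
    [200, 200, 50, 200],
    [80, 200, 50, 200],    # person over pixel 0
    [200, 80, 50, 200],    # person over pixel 1
    [200, 200, 200, 80],   # chair gone; person over pixel 3
    [200, 200, 200, 200],
    [200, 200, 200, 200],
    [200, 200, 200, 200],
]
events = detect_persistent_changes(scene)
print(events)  # [(6, [2])]
```

Requiring a few stable frames delays the report slightly; in this simplified scheme that delay is the price of rejecting the passing person.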

The way it works

The software detects changes in still and streaming images through object discovery: it recovers and explains the temporal structure in each pixel’s stream of observations. The idea superficially resembles segmentation algorithms that assume local homogeneity of object characteristics, such as color, texture and optical flow vectors, but only temporal information is used to cluster pixels.
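To make "only temporal information" concrete, here is a minimal sketch of clustering pixels by their change histories alone. It assumes a simple scheme that the source does not confirm as AVT234's: each pixel's stream is reduced to a binary signature (1 where it changed by more than a threshold since the previous frame), and pixels are grouped greedily when their signatures differ in at most `max_hamming` positions. All function names are hypothetical. Pixel coordinates never enter the comparison.

```python
from typing import List, Tuple

Signature = Tuple[int, ...]


def change_signature(stream: List[float], threshold: float) -> Signature:
    """1 where the pixel changed by more than `threshold` since the previous frame, else 0."""
    return tuple(1 if abs(b - a) > threshold else 0 for a, b in zip(stream, stream[1:]))


def hamming(a: Signature, b: Signature) -> int:
    """Number of frame transitions on which two signatures disagree."""
    return sum(x != y for x, y in zip(a, b))


def cluster_by_time(frames: List[List[float]], threshold: float = 30.0,
                    max_hamming: int = 0) -> List[List[int]]:
    """Greedily group pixel indices whose change signatures nearly match."""
    n_pixels = len(frames[0])
    representatives: List[Signature] = []
    clusters: List[List[int]] = []
    for i in range(n_pixels):
        stream = [frame[i] for frame in frames]   # this pixel's observations over time
        sig = change_signature(stream, threshold)
        for k, rep in enumerate(representatives):
            if hamming(sig, rep) <= max_hamming:
                clusters[k].append(i)
                break
        else:                                     # no close match: start a new cluster
            representatives.append(sig)
            clusters.append([i])
    return clusters


frames = [
    [10, 10, 10, 10, 10, 10],
    [10, 10, 10, 90, 10, 90],
    [90, 10, 10, 10, 90, 10],
    [90, 10, 10, 90, 90, 90],
    [90, 10, 10, 90, 90, 90],
]
print(cluster_by_time(frames))  # [[0, 4], [1, 2], [3, 5]]
```

Pixels 0 and 4 change together when an object appears in the third frame, and pixels 3 and 5 share a flicker pattern, so each pair forms a cluster even though nothing in the input says where the pixels are.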

The software requires frame rates of only 1 to 5 Hz, far lower than the minimum of 15 to 30 Hz needed by comparable solutions. The lower rate makes detection quicker and less sensitive to distracting motion while preserving the ability to discover entire objects, even when they are always partially occluded. Because the software continuously normalizes the scene, it also recovers complex occlusion relationships, including those in which different objects share the same space, texture and color. It automatically discards problematic sequences rather than generating false alarms, which makes prioritizing alarms more efficient.
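The frame-rate arithmetic is easy to check: sampling a 30 Hz camera at 2 Hz keeps every 15th frame, or 7,200 frames per hour instead of 108,000. The sketch below shows that decimation together with one plausible way to discard problematic sequences: skip any frame pair in which more than a set fraction of pixels changed at once, as happens when lights switch on or the camera shakes. The fraction test, its defaults and the names `decimate`, `is_problematic` and `usable_pairs` are assumptions for illustration, not AVT234's documented behavior.

```python
from typing import Iterable, Iterator, List, Optional, Tuple

Frame = List[float]


def decimate(frames: Iterable[Frame], source_hz: float,
             target_hz: float) -> Iterator[Tuple[int, Frame]]:
    """Yield (index, frame) pairs, keeping roughly target_hz out of source_hz."""
    step = max(1, round(source_hz / target_hz))   # 30 Hz -> 2 Hz keeps every 15th frame
    for i, frame in enumerate(frames):
        if i % step == 0:
            yield i, frame


def is_problematic(prev: Frame, cur: Frame, threshold: float = 30.0,
                   max_fraction: float = 0.5) -> bool:
    """True when more than max_fraction of pixels changed at once (lights, shake)."""
    changed = sum(abs(a - b) > threshold for a, b in zip(prev, cur))
    return changed / len(cur) > max_fraction


def usable_pairs(frames: Iterable[Frame], source_hz: float = 30.0,
                 target_hz: float = 2.0) -> List[Tuple[int, int]]:
    """Index pairs of consecutive decimated frames that pass the sanity check."""
    kept: List[Tuple[int, int]] = []
    prev: Optional[Tuple[int, Frame]] = None
    for idx, frame in decimate(frames, source_hz, target_hz):
        if prev is not None and not is_problematic(prev[1], frame):
            kept.append((prev[0], idx))           # pair goes on to change detection
        prev = (idx, frame)                       # a skipped pair still advances the baseline
    return kept


def make_frame(i: int) -> Frame:
    """Two seconds of hypothetical 30 Hz video with four pixels."""
    if i < 30:
        return [10.0] * 4
    if i < 45:
        return [200.0] * 4                        # lights switch on: every pixel jumps
    return [200.0, 200.0, 200.0, 50.0]            # one pixel changes: a local event


print(usable_pairs(make_frame(i) for i in range(60)))  # [(0, 15), (30, 45)]
```

In the example, the whole-scene jump at frame 30 is skipped, while the single-pixel change between frames 30 and 45 survives for further analysis.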

Because spatial information is not used in the clustering steps, the technique is flexible. Change detection and object discovery can be performed on a variety of time scales, from rapidly moving objects to stationary people and vehicles, over intervals from a few seconds to several hours. The technique can also be used with any type of sensor data, including fused multispectral data.
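Running change detection on several time scales at once can be sketched with one exponential moving average (EMA) per scale, per pixel. EMA background modeling is a standard technique swapped in here for illustration; the source does not say AVT234 uses it. Each sample is a vector with one value per sensor band, so multispectral inputs are fused simply by stacking channels, and no neighboring pixels are consulted. The class name, the `alphas` mapping and the threshold are hypothetical.

```python
import math
from typing import Dict, List, Sequence


class MultiScalePixel:
    """Change detector for one pixel, keeping one running mean per time scale."""

    def __init__(self, first_sample: Sequence[float], alphas: Dict[str, float],
                 threshold: float) -> None:
        self.alphas = alphas                      # smaller alpha = longer memory
        self.threshold = threshold
        self.means = {name: list(first_sample) for name in alphas}

    def update(self, sample: Sequence[float]) -> List[str]:
        """Return the names of the time scales at which `sample` counts as a change."""
        flagged = []
        for name, alpha in self.alphas.items():
            mean = self.means[name]
            # Euclidean distance across all bands between sample and this scale's background.
            distance = math.sqrt(sum((s - m) ** 2 for s, m in zip(sample, mean)))
            if distance > self.threshold:
                flagged.append(name)
            # Exponential moving average: blend the new sample into the background.
            self.means[name] = [(1 - alpha) * m + alpha * s for m, s in zip(mean, sample)]
        return flagged


# Hypothetical two-band pixel (say, visible and near-infrared); a vehicle arrives and stays.
pixel = MultiScalePixel((10.0, 10.0), {"fast": 0.5, "slow": 0.01}, threshold=20.0)
history = [pixel.update((100.0, 100.0)) for _ in range(20)]
print(sum("fast" in h for h in history), sum("slow" in h for h in history))  # 3 20
```

With these settings the fast scale stops reporting after three samples, once its average has absorbed the new value, while the slow scale keeps flagging the arrival for all 20 samples: the fast scale responds to the moment of arrival, the slow scale to the lasting presence of a parked vehicle.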

The technology enables onboard fusion and processing of multiple sensor data types to prioritize targets and other changes of interest to the user. A critical element of the process model ranks surveillance scenes in order of importance for users to review, flagging urgent scenes for tracking and comparison by the military, state and local law enforcement and other key stakeholders. The same technique can be used to prioritize event management in factory automation and in noninvasive medical diagnostics, including MRI.
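Ordering alerts by importance maps naturally onto a priority queue. The sketch below uses Python's standard `heapq` module; the event names and their ranking in `EVENT_PRIORITY`, and the `AlertQueue` class itself, are hypothetical placeholders, since the source does not describe the actual process model. Alerts with the same rank are served oldest first.

```python
import heapq
import itertools
from typing import List, Tuple

# Hypothetical urgency ranks (0 = most urgent); a real deployment would set its own policy.
EVENT_PRIORITY = {"intrusion": 0, "object_disappearance": 1, "object_appearance": 2, "motion": 3}


class AlertQueue:
    """Serve alerts most urgent first; within a rank, the oldest alert first."""

    def __init__(self) -> None:
        self._heap: List[Tuple[int, float, int, str, str]] = []
        self._counter = itertools.count()   # final tie-breaker so entries always compare

    def push(self, event: str, camera: str, timestamp: float) -> None:
        rank = EVENT_PRIORITY.get(event, len(EVENT_PRIORITY))   # unknown events rank last
        heapq.heappush(self._heap, (rank, timestamp, next(self._counter), camera, event))

    def pop(self) -> Tuple[str, str, float]:
        _, timestamp, _, camera, event = heapq.heappop(self._heap)
        return camera, event, timestamp

    def __len__(self) -> int:
        return len(self._heap)


queue = AlertQueue()
queue.push("motion", "cam-2", 10.0)
queue.push("object_disappearance", "cam-1", 12.0)
queue.push("intrusion", "cam-3", 15.0)
queue.push("object_disappearance", "cam-4", 11.0)
print([queue.pop()[0] for _ in range(len(queue))])  # ['cam-3', 'cam-4', 'cam-1', 'cam-2']
```

The counter in each heap entry means two alerts with equal rank and timestamp never fall through to comparing camera names.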

Meet the author

Paul Simpson is CEO of PL E-Communications, Government Services, in Rochester, N.Y.