Specialized hardware -- and the accompanying cost and complexity -- has long been the bane of machine vision systems with real-time image streaming and processing requirements. Gigabit Ethernet, the 1-Gb/s version of the leading global LAN protocol, is a viable alternative based on off-the-shelf, lower-cost technology.

George Chamberlain, Pleora Technologies Inc.

Real-time operation is a requirement for most high-performance vision applications, including destructive testing, postal sorting, security and surveillance, and the inspection of electronics gear such as flat panel displays, semiconductors and circuit boards. In these environments, microseconds count. Cameras must acquire image data quickly and stream it immediately to PCs that process it on the spot with applications software. This allows the real-time triggering of actions, such as discarding a defective device.

Specialized hardware such as Camera Link or low-voltage differential signaling (LVDS) chips and connectors, frame grabbers, digital signal processing boards and unique cabling can transfer and process imaging data in real time. But like many specialized systems, the resulting solutions are expensive, complex and difficult to integrate and manage. They also offer no networking flexibility, support only point-to-point connections between cameras and computers, and are often locked into products from one or two vendors, with no clear migration path.

Against this backdrop, many in the machine vision industry are turning to standard, economical Gigabit Ethernet equipment for real-time camera-to-PC connectivity. Hundreds of real-time vision systems based on the protocol are running today, and many more are in testing. Adding to the momentum is a focused effort by industry leaders to standardize Gigabit Ethernet for vision systems. This initiative, being managed by the Automated Imaging Association, is making significant progress (see sidebar).

With a wire speed of 1 Gb/s, the Gigabit Ethernet commercial data transport platform meets the fundamental bandwidth requirement for real-time operation. The equipment costs less than specialized gear and is easier and less expensive to integrate, manage, maintain and upgrade.
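As a rough illustration of the bandwidth argument, the following Python sketch compares a camera's raw data rate with the 1-Gb/s wire speed. The camera parameters are hypothetical, and the calculation ignores packet header overhead, so it gives an upper bound on headroom, not a precise figure.

```python
GIGE_LINK_BPS = 1_000_000_000  # Gigabit Ethernet wire speed in bits per second


def camera_bitrate(width, height, bits_per_pixel, frames_per_second):
    """Raw image data rate of a camera in bits per second."""
    return width * height * bits_per_pixel * frames_per_second


def link_utilization(bitrate_bps, link_bps=GIGE_LINK_BPS):
    """Fraction of the link's wire speed consumed by the raw image stream."""
    return bitrate_bps / link_bps


# Hypothetical camera: 1024 x 1024 pixels, 8 bits per pixel, 60 frames/s.
rate = camera_bitrate(1024, 1024, 8, 60)
print(f"{rate / 1e6:.1f} Mb/s, {link_utilization(rate):.0%} of the link")
```

A utilization well below 100 percent leaves room for packet headers and control traffic; a value near or above it indicates that the camera would need a lower frame rate, a smaller region of interest or a faster link.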

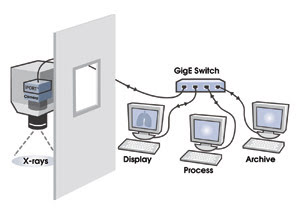

Further, the protocol offers benefits in distance, networking flexibility, scalable processing and reduced system complexity. It allows direct camera-to-PC connections of up to 100 m with low-cost copper cable, and much longer distances with fiber or low-cost Gigabit Ethernet switches. It supports a broad range of connection options, including one-to-one, one-to-many, many-to-one and many-to-many, and it can multicast, that is, send data simultaneously to more than one PC (Figure 1).

Figure 1. A Gigabit Ethernet real-time x-ray system converts camera data to IP packets and sends them simultaneously in real time to three PCs over a standard Gigabit Ethernet switch. One PC is used for display, another for processing and a third for archiving.

This multicast feature means that farms of PCs can share intensive processing tasks, providing a cost-effective, easy-to-use, scalable alternative to real-time computing based on costly and complex digital signal processing architectures. The connections use off-the-shelf network interface equipment, cabling and switches. Gigabit Ethernet also allows all camera types to operate across the same transport/applications platform.
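For readers unfamiliar with multicast, the sketch below shows how a sender and several receivers might share one stream using ordinary UDP sockets from the Python standard library. The group address and port are hypothetical, and this uses the operating system's IP stack, so it illustrates the addressing model only, not the high-performance delivery path discussed later in this article.

```python
import ipaddress
import socket
import struct

# Hypothetical values chosen for illustration only.
MULTICAST_GROUP = "239.1.1.1"  # an administratively scoped multicast address
MULTICAST_PORT = 5004


def is_multicast(address):
    """True if the address lies in the IP multicast range."""
    return ipaddress.ip_address(address).is_multicast


def membership_request(group):
    """Build the 8-byte ip_mreq structure used to join a group on any interface."""
    return struct.pack("4s4s", socket.inet_aton(group), socket.inet_aton("0.0.0.0"))


def open_sender(ttl=1):
    """UDP socket whose multicast packets stay on the local network (TTL 1)."""
    sock = socket.socket(socket.AF_INET, socket.SOCK_DGRAM, socket.IPPROTO_UDP)
    sock.setsockopt(socket.IPPROTO_IP, socket.IP_MULTICAST_TTL, ttl)
    return sock


def open_receiver(group=MULTICAST_GROUP, port=MULTICAST_PORT):
    """UDP socket that has joined the group; each PC would open one of these."""
    sock = socket.socket(socket.AF_INET, socket.SOCK_DGRAM, socket.IPPROTO_UDP)
    sock.setsockopt(socket.SOL_SOCKET, socket.SO_REUSEADDR, 1)
    sock.bind(("", port))
    sock.setsockopt(socket.IPPROTO_IP, socket.IP_ADD_MEMBERSHIP, membership_request(group))
    return sock


def send_to_group(payload, group=MULTICAST_GROUP, port=MULTICAST_PORT):
    """Send one datagram; every receiver that joined the group gets a copy."""
    with open_sender() as sock:
        return sock.sendto(payload, (group, port))
```

In a setup like the one in Figure 1, the display, processing and archiving PCs would each call `open_receiver()`, and the source would call `send_to_group()` once per packet; the switch, not the sender, replicates the data.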

Avoiding data delays

To build real-time machine vision systems with Gigabit Ethernet equipment, however, careful attention must be given to three performance areas: end-to-end latency (delay), CPU usage at the PC and data delivery.

High-quality, real-time video delivery is a precision operation that requires low and predictable latency from end to end. Although Gigabit Ethernet’s link speed is more than sufficient for live-action data transfer, performance also hinges on the efficiency of the techniques that convert camera data into IP packets, queue the packets for Gigabit Ethernet transmission, receive them at the PC and move the image data into PC memory for processing.

Standard Gigabit Ethernet networks use general-purpose, operating system-based software, such as the Windows or Linux IP stack, and a network interface card/chip driver. The software is optimized to manage many applications and competing demands on processing time. As a result, it introduces too much latency and unpredictability for real-time operation.

Instead, cameras and PCs need more focused packet-processing technology. One approach is to use a purpose-built packet engine either inside the camera or as an external box. These engines grab imaging data, convert it to packets and queue the packets for transmission with low and predictable latency. At the PC, the drivers shipped with standard network interface cards/chips must give way to high-performance driver software that doesn’t use the IP stack.
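The packetizing step can be pictured with a short Python sketch. The 8-byte header layout and the 1400-byte payload size are assumptions made for illustration; they are not the iPort wire format or any standardized one, and a real packet engine does this work in dedicated hardware.

```python
import struct

# Hypothetical header: frame number, packet index, packet count (network byte order).
HEADER = struct.Struct("!IHH")
PAYLOAD_BYTES = 1400  # assumed payload size that fits a standard 1500-byte Ethernet frame


def packetize(frame_id, image):
    """Split one image (a bytes object) into header-prefixed packets."""
    chunks = [image[i:i + PAYLOAD_BYTES] for i in range(0, len(image), PAYLOAD_BYTES)]
    return [HEADER.pack(frame_id, index, len(chunks)) + chunk
            for index, chunk in enumerate(chunks)]


def reassemble(packets):
    """Rebuild the image from packets that may arrive out of order."""
    parts = {}
    for packet in packets:
        _frame_id, index, _count = HEADER.unpack_from(packet)
        parts[index] = packet[HEADER.size:]
    return b"".join(parts[i] for i in sorted(parts))


# Usage: a small synthetic "image" survives the round trip even if reordered.
image = bytes(range(256)) * 20
packets = packetize(7, image)
assert reassemble(reversed(packets)) == image
```

Carrying the packet index and count in every header is what lets the receiving side place each payload directly at its final offset in memory and notice when something is missing.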

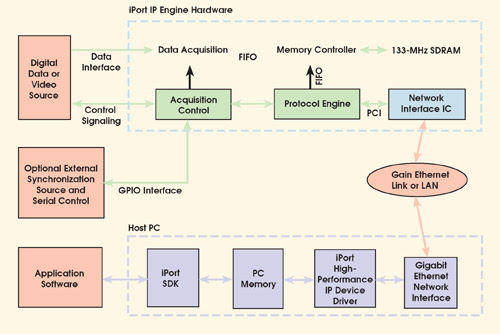

Figure 2. The iPort streams data in real time over Gigabit Ethernet using an IP engine at the camera and a high-performance device driver at the PC.

In most implementations, one such option, marketed as iPort (Figure 2), delivers predictable latencies of less than 500 μs from data source to PC memory, which is approximately equal to the latency of solutions based on frame grabbers.

Another challenge with Gigabit Ethernet in real-time systems is that the standard Windows or Linux IP stack monopolizes the CPU during high-speed data transfers, making it impossible to run vision applications at the same time. Higher-performance network interface card/chip drivers, such as the one used by iPort, can bypass the IP stack by processing incoming packets in the operating system kernel and transferring image data directly into memory. As a result, they handle full-bandwidth, high-speed data transfers using only approximately 1 percent of the CPU capacity.

The third issue is ensuring the delivery of every image taken by the camera. Because Ethernet can lose packets in some environments, the system must detect lost or erroneous packets and request them again efficiently.

Standard IP transfer protocols such as TCP, UDP and RTP have unacceptable limitations for real-time vision systems. TCP validates transfers, so packet reception is guaranteed, but latencies are large and unpredictable, host CPU requirements are high, and data throughput is low. UDP has low latency and predictable high-speed transfer performance, but no guarantee of packet reception. RTP also supports predictable, real-time multimedia streaming but offers no guarantee of packet reception.
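The idea of detecting lost packets and requesting only those again can be sketched as a small simulation in Python. The class and function names are hypothetical, no network is involved, and checksum validation of erroneous packets is omitted for brevity; this is not a description of any vendor's protocol.

```python
class FrameReceiver:
    """Collects the packets of one frame and reports which are missing."""

    def __init__(self, packet_count):
        self.packet_count = packet_count
        self.payloads = {}

    def accept(self, index, payload):
        # A duplicate simply overwrites the earlier copy, so resends are harmless.
        self.payloads[index] = payload

    def missing(self):
        return [i for i in range(self.packet_count) if i not in self.payloads]

    def frame(self):
        if self.missing():
            raise ValueError("frame incomplete")
        return b"".join(self.payloads[i] for i in range(self.packet_count))


def deliver(packets, dropped, max_rounds=3):
    """Simulate one frame transfer in which the indices in `dropped` are lost once."""
    receiver = FrameReceiver(len(packets))
    for index, payload in enumerate(packets):
        if index not in dropped:
            receiver.accept(index, payload)

    rounds = 0
    while receiver.missing() and rounds < max_rounds:
        for index in receiver.missing():            # the receiver's resend request
            receiver.accept(index, packets[index])  # the sender's retransmission
        rounds += 1
    return receiver.frame(), rounds


packets = [b"aa", b"bb", b"cc", b"dd"]
frame, rounds = deliver(packets, dropped={1, 3})
assert frame == b"aabbccdd" and rounds == 1
```

Because only the missing indices are requested, a transfer with no losses costs nothing extra, which is the property that distinguishes this approach from TCP's acknowledgment of every segment.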

The iPort protocol, in contrast, streams over UDP with features for transferring video information, including automatic error checking for guaranteed packet reception.

Next generation

Looking ahead, the use of Ethernet in vision systems is not likely to stop with Gigabit Ethernet. The next generation — 10 Gigabit Ethernet, which supports data rates of 10 Gb/s — is already running over fiber, and a specification for copper is expected from the Institute of Electrical and Electronics Engineers in 2006.

Ethernet for vision is here to stay, and it has the potential to reshape the way camera-to-PC communication is deployed in most types of machine vision systems.

Meet the author

George Chamberlain is president of Pleora Technologies Inc. in Ottawa and co-chairman of the Automated Imaging Association’s Gigabit Ethernet Vision Standard Committee.

Standardizing Gigabit Ethernet for Vision

In early 2003, soon after the first products for high-performance delivery of imaging data over Gigabit Ethernet entered the market, a small group of industry executives formed an ad hoc group to standardize the protocol for machine vision. The objective was to create an open framework for camera-to-PC communications that would allow hardware and software from different vendors to interoperate seamlessly over Gigabit Ethernet connections. Three technical subcommittees set about developing a Gigabit Ethernet camera interface, a communications protocol and an applications programming interface.

Over the next year, the Automated Imaging Association adopted the Gigabit Ethernet Vision Standard initiative, whose membership grew to more than 35 companies. The technical subcommittees expanded to include representatives from 11 companies, covering every sector of the global machine vision industry.

The particulars of the standard have changed considerably, but the goal of end-to-end communications standardization has not. The first draft of the standard, expected to be ratified in mid-2005, comprises a set of bootstrap registers and procedures, a static XML descriptor file of camera capabilities and communications protocols for device discovery, command transactions and data delivery.

The bootstrap registers will be common to all compliant devices with Gigabit Ethernet connections. They will specify high-level features, including the location of the XML descriptor file. The XML file, in turn, will provide a description of the device’s capabilities, enabling easy software interfaces based on the camera features.
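To make the role of the descriptor file concrete, the Python sketch below parses a small XML document into a feature table that software could use to locate camera registers. The element names, attribute names and addresses are invented for illustration; the draft standard's actual schema is not described in this article.

```python
import xml.etree.ElementTree as ET

# Hypothetical descriptor: every name and address here is an assumption.
DESCRIPTOR = """\
<camera model="ExampleCam">
  <feature name="Width" address="0x1000" access="rw"/>
  <feature name="Height" address="0x1004" access="rw"/>
  <feature name="SerialNumber" address="0x0100" access="ro"/>
</camera>
"""


def load_features(xml_text):
    """Map each feature name to its register address and writability."""
    root = ET.fromstring(xml_text)
    return {
        feature.get("name"): {
            "address": int(feature.get("address"), 16),
            "writable": "w" in feature.get("access"),
        }
        for feature in root.iter("feature")
    }


features = load_features(DESCRIPTOR)
print(sorted(features))
```

With a table like this, an application can look up a feature by name instead of hard-coding vendor-specific register addresses, which is the interoperability benefit the descriptor file is meant to provide.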

The communications protocols will operate at the application (top) layer of the seven-layer Open Systems Interconnection (OSI) protocol stack. They will rely on underlying standard transport protocols such as UDP and TCP.

For further details, contact Jeff Fryman at the Automated Imaging Association.