3-D time-of-flight technique provides extra dimension to safety and security applications.

Hank Hogan, Contributing Editor

For cameras, the lack of depth perception presents a depth of problems. Two-dimensional machine vision systems, for example, struggle to recognize shapes. Traditionally, three-dimensional vision has involved multiple cameras or a laser rangefinder, but both approaches have drawbacks. The first doubles the number of cameras needed, while the second requires a rapidly moving beam.

Several companies are commercializing a technique that yields 3-D information using only one sensor. Canesta Inc. of Sunnyvale, Calif., the Swiss Center for Electronics and Microtechnology Inc. (CSEM) of Neuchâtel and PMDTechnologies GmbH of Siegen, Germany, make sensors that extract distances based on time-of-flight measurements, data that helps construct 3-D images.

Standard 2-D imaging technology provides a flattened view of objects in the field of view (top). Time-of-flight measurements add distance measurements at each pixel (center) and, when smoothed by software, complete a 3-D representation of the objects (bottom). Courtesy of PMDTechnologies GmbH.

Jim Spare, vice president of marketing at Canesta, likened his company’s products to the image sensors that are found in digital cameras — with one crucial difference: “Our image sensor additionally computes the distance at every pixel, providing a depth map, or a 3-D image, of everything that is seen in the nearby area.”

An inexpensive 3-D imager would be a boost for automotive, machine vision, security and other applications. There are even ways such sensors could be useful in the biosciences (see sidebar).

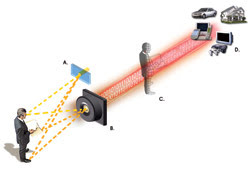

In an imaging system that incorporates time-of-flight capabilities, three-dimensional information is obtained by having a near-IR light source illuminate a subject (A). The sensor chip measures, at each pixel, the distance that the light has traveled (B). Embedded software calculates the phase shift between the transmitted and reflected light and uses that data from each pixel to create a depth map of the object (C). The sensor can be built into various products to add reactive capabilities to them (D). Courtesy of Canesta Inc.

In the 3-D time-of-flight technique, a light source is added to the system. Unlike a laser rangefinder, the source doesn’t have to be a highly collimated beam that is swept across a surface. Instead, LEDs or other light sources with an output that can be modulated at a frequency of tens of megahertz can be used. CSEM’s SwissRanger 3-D camera, for example, uses a bank of LEDs for measuring distances from a few centimeters to tens of meters with subcentimeter accuracy.

“The wavelength of the intensity-modulated light wave is located in the near-IR band, around 870 nm. However, any other wavelength at which silicon is photosensitive will be fine as well,” said Felix Lustenberger, image-sensing section head within CSEM’s photonics division. This, he added, means that wavelengths from about 350 to 1100 nm can be used.

The modulated light from the source fans out and is reflected by objects in the field of view. By comparing the signal of the reflected light with that of the modulated outbound illumination, the devices determine the phase shift and, hence, the time of flight. From that and the speed of light, they calculate the distance from each pixel to the point on the object that reflected the light; because the light travels out and back, that distance is half the round-trip path.
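The conversion from phase shift to distance can be sketched in a few lines of Python. This is a minimal illustration, not any vendor's implementation: the function names and the 20-MHz example frequency are assumptions chosen for the sketch, and the phase shift is taken as already measured, in radians.

```python
import math

C = 299_792_458.0  # speed of light in a vacuum, m/s


def phase_to_distance(phase_rad, mod_freq_hz):
    """One-way distance in meters for a measured phase shift.

    The round-trip time of flight is phase / (2 * pi * f); the light
    travels to the object and back, so the distance is half of c * t.
    """
    time_of_flight = phase_rad / (2.0 * math.pi * mod_freq_hz)
    return C * time_of_flight / 2.0


def unambiguous_range(mod_freq_hz):
    """Largest distance measurable before the phase wraps past 2 * pi."""
    return C / (2.0 * mod_freq_hz)


if __name__ == "__main__":
    f_mod = 20e6  # hypothetical modulation frequency, Hz
    print(phase_to_distance(math.pi / 2, f_mod))  # quarter-cycle phase lag
    print(unambiguous_range(f_mod))
```

Because the phase repeats every full cycle, the modulation frequency sets the longest distance that can be measured without ambiguity: roughly 15 m at 10 MHz and about 7.5 m at 20 MHz.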

At one time, figuring out the phase shift involved extensive computation, which made time-of-flight technology incompatible with an inexpensive and fast sensor. Now the calculations can be done on a chip. CSEM uses a field-programmable gate array for its calculations but is working to implement an on-chip solution, according to Lustenberger. PMDTechnologies already does all of the calculations on-chip. Canesta’s phase shift demodulation scheme is based on the physical properties of a CMOS structure, an approach that it touts as requiring little circuitry.
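To give a sense of how little arithmetic a phase measurement can require, the sketch below uses four-sample demodulation, a common textbook scheme in which the returned signal is sampled at four points spaced a quarter of a modulation period apart. It is assumed here purely for illustration; the three companies' actual demodulation schemes are not described in detail, and the function names and signal model are hypothetical.

```python
import math


def simulate_samples(phase, amplitude=1.0, offset=2.0):
    """Hypothetical signal model: four samples of a sinusoid, taken at
    reference shifts of 0, 90, 180 and 270 degrees."""
    return [offset + amplitude * math.cos(phase - k * math.pi / 2)
            for k in range(4)]


def demodulate(s0, s1, s2, s3):
    """Recover phase, amplitude and offset from the four samples."""
    i = s0 - s2  # equals 2 * amplitude * cos(phase); the offset cancels
    q = s1 - s3  # equals 2 * amplitude * sin(phase); the offset cancels
    phase = math.atan2(q, i) % (2.0 * math.pi)  # wrap into [0, 2 * pi)
    amplitude = math.hypot(i, q) / 2.0
    offset = (s0 + s1 + s2 + s3) / 4.0
    return phase, amplitude, offset


if __name__ == "__main__":
    samples = simulate_samples(phase=1.2, amplitude=0.5, offset=3.0)
    print(demodulate(*samples))
```

Taking differences of opposite samples cancels the constant background light, which is one reason schemes of this kind suit compact hardware.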

Devices from the three companies have various capabilities and specifications. Depth accuracies range from 5 mm to 1 cm, and the devices can handle distances up to about 15 m. The sensors have pixel sizes of tens to hundreds of microns on a side, a figure driven by application needs. The arrays measure from 64 × 64 pixels to 124 × 160 pixels.

In the driver’s seat

The automotive market is a target of all three companies. One area of interest involves intelligent air bag deployment. The idea is that a 3-D camera could determine the size of the person sitting in a seat, as well as that person’s position and distance from the air bag. This information would be used to prevent air bag deployment when the occupant is in an unsafe position, such as a driver leaning over the steering wheel.

However, that’s not the only automotive application. Bernd Buxbaum, CEO of PMDTechnologies, said that the company is working on projects involving pedestrian safety, stop-and-go driving, and driver assistance by way of gesture recognition. In such applications, a 3-D camera would enable automated systems to judge the distance from a car to objects, such as people in a crosswalk or parked or moving vehicles. The system would alert the driver or possibly take action on its own so that accidents could be avoided.

In a similar vein, Cedes AG of Landquart, Switzerland, is looking for ways to use these cameras to improve the safety of dangerous, automated machinery, such as elevators, revolving doors, swing gates, punch presses and welding robots. For example, the company makes sensors to open automatic doors and to keep them open until it is safe to close them.

According to Cedes President and CEO Beat de Coi, current techniques cannot secure all of the dangerous zones around a closing door. So the company has licensed 3-D time-of-flight camera technology from CSEM, with plans to incorporate it into a sensor, a move de Coi said will increase the safety of automatic-door operation dramatically.

“The 3-D camera allows us to monitor the whole leading edge as well as a certain part around it like an immaterial bumper,” he said.

De Coi noted that samples of these new sensors were already working in the laboratory setting, and that prototype production had already started. He forecast that field tests in real-world conditions would begin within a few months.

A well-shaped future

Many other applications can be addressed by the time-of-flight technique, including intruder detection, face identification and games that respond without the need to touch a screen or wear any gear. Machine vision also could benefit from inexpensive 3-D sensors, allowing the use of fewer cameras.

“The potential market value and the applications are almost unlimited, and new ones are discovered on a daily basis,” said CSEM’s Lustenberger.

The three vendors are working separately on a number of improvements for their products. Among these plans are attempts to enlarge the pixel arrays, enhance the distance resolution, extend the operating temperature range and increase the ability of the sensors to function in bright sunlight. Canesta’s Spare noted that his company is working on adding full-color capabilities to its cameras.

On the other hand, current devices are good enough for certain applications, and the expectation is that they will begin to turn up in products once design and production cycles are completed. That, however, will take some time, Spare predicted. “I would expect that you’d see end-user products start to appear in the market by the end of next year.”

The Technology Considers Biosciences

One use of 3-D time-of-flight technology has nothing to do with gauging distance. Instead, it lies in biomarker applications. Typically, biomarker techniques involve attaching fluorescent markers to biological molecules of interest. When illuminated by a laser or other light source of the right wavelength, the markers glow. The problem is that natural fluorescence can drown out artificially induced fluorescence. Solutions to date have involved designing and using brighter biomarkers or finding other ways to get more light out and, thus, to separate what is of interest from the background noise.

However, time-of-flight technology provides a way to detect the phase shift in a fluorescent signal. Most natural fluorescence has a much shorter lifetime than that of dyes and other fluorophores. By modulating the illumination and discarding signals with a phase shift, or time lag, below a threshold, researchers can eliminate natural fluorescence. That highlights the tags and also brings other benefits.
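The phase-threshold idea can be sketched as follows. The sketch assumes a single-exponential fluorescence decay, for which the phase lag φ under sinusoidal modulation at frequency f satisfies tan φ = 2πfτ, where τ is the lifetime. The function names, the 1-MHz modulation frequency and the lifetimes are hypothetical values chosen for illustration, not measurements.

```python
import math


def phase_lag(lifetime_s, mod_freq_hz):
    """Phase lag (radians) of a single-exponential emitter."""
    return math.atan(2.0 * math.pi * mod_freq_hz * lifetime_s)


def lifetime_from_phase(phase_rad, mod_freq_hz):
    """Invert the relation: lifetime (seconds) from a measured phase lag."""
    return math.tan(phase_rad) / (2.0 * math.pi * mod_freq_hz)


def keep_long_lived(pixel_phases, mod_freq_hz, min_lifetime_s):
    """Discard pixels whose phase lag falls below the threshold that
    corresponds to min_lifetime_s; discarded pixels become None."""
    threshold = phase_lag(min_lifetime_s, mod_freq_hz)
    return [p if p >= threshold else None for p in pixel_phases]


if __name__ == "__main__":
    f_mod = 1e6  # hypothetical modulation frequency, Hz
    # Hypothetical pixels: a 2-ns background signal and a 100-ns tag.
    phases = [phase_lag(2e-9, f_mod), phase_lag(100e-9, f_mod)]
    print(keep_long_lived(phases, f_mod, min_lifetime_s=20e-9))
```

In this toy case, the short-lived background pixel is rejected and the long-lived tag is kept, with no need for a brighter marker.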

“Lifetime information would be highly interesting for any biological fluorescence or luminescence application because either it gives you additional information or it allows you to extract the information you would like to have, and it’s much easier compared to selecting more delicate dyes,” said Gerhard Holst, manager of the science and research department at PCO AG of Kelheim, Germany. PCO develops and produces specialized fast and sensitive video camera systems, mainly for scientific applications.

Discussions about the use of the time-of-flight technique in the biosciences are in a preliminary phase, but Holst, for one, is very interested in the possibilities.