Photonics technologies are advancing telescopes both on Earth and in space.

Hank Hogan, Contributing Editor

A science that studies exploding stars is itself exploding. Over the next decade, astronomers will more than double the cumulative worldwide telescope mirror area, survey the sky as never before while looking for killer asteroids, resolve objects currently impossible to separate — thanks to optical interferometry — and spot Earth-like and possibly life-sustaining planets through the clever use of lasers. There are also some projects that involve putting optical and radio telescopes in extreme environments such as Antarctica and the far side of the moon.

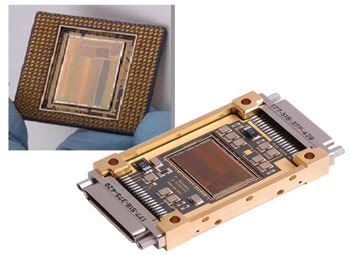

Developed for use on the James Webb Space Telescope, the SIDECAR chip is a microprocessor and is being tested on the ground before flying in space. The spaceflight package is on the right. Courtesy of Teledyne Imaging Systems.

Advances in photonics-related technology make nearly all of these projects possible. A look reveals some of these innovations and their impact.

Going big

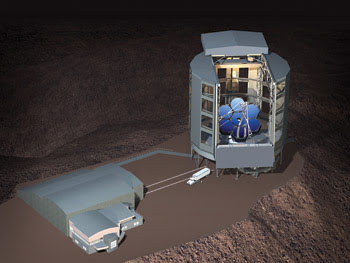

The biggest and most expensive of the new astronomy projects are three planned terrestrial mega-scopes, along with NASA’s space-borne James Webb Space Telescope. When constructed, the 25-m Giant Magellan Telescope, the aptly named Thirty Meter Telescope and the 42-m European Extremely Large Telescope will drive the cumulative amount of worldwide telescope mirror area from about 1400 m² today to well more than 3000 m².
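The mirror-area claim can be checked with a little arithmetic. The sketch below is only an illustration: it treats each primary as an idealized filled circle, ignoring segment gaps and central obstructions, and the function and variable names are made up for the example.

```python
import math


def mirror_area_m2(diameter_m: float) -> float:
    """Area of an idealized, filled circular mirror (an assumption)."""
    return math.pi * (diameter_m / 2.0) ** 2


# Primary mirror diameters quoted in the article, in meters.
planned = {
    "Giant Magellan Telescope": 25.0,
    "Thirty Meter Telescope": 30.0,
    "European Extremely Large Telescope": 42.0,
}

current_total_m2 = 1400.0  # worldwide figure quoted in the article
added_m2 = sum(mirror_area_m2(d) for d in planned.values())
projected_total_m2 = current_total_m2 + added_m2

for name, diameter in planned.items():
    print(f"{name}: about {mirror_area_m2(diameter):.0f} m^2")
print(f"Added area: about {added_m2:.0f} m^2")
print(f"Projected total: about {projected_total_m2:.0f} m^2")
```

Under these assumptions the three telescopes add roughly 2600 m², which is consistent with a worldwide total well above 3000 m².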

The next generation of telescopes will be huge, as can be seen in this artist’s rendering of the Giant Magellan Telescope. Of the three proposed telescopes, which also include the Thirty Meter Telescope and the European Extremely Large Telescope, the Giant Magellan Telescope will have the smallest primary mirror. Courtesy of Giant Magellan Telescope, Carnegie Observatories.

Such monsters are possible thanks to advances in adaptive optics and improvements in the ability to effectively construct large-area mirrors out of much smaller segments.

James W. Beletic is director of astronomy and civil space for Camarillo, Calif.-based Teledyne Imaging Sensors, a company involved in the Webb telescope sensors and electronics. A few years ago he was deputy director at the Keck Observatory in Hawaii, which has a 10-m telescope. Before that he worked at the European Southern Observatory, which has four 8-m telescopes in Chile.

Electronics will help astronomers see what has not been seen before. This image of star-forming regions in Orion and S-140 was taken with the University of Hawaii’s 2.2-m telescope and processed with four SIDECAR (system for image digitization, enhancement, control and retrieval) chips. Courtesy of D. Hall, K.W. Hodapp, C. Aspin, UHIA.

The big terrestrial scopes are not cheap, with an estimated total cost of more than $2.5 billion. Beletic noted that the European effort has a steady funding supply, that a billionaire kicked in money for the Thirty Meter Telescope, and that the Carnegie Institution-led group backing the Giant Magellan Telescope has in the past proved adept at finding funding. For those reasons, the projects all have momentum. “Those are going to happen. They used to just be a sparkle in people’s eyes, but they’re definitely going to happen,” Beletic said.

The 6.5-m Webb telescope has NASA funding as well as its own technology enablers. One is an application-specific integrated circuit designed by Teledyne. The integrated circuit is in what has been dubbed a system for image digitization, enhancement, control and retrieval, or SIDECAR. The chip is the equivalent of about 1 m³ and 20 lb of electronics. This miniaturization decreases the costs significantly. The Webb telescope will sit about a million miles from Earth, and it will cost an estimated $30,000 a kilogram, or about $15,000 a pound, to reach that orbit.
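For a rough sense of what that miniaturization is worth, the quoted launch cost can be applied to the 20 lb of electronics the chip stands in for. This is a back-of-the-envelope sketch, not a program figure: the chip's own mass is not given, so the result is an upper bound on the saving, and the variable names are illustrative.

```python
LB_PER_KG = 2.20462  # pounds in one kilogram

cost_per_kg_usd = 30_000.0  # launch cost quoted in the article
replaced_mass_lb = 20.0     # electronics mass the chip is said to replace

# Exact per-pound equivalent of the per-kilogram figure.
cost_per_lb_usd = cost_per_kg_usd / LB_PER_KG

# Cost to launch the replaced electronics (upper bound on the saving,
# because the mass of the chip itself is not subtracted).
replaced_mass_kg = replaced_mass_lb / LB_PER_KG
launch_cost_usd = replaced_mass_kg * cost_per_kg_usd

print(f"Per-pound cost: about ${cost_per_lb_usd:,.0f}")
print(f"Launching {replaced_mass_lb:.0f} lb: about ${launch_cost_usd:,.0f}")
```

The exact conversion comes to about $13,600 a pound, in the same range as the rounded figure quoted, and launching 20 lb works out to roughly $270,000.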

The chip also consumes less power and generates less heat, which brings two benefits. First, the imagers on the telescope will operate at a cryogenic 37 K, and less power consumed translates into less material needed to radiate away heat in orbit, which reduces cost and also increases the mass that can be devoted to doing science. Second, because the SIDECAR device consumes little power, it can be within a few inches of the three instruments the device will control: the telescope’s near-infrared camera, near-infrared spectrograph and fine-guidance sensors. That proximity cuts noise and improves the fidelity of the conversion of the analog image into digital data, a transformation handled by the converters on the chip.

The systems are being tested before the telescope is scheduled to fly in 2013. The SIDECAR chip is being tested by having it control imagers on the ground at the University of Hawaii Observatory and elsewhere. It also will fly before the Webb telescope does. “It’s being used to repair the Hubble Space Telescope,” Beletic said. The electronics in the Hubble’s advanced camera for surveys failed more than a year ago. The SIDECAR chip will control the two-chip CCD mosaic in the camera, which is the Hubble’s most popular instrument. The repair mission is scheduled for later this year.

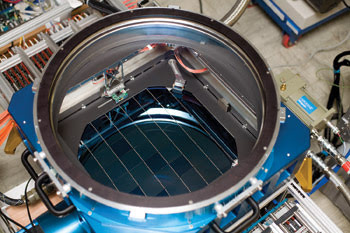

Of course, there are other telescope projects under way that also benefit from technological advances. One such is the Panoramic Survey Telescope and Rapid Response System, or Pan-STARRS. It is possible only because of improvements in detector technology, particularly the increasing size of sensors. Pan-STARRS will ultimately consist of four 1.8-m primary mirror systems, with a prototype single telescope system going live soon. The final configuration is expected to be running by approximately 2012.

Each Pan-STARRS telescope will have a 1.4-gigapixel CCD camera. Photo by Richard Wainscoat, University of Hawaii.

The system, which will be in Hawaii, will map the sky, looking for any objects brighter than apparent magnitude 24 — millions of times fainter than what can be seen with the naked eye. The field of view will be six times the diameter of the full moon, and the items captured by Pan-STARRS will include asteroids, comets and trans-Neptunian objects like Pluto.
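The "millions of times fainter" comparison follows from the astronomical magnitude scale, in which a difference of five magnitudes corresponds to a factor of 100 in brightness. The sketch below assumes a naked-eye limit of sixth magnitude, the figure used later in the article; the function name is illustrative.

```python
def brightness_ratio(mag_faint: float, mag_bright: float) -> float:
    """How many times fainter mag_faint is than mag_bright.

    Uses the standard magnitude scale: 5 magnitudes = a factor of 100.
    """
    return 10 ** (0.4 * (mag_faint - mag_bright))


NAKED_EYE_LIMIT = 6.0  # assumed naked-eye limit, in magnitudes
SURVEY_LIMIT = 24.0    # Pan-STARRS limit quoted in the article

ratio = brightness_ratio(SURVEY_LIMIT, NAKED_EYE_LIMIT)
print(f"Magnitude {SURVEY_LIMIT:.0f} is about {ratio:.1e} times fainter "
      f"than magnitude {NAKED_EYE_LIMIT:.0f}")
```

With those assumptions the ratio is on the order of 16 million.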

Protecting against asteroids

Alan Fitzsimmons is an astronomy professor at Queen’s University Belfast in the UK, part of the consortium that will operate the prototype system. He is looking forward to investigating what the sky survey will reveal, which should eventually include many of the estimated 50,000 asteroids greater than 150 m in size that pass near Earth. Such rocks are a good deal smaller than what is thought to have killed the dinosaurs, but Fitzsimmons noted that does not mean that they are unimportant. “We don’t believe those smaller objects, if they happen to encounter the Earth, will cause global effects. However, they’d be pretty noticeable if you were in the vicinity,” he said.

Pan-STARRS is the first of the next generation of sky surveys to go after such objects, he added. This mapping is possible because each telescope will be equipped with a 1.4-gigapixel CCD camera, currently the largest such device in the world. That size presents problems with both manufacturing and readout, which are solved by segmenting the imager. The focal plane of each camera consists of an almost complete 64 × 64 array of CCDs, each containing 600 × 600 pixels. This segmentation makes the manufacturing process easier because it is not necessary to build a single chip with 1.4 billion good pixels.
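The pixel count can be reproduced from the array dimensions. This is a simple check, assuming the 1.4-gigapixel figure is a rounded value, so the implied unpopulated fraction is only approximate; the names are illustrative.

```python
CCDS_PER_SIDE = 64        # 64 x 64 array of CCDs, from the article
PIXELS_PER_CCD_SIDE = 600  # each CCD is 600 x 600 pixels

pixels_per_ccd = PIXELS_PER_CCD_SIDE ** 2
full_grid_pixels = CCDS_PER_SIDE ** 2 * pixels_per_ccd

quoted_pixels = 1.4e9  # rounded camera size quoted in the article

# Fraction of a complete grid left unpopulated if the quoted size is exact.
missing_fraction = 1.0 - quoted_pixels / full_grid_pixels

print(f"Complete 64 x 64 grid: {full_grid_pixels / 1e9:.2f} gigapixels")
print(f"Implied unpopulated fraction: about {missing_fraction:.0%}")
```

A fully populated grid would hold about 1.47 gigapixels, so an "almost complete" array at 1.4 gigapixels is missing on the order of 5 percent of its positions.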

It also helps in readout, since that can be done using eight channels in parallel. As a result, the readout time is short and the camera can be cycled in as little as 8 s, a critical figure because a survey requires many, many exposures. The CCDs also have orthogonal transfer technology, which allows for an electronic version of traditional tip-tilt adaptive optics to be implemented within the CCD itself, thereby improving performance.

As integrated circuits, the CCDs benefit from Moore’s Law, the doubling of transistors on a chip that happens every few years. Nick Kaiser, an astronomy professor at the University of Hawaii and principal investigator of Pan-STARRS, said that, for CCDs, the equivalent involves the number of pixels. “The size of detectors doubles every 19 months,” he said.

He added, “There’s no reason why that trend shouldn’t continue into the future.”
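If that trend did hold, the growth compounds quickly. The sketch below simply extrapolates the quoted 19-month doubling time; it is an illustration of the arithmetic, not a forecast, and the function name is made up for the example.

```python
def growth_factor(years: float, doubling_months: float = 19.0) -> float:
    """Multiplicative growth after `years`, given a fixed doubling time."""
    return 2.0 ** (years * 12.0 / doubling_months)


for years in (5, 10):
    print(f"After {years} years: about {growth_factor(years):.0f}x "
          f"the pixel count")
```

At that pace, detector size would grow by a factor of about 80 in a decade.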

Kaiser expects that 1000 or so moving objects will be captured in every Pan-STARRS exposure. The data will be processed so that asteroids, comets and other objects can be detected and identified. Given that the system will churn out terabytes of data per night, the data handling will not be trivial.

Something other than glass

For a really large telescope, consider the optical interferometer prototype operated by the US Navy, the Naval Research Laboratory and Lowell Observatory at a site southwest of Flagstaff, Ariz. Because the points on the interferometer are 435 m apart, the system has the resolution of an optical telescope of the same diameter. In theory, then, the optical interferometer could allow astronomers to see objects separated from one another by the tiniest of margins. It could, for example, enable astronomers to see features such as sunspots on stars light years away or to prove that what are thought to be single stars are actually twins or triplets.
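The resolution gain can be estimated with the usual rule of thumb that the smallest resolvable angle is roughly the wavelength divided by the aperture or baseline. The sketch below assumes green light at 550 nm and ignores the order-unity factor that depends on aperture shape; the 10-m comparison uses the Keck aperture mentioned earlier. Names are illustrative.

```python
import math

MAS_PER_RADIAN = math.degrees(1.0) * 3600.0 * 1000.0  # milliarcseconds


def resolution_mas(wavelength_m: float, size_m: float) -> float:
    """Approximate angular resolution, wavelength / size, in mas."""
    return (wavelength_m / size_m) * MAS_PER_RADIAN


WAVELENGTH_M = 550e-9  # assumed visible wavelength
BASELINE_M = 435.0     # interferometer baseline quoted in the article
SINGLE_DISH_M = 10.0   # Keck-class aperture, for comparison

interferometer_mas = resolution_mas(WAVELENGTH_M, BASELINE_M)
single_dish_mas = resolution_mas(WAVELENGTH_M, SINGLE_DISH_M)

print(f"435-m baseline: about {interferometer_mas:.2f} mas")
print(f"10-m telescope: about {single_dish_mas:.1f} mas")
```

Under these assumptions the interferometer resolves angles of a few tenths of a milliarcsecond, some 40 times finer than a 10-m telescope at its diffraction limit.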

In practice, the small size of the 60-mm flat-glass mirrors, along with other constraints, limits the system to objects of the sixth magnitude or brighter, objects visible to the naked eye. What is needed are telescopes that are large yet lightweight, making it possible to reconfigure the interferometer as needed without sacrificing too much light-gathering capability.

Enter carbon fiber-reinforced polymer mirrors. Manufactured by embedding carbon fibers in a resin, these mirrors are roughly a tenth of the weight of their glass counterparts, provided that not only the mirror but also the supporting structure is made of the material. Advances in the technology now allow such companies as Composite Mirror Applications of Tucson, Ariz., to construct mirrors with a wavefront accuracy of less than λ/4 peak to valley, a smoothness demanded by telescope mirrors.

The material also offers other advantages over glass. Traditionally, glass blanks are ground down into the right shape in a process that takes months for large mirrors. Producing two or more matched mirrors requires multiple grinding operations, each done with painstaking care to ensure that the mirrors are as alike as necessary. In contrast, the composite material only requires the production of a high-quality mandrel, against which the composite cures. Once the mandrel is done, replicas can be produced quickly at a cost that is as little as one-tenth that of a glass equivalent.

Jonathan R. Andrews, an electrical engineer with the Washington-based Naval Research Laboratory, said that the composite offers another advantage when used in telescopes. The orientation of the carbon fibers in one layer relative to another can be set so that the material has specific properties. “You actually have the ability to control the coefficient of thermal expansion in different directions,” he said.

Thus, it is possible to make the distance between the primary and the secondary mirror not react to a change in temperature. As a result, there will not be focus changes because of temperature shifts.

The current mirrors in the optical interferometer are being replaced with 1.4-m carbon fiber-reinforced polymer mirrors. When completed, the change should boost the system’s ability to see dim objects to the 12th magnitude, about 250 times dimmer than what the unaided eye can spot.

Spotting other Earths

Finally, a recent technological advance may make it possible to detect Earth-like planets orbiting distant stars. The most common way such planets are spotted now is by seeing stars wobble. As a planet travels around a star, it pulls the star back and forth, creating a distinctive, periodic spectral shift. The size of the shift is related to the mass of the planet and how close it is to the star.

Given current technology, astronomers have been able to detect such a wobble only for large planets whizzing closely around stars. A planet like Earth orbiting a star like the sun would produce a signal more than 10 times too small to be picked up.
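The scale of the problem can be sketched with the standard expression for the star's velocity wobble in a circular, edge-on orbit. The constants are textbook values; the 4-day "hot Jupiter" and the 1 m/s detection floor are assumptions chosen for illustration and are not figures from the article.

```python
import math

G = 6.674e-11          # gravitational constant, m^3 kg^-1 s^-2
M_SUN = 1.989e30       # kg
M_EARTH = 5.972e24     # kg
M_JUPITER = 1.898e27   # kg
DAY_S = 86_400.0
YEAR_S = 365.25 * DAY_S


def stellar_wobble_m_s(planet_kg: float, star_kg: float,
                       period_s: float) -> float:
    """Star's velocity semi-amplitude for a circular, edge-on orbit."""
    return ((2.0 * math.pi * G / period_s) ** (1.0 / 3.0)
            * planet_kg / (star_kg + planet_kg) ** (2.0 / 3.0))


k_earth = stellar_wobble_m_s(M_EARTH, M_SUN, YEAR_S)
k_hot_jupiter = stellar_wobble_m_s(M_JUPITER, M_SUN, 4.0 * DAY_S)

ASSUMED_FLOOR_M_S = 1.0  # hypothetical detection limit, for illustration

print(f"Earth-sun wobble: about {k_earth * 100:.0f} cm/s")
print(f"4-day Jupiter-mass planet: about {k_hot_jupiter:.0f} m/s")
print(f"Shortfall vs. assumed floor: {ASSUMED_FLOOR_M_S / k_earth:.0f}x")
```

An Earth twin moves its star by only about 9 cm/s, while a close-in giant planet produces a wobble more than a thousand times larger, which is why only the latter have been found this way.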

A team of researchers led by physicist Ronald L. Walsworth from the Harvard-Smithsonian Center for Astrophysics and from Harvard University, both in Cambridge, Mass., reported a possible solution in the April 2008 issue of Nature. They devised a laser frequency comb based on a mode-locked Ti:sapphire femtosecond laser and a Fabry-Perot cavity. With the laser, they generated a source comb and fed it into the cavity, creating a final output of regularly spaced lines. That output, in turn, could serve as the calibration source for a spectrograph and thereby provide the precision necessary to detect an Earth-like planet, a process that will require stable output from the device for at least a year. The system also could be used in a direct measurement of the decelerating expansion of the universe.
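The filtering idea can be illustrated with a toy model. A mode-locked laser emits lines at an offset plus integer multiples of its repetition rate, and a cavity whose free spectral range is a whole multiple of that rate passes only every Nth line, leaving a sparser but still evenly spaced comb. Every number below is hypothetical, chosen only to make the arithmetic visible, and the cavity is treated as a perfect filter, which a real one is not.

```python
F_REP_HZ = 1_000_000_000   # hypothetical source-comb line spacing (1 GHz)
F_OFFSET_HZ = 250_000_000  # hypothetical comb offset frequency
FILTER_EVERY = 40          # hypothetical: cavity spacing = 40 x F_REP_HZ


def source_comb(first_index: int, n_lines: int) -> list[int]:
    """Frequencies (Hz) of consecutive source-comb lines."""
    return [F_OFFSET_HZ + n * F_REP_HZ
            for n in range(first_index, first_index + n_lines)]


def ideal_cavity_filter(lines: list[int], fsr_hz: int,
                        resonance_hz: int) -> list[int]:
    """Keep only lines that sit exactly on a cavity resonance."""
    return [f for f in lines if (f - resonance_hz) % fsr_hz == 0]


lines = source_comb(first_index=375_000, n_lines=400)  # near 375 THz
cavity_fsr_hz = FILTER_EVERY * F_REP_HZ
passed = ideal_cavity_filter(lines, cavity_fsr_hz, resonance_hz=lines[0])
spacings = {b - a for a, b in zip(passed, passed[1:])}

print(f"{len(lines)} source lines in, {len(passed)} lines out")
print(f"Output spacing: {spacings.pop() / 1e9:.0f} GHz")
```

The wider spacing is the point of the exercise in this sketch: lines that are too close together would blur into one another in a spectrograph, while widely and evenly spaced lines can serve as individual calibration markers.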

The astro-comb is scheduled to be deployed at a telescope in Arizona in July. Results from its initial use will not be available for some time.

Going to extremes

It is not technology alone that is helping astronomers achieve new telescope performance. Location matters too. Two projects, one near-term and optical and the other long-term and radio-wave, illustrate this point.

In the case of the first project, a consortium plans to build an automated observatory in Antarctica. Team member Lifan Wang, director of the Chinese Center for Antarctic Astronomy and an associate professor of physics at Texas A&M University, College Station, noted that long nights near the South Pole would allow continuous monitoring of stars for almost four months at a time. There is no other place on Earth where this is possible.

This image is an artist’s rendering of a robotic observatory in Antarctica. The nights are cold and clear, and they last for months, potentially producing excellent visibility and the chance to do astronomy that cannot be done anywhere else on Earth. Courtesy of the Chinese Center for Antarctic Astronomy and Nanjing Institute of Astronomical Optics Technology.

Antarctica offers other advantages. The air is cold and, therefore, very dry. Thus, the water vapor that would normally absorb light at particular wavelengths will be absent, improving the ability to see through the air at those wavelengths. If observations can be done at elevation and above the turbulent ground-hugging boundary layer, the sharpness of the images obtained should be excellent, perhaps better than anywhere else in the world.

The consortium has a site in mind — a 13,000-ft-high spot east of the South Pole. Wang expects to be able to separate objects that appear close together better there than could be done elsewhere. “We expect the image to be very, very sharp,” he said.

For example, the resolution in Chile and Hawaii, two popular telescope sites, is about 0.6 or 0.7 arcsec in the visible. In Antarctica, that same figure may be only 0.3 arcsec.

Because of the site’s remoteness and harsh conditions, the observatory will have to be operated robotically. For now, instruments are collecting data to quantify the site’s properties, which may enable the construction of an observatory within the next five years or so.

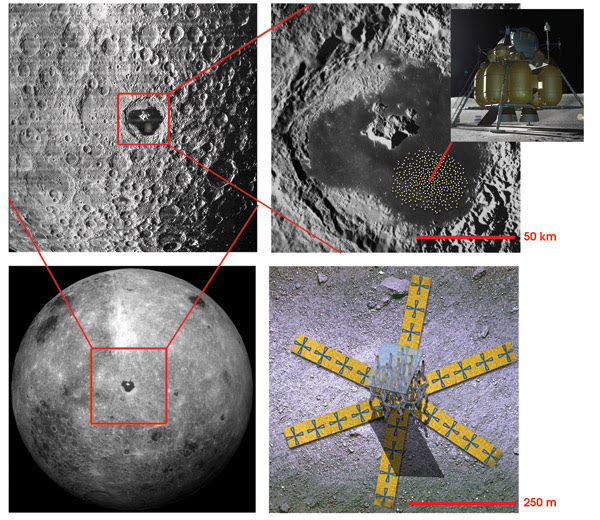

In this image of the far side of the moon (bottom left) the crater Tsiolkovsky is highlighted (top left and right). Scientists are studying the feasibility of placing a large radio telescope interferometer consisting of many stations over a large area (red dots, top right, with close-up of a single station, bottom right). If built, the instrument could enable scientists to peer into the time before the first stars ignited. Courtesy of NASA/Goddard.

For an even more exotic location, there is the far side of the moon. Protected by several thousand miles of rock, it is the only nearby place in the universe where radio astronomers can probe the time after the big bang but before the first stars ignited. These so-called dark ages lasted several hundred million years, during which time only a single radio emission line from hydrogen was present. If that signal can be captured, it might be possible to trace the evolution of the universe.

The problem is that terrestrial sources and the sun are much brighter than the faint redshifted signal from the dark ages, which explains the need to go someplace protected from all that interference.

Joseph Lazio, a radio astronomer at the Naval Research Laboratory in Washington, heads the Dark Ages Lunar Interferometer project. The goal, which might take as long as 20 years to complete, is to put a few hundred stations spread out over tens of kilometers on the far side of the moon. Doing so will require using robots to assemble the device and the development of very low power electronics and very lightweight assemblies.

Feasibility studies are under way, with the chance that a much smaller and simpler prototype might be deployed within the next 10 years. This prototype would be on the near side of the moon and would study the sun. The far-side instrument would be much larger and more complex. Fortunately, Lazio noted, meaningful data will result, even if not every station and every antenna works.

“One of the nice things about an interferometer is that it degrades gracefully,” he said.