For Kamalina Srikant, a product marketing engineer with vision software and hardware manufacturer National Instruments of Austin, Texas, improving imaging technology isn’t just a good idea, it may be a necessity – in part, because it could help reduce the worldwide carbon footprint.

Vision systems can inspect solar cells, properly site and position a solar grid, and direct cells or mirrors to track the sun. Such techniques can lower the cost per watt of solar power by varying amounts and make it more attractive, thereby reducing carbon emissions.

Vision systems cannot make the sun shine brighter, but they can improve the manufacturing of solar cells, thereby lowering the cost per watt of solar power. Courtesy of National Instruments.

Making solar energy economical is one of the grand challenges from the US National Academy of Engineering. The appearance of photonics-based imaging as part of a solution to one of the organization’s objectives is no surprise to Srikant. “There’s actually quite a few of them where vision could play a role to help solve some of these.”

She cited, for example, reverse engineering of the brain and engineering of better medicines. The problems discussed here are not as big as these, but several trends show how improved imaging and vision systems are tackling smaller ones and reveal some of what still must be done.

Gaining perspective and controlling robots

Despite what cameras depict, the world is not flat. For some applications, such as reading a label on a bottle, two-dimensional imaging is fine. However, that is not the case for what promises to be the growing use of vision in robotics.

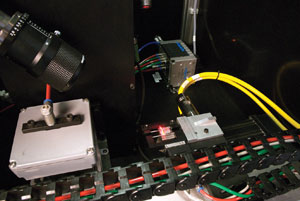

To avoid collisions while operating in tight confines, robots increasingly will use vision guidance, as do the ones shown here, which are involved in fully automated memory chip testing. Courtesy of ImagingLab.

Ignazio Piacentini, CEO of ImagingLab Srl in Lodi, Italy, a robotics and vision technology developer, noted that today’s robots often perform fairly simple jobs blindly. By 2012, he said, up to 40 percent of new robots will make use of vision to automatically identify parts, to see if it is safe to take a particular path from point A to point B, to gain better positioning accuracy and to perform other tasks.

Such applications highlight a challenge. “Robots are inherently three-dimensional,” Piacentini said. “Hence, moving from 2-D to 3-D vision is of tremendous importance.”

There are a number of ways vision systems can accomplish this. They can do it stereoscopically, as people do, through the use of two cameras. Because of cost and complexity, however, most systems take another approach.

Some use structured light, projecting a grid onto an object and deducing deviations from a plane by distortions in the grid, for example. Others employ laser scanners to extract distances point by point. Still others use time-of-flight measurements to gauge distance across an array. Currently, Piacentini said, although laser scanning and structured light are more robust for industrial applications, suitable time-of-flight cameras are starting to appear.
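The geometry behind two of these approaches can be sketched in a few lines. The example below is a minimal illustration, not any vendor's implementation: it assumes an idealized, rectified two-camera setup for the stereo case and a direct round-trip timing measurement for the time-of-flight case. The function names and sample values are hypothetical.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def stereo_depth_m(focal_length_px: float, baseline_m: float, disparity_px: float) -> float:
    """Depth of a point seen by two parallel, rectified cameras.

    Assumes the textbook pinhole relation Z = f * B / d, where d is the
    horizontal shift (disparity) of the point between the two images.
    """
    if disparity_px <= 0:
        raise ValueError("disparity must be positive for a point in front of the cameras")
    return focal_length_px * baseline_m / disparity_px


def tof_distance_m(round_trip_s: float) -> float:
    """Distance from the time light takes to reach an object and return."""
    if round_trip_s < 0:
        raise ValueError("round-trip time cannot be negative")
    # The light covers the distance twice, so halve the total path.
    return SPEED_OF_LIGHT_M_PER_S * round_trip_s / 2.0


def tof_depth_map(round_trip_times):
    """Apply the time-of-flight relation to every pixel of a 2-D array (list of rows)."""
    return [[tof_distance_m(t) for t in row] for row in round_trip_times]


if __name__ == "__main__":
    # Hypothetical values: 800-pixel focal length, 10 cm baseline, 20-pixel disparity.
    print(stereo_depth_m(800.0, 0.1, 20.0))
    # A 10 ns round trip corresponds to roughly 1.5 m.
    print(tof_distance_m(10e-9))
```

The time-of-flight relation also shows why such cameras are demanding to build: resolving a centimeter of depth means resolving timing differences of tens of picoseconds.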

Besides hardware becoming available, software is beginning to show up. Wolfgang Eckstein, managing director of vision software supplier MVTec Software GmbH of Munich, Germany, said that his company is developing new 3-D imaging methods. One, for instance, offers perspective deformable matching, the better to identify objects whose appearance is distorted.

Three-dimensional vision, he said, “will revolutionize machine vision and will provide advantages to many applications in well-established industries such as semiconductors and robotics.”

Adding the third dimension alone may not be enough, at least when it comes to some common robotic tasks, said Michael Muldoon, business solutions engineer for AV&R Vision & Robotics Inc. The Montreal-based systems integrator has been involved in hundreds of industrial automation projects.

The problem that Muldoon pointed to involves the field of view, resolution and speed. Often, a vision system will take measurements of a part to verify dimensional tolerances. Capturing images in 3-D could make that task faster and more accurate because fewer views will be needed and perspective-related distortions will be better accounted for.

But vision systems also are used for defect inspection. The challenge is that scratches, tool marks and other defects typically are much smaller than the dimensions of a part. Systems designed for measurement and those intended for defect inspection often differ markedly in resolution and in the speed with which they can scan an entire part, Muldoon said.

“There’s a big gap between the two. So having a system that’s somewhere in the middle – we would find that very useful.”
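A rough calculation illustrates the gap Muldoon describes. The sketch below assumes a simple rule of thumb, that the smallest feature of interest should span a few pixels, and uses hypothetical part and defect sizes that do not come from AV&R.

```python
import math


def pixels_needed(field_of_view_mm: float, smallest_feature_mm: float,
                  pixels_per_feature: float = 2.0) -> int:
    """Pixels required along one axis so the smallest feature spans
    `pixels_per_feature` pixels across the whole field of view."""
    if field_of_view_mm <= 0 or smallest_feature_mm <= 0 or pixels_per_feature <= 0:
        raise ValueError("all arguments must be positive")
    return math.ceil(field_of_view_mm / smallest_feature_mm * pixels_per_feature)


if __name__ == "__main__":
    part_width_mm = 100.0  # hypothetical part size

    # Dimensional measurement: hypothetical 0.5 mm tolerance, 2 pixels per feature.
    print("measurement:", pixels_needed(part_width_mm, 0.5, 2.0), "pixels across")

    # Defect inspection: hypothetical 0.02 mm scratch, 3 pixels per feature.
    print("inspection: ", pixels_needed(part_width_mm, 0.02, 3.0), "pixels across")
```

With these assumed numbers, the inspection case needs dozens of times more pixels along each axis than the measurement case. In practice that means a smaller field of view per image and many more images to cover the same part.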

People watching

For other vision and imaging trends, consider the security arena. There, improved technology promises better performance and perhaps an end to one set of headaches, that of false alarms. However, bandwidth is a looming issue that may require smarter security cameras.

One technological change is that digital cameras are replacing analog ones. The new cameras use IP, or Internet Protocol, technology. They are addressable as nodes on the network, easily added or removed, and can be remotely configured from a central location. Moreover, the traffic to and from them can travel over any IP-capable network.

Surveillance cameras are going digital, with increasing resolution. These cameras stream data using Internet Protocol. Courtesy of JAI.

This change from analog to digital also will likely be accompanied by an increase in the resolution, said Tue Mørck, vice president of marketing at JAI in Copenhagen, Denmark. “We think the HDTV [high-definition television] format will be very popular. It will perhaps be the new standard in a few years.”

That is good news for anyone or anything looking at an image, but potentially bad news for the network. The switch to a higher-resolution digital signal will roughly double the number of scan lines and increase bandwidth demand fourfold.
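The fourfold figure follows from simple arithmetic: if both the number of lines and the number of pixels per line double, the pixel count per frame quadruples. The sketch below works this out for uncompressed video. The resolutions, frame rate and bit depth are illustrative assumptions, not figures from JAI, and real installations normally compress the stream.

```python
def raw_bitrate_mbps(width_px: int, height_px: int, frames_per_s: float,
                     bits_per_pixel: int = 24) -> float:
    """Uncompressed video data rate in megabits per second."""
    return width_px * height_px * frames_per_s * bits_per_pixel / 1e6


if __name__ == "__main__":
    # Hypothetical formats: doubling both dimensions doubles the scan lines.
    standard = raw_bitrate_mbps(640, 480, 30)
    doubled = raw_bitrate_mbps(1280, 960, 30)

    print(f"standard: {standard:.1f} Mb/s")
    print(f"doubled:  {doubled:.1f} Mb/s")
    print(f"ratio:    {doubled / standard:.1f}x")
```

Compression reduces the absolute numbers considerably, but the ratio between the two formats remains a useful first estimate of how much extra load a resolution upgrade places on the network.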

But network problems already are showing up. In moving to digital output, the feed from cameras also is moving onto a network where it must contend with other traffic. That load could include the streaming video from every other camera in an installation.

Eric Eaton, chief technology officer for BRS Labs of Houston, noted that cameras that offer megapixel resolution at 30 fps or more are available and seemingly affordable. However, their price is not the complete cost that must be paid. “When you plug them in and try to stream high-quality video on your network, you realize your bandwidth requirements and costs are so high that it really multiplies the effective cost of those beautiful cameras,” he said.

BRS makes video surveillance behavioral analysis software. As such, its product must deal with network congestion and camera features, such as auto color and auto balance, that activate from time to time. These features can create artifacts in the data.

Smarter surveillance cameras could solve that problem and could help with bandwidth. The camera could analyze a scene and report back only the relevant elements, greatly reducing the amount of data traffic. Such benefits must be weighed against the extra cost and other factors, however.

With improved surveillance cameras, real-time analytics can automatically spot someone crossing a fence (red rectangle in picture above) or a car stopped in the wrong spot (red square in picture at right). Courtesy of Agent Video Intelligence.

That cost might be dropping in the near future because of another trend. Video analytics and security technology have been hobbled by a lack of interoperability between various cameras and incompatible data formats. That is changing, however, through such efforts as the Physical Security Interoperability Alliance, or PSIA.

A final trend for video analytics involves determining how to better highlight significant events without flagging too many that are not important. This requires a balancing act, one that is getting easier and better as algorithms improve, imaging gets sharper, bandwidth increases, and more processing power becomes available.

However, an application related to video analytics may take off first, said Zvika Ashani, chief technology officer of Agent Video Intelligence Ltd. of Rosh Ha’ayin, Israel. The company provides video analytics solutions.

Ashani noted that software today can quickly categorize the shape, color, velocity and other parameters for every object in a scene. This capability readily lends itself to offline forensic analysis. For example, if someone wants to highlight, after the fact, all yellow vehicles traveling faster than a given speed, the software can quickly produce the data.
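The kind of after-the-fact query Ashani describes can be pictured as a filter over stored object metadata. The sketch below is purely illustrative: the record layout, field names and sample data are hypothetical and do not reflect Agent Video Intelligence's software or data format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TrackedObject:
    """Hypothetical metadata record extracted from video for one object."""
    object_id: int
    kind: str          # e.g. "vehicle" or "person"
    color: str
    speed_kmh: float
    timestamp_s: float


def find_objects(records, kind: str, color: str, min_speed_kmh: float):
    """Return the records that match the kind and color and exceed the speed."""
    return [
        r for r in records
        if r.kind == kind and r.color == color and r.speed_kmh > min_speed_kmh
    ]


# Made-up sample data standing in for metadata stored during recording.
SAMPLE_RECORDS = [
    TrackedObject(1, "vehicle", "yellow", 72.0, 10.5),
    TrackedObject(2, "vehicle", "red", 95.0, 12.0),
    TrackedObject(3, "vehicle", "yellow", 40.0, 31.2),
    TrackedObject(4, "vehicle", "yellow", 88.5, 47.9),
    TrackedObject(5, "person", "yellow", 5.0, 50.1),
]

if __name__ == "__main__":
    for match in find_objects(SAMPLE_RECORDS, "vehicle", "yellow", 60.0):
        print(match.object_id, match.speed_kmh, match.timestamp_s)
```

Because the search runs over compact metadata rather than the video itself, it can be fast even for long recordings. The operator then reviews only the matching clips.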

The situation is analogous to an Internet search engine. Most of what is presented is, in essence, a false alarm and irrelevant to finding what is being sought. But that does not mean the search results aren’t useful, Ashani said. “Because of the way that they’re being presented to you, you can very quickly zoom in on the relevant information. You’re not very concerned about the false alarms.”

The old standbys

There are, of course, vision and imaging trends other than the movement toward 3-D or the implementation of IP-based streaming video for surveillance. These result from ongoing improvements, particularly among sensors and semiconductors.

Endre J. Tóth, director of business development for smart camera maker Vision Components GmbH of Ettlingen, Germany, said that these hardware improvements are making devices smaller, cutting their power consumption and reducing their weight. He also noted a change in sensors, representative of a long-term trend.

“A wider range of CMOS sensors is available for the gradually growing machine vision applications using CMOS sensors,” Tóth said.

The continuing evolution of electronics and software also is leading to vision systems that combine the sensor, the image processor and the optics in one device. Philip Colet, vice president of sales and marketing at camera maker Dalsa Inc. of Waterloo, Ontario, Canada, characterized such integrated solutions as the ultimate in vision systems and indicated that they already are appearing.

“I’ve seen applications in automotive, for example, where you have devices that are mounted in the side mirror that will tell you if the passing lane is clear,” he said.

Other automotive-related applications detect pedestrians crossing in front of a car and automatically put on the brakes. Such applications need an integrated solution because of space and cost constraints. They do not need the most advanced sensors.

One area that does need such devices is the life sciences, where researchers cannot afford to waste a photon. Here, technology promises better imaging, although the improvement does not always come from the sensor.

A case in point is a new camera from Photometrics of Tucson, Ariz. The company recently introduced a device that solves a bioscience imaging problem. Deepak Sharma, a senior product manager, explained that bioscience researchers typically must specify results in arbitrary units because, besides the experiment itself, a camera’s output depends on the chips within it, the gain used and other parameters.

It is possible to calibrate for such variables, but the process can be involved. This year, Photometrics announced a scientific-grade camera that calibrates itself. It works by having the camera cut off outside light and then use internal light sources to derive the calibration curve. The process takes a few minutes.
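In general terms, deriving a calibration curve means relating known light levels to the camera's raw output and then inverting that relation. The sketch below is not Photometrics' method, which the article does not detail. It assumes a linear response and hypothetical reference readings, and it fits a straight line by ordinary least squares.

```python
def fit_line(light_levels, raw_counts):
    """Least-squares fit of raw_counts = slope * light_level + offset."""
    if len(light_levels) != len(raw_counts) or len(light_levels) < 2:
        raise ValueError("need at least two paired readings")
    n = len(light_levels)
    mean_x = sum(light_levels) / n
    mean_y = sum(raw_counts) / n
    sxx = sum((x - mean_x) ** 2 for x in light_levels)
    if sxx == 0:
        raise ValueError("light levels must not all be identical")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(light_levels, raw_counts))
    slope = sxy / sxx
    offset = mean_y - slope * mean_x
    return slope, offset


def counts_to_light(raw_count: float, slope: float, offset: float) -> float:
    """Invert the fitted line to express a raw reading in calibrated units."""
    return (raw_count - offset) / slope


if __name__ == "__main__":
    # Hypothetical readings taken with outside light blocked and an
    # internal reference source stepped through known levels.
    known_levels = [0.0, 100.0, 200.0, 300.0]
    measured_counts = [50.0, 250.0, 450.0, 650.0]

    slope, offset = fit_line(known_levels, measured_counts)
    print("gain (counts per unit of light):", slope)
    print("offset (counts in the dark):", offset)
    print("a reading of 350 counts corresponds to", counts_to_light(350.0, slope, offset))
```

Once the gain and offset are known, results can be reported in physical units rather than arbitrary ones, which is the problem Sharma describes.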

The company chose to do this with an electron-multiplying CCD, or EMCCD, for two reasons. One is the challenge of implementing the technology in an EMCCD, Sharma said. “We figured if we can do it with this type of sensor, we can do it for almost anything out there.”

The other factor, he noted, is that, because it has been difficult to absolutely quantify results with a scientific-grade EMCCD camera, the new device meets a need. It also helps that such cameras come with a higher price tag than other imaging systems and are thus better able to support the additional cost of precision autocalibration technology. The cost-to-benefit calculations may be different for other cases.

General cost and market constraints may slow the ongoing development and deployment of vision systems with higher resolution, faster frame rates, lower cost and newer interfaces. However, Friedrich Dierks, chief engineer and head of software development for components at Basler AG of Ahrensburg, Germany, does not think the current economic slowdown will have a significant effect on the general trajectory of these trends.

“These developments are long term. They may speed up or slow down due to the crisis, but I don’t think they will become different,” he said.

Making connections

A final trend involves camera interfaces. No man is an island and neither is any camera. They all connect to the wider world, and several innovations promise to add new capabilities, cut costs and, perhaps, enable applications that are not feasible today.

One of these interface developments is an upgrade to one of the most common camera connections. The new USB 3.0 standard, also known as SuperSpeed USB, should appear in commercial products in 2010. Besides eventually being inexpensive and widely available, USB 3.0 offers vision users a number of advantages.

“First and foremost, it’s the bandwidth. You can go out to 400 MB/s, roughly, transfer rate. That is five times faster than FireWire, 10 times faster than USB 2.0 and four times faster than GigE,” said Vlad Tucakov, director of sales and marketing at Point Grey Research Inc. The company, based in Richmond, British Columbia, Canada, has demonstrated a USB 3.0 prototype camera and plans to officially offer USB 3.0 cameras in the third quarter of 2010.
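To see what such a transfer rate means for a camera, the sketch below estimates the highest frame rate a link could carry for uncompressed images. The 400 MB/s figure comes from Tucakov's quote, and the rates for the other interfaces are only those implied by his "times faster" ratios. The image size and bit depth are hypothetical, and protocol overhead is ignored.

```python
def max_frame_rate(bandwidth_bytes_per_s: float, width_px: int, height_px: int,
                   bytes_per_pixel: int = 1) -> float:
    """Upper bound on frames per second for uncompressed images on a link."""
    frame_bytes = width_px * height_px * bytes_per_pixel
    if frame_bytes <= 0:
        raise ValueError("frame size must be positive")
    return bandwidth_bytes_per_s / frame_bytes


USB3_BYTES_PER_S = 400e6  # "400 MB/s, roughly", per the quote

# Rates implied by the quoted ratios, not measured or specified values.
IMPLIED_RATES = {
    "USB 3.0": USB3_BYTES_PER_S,
    "GigE": USB3_BYTES_PER_S / 4,
    "FireWire": USB3_BYTES_PER_S / 5,
    "USB 2.0": USB3_BYTES_PER_S / 10,
}

if __name__ == "__main__":
    # Hypothetical 1280 x 960 monochrome sensor with 8 bits per pixel.
    for name, rate in IMPLIED_RATES.items():
        print(f"{name:9s} {max_frame_rate(rate, 1280, 960):7.1f} frames/s")
```

These are ceilings rather than predictions, since real links lose some capacity to protocol overhead and the sensor itself may be the limiting factor.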

Although it’s compatible with USB 2.0 plugs, the USB 3.0, or SuperSpeed USB, standard offers much higher speeds and could be useful in many vision applications. Courtesy of Point Grey Research Inc.

Some of the other benefits of the new interface include true isochronous data transfers that guarantee bandwidth on the bus and allow low-latency operation. Systems will know when the data from a camera will appear, a critical requirement in automation and other real-time applications. Also, users will be able to put multiple devices on a single connection. Finally, cable runs of 10 m will be no problem, and there will be enough power to run higher-performance CCD sensors.

The new interface’s system architecture, wiring and connectors are different from the old. However, the new connectors are compatible with USB 2.0 when inserted partially. Complete insertion leads to a USB 3.0 connection. The new standard should be of interest to anyone who has considered a high-speed interface but has decided against it for cost reasons, Tucakov said.

Vision systems often measure a part’s critical dimensions, as is being done here on the dovetail of a turbine airfoil. Vision systems also can inspect for defects. Performing both measurement and inspection may require two systems for efficiency. Courtesy of AV&R Vision & Robotics.

Other vision interfaces also will see changes in the coming years, said Jeff Fryman, director of standards development at the US-based Automated Imaging Association (AIA). Higher bandwidth and longer cable runs in Camera Link, for example, are being considered.

For GigE Vision, plans call for an extension to handle more than a camera. “This would allow control of peripherals, like a light,” Fryman said.

Other nonstreaming devices also could be managed, he added. To do so, these devices must have the needed hardware and software. However, because Gigabit Ethernet is a widely used interface, many devices might require only a change in their already resident code. Fryman expects a flood of new products that offer plug and play with GigE Vision to enter the market quickly after the standard is changed.

In another development, three key vision associations – AIA, the European Machine Vision Association and the Japan Industrial Imaging Association – recently announced plans to cooperate in future standards development and marketing. This approach could lead to a standard that is applicable to vision and imaging systems anywhere in the world. One result could be reduced costs for suppliers and lower prices for users.

Working cooperatively also will bring other significant advantages to the process, Fryman said. “It’s important that standards follow the proper procedures during development in a fair and transparent manner so that all stakeholders in the industry benefit.”