If you are what you eat, then it might

be good for food to get the right – and therefore light – touch. Photonics-driven

improvements in food inspection and handling could result in fewer illnesses, less

waste, lower prices and better quality.

There is clearly a need for better inspection. According to the

Centers for Disease Control and Prevention, food-borne illnesses hospitalize more

than 300,000 people and cause 5,000 deaths each year in the US. Thus, an improved

ability to detect contaminants and problems could save lives.

Take the case of chickens and turkeys. According to the US Department

of Agriculture (USDA), the US poultry industry produces 43 billion pounds of these

birds a year. Screening them during processing is labor-intensive.

“Currently, poultry is inspected by human inspectors,”

said Moon S. Kim, a research physicist with the USDA’s Agricultural Research

Service in Beltsville, Md.

To improve the situation, Kim and colleague Kuanglin Chao, an

agricultural engineer, have turned to hyper- and multispectral imaging, an approach

that combines the materials identification of spectroscopy with the location capabilities

of machine vision.

USDA agricultural engineer Kuanglin Chao examines the output from

a hyperspectral poultry inspection system. Courtesy of Moon S. Kim and Kuanglin

Chao, USDA.

To do this, they collect spectral data on a pixel-by-pixel basis,

creating a hyperspectral data cube through full spectrum capture. The result is

a multispectral volume if the collected data is restricted to fewer bands. Based

upon the results from good and bad samples, the researchers can determine if a given

bird is OK to process or should be shunted aside.
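
The cube-to-decision flow described above can be sketched in a few lines of Python. This is only an illustration, not the USDA system: the tiny 2 x 2 pixel cube, the band wavelengths, the reference spectra and the nearest-reference decision rule are all assumptions invented for the example.

```python
# Illustrative sketch only: the data, band list and decision rule are invented.

def select_bands(cube, keep):
    """Reduce a cube indexed [row][col][band] to the chosen band indices."""
    return [[[pixel[b] for b in keep] for pixel in row] for row in cube]

def mean_spectrum(cube):
    """Average the spectra of all pixels in a cube."""
    pixels = [pixel for row in cube for pixel in row]
    n_bands = len(pixels[0])
    return [sum(p[b] for p in pixels) / len(pixels) for b in range(n_bands)]

def squared_distance(a, b):
    """Sum of squared differences between two spectra of equal length."""
    return sum((x - y) ** 2 for x, y in zip(a, b))

def classify(spectrum, good_ref, bad_ref):
    """Label a spectrum by whichever reference spectrum it is closer to."""
    if squared_distance(spectrum, good_ref) <= squared_distance(spectrum, bad_ref):
        return "process"
    return "divert"

# Hypothetical hyperspectral cube: 2 x 2 pixels, 4 bands per pixel.
wavelengths_nm = [480, 520, 560, 600]
cube = [
    [[0.30, 0.42, 0.50, 0.55], [0.31, 0.40, 0.52, 0.56]],
    [[0.29, 0.41, 0.49, 0.54], [0.30, 0.43, 0.51, 0.57]],
]

# Restricting the data to fewer bands yields a multispectral volume.
keep = [i for i, w in enumerate(wavelengths_nm) if 520 <= w <= 600]
multispectral = select_bands(cube, keep)

# Hypothetical reference spectra derived from known good and bad samples.
good_ref = [0.42, 0.50, 0.55]
bad_ref = [0.25, 0.28, 0.30]

decision = classify(mean_spectrum(multispectral), good_ref, bad_ref)
print(decision)
```

A production system would classify regions or individual pixels rather than one averaged spectrum, and would learn its references from many samples. The sketch only shows how band selection and a good-versus-bad comparison fit together.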

USDA scientists have been working on the inspection problem for

years, researching the technique for use on poultry, fruits and vegetables. During

that time, Kim has seen significant technology advances.

In particular, he points to improvements in sensors, such as the

advent of electron-multiplying CCDs originally developed for astronomy and microscopy.

These have undergone a significant increase in speed over the past decade, he said.

“Now it’s much faster, and that enables us to use the technology for

in-line applications. It’s almost two orders of magnitude faster.”

This also has allowed the researchers to build line-scan systems

that can handle 2.5 chickens a second. That’s fast enough for commercial use,

and Kim noted that one system currently is in field tests with a poultry processor.

If all goes as planned, the system could enter service by next year.

Aside from potentially removing the variability of human inspection,

the technique offers other advantages. One system can do multiple inspection tasks.

It also can be reprogrammed to handle another inspection chore by simply changing

software. That’s why the same setup can be used to examine chickens for contaminants

or to check fruits and vegetables for bruises or defects.

The performance of an inspection system is not a function

of the sensor alone, according to Xing-Fei He, a senior product manager with Dalsa

Corp. in Waterloo, Ontario, Canada. He noted that food and agricultural product

processing often is done in harsh environments. The sensors must work across a wide

range of temperatures and humidity, withstand vibration and shock, and contend with

water, blood and guts.

A hyper- or multispectral system also confronts another challenge:

light. The USDA poultry inspection research, for example, has shown that imaging

from 520 to 600 nm is critical for success. But what’s best for looking at

birds may not be best for looking at vegetables, which absorb strongly in that range

and throughout the visible in general.

The spectral intensity, uniformity and stability of the light

are important inspection ingredients. But that presents a problem, He said. “You

cannot find a light that’s uniform across the spectrum because there’s

no such light source.”

The machine vision standard, a halogen lamp, tends to tail off

toward the blue. White LEDs, which are increasingly used, begin to falter toward

the red. Deploying multiple sources might be the answer, but that adds cost and

complexity.

In addition, the data generated can be enormous. Capturing hundreds

of spectral points for every pixel is the same as generating that many monochrome

images. Fortunately, this problem is lessened if only some spectral points have

to be evaluated and acted upon in real time.
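
A quick calculation makes the scale concrete. The frame size, sample depth and band counts below are assumptions chosen for illustration, not figures from any system described here.

```python
def cube_bytes(width, height, bands, bytes_per_sample):
    """Raw size in bytes of one image volume with the given number of bands."""
    return width * height * bands * bytes_per_sample

# Assumed values: a 1024 x 1024 pixel frame stored at 16 bits per sample.
width, height = 1024, 1024
bytes_per_sample = 2

monochrome = cube_bytes(width, height, 1, bytes_per_sample)
hyperspectral = cube_bytes(width, height, 200, bytes_per_sample)  # 200 bands assumed
multispectral = cube_bytes(width, height, 4, bytes_per_sample)    # 4 bands assumed

MIB = 2 ** 20
print(f"monochrome:    {monochrome / MIB:.0f} MiB")
print(f"hyperspectral: {hyperspectral / MIB:.0f} MiB")
print(f"multispectral: {multispectral / MIB:.0f} MiB")
print(f"hyperspectral / monochrome = {hyperspectral // monochrome}x")
```

Under these assumptions, a 200-band cube is exactly 200 times the size of a single monochrome frame, while a four-band subset is only four times as large. That is why evaluating just a few bands in real time eases the load.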

For his part, He believes that the data processing and lighting

problems will be solved. He sees the trend progressing from monochrome to color

processing and then, eventually, to multi- and hyperspectral imaging.

Expanding the spectral information collected can lead to systems

that do more than spot problems once processing has started. They also could be

used to improve quality before processing begins.

A case in point comes from field work being done by Constellation

Wines U.S., a wine- and beverage-producing division of Constellation Brands Inc.

of Victor, N.Y. Jim Orvis is the company's director of research and development.

Orvis, who is based in Madera, Calif., noted that the quality

of a wine ultimately depends upon the quality of the grapes. Producers would like

to pick these at the right time, but because an entire vineyard will not mature

in lockstep, they have been seeking ways to nondestructively sample grapes on the

vine. Harvesting could then be done selectively, yielding what could be a more uniform

and, perhaps, higher quality product.

In the case of Constellation Wines, research has involved the

use of near-infrared spectroscopy as a way to identify where red grapes are in their

maturation process and as a gauge of quality. This has proved fairly successful

in the lab but much more problematic in the field.

Orvis would like to see an inexpensive and rugged instrument with

built-in geolocation capability. The latter is particularly important if it is to

be used to map a vineyard for selective harvesting.

He added, however, that determining grape quality is a challenge.

The solution is likely to involve some hand labor to correctly position the sensor

– at least at first. “It’s a difficult problem to solve because

you’re looking through the canopy. You’ve got to be able to get through

the canopy at the grapes.”

Another example of using more spectral information comes from

the Battelle Memorial Institute. The Columbus, Ohio-based nonprofit manages or co-manages

national labs for the US departments of Energy and Homeland Security. Its latest

food and agricultural inspection technology came out of bioterrorism and homeland

security applications.

The company’s rapid enumerated biological identification

system makes use of Raman spectroscopy to identify biological materials at the species

level, said senior scientist Andrew Bartko. It works by comparing the measurement

of individual cells to a library, looking for a match.
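
Library matching of this kind can be sketched as a nearest-neighbor search over stored spectra. The article does not say how Battelle scores a match, so the cosine-similarity measure, the 0.95 threshold, the five-point spectra and the placeholder species names below are all assumptions for illustration.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two spectra treated as vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def best_match(measured, library, threshold=0.95):
    """Return (name, score) for the closest library entry.

    The name is None when even the best score falls below the threshold,
    meaning no library entry is considered a match.
    """
    name, score = max(
        ((n, cosine_similarity(measured, s)) for n, s in library.items()),
        key=lambda item: item[1],
    )
    return (name, score) if score >= threshold else (None, score)

# Hypothetical library: placeholder names and made-up intensity values.
library = {
    "species A": [0.1, 0.9, 0.3, 0.2, 0.7],
    "species B": [0.8, 0.2, 0.6, 0.1, 0.1],
}

# Hypothetical measurement from a single cell.
measured = [0.12, 0.85, 0.33, 0.18, 0.72]

match, score = best_match(measured, library)
print(match, round(score, 3))
```

The threshold matters as much as the similarity measure. Without it, every particle would be assigned to some library entry, including particles that resemble none of them.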

Based on techniques developed to combat

bioterrorism, this unit rapidly identifies biological samples, allowing food to

be tested for contaminants. Courtesy of Battelle Memorial Institute.

Bartko envisions the system working in several modes. One would

be by examining the biological materials in rinse water. Optics, imaging and image

analysis would determine which particles to analyze and which to ignore. Another

mode would look at airborne particulates, using imaging and analysis techniques

to spot and identify the species of such things as mold spores.

Bartko said the self-contained system is about the size of a microwave oven.

He also indicated that determining the mold contamination within coffee beans could

be done while a shipment was sitting on a dock.

“From the time you hit ‘go’ to a decision, it’s

about 15 to 20 minutes, depending upon the level of contamination. Low contamination

might take a little longer. If it’s grossly contaminated, you’ll know

immediately,” he said.

Besides the spectral dimension, there is another one to exploit.

Imaging has largely been a two-dimensional affair, but that is changing, thanks

to technological advances.

LMI Technologies Inc. of Delta, British Columbia, Canada, specializes

in 3-D sensors, according to Barry Dashner, vice president of marketing. For food

processing applications, getting a complete 3-D picture can be critical to success.

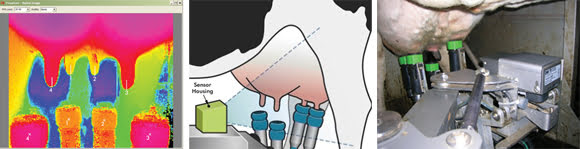

Look, Ma, no hands! Using 3-D imaging generated by a properly placed time-of-flight sensor

(schematic, above, and photo, left), an automated system can reliably attach milking

cups to a cow, cutting labor costs and increasing milk production. Color mapping

helps show distance (upper left image). Courtesy of LMI Technologies Inc.

Products from LMI, for instance, are found in automatic milking

machines, robotic devices that attach themselves to cows without human intervention.

The cows simply enter the stall, and the machine does the rest.

For that to work, more than 2-D imaging is needed because attaching

a suction device to the wrong spot will not produce milk. Also, a wayward attachment

might be enough to scare a cow off automated milking forever.

LMI uses a variety of methods to get 3-D information. Traditionally,

the company’s products have used structured light, in which a series of dots

or lines are projected onto a surface. The surface profile is then determined by

the distortions in this known geometry.
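
One common way to turn such a distortion into a height is simple triangulation. The sketch below is not LMI's method. It assumes a camera looking straight down and a line projected at a known angle from the camera's viewing direction, so that a raised surface shifts the line sideways in proportion to its height. The function name and the numbers are hypothetical.

```python
import math

def height_from_shift(shift_mm, projection_angle_deg):
    """Surface height implied by the sideways shift of a projected line.

    Assumes an idealized geometry: the camera views the surface from
    directly above, and the light is projected at projection_angle_deg
    from the camera's viewing direction. A surface raised by h moves the
    line sideways by h * tan(angle), so h = shift / tan(angle).
    """
    return shift_mm / math.tan(math.radians(projection_angle_deg))

# Hypothetical measurements: the same 2 mm shift seen at two projection angles.
for angle in (30, 45):
    print(f"{angle} deg: {height_from_shift(2.0, angle):.2f} mm")
```

A steeper projection angle produces a larger shift for the same height, which improves depth resolution. The cost is more shadowing on uneven surfaces.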

For cow milking, however, LMI makes use of time-of-flight sensors

that send out pulses of infrared light. The time it takes to travel to and from

an object determines surface distance. One advantage of this approach is that it

yields both 3-D information and a gray-scale image.
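
The underlying relation is that distance equals the speed of light times the round-trip time, divided by two. The sketch below works through it. The half-meter target distance is an assumption for illustration, not a figure from LMI.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458  # exact, by definition of the meter

def distance_from_round_trip(seconds):
    """Distance to a surface given the pulse's round-trip travel time."""
    return SPEED_OF_LIGHT_M_PER_S * seconds / 2

def round_trip_for_distance(meters):
    """Round-trip travel time for a pulse reflected at the given distance."""
    return 2 * meters / SPEED_OF_LIGHT_M_PER_S

# Assumed target distance of half a meter.
t = round_trip_for_distance(0.5)
print(f"round trip: {t * 1e9:.2f} ns")
print(f"recovered distance: {distance_from_round_trip(t):.3f} m")
```

At this range the round trip takes only a few nanoseconds, and a 1 cm change in depth shifts the timing by about 67 picoseconds. Practical sensors therefore depend on very fast timing or phase-measurement electronics.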

Automated milking enables smaller-scale operations to cut labor

expenses. It also could boost output because it allows farmers to follow the right

timetable for maximum productivity.

In explaining this, Dashner said, “The cows go on their

own schedule. They come down. They walk into the milking machine. They’re

happy, and they give 10 percent more milk.”