Dr. Austin Richards, FLIR Commercial Systems

Infrared camera technology spans a wide range of the electromagnetic spectrum, with results that vary with the waveband. Although there is some overlap of applications between the different infrared subbands and associated sensor technologies, it is critical to understand the trade-offs before making a selection for a given application.

In recent years, infrared imaging technology has become widely visible in daily American life. Almost every TV-watching adult in the US has seen thermal infrared images and video, either in reruns of the action movie Predator or in footage of the Iraq War from thermal imaging pods mounted on military aircraft.

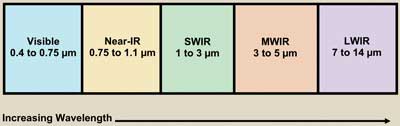

Figure 1. The visible and infrared spectrum can be broken down into subbands. IR imaging technology covers a range of the spectrum, and images vary with the waveband. Images courtesy of Flir Commercial Systems.

Ask an engineer in the photonics industry what an infrared camera is, and he or she will always give you an answer – but the answers will vary quite a bit because “infrared” is a broad term that people use loosely, especially in conjunction with the word “camera.” For example, in the security industry, an infrared camera can be a near-infrared device (based on a CCD or CMOS sensor) or a thermal infrared instrument (usually based on a microbolometer sensor). The goal may be the same – e.g., to observe a scene in low-ambient-light conditions – but the technology and the resulting imagery are very different.

For the purposes of infrared imaging discussions, the infrared region of the electromagnetic spectrum can be divided into two main wavebands: near-infrared (NIR) and thermal infrared. The most significant properties of these bands are as follows.

Near-infrared cameras primarily see reflected radiation. There must always be some ambient light present to make a picture unless the scene or object is very hot, such as a fire. The sensors and the optics are very similar to those used for visible-light imaging. NIR radiation can pass through optical glass, enabling observation through windows into houses and cars.

The human eye is insensitive to NIR radiation, but you can sometimes see a bright source at the shorter wavelengths, which appears as a deep shade of red. NIR images generally look similar to monochrome visible-light images, although many black or dark-colored surfaces will appear as much lighter shades of gray. The wavelength range for NIR radiation is typically 0.75 µm (where the human eye cuts off) to 3 µm (where terrestrial scenes emit substantial radiation and the thermal IR band begins).

Figure 2. An NIR image of two people. Note that the pigments in the tops are transparent and that the vegetation looks white because of the reflectivity of chlorophyll in the NIR band.

Thermal IR cameras primarily see emitted radiation. They can image terrestrial scenes in the total absence of ambient illumination because every object emits thermal IR radiation. Window glass blocks thermal IR radiation, and the human eye cannot image it. Thermal images look quite different from visible-light or NIR images. Areas that are typically dark and shadowy in the visible band are often quite bright in thermal IR images. For terrestrial imaging, the thermal IR wavelength range is typically from 3 to 15 µm (where Earth’s atmosphere becomes opaque).

These are the two “superbands” of IR radiation, but we are not finished with our categorization. Both can be further divided into subbands. The near-infrared superband often is broken down into a narrower NIR band and the short-wavelength-infrared (SWIR) band. If the naming conventions seem confusing and inconsistent, that’s because they are! The narrower NIR band is considered to be 0.75 to 1.1 µm (1.1 µm is where silicon sensors cut off), and SWIR is 1 to 3 µm. The thermal IR waveband is divided into the mid-wavelength-IR (MWIR) band and the long-wavelength-IR (LWIR) band. Figure 1 is a graphical representation of all these categories.
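For readers who like to see the categories written down, the subbands can be sketched as a simple lookup table in Python. The band edges below are the figures quoted in this article, with three assumptions made only for illustration: the lower edge of the visible band (0.40 µm), the NIR/SWIR boundary placed at the 1.1-µm silicon cutoff, and the MWIR and LWIR ranges taken as 3 to 5 µm and 7 to 14 µm. The names `BANDS` and `classify_wavelength` are hypothetical.

```python
# Band edges in micrometers. The visible lower edge, the 1.1 µm NIR/SWIR
# boundary and the MWIR/LWIR ranges are illustrative assumptions, not
# industry-standard definitions.
BANDS = [
    ("visible", 0.40, 0.75),
    ("NIR", 0.75, 1.1),
    ("SWIR", 1.1, 3.0),
    ("MWIR", 3.0, 5.0),
    ("LWIR", 7.0, 14.0),
]


def classify_wavelength(wavelength_um):
    """Return the name of the band containing the wavelength, if any."""
    for name, low_um, high_um in BANDS:
        if low_um <= wavelength_um < high_um:
            return name
    # Wavelengths outside every listed range (for example, 5 to 7 µm here)
    # fall through to this label.
    return "unclassified"


if __name__ == "__main__":
    for wavelength in (0.85, 1.55, 4.0, 10.0):
        print(wavelength, "µm ->", classify_wavelength(wavelength))
```

Other sources draw these boundaries differently, so the table is best treated as one convention among several.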

NIR properties, applications

Images look similar to visible-light images, although monochrome. Colors tend to disappear because many color pigments are transparent to NIR radiation. Healthy vegetation and human skin appear light gray. Silicon sensors are used to image NIR, and active illumination is used in low-ambient-light situations.

The most widespread application for NIR imaging is in low-light security cameras, which usually have short-focal-length lenses and a ring of NIR LEDs to provide close-range illumination. The LEDs are hard or impossible to see with the naked eye, so they are unobtrusive, though you can see them with cell phone cameras. Try it!

The military uses night-vision goggles that work in both the visible and NIR bands. Soldiers use a number of NIR illumination sources in conjunction with these devices, including laser pointers, LED illuminators on the goggles themselves and NIR beacons to mark helicopter landing positions. NIR imaging also is used to evaluate the health of crops and forests, to inspect textiles for defects in the weave, to examine altered documents that have had text erased or obliterated, and to sort out fruits and vegetables that are bruised.

SWIR properties, applications

In this band, vegetation and human skin can look very white or very black because of water absorption – it all depends upon the wavelength within the SWIR band. Conventional silicon imaging sensors won’t work in the SWIR band – the photon energy is too low to stimulate the detectors, just as NIR radiation fails to stimulate the light receptors in the human eye. SWIR sensor materials are more exotic and include indium gallium arsenide, indium antimonide and mercury cadmium telluride. SWIR cameras can see through glass, but less well as the wavelength approaches 3 µm. Special lasers can be used for active illumination out to long range.
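The photon-energy argument can be checked with a few lines of Python. This is a minimal sketch that assumes a hard detection threshold at silicon's room-temperature bandgap of roughly 1.12 eV; real sensors lose sensitivity gradually as the wavelength approaches the cutoff, and the function names are hypothetical.

```python
PLANCK_J_S = 6.62607015e-34
LIGHT_SPEED_M_S = 299_792_458.0
ELECTRON_VOLT_J = 1.602176634e-19
SILICON_BANDGAP_EV = 1.12  # approximate room-temperature value (assumption)


def photon_energy_ev(wavelength_um):
    """Photon energy E = h * c / wavelength, expressed in electron volts."""
    wavelength_m = wavelength_um * 1e-6
    return PLANCK_J_S * LIGHT_SPEED_M_S / wavelength_m / ELECTRON_VOLT_J


def silicon_can_detect(wavelength_um):
    """Simplified test: the photon must carry at least the bandgap energy."""
    return photon_energy_ev(wavelength_um) >= SILICON_BANDGAP_EV


if __name__ == "__main__":
    for wavelength in (0.85, 1.1, 1.55, 2.2):
        energy = photon_energy_ev(wavelength)
        print(f"{wavelength} µm: {energy:.3f} eV, "
              f"silicon responds: {silicon_can_detect(wavelength)}")
```

Under this simplified model, an 0.85-µm NIR photon clears the silicon threshold and a 1.55-µm SWIR photon does not, which is consistent with the cutoff near 1.1 µm mentioned earlier.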

What it’s used for: SWIR radiation can readily pass through thin paint and other thin solid materials, as well as haze and air pollution, with minimal scattering. It can act like “x-ray vision” in these situations and can see through these materials better than NIR radiation in many cases.

Figure 3. A SWIR image of a man (2 to 2.5 µm) with light skin color. Water just below the skin’s surface absorbs SWIR radiation in this band very well.

Many lasers used for optical fiber communication and for military applications such as laser rangefinders work in the SWIR band, so SWIR cameras are used to characterize these laser beams and to see reflected laser spots within scenes. SWIR cameras also are used for chemical imaging. Many molecules have strong resonances in this band, and multispectral SWIR systems can identify materials simply by looking at them in a multitude of bands and comparing the spectral signature with a database of known materials.

Because of this strong interaction with molecules, especially water, SWIR images of everyday scenes can look quite different, depending upon which part of the SWIR band you are in. Figure 3 shows a SWIR image of a person with light skin color. The water right under the surface of the skin strongly absorbs SWIR radiation in this band. Liquid water absorption makes the eyes and teeth look very dark as well.

SWIR radiation passes readily through haze and smog, which tend to scatter visible light. Figure 4 shows two views of an oil rig imaged through 47 km of air with a substantial amount of marine haze in the air path. The rig can just barely be seen in the visible image, while the SWIR image shows strong contrast and the presence of a flare at the end of a long boom.

Figure 4. In this visible-light image (left) of an oil rig at a 47-km range, the oil rig can barely be seen, but it is visible in the SWIR image (right), which was taken at the same distance.

The images in Figure 4 were taken through fairly light haze, but when the obscurants are much thicker, a different SWIR approach, called range-gated imaging (RGI), is required. This technique uses very short pulses of laser light to illuminate an object that may be hidden by rain, fog, smoke or a heavy snowstorm. The short pulses of light are only a few billionths of a second long. They reflect off an object and come back to a special camera that is time-synchronized to the laser pulse.

The camera is designed to have an extremely fast shutter speed, and only the light that comes back within a very narrow window of time (the “gate”) is recorded by the sensor. The delay between the laser pulse going out and the shutter of the camera can be adjusted by the operator to match the time it takes for light to go out to the object and return (“range gating”). This makes the system insensitive to light scattered back into the camera from particles or aerosols close to the system’s aperture.

Without this, the camera would be blinded by the backscatter – just like driving with the high beams on through heavy fog at night. The range-gated imaging technique makes the fog or other aerosols essentially disappear because only nonscattered laser photons reflected off the object are recorded – the so-called ballistic photons.

The results are very impressive, with the ability to see detail that is completely hidden to other imaging methods. SWIR radiation often is used for RGI because the danger to exposed eyes is much less than for NIR laser systems as a result of the lower photon energy and the optical properties of the human eye.
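The timing behind range gating is simple round-trip arithmetic, sketched here in Python. The sketch assumes the vacuum speed of light (air is only very slightly slower) and ignores the finite length of the laser pulse when estimating how deep a slice of the scene one gate records. The function names are hypothetical.

```python
LIGHT_SPEED_M_S = 299_792_458.0  # vacuum value, used as an approximation for air


def gate_delay_s(range_m):
    """Delay between firing the laser and opening the gate.

    The light travels to the object and back, hence the factor of two.
    """
    return 2.0 * range_m / LIGHT_SPEED_M_S


def gate_depth_m(gate_width_s):
    """Approximate depth of the scene slice recorded while the gate is open."""
    return LIGHT_SPEED_M_S * gate_width_s / 2.0


if __name__ == "__main__":
    target_range_m = 1500.0
    delay = gate_delay_s(target_range_m)
    print(f"Gate delay for {target_range_m:.0f} m: {delay * 1e6:.2f} microseconds")
    print(f"Slice depth for a 10 ns gate: {gate_depth_m(10e-9):.2f} m")
```

Backscatter from fog a few tens of meters in front of the aperture returns well before the gate opens, which is why it never reaches the sensor.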

MWIR properties, applications

Mid-wavelength-infrared imaging sees heat radiated from scenes. The sensors used for MWIR imaging are made of special semiconductor materials such as indium antimonide and mercury cadmium telluride. They must be cooled to cryogenic temperatures to operate.

MWIR imagers also can detect the absence of heat, as shown in Figure 5, a 3- to 5-µm image of the Oxnard (Calif.) Airport. The image was taken about 30 min after sunset, following a clear, sunny day. The image is false-colored, with a special color palette. The top and bottom gray levels are false-colored to show the hottest parts of the image (shades of orange, yellow and white) and the coldest (shades of blue). The tarmac has retained a substantial amount of residual heat, except in the locations of aircraft (mostly private jets) that have since moved. The undersides of aircraft that have recently landed are hot and radiate heat down onto the tarmac, warming it. There is also reflected MWIR radiation. The tops of buildings and cars are smooth metal, which reflects the cold night sky above.

Figure 5. MWIR image of Oxnard (Calif.) Airport from the air, false-colored with a special color palette. The top and bottom gray levels show the hottest parts of the image in orange, yellow and white; the coldest parts appear in blue shades.

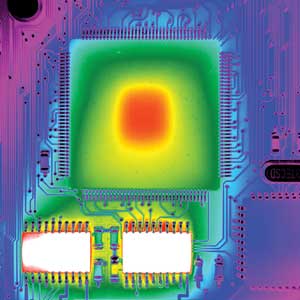
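A palette of the kind described for Figure 5 can be sketched in Python as a mapping from gray level to color. This is not the palette used for the figure; the 10 percent thresholds, the exact colors and the function name `false_color` are assumptions chosen only to illustrate the idea of coloring the top and bottom gray levels and leaving the rest gray.

```python
def false_color(gray, low_cut=0.1, high_cut=0.9):
    """Map a normalized gray level (0.0 to 1.0) to an (r, g, b) tuple.

    low_cut and high_cut are hypothetical thresholds chosen for illustration.
    """
    if not 0.0 <= gray <= 1.0:
        raise ValueError("gray must be between 0.0 and 1.0")
    if gray >= high_cut:
        # Hottest levels: ramp from orange toward white.
        fraction = (gray - high_cut) / (1.0 - high_cut)
        return (255, round(128 + 127 * fraction), round(255 * fraction))
    if gray <= low_cut:
        # Coldest levels: ramp from dark blue to bright blue.
        fraction = gray / low_cut
        return (0, 0, round(128 + 127 * fraction))
    # Everything in between stays a neutral gray.
    level = round(255 * gray)
    return (level, level, level)


if __name__ == "__main__":
    for gray in (0.0, 0.05, 0.4, 0.95, 1.0):
        print(gray, false_color(gray))
```

Coloring only the extremes keeps the bulk of the scene readable as an ordinary grayscale thermal image while drawing the eye to the hottest and coldest regions.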

MWIR cameras are the Rolls-Royces of thermal imaging, with unparalleled performance and a relatively high cost. Compared with LWIR systems, MWIR imagers see better through high-humidity air at ranges of several kilometers or more. They can see people in total darkness out to 15 km when equipped with suitable long-focal-length optics. They also can measure minute temperature differences on surfaces, with values as low as 0.02 °C fairly easy to achieve. Figure 6 shows a false-colored MWIR image of a powered-up circuit board. The temperature difference between the red-colored center of the big chip and the blue-colored corner is about 2 °C.

Special MWIR cameras with internal optical filters can image gas plumes that are completely invisible to the human eye. This is crucial for safety inspections at refineries and power plants. These gas-finder cameras are tuned to the exact wavelength of absorption of a particular gas species, such as methane. The absorption lines can be very strong in the MWIR band; the gas plumes often look like clouds of black smoke pouring out of a leak.

Figure 6. A false-colored MWIR image of a powered-up circuit board. The difference in temperature between the red center of the big chip and the blue corner is about 2 °C.

LWIR properties, applications

As with MWIR imaging, LWIR imaging sees heat from scenes. The images look different from MWIR images; there is less contrast between areas of different temperature. This is related to the physics of IR emission from materials. In the LWIR band, there is quite a bit more radiation emitted from terrestrial objects relative to the MWIR band, but the amount of radiation varies less with temperature. The ratio of long-wavelength (7 to 14 µm) to mid-wavelength (3 to 5 µm) radiation is 75 for a 30 °C scene. Because of this abundance of radiation, some types of LWIR sensors do not have to be cooled to operate.

These uncooled sensors are revolutionizing the IR camera industry, with costs that have dropped by an order of magnitude every 10 years, and miniaturization that has enabled the development of compact handheld cameras. Uncooled cameras have limitations on lens focal length, however, that make very-long-range surveillance impractical.
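The comparison of LWIR and MWIR emission can be explored by integrating Planck's blackbody law over each band. The Python sketch below assumes an ideal blackbody (emissivity of 1), no atmospheric absorption and no detector response, so it is not meant to reproduce the ratio quoted above; the result also depends on whether radiation is counted as radiant power or as photons, and the sketch offers both. All function names are hypothetical.

```python
import math

PLANCK_J_S = 6.62607015e-34
LIGHT_SPEED_M_S = 299_792_458.0
BOLTZMANN_J_K = 1.380649e-23


def spectral_radiance(wavelength_m, temp_k, photons=False):
    """Planck blackbody spectral radiance at one wavelength.

    Returns W/(m^2 sr m), or photons/(s m^2 sr m) when photons=True.
    """
    x = PLANCK_J_S * LIGHT_SPEED_M_S / (wavelength_m * BOLTZMANN_J_K * temp_k)
    power = (2.0 * PLANCK_J_S * LIGHT_SPEED_M_S**2
             / wavelength_m**5 / math.expm1(x))
    if photons:
        # Divide by the energy of one photon, h * c / wavelength.
        return power * wavelength_m / (PLANCK_J_S * LIGHT_SPEED_M_S)
    return power


def band_radiance(low_um, high_um, temp_c, photons=False, steps=2000):
    """Midpoint-rule integral of the Planck curve over a waveband."""
    temp_k = temp_c + 273.15
    low_m, high_m = low_um * 1e-6, high_um * 1e-6
    step_m = (high_m - low_m) / steps
    total = sum(
        spectral_radiance(low_m + (i + 0.5) * step_m, temp_k, photons)
        for i in range(steps)
    )
    return total * step_m


def lwir_to_mwir_ratio(temp_c, photons=False):
    """Ratio of 7 to 14 µm radiance to 3 to 5 µm radiance for a blackbody."""
    lwir = band_radiance(7.0, 14.0, temp_c, photons)
    mwir = band_radiance(3.0, 5.0, temp_c, photons)
    return lwir / mwir


if __name__ == "__main__":
    for temp_c in (0.0, 30.0, 100.0):
        print(f"{temp_c:5.1f} °C: power ratio {lwir_to_mwir_ratio(temp_c):.1f}, "
              f"photon ratio {lwir_to_mwir_ratio(temp_c, photons=True):.1f}")
```

Running the sketch at several scene temperatures shows both effects described above: the LWIR band carries far more radiation at terrestrial temperatures, while the MWIR signal changes proportionally more for each degree of temperature change.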

Uncooled LWIR cameras are the most common thermal imaging cameras on the world market. They are widely used in security and surveillance applications in fixed installations, on vehicles and on unmanned aerial vehicles. Firefighters use handheld LWIR imagers to see through smoke and identify hot spots that can indicate a fire burning inside a wall or behind a door.

There are several applications where LWIR cameras are the only way to meet the imaging objective:

• imaging through thick smoke

• thermal imaging in subzero temperatures

• imaging scenes that contain the sun or a reflection of the sun

The last situation is particularly commonplace, especially for cameras monitoring traffic on a coastal road or highway. When a visible-light scene contains the sun, the radiation from it often will overwhelm the rest of the scene, especially for parts of the scene near the sun, as is the case at sunrise or sunset. An example is shown in Figure 7, which pairs an LWIR image taken with a microbolometer camera with a visible reference image. You can see the people in the foreground of the LWIR image, but the visible reference image shows a “blown out” scene.

Figure 7. Visible (left) and LWIR (right) images of an airport near sunset. The LWIR image was captured with a microbolometer camera.

There is no simple way to compensate for this effect because the visible camera optics will tend to scatter and reflect the sunlight around inside and “paint” it all over the rest of the sensor’s area, obscuring the regions of interest. LWIR imaging is much less affected by the presence of the sun in the scene.

Meet the author

Dr. Austin Richards is a senior research scientist at Flir Commercial Systems; email: [email protected].