I can’t see the rain … against my headlights

Driving at night through a downpour or a heavy snowstorm could become much easier if a sophisticated headlight system created at Carnegie Mellon University’s Robotics Institute becomes commercially viable.

A camera, a computer chip and an off-the-shelf Digital Light Processing (DLP) projector make up the prototype. The DLP projector lets the researchers control individual light rays.

The camera captures an image, and the computer, running the institute’s algorithms, searches it for raindrops. If it finds any, it predicts where they will be a moment later and then, based on that information, “it controls the light projector to not illuminate that area,” Robotics Institute project scientist Robert Tamburo told Photonics Spectra.
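To make the capture, detect, predict and mask loop concrete, here is a minimal sketch. It is not the Robotics Institute’s algorithm, which the article does not describe. It assumes, hypothetically, that the camera and projector share one pixel grid, that any pixel brighter than a fixed threshold is a raindrop, and that drops fall straight down a fixed number of pixels per frame. The names `THRESHOLD`, `FALL_PX`, `detect_drops`, `predict_drops` and `projector_mask` are illustrative only.

```python
# Hypothetical detect -> predict -> mask loop (illustrative, not the CMU code).
# Assumptions: a frame is a 2D list of brightness values on the same pixel
# grid as the projector; a "drop" is any pixel brighter than THRESHOLD;
# every drop falls straight down by FALL_PX rows per frame.

THRESHOLD = 200  # assumed brightness cutoff for a lit raindrop
FALL_PX = 2      # assumed downward motion, in pixels per frame


def detect_drops(frame):
    """Return (row, col) for every pixel bright enough to count as a drop."""
    return [(r, c)
            for r, row in enumerate(frame)
            for c, value in enumerate(row)
            if value > THRESHOLD]


def predict_drops(drops, rows, fall_px=FALL_PX):
    """Shift each drop down by fall_px rows, discarding drops that leave the frame."""
    return [(r + fall_px, c) for r, c in drops if r + fall_px < rows]


def projector_mask(frame):
    """Build the next projector frame: True = illuminate, False = leave dark."""
    rows, cols = len(frame), len(frame[0])
    mask = [[True] * cols for _ in range(rows)]
    for r, c in predict_drops(detect_drops(frame), rows):
        mask[r][c] = False
    return mask


# Usage: one drop near the top, one about to fall out of view.
frame = [[0] * 4 for _ in range(5)]
frame[0][1] = 255
frame[4][3] = 255
mask = projector_mask(frame)
```

Here only the pixel at row 2, column 1 goes dark; the drop at the bottom edge is predicted to leave the field of view, so nothing is masked for it. A real system would also need sub-pixel tracking and per-drop velocity estimates.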

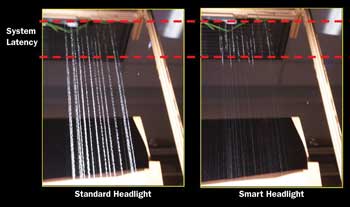

A new headlight system in the prototype stage could make driving in the rain and snow much easier. Here, the system at work during the equivalent of heavy rainfall: native illumination on the left, fast-reactive illumination on the right.

The photos were captured with an exposure time of 2.5 s.

The light strobes so fast that to the human eye it looks like a steady beam, and it behaves as one when there is no precipitation to detect.

“The neat thing is that if it’s not raining, it’s just a regular headlight,” he said.

Tamburo joined the project, led by associate robotics professor Srinivasa Narasimhan, a year ago. The project’s second and current prototype refreshes at 120 Hz, four times the original’s 30 Hz, and its latency (the processing lag) has dropped from 80–100 ms to 13 ms.
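A quick back-of-envelope calculation shows what those refresh and latency figures mean in practice. This sketch assumes, as a labeled approximation not taken from the article, that a large raindrop falls at roughly 9 m/s, and it uses the worst case (100 ms) of the first prototype’s quoted latency range. The helper names are hypothetical.

```python
# Back-of-envelope timing for the two prototypes.
# Assumption (not from the article): a large raindrop falls at roughly 9 m/s.

DROP_SPEED_M_S = 9.0


def frame_period_ms(refresh_hz):
    """Time between projector updates, in milliseconds."""
    return 1000.0 / refresh_hz


def drop_travel_mm(latency_ms, speed_m_s=DROP_SPEED_M_S):
    """Distance a drop falls while the system is still processing.

    Units: (m/s) * (ms) = mm, so no conversion factor is needed.
    """
    return speed_m_s * latency_ms


# First prototype: 30 Hz, worst-case 100 ms latency; second: 120 Hz, 13 ms.
for label, hz, latency in [("first prototype", 30, 100),
                           ("second prototype", 120, 13)]:
    print(f"{label}: {frame_period_ms(hz):.1f} ms per frame, "
          f"drop falls ~{drop_travel_mm(latency):.0f} mm during "
          f"{latency} ms latency")
```

Under these assumptions a drop falls close to a meter during the first prototype’s worst-case lag but only about 12 cm during the second’s, which is why the prediction step matters: the projector has to aim at where the drop will be, not where the camera saw it.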

The team has conducted feasibility studies using computer simulations to show that the idea could work under different intensities of rain or snow.

“The simulations are promising, showing that the idea is feasible, and that if we can make the system fast enough, it will work on a moving vehicle,” Tamburo said.

To the human eye, rain can appear as elongated streaks. To high-speed cameras, however, it consists of sparsely spaced discrete drops. That leaves plenty of space between the drops through which light can be directed, provided the system responds quickly enough.
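Because the drops are sparse, blanking only the predicted drop positions switches off a tiny share of the beam. The sketch below estimates that share. Every number in it is an illustrative assumption rather than a measurement from the article: 500 drops in view, each blanked with a 3 × 3 pixel patch, on a hypothetical 1024 × 768 projector. It ignores overlap between patches, so it slightly overstates the masked area.

```python
# Illustrative estimate of how much of the beam stays lit when only the
# predicted drop positions are masked. All inputs are assumptions.


def lit_fraction(n_drops, pixels_per_drop, width, height):
    """Fraction of projector pixels left on after masking every drop.

    Patches are assumed not to overlap, so this is a lower bound on the
    lit fraction (overlap would leave slightly more pixels on).
    """
    total = width * height
    masked = min(n_drops * pixels_per_drop, total)  # cannot mask more than all pixels
    return 1.0 - masked / total


print(f"{lit_fraction(500, 9, 1024, 768):.1%} of the beam stays lit")
```

With these assumed inputs, well over 99 percent of the pixels stay on, so the driver still sees essentially a full beam while the drops themselves go unlit.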

Fog is a bigger challenge than rain or snow, however, because it’s so dense and the particles are so small.

“I know there’s a lot of research on fog. I know there’s a lot of research on removing fog from scenes and trying to recover the photographs. I’m not sure how fast that algorithm is,” Tamburo said. “So as long as the algorithm is fast enough, potentially it could be used for things like fog. Or even dust storms.”

To be practical, the refresh rate must rise from 120 to 500 Hz, and the latency must drop further. Both improvements are expected to come as the system shrinks and moves to custom-made hardware that can transfer data faster.

“In order to make it really, really fast, we have to make it smaller,” Tamburo said. “The image sensor, the light source and the processing unit could be on a small board, maybe a couple inches by a couple inches, in the future.”

New LED- and laser-based headlights under development by car manufacturers such as BMW (see “The Light in Your Eyes,” Photonics Spectra, January 2012, p. 88) could serve as the light source in the future.

“Either of those would be viable options for us as well because you can control the individual light rays,” he said.

Because the headlight is in effect a controllable projector, it could also assist drivers by projecting images onto the road when visibility is poor.

“So one of the things that you can do, if you have algorithms that are sophisticated enough, is project the lines onto the road so that drivers can see them when they otherwise couldn’t,” Tamburo said.

It could also conceivably warn drivers of an obstacle in the road, such as a deer, and dim automatically when a car approaches in the opposite lane.

“Once you have the hardware in place, you can write different algorithms to do different types of detection,” Tamburo said.
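One way to picture “same hardware, different algorithms” is a set of interchangeable detectors, each reporting the projector pixels it wants dark, with the headlight switching off the union. This is a hypothetical architecture sketch, not the team’s design. The detector names are made up, and frames hold symbolic labels (`"drop"`, `"car"`) standing in for real image analysis.

```python
# Hypothetical plug-in architecture: each detector returns the set of
# projector pixels to leave dark; the headlight darkens their union.
# Frames contain symbolic labels instead of real camera data.

from typing import Callable, Iterable, List, Set, Tuple

Pixel = Tuple[int, int]
Frame = List[List[str]]
Detector = Callable[[Frame], Set[Pixel]]


def rain_detector(frame: Frame) -> Set[Pixel]:
    """Illustrative stand-in: pixels labeled as raindrops."""
    return {(r, c) for r, row in enumerate(frame)
            for c, v in enumerate(row) if v == "drop"}


def oncoming_car_detector(frame: Frame) -> Set[Pixel]:
    """Illustrative stand-in: pixels covering an oncoming driver."""
    return {(r, c) for r, row in enumerate(frame)
            for c, v in enumerate(row) if v == "car"}


def combined_mask(frame: Frame, detectors: Iterable[Detector]) -> List[List[bool]]:
    """True = illuminate; a pixel goes dark if any detector flags it."""
    dark: Set[Pixel] = set()
    for detect in detectors:
        dark |= detect(frame)
    rows, cols = len(frame), len(frame[0])
    return [[(r, c) not in dark for c in range(cols)] for r in range(rows)]


# Usage: adding a new behavior means adding a detector, not new hardware.
frame = [["", "drop"],
         ["car", ""]]
mask = combined_mask(frame, [rain_detector, oncoming_car_detector])
```

The design keeps the projector logic fixed and pushes all scene understanding into detectors, which matches the idea in the quote that new capabilities are a software change once the hardware exists.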

The system is confined to the lab for now, until its speed can be increased enough to cope with real-world conditions such as vibration, wind and a vehicle traveling at 60 mph. Tamburo estimates that it will take three to four years to finish researching the technology behind the concept, then more time for commercialization and marketing.
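Simple unit conversion shows how much a car moves in the time scales discussed above. The sketch converts 60 mph to meters per second (using the exact definition 1 mile = 1609.344 m) and reports the distance covered during one frame at the current 120 Hz, one frame at the 500 Hz target, and the current 13 ms latency. It says nothing about how the real system compensates for vehicle motion; the helper names are hypothetical.

```python
# Distance a car at 60 mph covers during one projector frame and during
# the current 13 ms latency. Pure unit conversion.

METERS_PER_MILE = 1609.344  # exact by definition


def mph_to_m_per_s(mph):
    """Convert miles per hour to meters per second."""
    return mph * METERS_PER_MILE / 3600.0


def travel_cm(speed_m_s, interval_ms):
    """Distance covered in interval_ms, in centimeters.

    (m/s) * (ms) = mm; dividing by 10 converts mm to cm.
    """
    return speed_m_s * interval_ms / 10.0


speed = mph_to_m_per_s(60)
for label, interval in [("one 120 Hz frame", 1000 / 120),
                        ("one 500 Hz frame", 1000 / 500),
                        ("13 ms latency", 13)]:
    print(f"{label}: car moves {travel_cm(speed, interval):.1f} cm")
```

At 60 mph (about 26.8 m/s) the car covers roughly a fifth of a meter per frame at 120 Hz but only a few centimeters per frame at 500 Hz, one way of seeing why the higher refresh rate is a prerequisite for road use.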

“But we think that, even before it gets to that stage, we’re going to have to do significant user studies just to figure out what parameters need to be tweaked and optimized for the driver for the best effect,” Tamburo said.

When the system does start to work at speed, “I’m sure we’ll start slow … 10 miles per hour or something,” he said. “It might not even be on a car; it might be some sort of other moving platform – like a cart and someone’s pushing them through the wind, or through the rain and the wind. I think we will take it in slow stages to avoid any damage.”

The system doesn’t need 100 percent accuracy to be effective.

“We’re shooting for 70 to 80 percent in accuracy. Because really, any improvement is helpful to the driver, improving visibility and reducing stress,” Tamburo said.

The research was sponsored by the Office of Naval Research, National Science Foundation, Samsung Advanced Institute of Technology and Intel Corp.

Published: September 2012