Carlton Heard, National Instruments

Vision technology gives robots the ability to see, and today’s software enables robots to pick up more details, opening the door to an extremely wide range of applications.

For decades, vision has been making its way into industrial automation applications, with one of the most common uses being vision-guided robotics, especially in areas such as assembly and materials handling. The process is not new, but it is growing: The need to increase productivity and quality in manufacturing is driving the adoption of more and more robotic systems.

A key factor in this growth has been the nearly exponential improvement in processing technologies. Image-processing capabilities are scaling with materials-processing technologies to enable machines and robots to be much smarter and more flexible, too. When a machine can see which part it is dealing with and adapt as needed, the same machine can be used on a variety of parts with continuously changing tasks.

Multiple SCARA robots are used to pick and place syringes for packaging. Using vision, the syringes can arrive in the robot cell in any orientation and can be inspected for defects before being packaged.

In vision-guided robotics applications, a camera is used to locate a part or destination, and then the coordinates are sent to the robot to perform a function such as picking and placing the part. Incoming parts may not have to be sorted before entering the robot cell, and picking parts from a bin becomes easier as a camera locates a part with an orientation that can be handled by the robotic arm amid a pile of the same part. If no part is in a position to be picked up, flex feeders or shaker tables are used to reposition parts for picking.
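The locate-then-send step can be sketched as a coordinate conversion. The following is only a minimal illustration, not taken from any particular vision or robot package. It assumes a camera looking straight down at a flat work surface, so that one scale factor, one rotation and one offset (all hypothetical calibration values) map pixels to robot millimeters. The function names `pixel_to_robot` and `pick_pose` are invented for this sketch.

```python
import math


def pixel_to_robot(u, v, mm_per_px, cam_rot_rad, origin_mm):
    """Convert an image point (u, v) in pixels to robot (x, y) in millimeters.

    Assumes a planar scene and a calibration consisting of one scale factor,
    one rotation and one offset. Real cells often also need lens-distortion
    correction and an axis flip, since image rows usually increase downward.
    """
    c, s = math.cos(cam_rot_rad), math.sin(cam_rot_rad)
    x = origin_mm[0] + mm_per_px * (c * u - s * v)
    y = origin_mm[1] + mm_per_px * (s * u + c * v)
    return x, y


def pick_pose(part_px, part_angle_rad, mm_per_px, cam_rot_rad, origin_mm):
    """Return (x, y, angle) in the robot frame for a part found in the image."""
    x, y = pixel_to_robot(part_px[0], part_px[1], mm_per_px, cam_rot_rad, origin_mm)
    # The part's orientation in the robot frame is its image angle plus the camera rotation.
    return x, y, part_angle_rad + cam_rot_rad


if __name__ == "__main__":
    # Hypothetical calibration: 0.5 mm per pixel, camera aligned with the robot axes.
    print(pick_pose((100, 0), 0.0, 0.5, 0.0, (10.0, 20.0)))
```

In practice the calibration values would come from imaging a target at known robot positions, and the resulting pose would be sent to the robot controller over whatever interface the cell uses.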

Another added benefit of vision technology is that the same images can be used for in-line inspection of the parts that are being handled. So, not only are robots made more flexible, but they also can produce results of higher quality. Rather than waiting to perform the visual inspection at the end, it can be done at any point in the factory line. This also can be done at a lower cost because the vision system can detect, predict and prevent things such as jams by rejecting components that could cause issues farther down the line.

With today’s processing technologies, vision and robotic integration is being pushed beyond the traditional inspection and pick-and-place guidance applications: Tighter integration and the execution of multiple automation tasks on the same hardware platform are enabling applications such as visual servoing and dynamic manipulation. Visual servo control can solve some high-performance control challenges by using imaging as a continual feedback device for a robot or motion-control system; in some areas, this type of image feedback technique has altogether replaced other feedback mechanisms such as encoders. An example of visual servoing can be found in semiconductor wafer processing, where new machines are incorporating vision with their motion control to accurately detect minor imperfections in freshly cut chips and to intelligently adjust the cutting process to compensate for these imperfections, thus dramatically increasing yields in manufacturing.
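The idea of using images as a continual feedback device can be illustrated with a very small closed loop. This is a sketch under strong simplifying assumptions, not a description of any production controller: the error is measured directly in pixels, the control law is purely proportional, and the simulated "plant" moves the tracked feature exactly as commanded. The names `servo_command` and `run_servo`, and the gain and time-step values, are hypothetical.

```python
def servo_command(feature_px, target_px, gain):
    """Velocity command proportional to the image-space error (pixels)."""
    return tuple(gain * (t - f) for f, t in zip(feature_px, target_px))


def run_servo(feature_px, target_px, gain=2.0, dt=0.1, steps=50):
    """Simulate a closed loop in which each new image supplies the feedback.

    The plant here is idealized: the tracked feature moves in the image
    exactly as commanded. A real system would map image error to axis or
    joint motion through a calibrated Jacobian.
    """
    for _ in range(steps):
        vx, vy = servo_command(feature_px, target_px, gain)
        feature_px = (feature_px[0] + vx * dt, feature_px[1] + vy * dt)
    return feature_px


if __name__ == "__main__":
    # The feature starts at (320, 240) and is driven toward (400, 200).
    print(run_servo((320.0, 240.0), (400.0, 200.0)))
```

With these values the remaining error shrinks by a fixed fraction on every frame, which is why the achievable loop rate depends so heavily on how quickly each image can be acquired and processed.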

This spider robot uses a camera and onboard image processing to detect colors, objects and distances while searching through tight spaces on its six independent legs.

Faster embedded processors have cultivated a lot of this growth into higher-performance applications, but a key technology enabler for even more performance lies in the use of field-programmable gate arrays (FPGAs), which are well suited for highly deterministic and parallel image-processing algorithms, and for tightly synchronizing the processing results with a motion or robotic system. The medical field uses this type of process in certain laser eye surgeries, where slight movements in a patient’s eye are detected by the camera and used as feedback to autofocus and adjust the system at a high rate. FPGAs also can aid in applications such as surveillance and automotive production by performing high-speed feature tracking and particle analysis at a lower power budget.

Although the use of vision with robotics is common in industrial applications, its adoption is growing in the embedded space as well. One example is in the field of mobile robotics. Robots are moving off the factory floor and entering many aspects of our day-to-day lives, from service robots wandering the halls of our local hospitals to autonomous tractors plowing the fields. Nearly every autonomous mobile robot requires sophisticated imaging capabilities, from obstacle avoidance to visual simultaneous localization and mapping. In the next decade, the number of vision systems used by autonomous robots should eclipse the number of systems used by fixed-base robot arms.

Beyond power and cost

The previously mentioned improvements in embedded processing technologies have certainly fueled this growth, but we are at a point where the processing power and low-cost image sensor availability are no longer the limiting factors. Low-cost consumer devices commonly have multimegapixel-resolution cameras integrated with multicore processors. An obvious example is the smartphone, which can quickly become a bar-code reader or augmented reality viewer with the download of an app.

Another example is the Microsoft Kinect, which saw explosive growth after its release and now has APIs (Application Programming Interfaces) in multiple programming languages for use in areas outside of gaming. An intelligent robot now can be built by anyone who can mount a Kinect on top of a frame with motors and wheels. The hardware is there, but readily available software for embedded vision will be the catalyst for even further growth in mobile robotics.

The growing trend of 3-D vision helps robots perceive even more about their environments. 3-D imaging technology has come a long way from its roots in academic research labs, and, thanks to innovations in sensors, lighting and, most importantly, embedded processing, 3-D vision is now appearing in a variety of applications, from vision-guided robotic bin-picking to high-precision metrology and mobile robotics. The latest generation of processors can now handle the immense data sets and sophisticated algorithms required to extract depth information and can quickly make decisions.

Stereo-vision systems can provide a rich set of 3-D information for navigation applications and can perform well even in changing light conditions. These systems use two or more cameras, offset from one another, looking at the same object. By comparing the multiple images, the system can calculate the disparity between them and, from it, the depth, which yields the 3-D information.
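For the simplest stereo arrangement, the step from disparity to depth is a single relation, Z = f × B / d. The sketch below assumes two identical, rectified cameras mounted in parallel, with the focal length expressed in pixels; finding which pixels correspond to each other in the two images is the hard part and is not shown. The function names and example numbers are illustrative only.

```python
def depth_from_disparity(disparity_px, focal_px, baseline_m):
    """Depth in meters of a point seen by two rectified, parallel cameras.

    Z = f * B / d, where f is the focal length in pixels, B is the distance
    between the cameras and d is the horizontal shift of the point between
    the left and right images.
    """
    if disparity_px <= 0:
        return float("inf")  # no measurable shift: point is effectively at infinity
    return focal_px * baseline_m / disparity_px


def depth_row(left_cols, right_cols, focal_px, baseline_m):
    """Depths for matched features given their column positions in each image."""
    return [depth_from_disparity(l - r, focal_px, baseline_m)
            for l, r in zip(left_cols, right_cols)]


if __name__ == "__main__":
    # Hypothetical rig: 800-pixel focal length, cameras 0.5 m apart.
    print(depth_row([420, 410], [380, 330], 800, 0.5))
```

Because depth is inversely proportional to disparity, nearby objects produce large, easily measured shifts, while distant objects produce small shifts and correspondingly coarser depth resolution.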

Depth detection

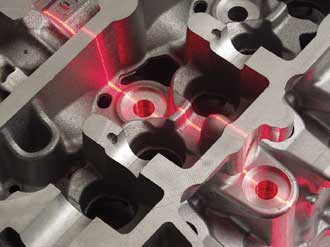

Another common method for 3-D image acquisition is laser triangulation, where a laser line is projected onto an object and a height profile is generated by acquiring the image using the camera and measuring displacement in the laser line across a single slice of the object. The laser and camera scan through multiple slices of the object surface to generate a 3-D image.
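The conversion from laser-line displacement to height can be sketched for one simple geometry. The assumptions here are not from the article: the camera looks straight down, the laser sheet is tilted by a known angle away from the camera axis, and the line moves toward smaller image rows as the surface rises. Under those assumptions a height h shifts the line sideways by h × tan(angle). The names `height_profile` and `scan_surface` are hypothetical.

```python
import math


def height_profile(line_rows_px, baseline_row_px, mm_per_px, laser_angle_deg):
    """Heights (mm) along one laser-line slice.

    Assumed geometry: the camera looks straight down and the laser sheet is
    tilted laser_angle_deg away from the camera axis, so a surface raised by
    h shifts the line sideways by h * tan(angle). The line is assumed to move
    toward smaller row numbers as height increases.
    """
    tan_a = math.tan(math.radians(laser_angle_deg))
    return [(baseline_row_px - row) * mm_per_px / tan_a for row in line_rows_px]


def scan_surface(slices, baseline_row_px, mm_per_px, laser_angle_deg):
    """Stack one profile per scan position into a simple height map."""
    return [height_profile(rows, baseline_row_px, mm_per_px, laser_angle_deg)
            for rows in slices]


if __name__ == "__main__":
    # Two slices; each list holds the detected laser-line row for every image column.
    slices = [[200, 190, 180], [200, 200, 195]]
    print(scan_surface(slices, baseline_row_px=200, mm_per_px=0.1, laser_angle_deg=45.0))
```

Each inner list is one slice of the object; moving the part or the sensor between acquisitions supplies the third dimension.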

Laser triangulation is used to inspect the surface of a die-cast part. Both the camera and laser are mounted to the end effector of an industrial robot, which can then sort the part based upon the results of the 3-D vision scan.

In inspection systems, 3-D vision can help solve challenges that would typically have been difficult with traditional methods. One example is the detection and measurement of dents on surfaces where they are hard to see, even with sophisticated lighting, because of texture, coating, pattern or material makeup. 3-D vision also expands the capabilities of vision-guided robotic bin-picking applications by providing a depth map and orientation data to the system for picking up parts with complex shapes that are lying on top of one another. Beyond traditional machine vision, mobile robots use depth information to measure the size and distance of obstacles for accurate path planning and obstacle avoidance.

The combination of robotics and vision does bring about a significant software challenge. These systems can become quite complex; consider, for example, a mobile robot carrying an industrial robotic arm to perform automated refueling of aircraft. Here we have not only the robot and vision systems, but also sensors, motors for the wheels, possibly pneumatics, and safety systems. There may well be proprietary languages, protocols and even development environments that do not span the various subsystems. Software should be the glue between the electrical and mechanical devices, but often it can take more time to architect the connection between software packages and communication protocols than it takes to develop the IP to actually perform the task you wanted to accomplish in the first place.

A high-level programming language incorporating vision, robot control, I/O and all other aspects of a system can greatly reduce complexity and development time by keeping all of the programming and synchronization in one environment.

The decision as to which subsystem is the master and which are the servants can vary from application to application, and tightly synchronizing these subsystems can require mechanical, electrical and software skills. Digital I/O and communication protocols such as EtherNet/IP and TCP/IP transfer commands and responses between these subsystems, all of which may be running on separate time bases. It’s a problem, and one that hasn’t gotten enough attention, because this type of challenge has just been considered a given when incorporating vision, robotics and sensors into a system.
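To make the separate-time-base problem concrete, here is one common way to relate two clocks: a single timestamped request/response exchange, as used in network time protocols. This is offered only as an illustration of the kind of glue code such systems need, not as something the article prescribes. It assumes the network delay is the same in both directions, and the names `estimate_offset` and `to_robot_time` are hypothetical.

```python
def estimate_offset(t0, t1, t2, t3):
    """Estimate (robot clock - vision clock) from one request/response exchange.

    t0: request sent (vision clock)    t1: request received (robot clock)
    t2: reply sent (robot clock)       t3: reply received (vision clock)
    Assumes the network delay is the same in both directions.
    """
    offset = ((t1 - t0) + (t2 - t3)) / 2.0
    round_trip = (t3 - t0) - (t2 - t1)
    return offset, round_trip


def to_robot_time(vision_timestamp, offset):
    """Express an image timestamp on the robot controller's time base."""
    return vision_timestamp + offset


if __name__ == "__main__":
    # Hypothetical exchange: the robot clock runs about 5 s ahead of the vision clock.
    offset, round_trip = estimate_offset(100.0, 105.01, 105.02, 100.03)
    print(offset, round_trip, to_robot_time(200.0, offset))
```

Once an image timestamp is expressed on the robot's time base, a part position measured by the camera can be matched to where the robot or conveyor was at that same instant.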

Abstraction required

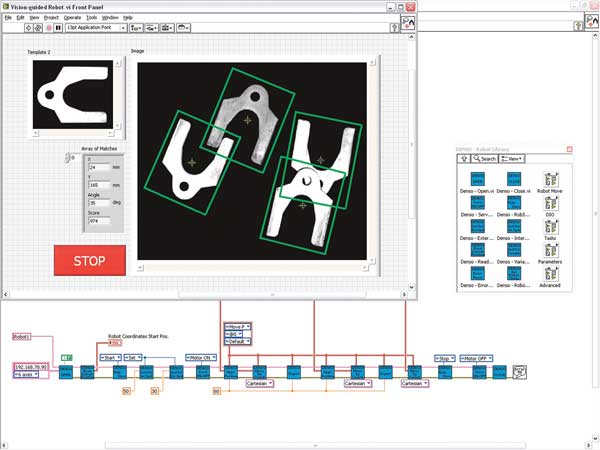

The answer to this problem lies in abstraction. A high-level programming language is needed to abstract the software complexity into a single environment. One such language is LabVIEW, which combines vision, motion, FPGA, I/O communication and all other programming needs in a graphical development environment.

With a high-level programming language, the complete system can be designed within one environment and can quickly be synchronized because a single, central program handles all aspects of the system.

This also can reduce the learning curve because new development tools will not have to be learned whenever hardware changes. The amount of time invested in learning a software package should be leveraged across multiple products and be scalable with changes in robotic manufacturers, vision algorithms, I/O and hardware targets. High-level programming tools incorporate all of the necessary subsystems while maintaining a scalable architecture, which will be increasingly important as vision and robotics applications continue to become more and more complex.

Meet the author

Carlton Heard is product engineer for vision hardware and software at National Instruments in Austin, Texas; email: [email protected].