Xing-Fei He, Teledyne Dalsa Inc.

With high color quality and speed – and low cost – trilinear color cameras provide an attractive performance-to-cost ratio in line-scan color imaging for automatic optical inspection.

High-speed color imaging plays an important role in machine vision. Some objects are nearly indistinguishable in gray-scale monochrome imaging. Industrial line-scan color cameras, using either charge-coupled device (CCD) or complementary metal oxide semiconductor (CMOS) sensors, have been widely used in print inspection, check scanning, electronics manufacturing, food sorting, transportation safety and many other applications. When selecting an imaging technology, always consider the performance and cost requirements.

Line-scan color imaging

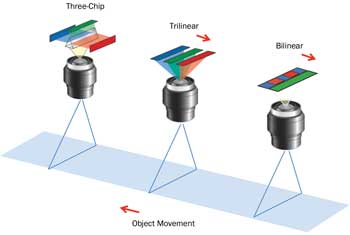

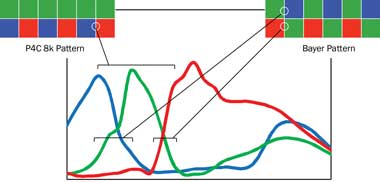

Unlike area-scan cameras, line-scan cameras capture one line at a time and combine the lines to form a two-dimensional image. Because silicon imagers cannot distinguish among wavelengths on their own, color bands – red (R), green (G) and blue (B) – must be spectrally separated before being captured by the imager. Three major technologies are in use today: three-chip, trilinear and bilinear (Figure 1).

Figure 1. Schematic of line-scan color imaging technologies: Three-chip, trilinear and bilinear. Spatial correction is needed for trilinear technology to reconstruct a full-color image; bilinear has minimum spatial correction.

Three-chip cameras

In a three-chip line-scan camera (3CCD or 3CMOS), a prism-based dichroic beamsplitter separates the RGB colors. It uses optical interference for wavelength separation, and its filter line shape usually has a flat response and sharp drop-off characteristics. The color image is reconstructed later by combining the RGB images captured by three sensors. With a common optical axis, the RGB colors are captured simultaneously at one location on the moving object. This enhances the technology’s color registration and allows it to work on uneven surfaces, rotating or falling objects, and more.

The drawbacks to the three-chip line-scan camera are increased camera costs and the need for more expensive optical lenses. A prism causes back-focal shift and aberration, so the camera needs a specially designed lens. Its body is usually large to accommodate the prism and three sensors.

Table 1. Comparison of color technologies

Trilinear cameras

Many applications have adopted trilinear technology, which uses three linear arrays fabricated on a single silicon die – one each for the R, G and B channels. In operation, the three arrays capture their respective primary colors simultaneously but at slightly different locations on the moving object. Color filters are absorption-based dyes or pigments coated on the silicon wafer. Because line-scan imaging usually requires intense light, the color filters must have high light fastness (a rating of 7 to 8). Heat stability up to 250 °C also is important to avoid color deterioration.

To combine three color channels into a full-color image, the camera must compensate for the spatial separation, referred to as spatial correction, usually by buffering the first and second arrays to match with the third. Trilinear technology simplifies camera design, provides high image quality and has a small footprint. It further reduces system-level costs with standard lenses.
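The buffering described above can be illustrated with a minimal sketch. It assumes that a point on the object is seen first by the R array, then by the G array and last by the B array, with a fixed integer number of lines between neighboring arrays; the function name, the array order and the default spacing are illustrative assumptions, not the camera's actual firmware.

```python
def align_trilinear(r_lines, g_lines, b_lines, line_spacing=3):
    """Return aligned (R, G, B) line triples using integer line delays.

    Assumption: an object point is seen by the R array first, by the G
    array `line_spacing` lines later, and by the B array
    `2 * line_spacing` lines later. The real order is sensor specific.
    """
    n = min(len(r_lines), len(g_lines), len(b_lines)) - 2 * line_spacing
    if n <= 0:
        raise ValueError("not enough lines to cover the delay")
    # Pairing R line k with G line k + s and B line k + 2s is equivalent to
    # buffering R for 2s lines and G for s lines until B arrives.
    return [
        (r_lines[k], g_lines[k + line_spacing], b_lines[k + 2 * line_spacing])
        for k in range(n)
    ]
```

Each returned triple holds the three channel lines that imaged the same strip of the object, so stacking the triples gives the full-color image.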

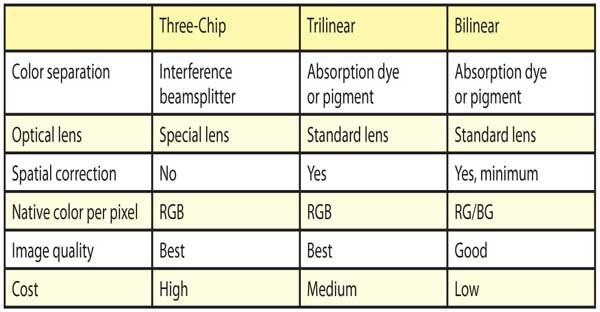

Color trilinear CCD cameras are in use in high-speed applications today; new color trilinear CMOS cameras improve the speed without compromising resolution. With the latest design, quantum efficiency also significantly improves (Figure 2).

Figure 2. The quantum efficiency of the Piranha4 Color CMOS camera has been improved significantly over that of the existing Piranha Color CCD camera; in particular, the blue efficiency is 65 percent higher.

Figures 3a and 3b compare image quality, showing a portion of a banknote imaged by a trilinear camera and by a three-chip camera, respectively. Trilinear technology is used in many banknote inspection systems worldwide because it captures fine detail with excellent color fidelity.

Bilinear cameras

A bilinear imager shares many advantages with a trilinear imager but has only two linear arrays fabricated on a silicon die. The arrays are usually next to each other to minimize the need for spatial correction. The bilinear sensor captures two native colors per pixel. To reconstruct a full-color image, the third color must be interpolated.
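As a rough illustration of the interpolation step, the sketch below assumes one array that alternates R and B pixels (starting with R) and a second array that is entirely G, so that every pixel has a native G value plus either R or B. The missing color is estimated as the mean of the nearest horizontal neighbors. Both the layout and the interpolation rule are assumptions for illustration; actual cameras may use a different arrangement or a more elaborate filter.

```python
def reconstruct_bilinear(rb_line, g_line):
    """Rebuild (R, G, B) pixels from one R/B line and one G line.

    Assumption: rb_line alternates R, B, R, B ... with R at index 0,
    and g_line holds a native G value for every pixel.
    """
    n = len(g_line)
    if len(rb_line) != n or n < 2:
        raise ValueError("lines must have equal length of at least 2")

    def neighbour_mean(i):
        # Average the left and right neighbors that exist (edge pixels have one).
        vals = [rb_line[j] for j in (i - 1, i + 1) if 0 <= j < n]
        return sum(vals) / len(vals)

    pixels = []
    for i in range(n):
        if i % 2 == 0:   # native R, interpolated B
            r, b = rb_line[i], neighbour_mean(i)
        else:            # native B, interpolated R
            r, b = neighbour_mean(i), rb_line[i]
        pixels.append((r, g_line[i], b))
    return pixels
```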

Figure 3. Banknote images captured by Piranha Color trilinear camera (a) versus three-chip camera (b). Trilinear can achieve the same or even better color quality than three-chip.

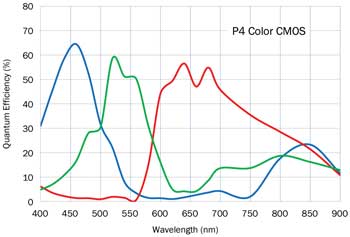

Spatial crosstalk can be reduced with specific sensor architecture; spectral crosstalk can be reduced with certain arrangements of color filters. New bilinear color CCD and CMOS cameras use an RG/BG color pattern that differs from the Bayer pattern (Figure 4) to reduce spectral crosstalk: R and B are next to each other because they have less spectral overlap. Furthermore, this pattern offers a single G channel with 100 percent fill factor that can be used as a monochrome channel.

Bilinear cameras have even lower costs than trilinear ones and are useful for electronics manufacturing, food inspection, materials sorting and more.

Figure 4. Spectral overlap comparison of bilinear color patterns: Piranha4 Color 8k/7µm camera uses a unique color pattern that minimizes spectral crosstalk between channels and, at the same time, offers a 100 percent fill-factor single green channel.

Figures 5a and 5b show a stamp imaged using a color trilinear camera versus a stamp imaged using a color bilinear camera. The color filter pattern helps to greatly improve the color fidelity.

Spatial correction at subpixel level

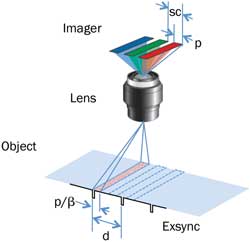

Spatial correction is the key to achieving an accurate color image using trilinear technology. Advanced technology has been developed for fine adjustment to subpixel level. As shown in Figure 6a, there are three sampling scenarios:

d < p/β: Web speed slower than camera scanning speed.

d = p/β: Web speed equal to camera scanning speed (square pixel).

d > p/β: Web speed faster than camera scanning speed.

In these scenarios, d is the object movement in one external sync period, p is the pixel size of the sensor, and β is optical magnification; p/β is the pixel-sampled size on the object.
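The three scenarios above reduce to comparing d with p/β. A minimal sketch of that comparison follows; the function name, the unit choice (micrometers) and the returned labels are illustrative assumptions.

```python
import math

def sampling_scenario(d_um, pixel_um, magnification):
    """Classify line-scan sampling by comparing d with p/beta.

    d_um: object movement during one external sync period (micrometers)
    pixel_um: sensor pixel size p (micrometers)
    magnification: optical magnification beta
    """
    sampled_um = pixel_um / magnification  # pixel-sampled size on the object
    if math.isclose(d_um, sampled_um, rel_tol=1e-9):
        return "square"
    # Web slower than scanning oversamples along the web (stretched image);
    # web faster than scanning undersamples it (compressed image).
    return "stretched" if d_um < sampled_um else "compressed"
```

For example, with a 10-µm pixel and a magnification of 0.1, the pixel-sampled size is 100 µm, so an object movement of 100 µm per sync period gives square pixels.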

Figure 5. A stamp captured by Piranha Color trilinear camera (a) versus Spyder3 Color bilinear camera (b).

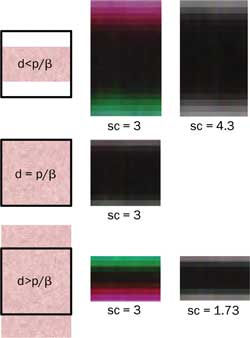

For square pixel situations, d matches p/β. In this case, spatial correction is equal to the number of line spaces between the RGB arrays; e.g., when the Piranha4 color camera operates in the square pixel sampling scenario where the spatial correction number is 3, it automatically delays three lines to align the RGB channel outputs.

In some applications, however, cameras operate in N:1 aspect ratio sampling; e.g., many inspection systems are designed so that the web moves much faster than the camera scanning speed to boost system throughput. This leads to a nonsquare “compressed” pixel in the image. On the other hand, web speed can be slower than camera scanning speed because of limitations of encoders, lens magnifications, etc. This results in a nonsquare “stretched” pixel in the image. In such nonsquare pixel sampling, spatial correction with the integer value 3 results in color aliasing (Figure 6b).

Figure 6a. Spatial correction parameter (sc) depends on the distance of object movement during one external sync period (d) compared with pixel-sampled size (p/β), where p is the pixel size of the imager and β is the optical magnification.

Artifact correction

Subpixel spatial correction is capable of compensating with a fraction of a line delay to correct the artifact in such N:1 aspect ratio sampling. Figure 6b shows several images of a black-and-white bar with different sampling scenarios, where scanning was in the vertical direction. In the square pixel case, a spatial correction parameter sc = 3 was used to reconstruct the image. In nonsquare pixel sampling, a fractional line delay (sc = 4.3 and 1.73, respectively) was needed to correct the artifacts. Subpixel spatial correction also can be used to compensate and reconstruct the images captured where the camera is installed at an angle not perpendicular to the web surface.
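One way to picture a fractional line delay is sketched below. Two assumptions are made that the article does not state: first, that sc follows from geometry as the square-pixel line spacing scaled by (p/β)/d; second, that the fractional part of the delay is applied by linear interpolation between two adjacent lines. The camera's actual method may differ, and both function names are hypothetical.

```python
import math

def spatial_correction(line_spacing, d, pixel, magnification):
    """Estimate sc in lines, assuming sc = line_spacing * (p / beta) / d."""
    return line_spacing * (pixel / magnification) / d

def delayed_line(lines, k, delay):
    """Sample one channel at fractional line index k + delay.

    Linear interpolation between the two nearest lines is an assumed,
    simple choice for applying the fractional part of the delay.
    """
    pos = k + delay
    lo = math.floor(pos)
    if lo < 0 or pos > len(lines) - 1:
        raise IndexError("delayed position is outside the captured lines")
    frac = pos - lo
    hi = min(lo + 1, len(lines) - 1)
    return [(1 - frac) * a + frac * b for a, b in zip(lines[lo], lines[hi])]
```

Under the first assumption, the values sc = 4.3 and sc = 1.73 quoted above would correspond to d of roughly 0.7 and 1.7 times p/β, respectively.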

During lens shading correction, a high-resolution camera might detect too much detail of the target – e.g., the paper grains – and this can become a problem. Physically, operators can avoid this by defocusing the lens or moving the target. But this can be inconvenient. A filter that can “defocus” without touching the lens or target solves this problem. White balancing is needed for most applications; to achieve more accurate colors, color calibration can be carried out using a Gretag-Macbeth chart.

Figure 6b. A black-and-white bar imaged by Piranha4 Color trilinear camera with various sampling scenarios and spatial correction (sc) parameters. A fractional line delay corrects the color fringes in nonsquare pixel sampling where d ≠ p/β.

Automatic optical inspection

One of the most challenging applications is 100 percent print inspection. Most systems are designed to handle high speeds in the line-scan inspection of banknotes, checks and cosmetics, and of commercial, pharmaceutical and food packaging with an object resolution from 250 µm down to 50 µm, depending on application requirements. 4k resolution is a popular choice, with a line rate of 15 to 50 kHz.

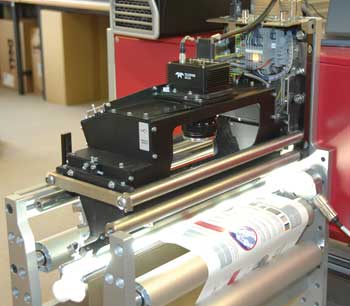

Figure 7 shows the ProofRunner 450 Press inspection system developed by EyeC GmbH of Hamburg, Germany. In this system, Teledyne Dalsa’s Piranha Color 4k/10-µm trilinear camera runs at 17 kHz with a web speed of 112 m/min and covers a field of view of 450 mm. ProofRunner can detect defects in full color with an object resolution of 110 µm in 100 percent print inspection for labels, leaflets, foil, packages, banknotes, etc. It fits into printing machines (flexo, offset, screen, gravure), converting machines, folder gluers and sorting machines.
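The quoted figures can be cross-checked with simple arithmetic. The sketch below assumes that "4k" means 4096 pixels across the field of view; the function names are illustrative.

```python
def cross_web_resolution_um(fov_mm, pixels):
    """Object size covered by one pixel across the web, in micrometers."""
    return fov_mm * 1000.0 / pixels

def along_web_resolution_um(web_speed_m_per_min, line_rate_hz):
    """Distance the web travels during one line period, in micrometers."""
    speed_um_per_s = web_speed_m_per_min / 60.0 * 1e6
    return speed_um_per_s / line_rate_hz

# Values from the text; 4096 pixels is an assumed reading of "4k".
across = cross_web_resolution_um(450, 4096)
along = along_web_resolution_um(112, 17_000)
print(f"across web: {across:.1f} um, along web: {along:.1f} um")
```

Both results are close to the stated 110-µm object resolution, which suggests that the system runs at or near square pixel sampling.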

Figure 7. The ProofRunner 450 Press inspection system developed by EyeC of Hamburg, Germany, uses Teledyne Dalsa’s Piranha Color 4k/10-µm trilinear camera for 100 percent inspection of graphics and texts in full color.

The camera also can monitor and control large-format printing systems. Figure 8 shows the Rapida 145 press by Koenig & Bauer AG of Würzburg, Germany. It prints 1050 x 1450-mm sheets at the speed of 17,000 sheets per hour. The same camera is used in its QualTronic ColorControl unit, which measures and controls color density in-line. Each sheet is scanned by the camera, and the result is fed back to the ColorTronic ink duct every tenth sheet. This ensures uniform image quality throughout the print run.

Future trends

As the demand for system throughput increases, the speed of line-scan cameras must increase. Trilinear cameras with 70-kHz maximum line rate and improved responsivity are the latest products to meet the requirements. Multiple lines per color channel with time delay integration (TDI) technology¹ eventually will be needed to further improve the sensitivity of sensors for higher-speed imaging. Color TDI cameras are expected to be the standard products in the near future.

Figure 8. The Rapida 145 press by Koenig & Bauer AG of Würzburg, Germany, uses the Piranha Color 4k camera in its QualTronic ColorControl unit to measure and control color density in-line.

At the same time, color imaging solutions are evolving toward more complex designs, such as multispectral imaging and imaging beyond the visible spectrum; e.g., channels at wavelengths between the normal RGB bands, or in the near-infrared, improve detectability in automatic optical inspection. A multispectral camera based on a single chip with red, green, blue and near-infrared channels can be a cost-effective solution to meet even more demanding requirements in the future. To have truly independent multispectral channels, all filters should be coated at the wafer level.

Meet the author

Dr. Xing-Fei He is senior product manager at Teledyne Dalsa Inc. in Waterloo, Ontario, Canada.

References

1. X.-F. He and N. O (2012). Time delay integration speeds up imaging. Photonics Spectra, Vol. 46, Issue 5, pp. 50-54.