The microscopy market is growing – set to exceed $5 billion by 2018 – even as microscopic targets get smaller and smaller.

The microscopy market is trending upward – and the technology is continually allowing researchers to see things on a smaller and smaller scale, which is especially useful for brain studies.

The global market for microscopes and accessories was projected to surpass $4 billion this year, according to Microscopy: The Global Market, a report by BCC Research. That value will hit $4.5 billion by 2015, according to projections from World Microscopy Market: Products, Applications and Forecasts (2010-2015), a separate report from MarketsandMarkets. It will reach nearly $5.4 billion by 2018, with a compound annual growth rate (CAGR) of 6 percent, according to the BCC Research report.
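The BCC Research figures can be roughly cross-checked with the standard compound-growth formula. The sketch below is only illustrative: it assumes a base of exactly $4 billion and a five-year horizon ending in 2018, neither of which the report excerpt states precisely, and the function names are hypothetical.

```python
def project_value(start_value: float, cagr: float, years: int) -> float:
    """Compound a starting value forward at a constant annual growth rate."""
    return start_value * (1 + cagr) ** years


def implied_cagr(start_value: float, end_value: float, years: int) -> float:
    """Back out the constant annual growth rate linking two values."""
    return (end_value / start_value) ** (1 / years) - 1


# Assumptions (not stated in the reports): $4.0 billion base, 5-year horizon.
BASE_BILLIONS = 4.0
HORIZON_YEARS = 5

projected = project_value(BASE_BILLIONS, 0.06, HORIZON_YEARS)
implied = implied_cagr(BASE_BILLIONS, 5.4, HORIZON_YEARS)

print(f"Projected value at 6% CAGR: ${projected:.2f} billion")
print(f"CAGR implied by $4.0B -> $5.4B: {implied:.1%}")
```

Under these assumptions, a 6 percent rate lands close to the "nearly $5.4 billion" figure; a base somewhat above $4 billion, which "surpass" suggests, would close the remaining gap.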

For a picture of the current state of the microscopy market – plus a look at where the technology is headed – we interviewed a panel of experts representing a range of companies: Emmanuel Leroy, product manager at Horiba Scientific; Duncan McMillan, director of biosciences product marketing at Carl Zeiss Microscopy; Marco Arrigoni, marketing director for the scientific market at Coherent, who spearheads the company’s support of nonlinear microscopy based on the company’s ultrafast lasers; Matthias Schulze, director of marketing for OEM components and instrumentation at Coherent, who is involved with microscopy in the area of commercial implementation; and Julien Klein, senior manager of product marketing for Spectra-Physics, a Newport Corp. brand.

Q: Which application areas would you say are the hottest – and why?

Arrigoni: At present, I’d have to say it’s definitely brain studies, both to provide a structural map of the brain connections (the connectome) and a functional map of the brain activity that resides close to the cortex. By brain activity, I’m referring to responses to visual, auditory and olfactory stimuli. Looking forward, studies of the human brain connectome are a big part of the European [Human Brain Project] effort, but these will also depend on computational power and advances in that area.

McMillan: Functional brain imaging, because the brain is the “last frontier,” as evidenced by the recent efforts to map the brain connectome and the US federal government’s funding of the BRAIN Initiative. Also, the general area of optogenetics.

Klein: Neuroscience is a particularly important field of research that relies heavily on advances in microscopy for in vivo brain imaging to develop an understanding of neurological mechanisms and dysfunctions. Oncology research is another important field where novel imaging techniques such as CARS and [stimulated Raman scattering] that do not require the use of markers are enabling new research.

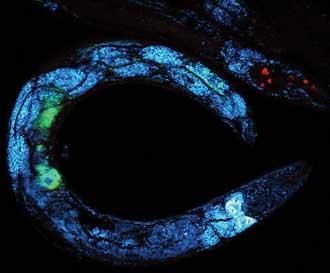

Multimodal image of a C. elegans worm that has been deprived of nutrients and, in response, starts storing fats in reservoirs, resulting in bright CARS signals from lipids (blue). The image shows CARS at 798 + 1040 nm (blue), TPEF GFP at 920 nm (green) and TPEF dsRed at 1040 nm (red) using an InSight DeepSee laser. The GFP and dsRed are tagged to different proteins involved in lipid metabolism. Actual image size is ~300 × 300 µm. Courtesy of Dr. Eric Potma, University of California, Irvine.

Leroy: Superresolution microscopy, label-free nanoscale chemical imaging, near-field optical microspectroscopy. AFM-Raman leads the pack in terms of nanoscale chemical imaging, and although it is still more of a research technique, it is more and more accessible.

Q: Looking ahead, what do you see as the “next big thing” in microscopy?

Leroy: Hyperspectral imaging and going down to nanoscale – both of which are answered by nanospectroscopy.

McMillan: Bessel beam microscopes, because they offer isotropic 3-D resolution and high speed, and can potentially be adapted to deliver superresolution.

Arrigoni: This is hard to say. There is an immense proliferation of microscopy techniques offering new possibilities, but each of them seems to come with limitations: For example, superresolution so far cannot go very deep in tissues; “light sheet” is very fast but so far has been used mostly to study small-body organisms like embryos, and it is unclear whether it can also be exploited for other purposes.

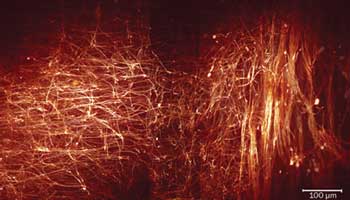

Axonal projections from the white matter tract to layer IV barrel cortex in the adult mouse, imaged using two-photon microscopy with an InSight DeepSee laser tuned to 1030 nm. Courtesy of Gabriel Jones, Dr. Kay Richards and Dr. Steven Petrou, The Florey Institute of Neuroscience & Mental Health, Melbourne, Australia.

Ideally, researchers would like a microscope that can go 5 mm deep in most tissues, offers some degree of superresolution and is able to image several planes at video rate. Obviously, we’re not there yet.

Klein: The NIH BRAIN Initiative and similar efforts are exciting in what they could mean for advances in health care, and optogenetics is a promising technique for in vivo, dynamic investigation and mapping of neuronal networks.

Q: What new and exciting advances do you see coming out of university and/or R&D labs?

Leroy: Availability of reliable TERS (tip-enhanced Raman spectroscopy) tips and more automation in the coupling of [scanning probe microscopy] and Raman – all this is being applied to nanoelectronics, nanofabrication/-characterization and biological imaging.

Klein: Some researchers are trying to apply some of these advanced microscopy techniques to medical diagnosis and procedures. For example, microendoscopy using multiphoton or other advanced imaging techniques [is an exciting direction] for possible longer-term application of these tools to novel medical treatments.

Arrigoni: I see two significant trends:

1. Concerted efforts to genetically encode new types of tools beyond the fluorescent label type (e.g., GFP, mFruits) that are currently just used for imaging. These new tools include functional encoded probes above and beyond the current suite of optogenetics probes such as channelrhodopsins. For example, to study neuronal signaling, we have a growing range of GECIs (genetically encoded calcium indicators). But genetically encoded voltage-sensitive probes would allow neuronal signaling to be studied at speeds maybe 100× faster than GECIs currently support. And, fortunately, most of these new encoded probes have variants that respond to light at or above 1 µm, in recognition of the deeper penetration of longer wavelengths.

2. The second trend is the development of clearing agents that remove lipids from fixed dead brain tissue, usually from mice, and thereby allow researchers to image detailed brain anatomy over thicknesses as great as 7 to 10 mm. [Dr. Atsushi] Miyawaki in Japan and [Dr. Karl] Deisseroth in the US have been using this approach to obtain major depth improvements in brain imaging, to the point that the working distance of objectives has become the current limiting factor.

Q: What are the biggest challenges microscopists are facing right now? How do you predict they will be overcome?

McMillan: With respect to the BRAIN Initiative mentioned above, the low sensitivity of voltage-reporting fluorophores (for optically reporting electrical signals in living organisms).

Arrigoni: Sticking to neuroscience, we see several attempts to monitor the path of brain signals as they move across the cortex. Ideally, this would require simultaneous 3-D imaging in a large volume to track the path of the brain signals. Since this is impossible as of now, we see a trend at the high end toward scanning the beam at will across arbitrary subregions of the complete 3-D space, using fast modulators or schemes where the beam is multiplexed at various depths to create a “limited 3-D” image.

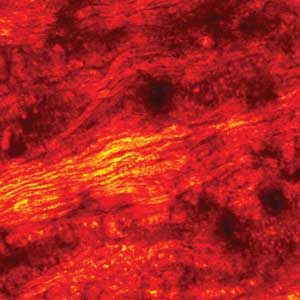

SRS (stimulated Raman scattering) imaging of rat spinal cord at 802 + 1040 nm with an InSight DeepSee laser. Courtesy of Dr. Ji-Xin Cheng at Purdue University.

Leroy: The challenge is to go label-free and to go to smaller scale (nano).

Labels are a big issue, as they interfere with real interactions. Raman spectroscopy is a label-free technique, but there [are so many] different compounds in a cell that it is still difficult to make sense of the data. Advanced processing techniques (multivariate analysis) allow a great deal of advancement there.

Going to the nanoscale is obviously of interest in itself, but because at the nanoscale the differentiation of molecules becomes easier – we’re now probing single molecules or a few molecules, not a whole lot of different ones – the interpretation also becomes easier.

Klein: Some challenges facing microscopists today include developing techniques to increase the imaging depth penetration to enable, for example, the study of neurological mechanisms in different parts of the brain. One approach being pursued is to use multiphoton imaging with infrared wavelengths within the water transparency window (up to 1300 nm) to reduce absorption and scattering. Scientists are developing fluorescent markers that can be excited at these longer wavelengths, and microscope and laser source suppliers are teaming up to create imaging systems at these longer wavelengths.

Another challenge is handling and interpreting the massive amounts of data collected, and bioinformatics is playing an increasingly important role.

Schulze: Superresolution is a very hot area at present. We’re now seeing this move from technical development and proof-of-principle studies into much wider deployment. As people try to connect the molecular scale with the macroscopic scale, superresolution techniques narrow the gap between these two domains and are now delivering exciting data in several areas of life sciences, not just limited to neuroscience.

But I think, as technology suppliers to the world of optical microscopy, we need to be aware of a much broader and more fundamental trend: Scientists and microscope builders are both starting to focus on combining multiple sample-interrogation modalities.

What do I mean? Well, we already see extensive use of multiparameter imaging just within optical microscopy, to observe separate but connected properties of samples. In nonlinear imaging, typical examples include combining [multiphoton excitation] with CARS imaging and/or with [second- and third-harmonic generation] imaging. And in confocal work we see increased use of multiwavelength excitation. Incidentally, I believe photonics suppliers have done a great job of supporting this trend – for example, with more flexible ultrafast lasers and simple plug-and-play tools to combine multiple [continuous-wave] lasers into a single confocal microscope.

But stepping further back, the larger picture reveals scientists and instrument builders are increasingly recognizing the value of combining optical and non-optical modalities. Examples include combining confocal microscopy with scanning probe microscopy (e.g., AFM). Another pairing is electron microscopy and confocal microscopy. Now, some of these techniques are clearly not compatible with live-tissue imaging, but they can be used with fixed samples to give absolutely unique data that will help bridge the molecular and macroscopic domains I mentioned earlier.

We are in the early days of this type of combinatorial work, and a major hurdle will be data handling and processing. The various techniques yield quite different types of data sets, which have been handled separately in the past. Now there is a need to find ways to combine these data sets in a meaningful way. We’re talking about huge amounts of data processing here, so, like the connectome work mentioned by Marco Arrigoni, this is going to depend on increased computer power in addition to new instrument development and deployment. This challenging complexity also means that lasers and other photonic components must deliver even greater operational simplicity and reliability as they become buried in these increasingly complex setups.