Vehicle Vision Puts the 'Auto' in 'Automobile'

Vision systems are increasingly enabling self-driving cars to automatically park, maintain speed and prevent collisions. Can a world of ubiquitous self-driving cars be far off?

Whether it’s a flying, automatically driven car like the Jetsons’ or an artificially intelligent robotic automobile named KITT, people have long dreamed of automated, self-driving (SD) vehicles that come when you call them and know exactly where to take you. For photonics companies and enthusiasts of a future with driverless cars, there’s good news and better news: The dream will become a reality. It’s a matter of when, not if.

For several decades, the technology has been steadily advancing in commercial vehicles. Adaptive cruise control, debuted by Mitsubishi in 1995, was the first “smart car” feature using lidar or radar sensors to maintain a constant distance from the car ahead using throttle control and downshifting. Later systems marketed as “preview distance control” or active cruise control evolved to apply the brakes and accelerate as needed. Nearly every major car company in the market now offers adaptive cruise control as an option in high-end vehicles.

In the past decade, adaptive cruise control has further evolved into full collision-avoidance systems in more than a dozen vehicle makes from Acura to Volvo. Such systems use lidar, radar, cameras, other sensors or a combination of those to monitor dangerous situations in front of, behind and even beside the vehicle. The first such system, in the 2006 Mercedes, could respond to a potential threat by applying the brakes and bringing the car to a full stop, then resume from a standstill – in other words, it could navigate in a traffic jam or through a roundabout without the driver touching the pedals. In just the past few years, nearly all automakers have either begun to offer, or have made plans to offer, further automated systems that use sensing and imaging technology to autonomously steer, brake, accelerate and park without driver input.

Vision accomplished

Daimler AG, Ford and BMW have rolled out vehicles with systems using photonics and radar technology that do everything but make your lunch. The Mercedes S-Class for 2014 is the first vehicle on the market to offer camera-assisted “magic body control,” a system that can identify bumps in the road ahead and adjust the suspension to quell them in real time. A 6D-Vision system, combining stereo multipurpose cameras and multistage radar sensors, provides spatial perception up to 50 m ahead of the vehicle to maintain speed behind a car at speeds of up to 200 km/h. Short-, medium- and long-range radar sensors at 30, 60 and 200 m provide distance-monitoring information about other vehicles, oncoming traffic, pedestrians, traffic signs and road markings.
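To put those sensing ranges in perspective, the short sketch below converts a look-ahead distance and a vehicle speed into the time the car needs to cover that distance. The ranges and the 200 km/h figure come from the text; the function name is illustrative, and the calculation assumes a stationary target, so an oncoming vehicle would shorten these times.

```python
def lookahead_time_s(distance_m: float, speed_kmh: float) -> float:
    """Seconds needed to cover distance_m at a constant speed_kmh."""
    speed_m_s = speed_kmh / 3.6  # convert km/h to m/s
    return distance_m / speed_m_s


if __name__ == "__main__":
    # Ranges quoted in the article: 50 m stereo vision; 30, 60 and 200 m radar.
    for distance_m in (30, 50, 60, 200):
        t = lookahead_time_s(distance_m, 200)
        print(f"{distance_m:>3} m at 200 km/h -> {t:.2f} s")
```

At the top quoted speed, the 50 m stereo-camera range corresponds to about 0.9 seconds of travel, and the 200 m long-range radar to about 3.6 seconds, which suggests why the longer-range sensors matter at highway speeds.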

An active lane-maintaining system uses a side camera and radar sensors to perceive when the vehicle crosses dashed or solid lane markings. A thermal infrared imaging camera provides night vision to alert the driver to potential danger from pedestrians, cyclists or animals in dark conditions.

The Mercedes S-Class for 2014 incorporates numerous vision systems to assist the driver, from a 360° hazard-alert system to the industry’s first camera-based proactive suspension system that scans the road to prepare for bumps and dips. Photo courtesy of Mercedes.

The sensors in the Mercedes S-Class, one of which can detect whether the driver’s hands are on the steering wheel, all feed into a single control unit that intelligently synthesizes the data. Other systems include self-parallel-parking ability, and 360° monitoring of everything from blind-spot hazards to vehicles approaching from behind. With all these advanced vision systems already available commercially, the age of the lazy driver is imminent, especially in light of ongoing research on the next generation of highly automated cars.

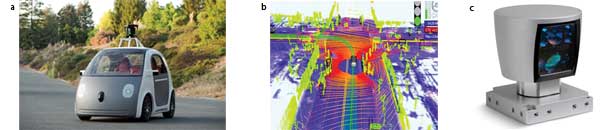

Google is ahead of the game. In May, the company announced a demonstration of the first prototype self-driving vehicle without a steering wheel, accelerator or brake pedal. Passengers pushed a button, sat back and relaxed while the car navigated a predetermined path at 25 mph. The key to Google’s autonomous navigation is the HDL-64E (E for Ethernet) lidar sensor from Velodyne LiDAR of Morgan Hill, Calif., which uses 64 fixed-mount IR (905 nm) laser-detector pairs to map out a full 360° horizontal field of view by 26.8° vertical. The entire unit spins at a frame rate from 5 to 15 Hz, gathering over 1.3 million data points per second to reconstruct a virtual map of the surrounding environment up to 100 m away at a resolution of ±2 cm, while traveling at highway speeds. The goal of Google’s SD car project is to create a viable technology that will reduce road accidents, congestion and fuel consumption. Unfortunately, the HDL-64E laser sensor systems currently cost more than the car.
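The quoted figures can be cross-checked with simple arithmetic. The sketch below is a back-of-the-envelope estimate, not Velodyne's specification: it assumes every laser firing yields one point and that firings are spread evenly across the 64 lasers and across each revolution. The function names are illustrative.

```python
SPEED_OF_LIGHT_M_S = 299_792_458  # exact by definition


def points_per_revolution(points_per_second: float, spin_rate_hz: float) -> float:
    """Points collected during one full 360-degree sweep."""
    return points_per_second / spin_rate_hz


def azimuth_step_deg(points_per_second: float, spin_rate_hz: float, n_lasers: int) -> float:
    """Horizontal angle between successive firings of one laser (even spacing assumed)."""
    per_laser = points_per_revolution(points_per_second, spin_rate_hz) / n_lasers
    return 360.0 / per_laser


def round_trip_time_s(range_m: float) -> float:
    """Time for a laser pulse to reach a target at range_m and return."""
    return 2.0 * range_m / SPEED_OF_LIGHT_M_S


if __name__ == "__main__":
    # Figures from the article: 1.3 million points per second, 64 lasers, 5 to 15 Hz.
    for rate_hz in (5, 10, 15):
        pts = points_per_revolution(1.3e6, rate_hz)
        step = azimuth_step_deg(1.3e6, rate_hz, 64)
        print(f"{rate_hz:>2} Hz: {pts:,.0f} points per sweep, {step:.3f} deg between firings")
    print(f"Round trip to 100 m: {round_trip_time_s(100) * 1e9:.0f} ns")
```

Under these assumptions, spinning faster refreshes the map more often but spreads the same point budget over more sweeps: the horizontal spacing comes out to about 0.18° at 10 Hz. A pulse takes roughly 0.67 µs to reach a target 100 m away and return.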

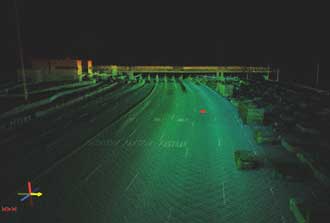

The Velodyne HDL-32E sensor mounted on a Hummer maps a tollbooth plaza using a 3-D lidar point cloud (green) of 600 full 360° scans over 60 seconds, combined with visible-wavelength camera images. Such modeling is useful for making safety improvements and sorting out legal issues, and as a visual verification of existing data reports. Photo courtesy of Velodyne/Mandli Communications.

“Google was able to realize these cars without steering wheels and brakes before automakers could, because the cost and liability factors are less important for them,” said Wolfgang Juchmann, director of sales and marketing at Velodyne LiDAR. “They are a software company, so they aren’t bound by the limits of a traditional car company. Google doesn’t need to ensure the car will sell. They can be visionaries. Furthermore, most car companies want a much smaller system not mounted on the roof.”

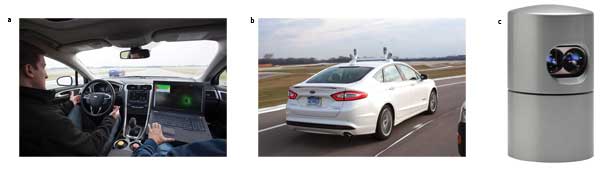

The Ford Fusion hybrid research vehicle, designed by Ford in collaboration with the University of Michigan and State Farm, also uses real-time lidar to map out the environment 360° around the car to determine distances to street signs and the condition of the roadway. Whereas the Google SD vehicle uses a bulky 64-laser system, the Ford Fusion hybrid uses four smaller, lighter-weight 32-laser systems, the Velodyne HDL-32E. At 5.68 × 3.35 in., the HDL-32E is a little larger than a soda can and weighs less than 2 kg. The system scans a 41° vertical field of view at a frame rate of up to 20 Hz, producing a point cloud of 700,000 points per second. The system can “see” up to 100 m with an accuracy of ±2 cm. The HDL-32E requires 12 W of power and carries an ingress protection rating of IP67, meaning it is dust-tight and protected against temporary immersion in water. The next challenge for Ford is reducing the number of lasers without losing the density of its mapping information.
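A point cloud is built by converting each laser return, a range plus two angles, into a 3-D coordinate. The sketch below shows that conversion under an assumed axis convention (x forward, y left, z up, azimuth measured counterclockwise from x), which is not necessarily the one Velodyne uses. It also estimates the gap between adjacent beams on the assumption that the 32 lasers are evenly spaced across the 41° field of view.

```python
import math


def polar_to_xyz(range_m: float, azimuth_deg: float, elevation_deg: float):
    """Convert one lidar return to Cartesian coordinates (assumed convention)."""
    az = math.radians(azimuth_deg)
    el = math.radians(elevation_deg)
    horizontal = range_m * math.cos(el)  # projection onto the ground plane
    x = horizontal * math.cos(az)
    y = horizontal * math.sin(az)
    z = range_m * math.sin(el)
    return x, y, z


def vertical_spacing_deg(fov_deg: float, n_lasers: int) -> float:
    """Angle between adjacent beams, assuming even spacing across the field of view."""
    return fov_deg / (n_lasers - 1)


def beam_gap_m(range_m: float, spacing_deg: float) -> float:
    """Approximate vertical distance between adjacent beams at a given range."""
    return range_m * math.tan(math.radians(spacing_deg))


if __name__ == "__main__":
    spacing = vertical_spacing_deg(41, 32)  # figures from the article
    print(f"Beam spacing: {spacing:.2f} deg")
    print(f"Gap between beams at 100 m: {beam_gap_m(100, spacing):.2f} m")
    print("Return at 100 m, 90 deg azimuth, level:", polar_to_xyz(100, 90, 0))
```

With even spacing, adjacent beams sit about 1.3° apart, which opens to a gap of about 2.3 m at the 100 m range limit. That illustrates why cutting the laser count without thinning the map is a hard problem.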

(a) The Ford Fusion hybrid research vehicle is highly automated and can drive on its own, based on a 3-D map of the surrounding environment. (b) The Fusion hybrid research vehicle uses four Velodyne HDL-32E lidar systems to provide a 360° × 41° field of view with 700,000 data points per second. (c) The HDL-32E lidar system maps features down to 2 cm of accuracy at a range of 80 to 100 m. (a) and (b) photos courtesy of Ford; (c) courtesy of Velodyne.

While Google’s SD car can retrace only a path it already knows, an ongoing community mapping pilot project launched in October 2013 by HERE of Espoo, Finland, a Nokia business, intends to map everything within sight of roadways for its GPS mobile mapping system. The second time an SD vehicle drives the same route, the system can compare what’s different, like a pedestrian or bicycle. The Nokia mapping project also uses the HDL-32E system from Velodyne.

Hi-ho, Silver!

Much in the way the Lone Ranger called his horse to save him, we could someday use apps to call our cars for automatic pickups – and even have the cars park themselves after dropping us off at a restaurant. Although so many guidance systems are likely to create more distracted drivers, the goal is to remove the human from the equation. “Machines are more reliable than humans and will go the speed limit,” Juchmann said.

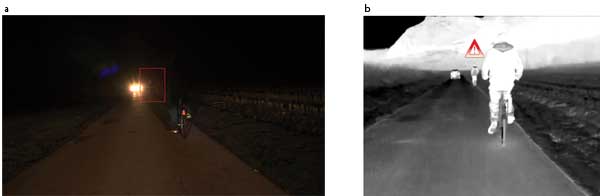

(a) A visible-wavelength image of a road scene demonstrates how the glare of oncoming headlights can obscure pedestrians and other objects in the roadway. (b) The same road scene – imaged using a QVGA 384 × 288-pixel long-wave infrared thermal image sensor from ULIS – shows enhanced visibility. (a) and (b) photos courtesy of ULIS.

Not only must the 360° mapping systems be small and cheap enough to be affordable, but they also must operate at low power, without loss of performance. Thermal image sensor producer ULIS of Veurey-Voroize, France, has leveraged high-performance vision technology into ultrasensitive but affordable technology for civilian applications. The long-wave infrared thermal imaging camera uses a QVGA 384 × 288-pixel amorphous silicon microbolometer with a 17-µm pixel pitch and power consumption of less than 100 mW, ideal for vehicle vision systems. The success of ULIS in high-performance, price-conscious commercial off-the-shelf applications has grown into the defense and military market with the Pico1024E Gen II, a new 1024 × 768 microbolometer thermal image sensor with 17-µm pixel pitch. The Pico1024E Gen II offers an extended detection range of 3 to 5 km while maintaining a low power consumption of 200 mW, optimal for helicopter pilots and military land vehicles.

Researchers at the University of California, Berkeley, are developing the next generation of lightweight, smaller and affordable vision systems using microelectromechanical systems-based vertical-cavity surface-emitting lasers. The new integrated chip-scale platform, measuring 3 × 3 mm, uses frequency-modulated continuous-wave lidar at a central wavelength of 1548 nm to achieve an estimated power consumption of less than 500 mW with a resolution of 1 cm at 10 m. The work was presented this spring at GOMACTech in Charleston, S.C.
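In frequency-modulated continuous-wave lidar, the laser frequency is swept over time, and the distance to a target is read from the frequency difference (the beat) between the outgoing and returning light. The sketch below uses the textbook relations for a linear sweep. The bandwidth and sweep duration are hypothetical values chosen for illustration, not parameters of the Berkeley device, and the article's "resolution" may refer to measurement precision rather than the two-target resolution computed here.

```python
SPEED_OF_LIGHT_M_S = 299_792_458.0


def beat_frequency_hz(range_m: float, bandwidth_hz: float, sweep_s: float) -> float:
    """Beat frequency produced by a target at range_m for a linear frequency sweep."""
    return 2.0 * range_m * bandwidth_hz / (SPEED_OF_LIGHT_M_S * sweep_s)


def range_from_beat_m(beat_hz: float, bandwidth_hz: float, sweep_s: float) -> float:
    """Invert the relation above to recover range from a measured beat frequency."""
    return SPEED_OF_LIGHT_M_S * beat_hz * sweep_s / (2.0 * bandwidth_hz)


def range_resolution_m(bandwidth_hz: float) -> float:
    """Smallest separation at which two targets can be told apart."""
    return SPEED_OF_LIGHT_M_S / (2.0 * bandwidth_hz)


if __name__ == "__main__":
    bandwidth_hz = 15e9  # hypothetical sweep bandwidth
    sweep_s = 1e-3       # hypothetical sweep duration
    beat = beat_frequency_hz(10.0, bandwidth_hz, sweep_s)
    print(f"Beat frequency for a 10 m target: {beat / 1e6:.2f} MHz")
    print(f"Recovered range: {range_from_beat_m(beat, bandwidth_hz, sweep_s):.3f} m")
    print(f"Range resolution: {range_resolution_m(bandwidth_hz) * 100:.2f} cm")
```

By this relation, a two-target resolution of 1 cm would call for a sweep bandwidth of about 15 GHz.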

Behnam Behroozpour, a UC Berkeley graduate student, said the system can remotely sense objects as far as 30 ft away – 10 times farther than with comparable current low-power laser systems. With further development, the technology could be used to make smaller, cheaper 3-D imaging systems that offer exceptional range for potential use in self-driving cars.

(a) In May, Google unveiled its prototype self-driving car, sans steering wheel and accelerator or brake pedals. (b) The Google driverless car’s vision system creates a detailed 3-D map of its environment. To identify a person, future systems may combine radar, lidar and imaging sensors. (c) The Google SD car uses a $70,000 HDL-64E lidar system from Velodyne to construct a 360° × 27° depth map comprising 1.3 million data points per second via 64 fixed-mount lasers spinning at 900 rpm. The system can detect obstacles and provide navigation for autonomous vehicles and marine vessels. (a) and (b) photos courtesy of Google; (c) courtesy of Velodyne.

“Such a small 3-D ‘eye’ could enable a car to find parking in your line of sight, or come to you when you wave your hand,” Behroozpour said. “Cars that communicate wirelessly with each other and with the road would be more reliable than human drivers, as they would know where they intend to move, and when the light will turn red.”

With the technology developing so quickly, one big challenge preventing this dream from becoming a reality is the lag in legalizing self-driving vehicles. Before we can realize a world where all vehicles are intelligently tied in to a single network, states and countries will have to pass laws that allow such vehicles on their roads. The U.S. states of Florida, Nevada, Michigan and California have passed various laws allowing the use of self-driving cars, as have Germany, the Netherlands and Spain.

Some in the industry say a future of connected intelligent self-driving cars is still quite far off, but Behroozpour is more optimistic: “I really think it can happen. Definitely in my lifetime,” he said. But then, he’s only 26.

Published: September 2014