Nate Holmes, National Instruments

Vision is not a new technology for production lines; cameras have been used for automated inspection, robot guidance and sorting machines for many years. However, systems are becoming more complex, and today’s performance requirements are more rigorous. Continuous improvement in processor technology is raising the throughput of vision systems, to the point that image processing is no longer the bottleneck in many production environments. With increased speeds, however, synchronization becomes a key challenge.

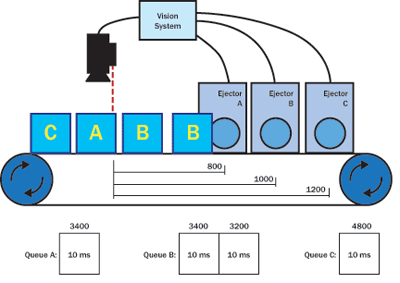

The vision system is just one piece of a multisystem puzzle that must be synchronized with other devices and I/O (input/output) to work well within an application (Figure 1). A common inspection scenario involves sorting faulty parts from correct ones as they move through the production line. These parts move along a conveyor belt with a known distance between the camera and the ejector that removes failed parts. As the parts move, the location of each part must be tracked and correlated with its image analysis result to make sure that the ejector correctly sorts out failures.

Figure 1. Production and assembly applications, such as the system in this winery, must synchronize a sorting system with the visual inspection process.

There are multiple methods for synchronizing the sorting process with the vision system, including the use of time stamps with known delays and proximity sensors that also keep track of the number of parts that pass by. However, the most common method relies on encoders. When a part passes by the inspection point, a proximity sensor detects its presence and triggers the camera. After a known encoder count, the ejector will sort the part based on the results of the image analysis. The challenge with this method is that a CPU must constantly track the encoder value and proximity sensors while running image-processing algorithms to classify the parts and communicate with the ejection system. This can lead to a complex software architecture, add considerable amounts of latency and jitter, increase the risk of inaccuracy, and decrease throughput.
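The "known encoder count" between the camera and the ejector follows directly from the mechanics of the line. The short Python sketch below shows one way to derive it, assuming the encoder is mounted on a roller that drives the belt without slip. The function name and all numeric values are illustrative and do not come from any particular system.

```python
def camera_to_ejector_counts(distance_mm: float,
                             roller_circumference_mm: float,
                             counts_per_rev: int) -> int:
    """Convert the camera-to-ejector travel distance into encoder counts.

    Assumes the encoder turns with a roller of the given circumference
    and that the belt does not slip on the roller.
    """
    revolutions = distance_mm / roller_circumference_mm
    return round(revolutions * counts_per_rev)


# Illustrative values only: 500 mm of travel, a 100 mm roller
# circumference and a 1000-count-per-revolution encoder.
offset = camera_to_ejector_counts(500.0, 100.0, 1000)
print(offset)
```

With these example numbers, a part reaches the ejector 5000 counts after it triggers the camera.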

FPGA-enabled I/O simplifies development

To solve this problem, engineers can off-load the synchronization from the CPU to a field-programmable gate array (FPGA), which excels at performing high-speed tasks in complete parallel with minimal jitter and latency. An FPGA can read digital input lines and react with a digital output within 20 ns, far outperforming the ability of CPUs, which experience software delays and overhead from the operating system. Other tasks such as network communication, supervisory control and image processing may still be easier to implement on a CPU, so the combination of a CPU plus an FPGA provides an architecture that is both flexible and high-performance enough for a wide range of automated-inspection applications.
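To see why response time matters, it can help to translate timing uncertainty into position uncertainty on the belt. The sketch below does this arithmetic in Python. The 2 m/s belt speed and the 1 ms of software jitter are assumed values chosen purely for illustration; only the 20 ns figure comes from the text above.

```python
def position_error_mm(belt_speed_m_per_s: float,
                      timing_uncertainty_s: float) -> float:
    """Distance a part travels during a given timing uncertainty, in mm."""
    return belt_speed_m_per_s * timing_uncertainty_s * 1000.0


BELT_SPEED = 2.0      # m/s, assumed for illustration
CPU_JITTER = 1e-3     # s, assumed software jitter for illustration
FPGA_RESPONSE = 20e-9 # s, the 20 ns response cited above

print(position_error_mm(BELT_SPEED, CPU_JITTER))     # on the order of millimeters
print(position_error_mm(BELT_SPEED, FPGA_RESPONSE))  # on the order of tens of nanometers
```

Under these assumptions, a millisecond of jitter corresponds to about 2 mm of belt travel, while a 20 ns response corresponds to a negligible 40 nm.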

New vision systems, such as the NI CVS-1457RT compact vision system from National Instruments, are implementing this type of architecture (Figure 2). This device includes an Intel Atom processor for image processing, network communication and many other tasks that typically are performed by PC-based systems, as well as an onboard Xilinx FPGA for the industrial digital I/O. The device’s FPGA is preconfigured with functionality for generating strobe pulses, triggering, and writing to and reading from digital lines. Because an FPGA is reconfigurable, engineers can also customize it using LabVIEW FPGA to achieve additional functionality, including custom triggers, timing, pulse-width-modulation outputs, custom digital protocols and high-speed counters. The inclusion of FPGA-based I/O in vision systems is removing the need for some PLCs (programmable logic controllers), which makes system integration and synchronization even more straightforward. The FPGA can trigger cameras, pulse lighting, read encoders and sensors, and control ejection mechanisms, all in complete parallel and within a single programming environment.

Figure 2. Vision systems such as the NI CVS-1457RT combine an FPGA with the CPU for tight I/O synchronization.

Including an FPGA in the same device as the CPU also provides an opportunity to tightly couple the I/O with the image processing. For example, the NI system uses the FPGA and CPU architecture, along with a feature called Vision RIO, to solve the challenge of synchronizing the vision system with the ejector and associated I/O. This simple API (application programming interface) enables users to configure a queue on the FPGA that holds digital output pulses. As parts move down the conveyor belt and are inspected, pulses are added to the queue based on the results of the image analysis. As these same parts arrive at the ejector, the pulses are sequentially removed from the queue.

In the encoder-based example referenced earlier, the Vision RIO API would cause the FPGA to latch the encoder count when the camera is triggered. If the part fails the inspection, the vision system adds an offset that accounts for the travel distance between the camera trigger and the ejector trigger. Next, the FPGA uses a queue to keep track of the line to drive, the trigger condition and the duration of the pulse. Each queued pulse is triggered at the configured encoder count, resulting in the ejection of failed parts. In this scenario, a pulse is only added to the queue when a failed part is detected. Since the ejector is triggered by an encoder count, the correct parts may pass while the ejector waits for the encoder count to match the next failed part.
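The encoder-triggered queue described above can be modeled in a few lines of Python. This is a conceptual simulation only, not the Vision RIO API: the class, method and field names are hypothetical, the 1000-count offset and 5 ms pulse width are made-up values, and the inspection result is assumed to be available at the moment the count is latched, which a real system would not guarantee.

```python
from collections import deque
from dataclasses import dataclass
from typing import Deque, List, Optional


@dataclass
class Pulse:
    line: int           # digital output line to drive (hypothetical numbering)
    fire_at_count: int  # encoder count at which the pulse fires
    width_ms: float     # pulse duration in milliseconds


class EncoderPulseQueue:
    """Toy model of a pulse queue that fires on encoder count."""

    def __init__(self, camera_to_ejector_counts: int) -> None:
        self.offset = camera_to_ejector_counts
        self.pending: Deque[Pulse] = deque()

    def add_failed_part(self, latched_count: int,
                        line: int = 0, width_ms: float = 5.0) -> None:
        # Only failed parts are queued: latched count plus travel offset.
        self.pending.append(Pulse(line, latched_count + self.offset, width_ms))

    def on_encoder_count(self, count: int) -> Optional[Pulse]:
        # Fire the oldest pulse once the belt has reached its target count.
        if self.pending and count >= self.pending[0].fire_at_count:
            return self.pending.popleft()
        return None


def simulate() -> List[int]:
    queue = EncoderPulseQueue(camera_to_ejector_counts=1000)
    # Latched encoder count at camera trigger -> did the part pass?
    inspections = {100: True, 250: False, 400: False}
    fired_at = []
    for count in range(1600):
        if count in inspections and not inspections[count]:
            queue.add_failed_part(latched_count=count)
        if queue.on_encoder_count(count) is not None:
            fired_at.append(count)
    return fired_at


print(simulate())
```

In this run, the good part latched at count 100 never enters the queue, and the two failed parts are ejected at counts 1250 and 1400, each exactly one offset after its camera trigger.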

In some situations, the distance between inspected parts moving along a path cannot be guaranteed, because parts may shift as they travel along the path. This situation prevents an ejector station from being triggered at a specific encoder count. In this case, a proximity sensor near the ejector triggers the queue to drive the next pulse. Unlike the previous encoder example, the queue is triggered even in the presence of a correct part. To leave correct parts on the line, a 0-ms pulse is added to the queue for every correct part, which makes it possible for the queue to trigger without driving the ejector. This simplicity results from all of the synchronization and timing taking place on the FPGA: users need only configure the queue once and then insert pulses based on the inspection results. These two simple steps make it possible to avoid developing a complete software architecture dependent on CPU latency and jitter. The API even scales to scenarios where multiple ejectors are controlled simultaneously based on multiple inputs, including time stamps, encoder inputs and proximity sensors.
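The proximity-triggered variant can be sketched the same way. Again, this is a hypothetical Python model rather than the Vision RIO API; the names and the 5 ms ejector pulse width are assumptions. The key difference from the encoder case is that every part gets a queue entry, and good parts get a zero-width pulse.

```python
from collections import deque
from typing import Deque, List

EJECT_WIDTH_MS = 5.0  # assumed ejector pulse width, for illustration only


class ProximityPulseQueue:
    """Toy model of a pulse queue advanced by a proximity sensor."""

    def __init__(self) -> None:
        self.widths_ms: Deque[float] = deque()

    def add_result(self, passed: bool) -> None:
        # One entry per inspected part; good parts get a 0 ms pulse.
        self.widths_ms.append(0.0 if passed else EJECT_WIDTH_MS)

    def on_proximity_trigger(self) -> float:
        # The sensor at the ejector pops the entry for the arriving part
        # and returns the pulse width that would be driven for it.
        return self.widths_ms.popleft()


def sort_parts(results: List[bool]) -> List[bool]:
    """Return, for each part in order, whether the ejector fired."""
    queue = ProximityPulseQueue()
    for passed in results:
        queue.add_result(passed)
    return [queue.on_proximity_trigger() > 0.0 for _ in results]


print(sort_parts([True, False, True, False]))
```

Because the queue stays in step with the parts themselves rather than with belt position, the ejector fires only for the second and fourth parts here, regardless of how the spacing between parts changes along the way.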

Figure 3. In this example, parts are sorted by multiple ejectors, which are triggered based on encoder counts and synchronized with queued pulses.

Tech advances reduce cabling

Technology advances in synchronization can also simplify cabling complexity. GigE Vision is one of the most popular camera interfaces in automated inspection applications, and the use of standard Ethernet cable for the camera bus makes it possible to achieve extended cable lengths at a reduced cost.

However, the network latency of Ethernet can cause system designers to install dedicated trigger lines to each camera. Action Command, a recent feature added to the GigE Vision standard, should help by enabling deterministic triggering of cameras over the Ethernet bus. Action Command packets reduce the jitter and latency to the order of a few microseconds without the need for a dedicated, deterministic network. In addition, the adoption of power-over-Ethernet technology can help reduce cabling complexity by removing the need for dedicated camera power supplies. This means that it is now possible to provide camera power, transfer images and deterministically trigger the camera, all over a single Ethernet cable. Ultimately, FPGA-based I/O and cable-simplifying features such as Action Command support and power-over-Ethernet technology are showing that integrated vision systems can be powerful and cost-effective solutions for automated inspection applications.

Meet the author

Nate Holmes is the vision product manager at National Instruments in Austin, Texas.