Cows are happier. They also give more milk. Both outcomes stem from automated milking systems that rely on photonics-based 3-D sensors.

Other innovations involve sensors operating outside the visible spectrum, which brings its own set of advantages. For instance, the use of near-infrared (NIR) and short-wave infrared (SWIR) enables manufacturers to spot defects in glassware, silicon ingots and pharmaceutical products. Other sensor advances make use of polarization to better image fine surface features.

While sensor capabilities are expanding, any industrial application imposes some basic requirements. First, the technology should be inexpensive relative to its payback. Second, the sensors must be able to withstand harsh treatment.

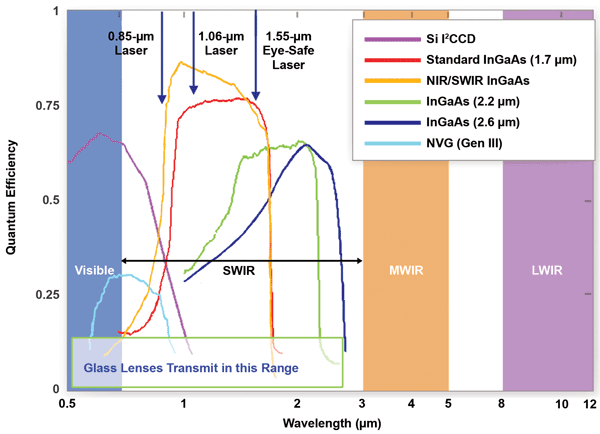

The short-wave IR, or SWIR, spectrum demands a detection material other than silicon. Photo courtesy of Sensors Unlimited.

“Those things have to be pretty rugged and reliable,” said Tom Hausken, a senior industry advisor at The Optical Society (OSA).

Several applications highlight recent developments and may also indicate where the technology is headed.

Take the case of automated milking machines. Lely North America Inc., a Pella, Iowa-based subsidiary of a Dutch company, manufactures a robot that can boost milk production by as much as 12 percent compared to the conventional approach. The increase is due in part to the robot milking each cow on the animal's own schedule.

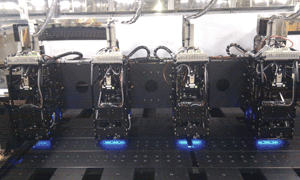

Machine vision systems used for industrial inspection applications. Photo courtesy of Teledyne Dalsa.

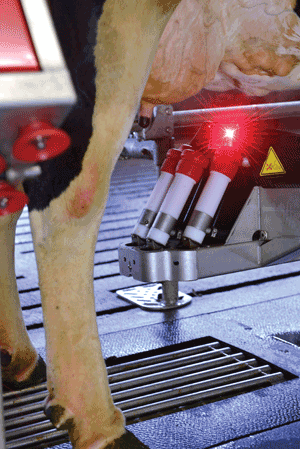

Connecting the machine to a cow requires rapid, accurate capture of 3-D information, namely the location of the animal and its teats. This is accomplished with a camera and a laser, according to Ben Smink, manager of farm support at Lely.


A switchover to time-of-flight distance sensing cameras has improved the overall robustness and speed of automated milking, said Pierre Cambou, imaging and sensors activity leader at analyst firm Yole Développement of Lyon, France. As the name implies, time-of-flight technology derives distance information by measuring how long it takes light to travel, round trip, from the camera to an object.
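The round-trip relationship described above reduces to one line of arithmetic: the light covers the camera-to-object distance twice, so the one-way distance is half the speed of light times the measured time. The sketch below is a minimal illustration, not any vendor's firmware; the function names are hypothetical, and it ignores the small (about 0.03 percent) slowdown of light in air.

```python
# Speed of light in vacuum, m/s (exact SI value). This sketch ignores
# the tiny reduction in speed when light travels through air.
C = 299_792_458.0


def tof_distance(round_trip_s: float) -> float:
    """One-way distance to the target from a measured round-trip time.

    Light travels camera -> object -> camera, so the path length is
    twice the distance we want.
    """
    return C * round_trip_s / 2.0


def round_trip_time(distance_m: float) -> float:
    """Inverse relation: round-trip time for a target at distance_m."""
    return 2.0 * distance_m / C


if __name__ == "__main__":
    # A target 10 m away returns light after roughly 66.7 ns.
    t = round_trip_time(10.0)
    print(f"{t * 1e9:.1f} ns -> {tof_distance(t):.2f} m")
```

Because the light travels out and back, each nanosecond of timing error corresponds to about 15 cm of distance error, which is why time-of-flight cameras need fast, precise timing electronics.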

Cambou said this approach “has the main advantage of providing a high-resolution depth map when previous systems have either low resolution or only give information along a single line of sight.”

Using 3-D photonics-based sensing, a robot can milk cows on their schedules, boosting production. Photo courtesy of Lely North America.

The technology currently is limited to a maximum range of about 10 m. However, with the implementation of single-photon avalanche diode arrays, that distance could be pushed out to 35 m using a safe-in-all-conditions Class 1 laser, Cambou said. He added that a possible application could be in driverless vehicles.

Pepperl+Fuchs GmbH makes a range of optical sensors, according to Tom Corbett, manager of photoelectric products at the company's U.S. subsidiary in Twinsburg, Ohio. The Pepperl+Fuchs product line includes distance sensing, and time-of-flight solutions are increasingly attractive to customers.

“Time-of-flight technology in the last several years has decreased in cost to the point that distance-measurement sensors with very good resolution and repeat accuracy are not that much more than standard on/off sensors. These types of products are ideal for general-purpose automation applications,” Corbett said.

An earlier method for gathering the same distance information measured the phase shift between the outbound light and the returning signal. Time-of-flight techniques offer improved accuracy and insensitivity to target color.
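For readers curious how a phase shift becomes a distance, the standard continuous-wave relation is sketched below. It assumes the source is intensity-modulated at a single frequency; the 10 MHz value and the function names are hypothetical choices for illustration and are not taken from Pepperl+Fuchs or any other vendor.

```python
import math

C = 299_792_458.0  # speed of light in vacuum, m/s


def phase_shift_distance(phase_rad: float, mod_freq_hz: float) -> float:
    """Distance from the phase lag between outbound and returning light.

    A round trip of 2*d delays the modulation envelope by 2*d/C seconds,
    which is a phase lag of 2*pi*f*(2*d/C). Solving for d gives
    d = C * phase / (4 * pi * f).
    """
    return C * phase_rad / (4.0 * math.pi * mod_freq_hz)


def ambiguity_range(mod_freq_hz: float) -> float:
    """Phase wraps every 2*pi, so measured distances repeat every C/(2f)."""
    return C / (2.0 * mod_freq_hz)


if __name__ == "__main__":
    f = 10e6  # hypothetical 10 MHz modulation frequency
    print(f"unambiguous range: {ambiguity_range(f):.2f} m")
    print(f"phase of pi/2 -> {phase_shift_distance(math.pi / 2, f):.2f} m")
```

The wrap-around limit is one practical drawback of a single-frequency phase measurement: a target just beyond the unambiguous range reads as if it were very close.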

These methods depend on the strength and stability of the source, which is one reason Pepperl+Fuchs is investigating the use of vertical-cavity surface-emitting lasers (VCSELs) over standard laser diodes. The former offers a wider operating temperature range, higher power performance and potentially lower cost, according to Corbett.

Other methods that can yield 3-D information include the use of multiple sensors and structured light, noted Glen Ahearn, sales and application support manager at Teledyne Dalsa Inc. The Waterloo, Ontario-based company makes cameras, image sensors, frame grabbers and control software.

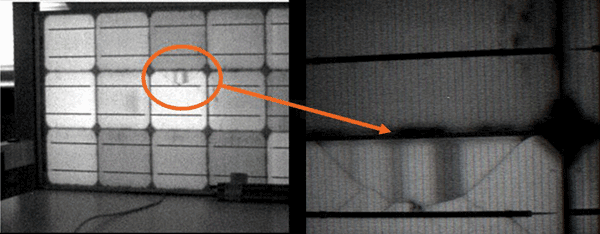

Multiple sensors extract distance information through triangulation, as human eyes do. In structured light, a source projects a known pattern, such as a checkerboard, onto an object, and distance is computed from the distortion of that pattern.
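The triangulation idea can be sketched for the simplest case: two identical, parallel cameras whose images have been rectified so that matching points lie on the same row. Depth then follows from similar triangles. The focal length, baseline and disparity values below are hypothetical, and real systems also need calibration and point matching, which this sketch omits.

```python
def stereo_depth(focal_px: float, baseline_m: float, disparity_px: float) -> float:
    """Depth of a point seen by two parallel, rectified cameras.

    A point at depth Z appears shifted by
    disparity = focal_px * baseline_m / Z pixels between the two images
    (similar triangles), so Z = focal_px * baseline_m / disparity_px.
    """
    if disparity_px <= 0:
        raise ValueError("disparity must be positive for a point in front of both cameras")
    return focal_px * baseline_m / disparity_px


if __name__ == "__main__":
    # Hypothetical rig: 1400-pixel focal length, cameras 10 cm apart.
    for d in (140.0, 70.0, 14.0):
        print(f"disparity {d:5.1f} px -> depth {stereo_depth(1400.0, 0.10, d):.2f} m")
```

Structured light uses the same geometry, with the projector standing in for one of the cameras and the known pattern supplying the point correspondences. In both cases disparity shrinks as 1/Z, so depth resolution degrades with distance.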

Industrial customers have shown some, but not much, demand for pure 3-D measurements, according to Ahearn. In his experience, the more common demand has been for technologies that measure spectral characteristics outside human visual perception.

An example would be polarization. "Using polarized light and a polarized filter on your sensor, you can get very high resolution surface texture measurements," Ahearn said.

This type of 3-D surface close-up is of interest to the semiconductor industry, which routinely deals with microscopic features. A polarization approach also can provide information about what’s going on inside an object, such as stress levels in glass.
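The article does not describe how such polarization images are processed. One common approach, assumed here for illustration, is to record each pixel behind analyzers at 0, 45, 90 and 135 degrees and compute the linear Stokes parameters, from which the degree and angle of linear polarization follow. The sketch is not Teledyne Dalsa's processing, and the function names are hypothetical.

```python
import math


def linear_stokes(i0, i45, i90, i135):
    """Linear Stokes parameters from intensities behind analyzers at
    0, 45, 90 and 135 degrees."""
    s0 = 0.5 * (i0 + i45 + i90 + i135)  # total intensity
    s1 = i0 - i90                        # horizontal minus vertical
    s2 = i45 - i135                      # +45 minus -45 degrees
    return s0, s1, s2


def dolp_aolp(i0, i45, i90, i135):
    """Degree of linear polarization (0..1) and its angle in degrees."""
    s0, s1, s2 = linear_stokes(i0, i45, i90, i135)
    if s0 == 0:
        return 0.0, 0.0
    dolp = math.hypot(s1, s2) / s0
    aolp = 0.5 * math.degrees(math.atan2(s2, s1))
    return dolp, aolp


if __name__ == "__main__":
    # Fully polarized light at 30 degrees with unit intensity: Malus's law
    # gives I(theta) = cos^2(theta - 30 deg) behind each analyzer.
    readings = [math.cos(math.radians(t - 30)) ** 2 for t in (0, 45, 90, 135)]
    dolp, aolp = dolp_aolp(*readings)
    print(f"DoLP = {dolp:.3f}, AoLP = {aolp:.1f} deg")  # DoLP = 1.000, AoLP = 30.0 deg
```

Mapping these two quantities pixel by pixel shows where a surface or a stressed piece of glass changes the polarization state of the light, information that an ordinary intensity image does not carry.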

As for wavelength, there are pushes both down into the ultraviolet and up into the infrared, according to Ahearn. Semiconductor users are most interested in UV because the shorter wavelength provides the higher resolution they need to resolve features.
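Why shorter wavelengths help can be seen from the Rayleigh criterion for a diffraction-limited imaging system, r = 0.61 λ / NA, where NA is the numerical aperture of the optics. The smallest resolvable separation scales linearly with wavelength. The wavelengths and the NA below are illustrative choices, not figures from the article.

```python
def rayleigh_resolution_nm(wavelength_nm: float, numerical_aperture: float) -> float:
    """Smallest resolvable separation (Rayleigh criterion) for a
    diffraction-limited imaging system: r = 0.61 * lambda / NA."""
    return 0.61 * wavelength_nm / numerical_aperture


if __name__ == "__main__":
    na = 0.9  # hypothetical high-NA objective
    for name, wl in (("visible (green)", 550.0), ("deep UV", 248.0)):
        print(f"{name:16s} {wl:5.0f} nm -> {rayleigh_resolution_nm(wl, na):6.1f} nm")
```

With the same optics, moving from 550 nm to 248 nm shrinks the resolvable feature size by the same ratio, to less than half.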

Industrial sensors capture data from the ultraviolet, visible and infrared, sometimes combining the information to improve process control. Photo courtesy of Teledyne Dalsa.

The near-, short-wave and even thermal infrared are of interest to more applications. IR sensors are often combined with those spanning the visible spectrum to create multispectral and hyperspectral imagers, which may capture more than six spectral bands.

Looking to the future, Ahearn noted that the cost of machine vision components falls by roughly half every five years. That drop steadily expands the range of applications that can make use of the technology.
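Treating Ahearn's rule of thumb as a smooth exponential decline (an idealization; real prices move in steps) makes the compounding easy to see. The function name and the five-year default are hypothetical parameters for illustration.

```python
def relative_cost(years: float, halving_period_years: float = 5.0) -> float:
    """Fraction of today's cost remaining after `years`, assuming the
    cost halves every `halving_period_years` (smooth exponential)."""
    return 0.5 ** (years / halving_period_years)


if __name__ == "__main__":
    for y in (5, 10, 15, 20):
        print(f"after {y:2d} years: {relative_cost(y):.2%} of today's cost")
```

Halving every five years works out to a decline of roughly 13 percent per year, and after 20 years a component would cost about one-sixteenth of today's price.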

On the sensor front, mobile devices have driven dramatic decreases in cost and increases in pixel counts, which may have an indirect effect on industrial applications. Teledyne Dalsa, for example, does not use such sensors in its products, Ahearn reported, because their pixels are often too small to provide the signal strength demanded in an industrial setting. In contrast, an industrial camera allows the optical setup to be optimized more thoroughly than a general-purpose camera does.

To incorporate infrared into industrial applications, it will be necessary to integrate different sensor materials. For instance, silicon is suited to the visible wavelengths (400 to 700 nm) and the near-infrared out to 1050 nm. But short-wave infrared, which runs to about 2500 nm, requires an alternative material, as silicon is transparent beyond 1100 nm. At Sensors Unlimited Inc., the material of choice is indium gallium arsenide (InGaAs). The Princeton, N.J.-based subsidiary of UTC Aerospace Systems makes near- to short-wave IR linear and array cameras.
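Silicon's transparency beyond about 1100 nm follows from its bandgap: a photon is absorbed only if its energy exceeds the bandgap energy E_g, so the cutoff wavelength is λ_c = hc / E_g. The sketch uses approximate room-temperature textbook bandgaps, which are assumptions rather than figures from the article or from Sensors Unlimited.

```python
# Planck's constant times the speed of light, expressed in eV*nm.
HC_EV_NM = 1239.84


def cutoff_wavelength_nm(bandgap_ev: float) -> float:
    """lambda_c = h*c / E_g. Photons with longer wavelengths carry less
    energy than the bandgap, are not absorbed, and pass through."""
    return HC_EV_NM / bandgap_ev


if __name__ == "__main__":
    # Approximate room-temperature bandgaps (assumed textbook values).
    materials = {
        "silicon": 1.12,
        "In0.53Ga0.47As (lattice-matched to InP)": 0.75,
    }
    for name, eg in materials.items():
        print(f"{name}: Eg = {eg} eV -> cutoff ~ {cutoff_wavelength_nm(eg):.0f} nm")
```

Silicon's cutoff comes out near 1107 nm; because its bandgap is indirect, absorption is already weak just short of that edge, which is why usable response tapers off around 1050 nm. Standard InGaAs reaches roughly 1650 nm, and compositions richer in indium extend coverage further into the SWIR.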

Sensitive to SWIR, indium gallium arsenide (InGaAs) sensors can spot manufacturing defects in solar cells, as seen in the close-up (right) of a 36-cell solar panel (left). Photo courtesy of Sensors Unlimited.

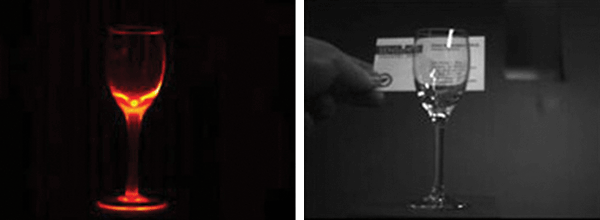

Doug Malchow, Sensors Unlimited’s manager of industrial business development, said SWIR is being applied in industrial settings to detect subsurface defects in silicon-based solar cells. Pharmaceutical manufacturers are using the technology to verify that finished products have the right mix of active and inactive ingredients. SWIR also is being used for hot glass bottle inspection, spotting defects that otherwise cannot be visualized.

“The SWIR camera can pick up the heating distribution in the thicker glass to observe cooldown problems and measure the dimensions of the bottle or detect slump, distortion and so on,” Malchow said.

The pixel size in such cameras has shrunk over the years. A decade ago, 40-µm-pitch pixels were state-of-the-art. Now the size is down to 12.5 µm. For comparison, visible-spectrum silicon image sensors used in today’s mobile phones have pixel sizes measuring about 1.5 µm.
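Because light collection scales with pixel area, the pitches quoted above differ more than the raw numbers suggest. The sketch assumes square pixels with identical fill factor and ignores microlenses and quantum-efficiency differences; the function name is hypothetical.

```python
def area_ratio(pitch_a_um: float, pitch_b_um: float) -> float:
    """How many times more area a square pixel of pitch a has than one
    of pitch b (area scales with the square of the pitch)."""
    return (pitch_a_um / pitch_b_um) ** 2


if __name__ == "__main__":
    print(f"40 um vs 12.5 um: {area_ratio(40.0, 12.5):.1f}x the area")
    print(f"12.5 um vs 1.5 um: {area_ratio(12.5, 1.5):.1f}x the area")
```

Going from 40 µm to 12.5 µm packs about 10 times as many pixels into the same area, while a 12.5 µm SWIR pixel still has roughly 70 times the area of a 1.5 µm phone pixel, consistent with Ahearn's point that very small pixels struggle to deliver industrial signal levels.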

On left, a false-color image of a hot wine glass, with SWIR signals calibrated to temperature, shows the hottest part of the glass to be about 3300 °C. On right, the hot glass appears transparent in the SWIR band. Photo courtesy of Sensors Unlimited.

The decrease in SWIR pixel size has put many more pixels in an array sensor, and that higher pixel count yields higher resolution. In linear sensors, it has produced somewhat smaller sensors and cameras, because the growth in pixel count has not fully offset the shrinking pixel size.
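The linear-sensor effect is simple to check: the active length of a one-row sensor is its pixel count times its pitch. The pixel counts below are hypothetical generations chosen for illustration, paired with the 40 µm and 12.5 µm pitches mentioned earlier.

```python
def linear_array_length_mm(pixel_count: int, pitch_um: float) -> float:
    """Active length of a one-row (linear) sensor: count x pitch."""
    return pixel_count * pitch_um / 1000.0


if __name__ == "__main__":
    # Hypothetical generations: pixel count doubles, pitch drops 40 -> 12.5 um.
    old = linear_array_length_mm(512, 40.0)
    new = linear_array_length_mm(1024, 12.5)
    print(f"512 px @ 40 um: {old:.2f} mm; 1024 px @ 12.5 um: {new:.2f} mm")
```

Doubling the pixel count while shrinking the pitch by a factor of 3.2 still leaves the newer sensor about 37 percent shorter, which in turn allows smaller optics and camera bodies.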

Looking at future applications, Malchow noted that IR can help with monitoring drug particle size and with chemical identification. Combined with information extracted from the visible spectrum, the technology could support more robust process control.

Achieving improved industrial control and outcomes does not depend solely on sensors. Novel sensors will help gather data in three dimensions, across a wide spectrum and at different polarizations, along with other information derived from the interaction of light and matter. But sensor technology is only the start, as what's done with an image after it's acquired is equally important.

Addressing this issue, Thomas Giallorenzi, senior director of science policy at OSA, said, “It’s two things actually: how to acquire data better and how to interpret it faster and get more information out of it. Behind every optical system these days is a computer. A lot of the value added is in the processing and how you do it.”