Nate Holmes, National Instruments

Vision algorithm development is, by its very nature, an iterative process. If employed correctly, certain tools and design practices can dramatically decrease development time and improve quality. To that end, National Instruments has applied a graphical-programming approach to designing vision systems, resulting in an architecture it deems so crucial that it now serves as the scaffolding for all of its vision software workflows.

Ask any number of vision integrators whether they can build an inspection system and they’ll say, “We should be able to do that, but we’ve got to try a few things. Can you give us some sample images or, better yet, sample parts?” This hands-on approach to vision system design is necessary because image processing is still much more of an art than it is a science: There is still no adequate process to simulate images based on sensor, lens, lighting and ambient conditions. As a result, good vision integrators have “vision labs,” allowing them to try out many different lighting/lens/environmental control combinations with various algorithmic approaches to achieve robust and reliable results.

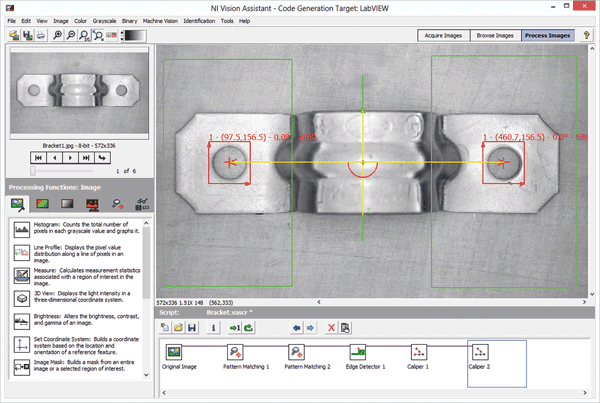

Configuration-based tools like the NI Vision Assistant allow for rapid exploration of boundary conditions when trying out different algorithmic approaches to a vision application.

At National Instruments Corp. (NI), we realized that our graphical-programming approach to software-based instrumentation also could be applied to vision system design. Subsequently, we identified five design practices deemed so essential for vision systems that we’ve incorporated them into NI’s vision software workflow.

Use an iterative exploratory design flow

With vision, you know up front that you will have to try several approaches to any task. Often, it’s not a matter of which approach will work but which will work best, and “best” differs from application to application. For some applications, speed is paramount; in others, it’s accuracy.

Given a set of application constraints, it is essential to explore the boundaries those constraints set up to understand what may be possible with different approaches. What are the biggest contributors to the total algorithm execution time? Can this time be significantly reduced by optimizing the algorithm, or would it be better to change the environmental constraints (e.g., different lighting, lenses or cameras) to generate better images that could remove preprocessing steps?

Sometimes, it will come down to a cost/benefit analysis. For example, should you spend extra time on algorithm development and upgrade the system’s processing ability, or should you instead invest in more lighting and new lenses? The important thing is to arrive at these decision points as quickly as possible, and that’s best accomplished by exploring the application’s boundary conditions as you iterate through possible design approaches.

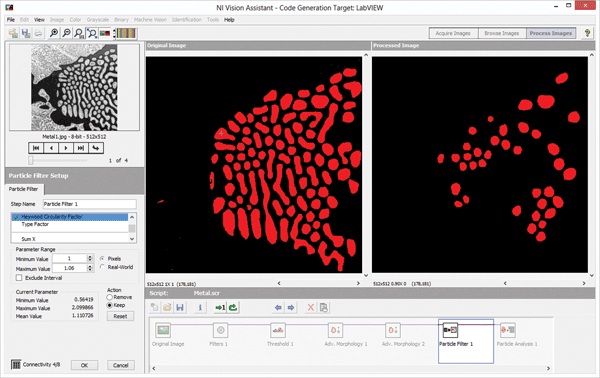

A side-by-side comparison of the original image and the processed image is a must for rapid algorithm development. Changes to numerous parameters, and their combined effects, can then be evaluated in real time.

Get immediate feedback and benchmarking

As an example, think about a WYSIWYG (What You See Is What You Get) text editor vs. hand-coding HTML. Almost every time, it’s faster and easier to get results using WYSIWYG. And for those cases where you can’t get the results you want, modern editing tools allow you to switch back and forth between the WYSIWYG editor and the underlying HTML to get things just right.

It’s the same with vision – seeing algorithm results in real time is a huge time-saver when you are using an iterative exploratory approach. What is the right threshold value? How big/small are the particles to reject with a binary morphology filter? Which image preprocessing algorithm – and with what parameters – will best clean up an image?
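To make the threshold and particle-size questions concrete, here is a minimal sketch of a parameter sweep on a tiny synthetic image. It is not Vision Assistant code: the image, the function names (`threshold`, `remove_small_particles`, `sweep`) and the use of a connected-component area filter as a stand-in for a binary morphology filter are all assumptions made for illustration.

```python
from collections import deque

def threshold(image, level):
    """Return a binary image: 1 where the pixel is >= level, else 0."""
    return [[1 if px >= level else 0 for px in row] for row in image]

def remove_small_particles(binary, min_area):
    """Drop 4-connected particles smaller than min_area; return (image, kept count)."""
    rows, cols = len(binary), len(binary[0])
    seen = [[False] * cols for _ in range(rows)]
    out = [[0] * cols for _ in range(rows)]
    kept = 0
    for r in range(rows):
        for c in range(cols):
            if binary[r][c] == 0 or seen[r][c]:
                continue
            # Flood-fill one particle and collect its pixels.
            seen[r][c] = True
            queue, particle = deque([(r, c)]), []
            while queue:
                y, x = queue.popleft()
                particle.append((y, x))
                for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1)):
                    if (0 <= ny < rows and 0 <= nx < cols
                            and binary[ny][nx] and not seen[ny][nx]):
                        seen[ny][nx] = True
                        queue.append((ny, nx))
            if len(particle) >= min_area:
                kept += 1
                for y, x in particle:
                    out[y][x] = 1
    return out, kept

# Hypothetical 6x8 grayscale image: a bright 3x3 part, one bright noise
# pixel and a dim two-pixel smudge on a dark background.
IMAGE = [
    [10, 10, 10, 10, 10, 10, 10, 10],
    [10, 200, 200, 200, 10, 10, 10, 10],
    [10, 200, 200, 200, 10, 10, 220, 10],
    [10, 200, 200, 200, 10, 10, 10, 10],
    [10, 10, 10, 10, 10, 90, 90, 10],
    [10, 10, 10, 10, 10, 10, 10, 10],
]

def sweep(image, levels, min_area):
    """Return {level: particles kept after filtering} for each threshold level."""
    return {lvl: remove_small_particles(threshold(image, lvl), min_area)[1]
            for lvl in levels}

if __name__ == "__main__":
    for level, count in sweep(IMAGE, [50, 128, 210], min_area=2).items():
        print(f"threshold={level:3d} -> {count} particle(s) kept")
```

On this image, a low threshold lets the smudge through, a mid-range threshold isolates the part, and a high threshold loses everything. That is the kind of trade-off that an interactive tool lets you see at a glance rather than compute by hand.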

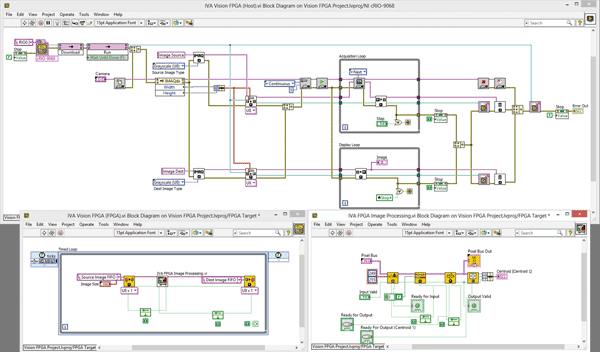

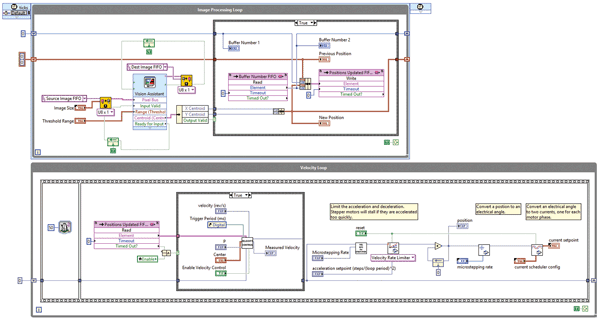

Example of LabVIEW graphical code generated by the Vision Assistant configuration-based algorithm prototyping tool. The top window contains the real-time code for acquiring and transferring an image to the FPGA. The bottom left window is the FPGA infrastructure. The bottom right window is the FPGA implementation of the algorithm shown in the configuration-based software in Image 3.

The ability to benchmark an algorithm’s performance on a sample set of images is critical to evaluating the feasibility of a particular design at different points in development. If you know that you have to complete your inspection within 90 ms, you can immediately reject algorithm approaches that cannot be executed in that general time frame. The key is to determine the feasibility as soon as possible and before putting in all the work to string together a bunch of subcomponents in a vision algorithm that will work beautifully but will take 500 ms to complete. Benchmarking can be tricky; you develop on one type of processor, but you could deploy on another. Would upgrading the processor on the deployed system be faster than trying to optimize the algorithm? Could the acquired images be improved through better lighting to negate some preprocessing steps? Additionally, there is the entirely different approach of evaluating alternative processing platforms.
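The feasibility check described above can be sketched as a small timing harness. This is only an illustration under stated assumptions: `benchmark`, `feasible`, the two candidate functions and the synthetic sample images are hypothetical names and data, and the slow candidate simply sleeps to mimic an expensive pipeline. Timings taken on a development PC will not match the deployment processor, so treat the numbers as a first filter rather than a guarantee.

```python
import time

def benchmark(algorithm, images, repeats=3):
    """Return the worst per-image time in ms, using the best of `repeats` runs per image."""
    worst_ms = 0.0
    for image in images:
        best = float("inf")
        for _ in range(repeats):
            start = time.perf_counter()
            algorithm(image)
            best = min(best, time.perf_counter() - start)
        worst_ms = max(worst_ms, best * 1000.0)
    return worst_ms

def feasible(candidates, images, budget_ms):
    """Map each candidate name to (worst_ms, fits_budget)."""
    report = {}
    for name, algorithm in candidates.items():
        worst_ms = benchmark(algorithm, images)
        report[name] = (worst_ms, worst_ms <= budget_ms)
    return report

# Hypothetical sample set: two 64x64 synthetic grayscale images.
SAMPLE_IMAGES = [[[i % 256 for i in range(64)] for _ in range(64)] for _ in range(2)]

def fast_candidate(image):
    """Stand-in for a cheap algorithm: count pixels above a fixed level."""
    return sum(px > 128 for row in image for px in row)

def slow_candidate(image):
    """Stand-in for an expensive pipeline: same result, plus 120 ms of simulated work."""
    time.sleep(0.12)
    return fast_candidate(image)

if __name__ == "__main__":
    candidates = {"fast": fast_candidate, "slow": slow_candidate}
    for name, (ms, ok) in feasible(candidates, SAMPLE_IMAGES, budget_ms=90.0).items():
        print(f"{name}: worst case {ms:.2f} ms -> {'keep' if ok else 'reject'}")
```

Taking the best of several runs per image reduces noise from the operating system, while taking the worst case across images reflects that the inspection deadline has to hold for every part, not just the average one.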

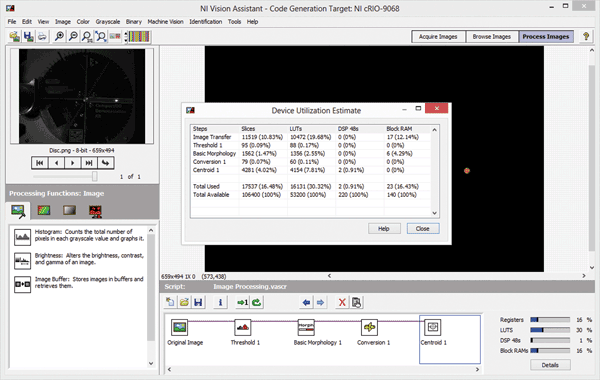

Developing an algorithm in a configuration-based tool for FPGA targets with integrated benchmarking cuts down on time spent waiting for code to compile and accelerates development.

Explore deployment to alternative processing platforms (GPUs, FPGAs)

If, through exploring the boundary conditions of a problem, you find that all the approaches you try take too long and/or that it would be prohibitively expensive to improve the images with a different component selection, you may want to consider using an alternative processing platform. Field-programmable gate arrays (FPGAs) and graphics processing units (GPUs) give you the ability to accelerate algorithms by using massively parallel processing and fundamentally different processing architectures. The trade-off is increased hardware cost and software coding complexity on these platforms.

The speed advantages of implementing processing-intensive tasks on an FPGA or GPU can be huge – greater by orders of magnitude. However, programming such devices also can be an order of magnitude more complex and more time-intensive. When developing on your PC, you can immediately compile and test your algorithm; these alternative processing platforms, by contrast, require time-intensive compilation. Because vision development is such an iterative process, compilation time can really add up and slow down a project. The good news is that advances are being made both to reduce the complexity of writing code for these devices through high-level synthesis tools and to decrease the compilation times required to try out a design on hardware.

NI provides FPGA benefits through high-level graphical programming to enable closed-loop control. For example, consider the control of a servomotor based on image processing results.

As an example of cutting down on the number of compilations required to get to a final design, NI implemented the ability to generate code for an FPGA target from the configuration-based tool, Vision Assistant. With it, you can select from a variety of FPGA targets, and your algorithm will be benchmarked against the selected target, so that before you compile any code you know how the algorithm will perform and how much of the FPGA’s finite resources it will need.

Enable the switch from rapid prototyping to implementation

The workflow thus far has been exploratory and iterative; it has been about discovering the best vision algorithm(s) to implement by exploring boundary conditions and benchmarking results with configuration-based vision software. Now, the challenge is to implement the proven approach on the selected deployment platform. Depending on the application, this task can range from very simple to quite complex.

Many configuration-based software packages can also handle deployment to selected platforms such as vision sensors and smart cameras for “pure” vision inspection systems, which do not require integration and synchronization with other subsystems in a larger system. If your application fits this description and falls within the boundaries of what these packages are designed for, that is the best way to go. NI’s Vision Builder for Automated Inspection software package, which incorporates the algorithm development functionality of Vision Assistant, is designed for such scenarios. It includes a state machine for looping and branching decision making, a customizable user interface and configurable input/output (I/O) steps.

Many applications, however, do not conform to the use cases for which these software packages were designed. When building a system that includes not only vision but also motion control and advanced I/O, you want a full-fledged development environment. This is especially true if you have to close the loop between various subsystems and the vision system, or if you need to interact with a supervisory control and data acquisition (SCADA) system, correlate images with I/O, save and present results, interact via a Web server, implement special security features, or perform any one of dozens of other common automation tasks.
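The looping and branching state machine mentioned above for configuration-based inspection packages can be pictured with a minimal sketch. This is not how Vision Builder for Automated Inspection is implemented; the states, the `run_inspection` function and the width-tolerance check are hypothetical, chosen only to show a loop over parts with a pass/fail branch.

```python
from enum import Enum, auto

class State(Enum):
    ACQUIRE = auto()
    INSPECT = auto()
    ACCEPT = auto()
    REJECT = auto()
    DONE = auto()

def run_inspection(parts, inspect):
    """Step through the states for each part; return a list of (part, verdict)."""
    pending = list(parts)
    results = []
    state, part = State.ACQUIRE, None
    while state is not State.DONE:
        if state is State.ACQUIRE:
            # Loop: fetch the next part, or finish when none remain.
            if pending:
                part = pending.pop(0)
                state = State.INSPECT
            else:
                state = State.DONE
        elif state is State.INSPECT:
            # Branch: the inspection result selects the next state.
            state = State.ACCEPT if inspect(part) else State.REJECT
        else:
            # ACCEPT or REJECT: record the verdict, then loop back.
            results.append((part, state.name.lower()))
            state = State.ACQUIRE
    return results

def width_in_tolerance(width_mm, nominal=10.0, tol=0.1):
    """Hypothetical inspection step: is the measured width within tolerance?"""
    return abs(width_mm - nominal) <= tol

if __name__ == "__main__":
    for part, verdict in run_inspection([10.0, 10.4, 9.95], width_in_tolerance):
        print(f"width {part} mm -> {verdict}")
```

In a real system, the ACCEPT and REJECT states are where I/O steps such as firing an ejection mechanism or logging an image would hook in, which is one reason the I/O requirements are worth pinning down early.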

The key is being able to switch back and forth between the configuration-based prototyping platform and the development environment needed to implement the full system architecture as fast as possible. Possibilities include code scripting capabilities from the configuration-based software, or the ability to call on an algorithm that was developed in a configuration-based software program within the development environment. Our solutions strive to provide both options to accelerate either approach for developers, as well as to provide an FPGA code scripting option – with all associated infrastructure – for image transfer and management.

Keep I/O and triggering requirements in mind

You must be able to make an informed decision as to what type of action to take based on the results of the vision algorithm. Do you need to return intermediate results and log images, or just return a final result? Take these requirements into consideration when exploring the boundary conditions of the problem to ensure you don’t design yourself into a corner with any particular software approach or hardware choice for implementing your algorithm design. It can be extremely frustrating to finish the vision processing piece of the application and then to get stuck trying to synchronize image acquisition with I/O, or effectively pass results to other parts of the system.

FPGAs are particularly useful when you have to meet timing for a demanding, high-speed application – such as closing the loop between vision and motion or another I/O source, e.g., a trigger or an ejection mechanism. Because an FPGA’s logic is implemented in hardware, cycle times for closed-loop control can be extremely fast. FPGAs are also the platform of choice when implementing various types of I/O because they are low-power embedded devices and have a more flexible interface to I/O than do GPUs or CPUs.

By nature, vision algorithm development is an iterative process, and if certain tools and design practices are employed correctly, you can dramatically decrease development time and improve quality. Be sure to use an iterative design flow that gives you immediate feedback and benchmarking. Catch failed approaches early and consider the ways that hardware (e.g., processing platform and I/O requirements) can impact your design. Also, choose a platform that allows for efficient and rapid migration from a prototyping to a deployment platform. If these considerations are taken into account, you are on your way to an efficiently designed, robust, well-performing vision system.

Meet the author

Nate Holmes is the R&D group manager for Vision and Motion at National Instruments Corp. in Austin, Texas.