Daniel C. McCarthy, Senior News Editor

In the real world, most citrus packers still inspect and grade fruit manually. Economic and technical considerations limit machine vision solutions at the component level. Thus, vision finds application only in large packing operations that are able to afford the installation of a completely new, fully integrated production line.

Not so our hypothetical citrus packer, who exists not to express the realities of a particular industry, but rather to illustrate how vision suppliers approach the challenge of distinguishing color variation from blemishes, and punctures from stem areas. Oranges arrive at our hypothetical orange packer and are unloaded, washed, de-stemmed and conveyed in single file on rollers that continuously rotate the fruit. There are 10 of these lanes, each carrying oranges past the camera at a rate of 10 per second.
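The lane figures above set the timing budget for any proposed system. The short calculation below is only a sketch of that arithmetic. It assumes evenly spaced fruit and one inspection station per lane, neither of which the scenario states.

```python
# Timing budget implied by the scenario: 10 lanes, 10 oranges per second each.
LANES = 10
ORANGES_PER_SEC_PER_LANE = 10


def time_budget_ms(rate_per_sec: float) -> float:
    """Milliseconds available per orange on one lane (assumes even spacing)."""
    return 1000.0 / rate_per_sec


total_rate = LANES * ORANGES_PER_SEC_PER_LANE
budget = time_budget_ms(ORANGES_PER_SEC_PER_LANE)
print(f"{total_rate} oranges/s overall, {budget:.0f} ms per orange per lane")
# prints: 100 oranges/s overall, 100 ms per orange per lane
```

Image capture, processing and the grading decision for each orange must all fit inside that per-lane window.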

The cameras must perform primary grading to ensure that no more than 7.5 percent of an orange's surface is damaged or discolored, and that small punctures are not mistaken for the stem area and vice versa. The stem area takes up less than 2 percent of the surface.
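The grading rule can be written down as a simple check. The sketch below assumes an upstream segmentation step has already labeled each marked region and measured its area. The labels, the region list and the function names are hypothetical and serve only to illustrate why a misread stem matters.

```python
MAX_DEFECT_FRACTION = 0.075  # scenario limit: 7.5 percent of the surface


def defect_fraction(regions, surface_area):
    """Fraction of the surface that is damaged; the stem area is not a defect.

    regions: iterable of (label, area) pairs from a hypothetical segmenter.
    """
    if surface_area <= 0:
        raise ValueError("surface_area must be positive")
    damaged = sum(area for label, area in regions if label != "stem")
    return damaged / surface_area


def passes_primary_grading(regions, surface_area):
    return defect_fraction(regions, surface_area) <= MAX_DEFECT_FRACTION


# Areas in percent of a surface normalized to 100.
correct = [("stem", 1.5), ("blemish", 6.0), ("puncture", 0.5)]
stem_misread = [("puncture", 1.5), ("blemish", 6.0), ("puncture", 0.5)]

print(passes_primary_grading(correct, 100.0))       # True  (6.5 percent)
print(passes_primary_grading(stem_misread, 100.0))  # False (8.0 percent)
```

In this made-up case, mistaking the stem for a puncture is enough to push a passable orange over the limit.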

After the primary grading by machine vision, the fruit will be treated with fungicide and wax and will be graded manually before it is sorted by size.

The Perfect Orange

It sounds simple, but attached to every solution returned by contributing integrators were additional questions, qualifications and alternatives.

Prince SL of Barcelona, Spain, proposed monochrome progressive-scan cameras and red-light illumination to perform the primary grading function.

Vision Machines Inc. of North Reading, Mass., offered several solutions, each with a higher degree of performance. Options ranged from the use of standard monochrome cameras and color filters to the application of progressive-scan cameras with RGB color output.

Colour Vision Systems, headquartered in Bacchus Marsh, Victoria, Australia, emphasized that packers interested in applying vision to inspect fruit can reduce both the number of engineering problems and the associated costs by installing a complete, fully integrated inspection system rather than trying to paste new cameras over old production lines. The company proposed its own turnkey system, combining conveyors with digital cameras, high-performance processors and neural nets.

A red light on defects

Prince's proposed inspection system integrates:

• A monochrome progressive-scan camera from Pulnix America Inc. of Sunnyvale, Calif.

• A Viper series frame grabber and pixel processor, both from Coreco Inc. of St.-Laurent, Quebec, Canada.

• WiT software available from Logical Vision, a division of Coreco.

• A red-filtered illumination source.

The two most important considerations in applying vision to citrus inspection are the speed of the conveyor and what types of defect the system needs to detect, said Keyvan Eshghy, supervisor of Prince's technical department. Final component selection is driven by price, service, and hardware and software performance.

"Each and every aspect depends on the others," he said. "We have chosen the hardware and software for this article basically because its Spanish supplier offers a very good client service, and because the Coreco hardware ... supports a wide range of cameras from other manufacturers, and changes are very easy to implement."

"For the discoloration process, we assume that the [visual] image obtained will be sufficient to detect this error," Eshghy said, adding that the greater challenge is high-speed detection of punctures, which may be small but deep and can be mistaken for the stem area.

The pivot on which Prince's solution turns is a custom illumination system: a 100 × 100-mm optical glass dome coated with a white opaline layer. The dome's interior evenly diffuses light from high-intensity discharge lamps filtered to emit at red wavelengths. The lamps' intensity helps compensate for the effects of conveyor speed on imaging, and the red light increases contrast to improve detection of punctures.

This also helps explain Eshghy's selection of a monochrome camera: "The red light may give us more contrast between the good and bad parts of the orange, but for this we have to use black-and-white cameras."

In principle, red light will cause punctures to appear black in camera images and discolored sections to appear lighter than orange parts. "We have not been able to test other wavelengths like blue or green, but we think that white light is not recommendable in this application because it offers less contrast between good and bad parts," said Eshghy.
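If red light behaves as described, a monochrome image could be sorted into three classes with two gray-level thresholds. The sketch below illustrates that idea only. The threshold values are hypothetical, not Prince's settings, and thresholding alone would not separate a dark stem area from a dark puncture.

```python
# Hypothetical 8-bit gray-level thresholds; real values would need calibration.
DARK_MAX = 40    # at or below this: candidate puncture (appears black)
LIGHT_MIN = 200  # at or above this: candidate discoloration (appears lighter)


def classify_pixel(gray: int) -> str:
    if gray <= DARK_MAX:
        return "puncture"
    if gray >= LIGHT_MIN:
        return "discolored"
    return "sound"


def count_classes(image):
    """Count pixels per class in a 2-D list of 8-bit gray levels."""
    counts = {"puncture": 0, "discolored": 0, "sound": 0}
    for row in image:
        for gray in row:
            counts[classify_pixel(gray)] += 1
    return counts


sample = [
    [120, 120, 10],
    [210, 120, 120],
]
print(count_classes(sample))
# prints: {'puncture': 1, 'discolored': 1, 'sound': 4}
```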

Potential pitfalls riddle our hypothetical application, observed Marc Landman of Vision Machines. These include the problems associated with imaging wet oranges on a possibly reflective metallic conveyor belt, unwarping images of curved surface patches and achieving high-speed inspection that provides high spatial and color resolution to detect surface blemishes and small punctures.

Performance and compatibility

Also, Landman said, in order to propose an operable vision system for our application, he worked from the assumption that the conveyor rotates oranges on two axes, so that the entire surface is visible to the camera. Otherwise, he said, the computer cannot accurately gauge what percentage of the surface is blemished.

Further, Landman limited his focus to inspection of dyed or uniformly colored oranges. One inexpensive solution he outlined echoed suggestions from Prince in the use of standard monochrome cameras with color filters and a color space based on hue, saturation and luminance.

However, if our packer requires higher performance, Vision Machines suggested the use of color cameras and traditional color analysis methods such as thresholding or high-speed table lookup on individual pixels. This solution can use either:

• An XC-711 camera from Sony Electronics Corp. in Park Ridge, N.J., that provides 768 × 493-pixel resolution from a single CCD, Y/C output and asynchronous reset, or

• An HV-C20 camera from Hitachi Denshi America Ltd. in Woodbury, N.Y., that provides 768 × 494-pixel resolution from a three-CCD sensor, Y/C output and external trigger field.

Additional components include:

• An Imaq PCI-1411 composite color frame grabber from National Instruments in Austin, Texas, providing either composite or Y/C input and HSL (hue, saturation, luminance) color space conversion. The board provides 24-bit RGB or HSL output and also allows partial image scanning with programmable region of interest.

• A white-light LED illumination unit from Northeast Robotics in Weare, N.H.

• National Instruments' Imaq Vision HSL color matching software.

This system is cost-effective and has the practical advantage that camera, frame grabber and off-the-shelf vision software are fully compatible with one another, said Landman.
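The per-pixel table lookup and HSL conversion mentioned for this system can be sketched in a few lines. The example uses Python's standard `colorsys` module rather than the Imaq Vision software. The hue, luminance and saturation limits are hypothetical, as is the choice to quantize each channel to 4 bits to keep the table small.

```python
import colorsys

# Hypothetical acceptance window for "normal" orange peel.
HUE_MIN, HUE_MAX = 15 / 360, 45 / 360
LUM_MIN, SAT_MIN = 0.25, 0.40

BITS = 4            # bits kept per channel (assumption; 4096-entry table)
LEVELS = 1 << BITS
SHIFT = 8 - BITS


def _is_normal(r, g, b):
    # colorsys returns (hue, luminance, saturation), each in the range 0..1.
    h, l, s = colorsys.rgb_to_hls(r / 255, g / 255, b / 255)
    return HUE_MIN <= h <= HUE_MAX and l >= LUM_MIN and s >= SAT_MIN


def build_lut():
    """Precompute the HSL test once for every quantized RGB value."""
    scale = 255 // (LEVELS - 1)  # maps 0..15 back onto 0..255
    lut = bytearray(LEVELS ** 3)
    for rq in range(LEVELS):
        for gq in range(LEVELS):
            for bq in range(LEVELS):
                idx = (rq << (2 * BITS)) | (gq << BITS) | bq
                lut[idx] = int(_is_normal(rq * scale, gq * scale, bq * scale))
    return lut


def classify(lut, r, g, b):
    """Classify one 8-bit RGB pixel with a single table lookup."""
    idx = ((r >> SHIFT) << (2 * BITS)) | ((g >> SHIFT) << BITS) | (b >> SHIFT)
    return "normal" if lut[idx] else "off-color"


LUT = build_lut()
print(classify(LUT, 255, 140, 0))  # normal
print(classify(LUT, 0, 160, 0))    # off-color
```

The color conversion runs once, when the table is built. At inspection time each pixel costs only a few shifts and one array read.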

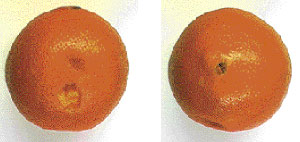

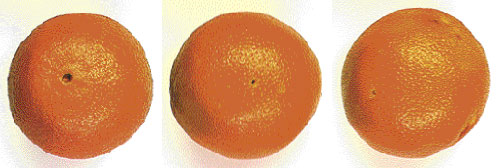

High-speed inspection of oranges requires high spatial and color resolution to distinguish damaged surface areas. Potential complications include imaging wet oranges on a reflective metallic conveyor belt, translating curved surface patches accurately into two-dimensional data and finding a method to observe 100 percent of the surface area. All photos courtesy of Vision Machines Inc.

Rotation and perspective both alter the apparent size of blemishes in a two-dimensional image. Accurately gauging how much surface area is damaged requires predictable and uniform rotation of fruit, additional vision hardware to unwarp images of curved surface patches or, preferably, both.
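One way to picture the unwarping problem is to treat the orange as a sphere seen from far away. A pixel near the edge of the silhouette then covers more peel than a pixel at the center, by a factor of 1/cos θ, where θ is the angle between the surface normal and the viewing direction. The sketch below applies that correction. The spherical model, the orthographic view and the edge cutoff are all simplifying assumptions, not a description of Vision Machines' unwarp module.

```python
import math


def corrected_area(pixels, center, radius, min_cos=0.2):
    """Estimate the surface area (in center-pixel units) of a blemish.

    pixels: (x, y) image coordinates flagged as blemish.
    center, radius: silhouette of the orange in the image, in pixels.
    min_cos: pixels viewed more obliquely than this are skipped, since
             the correction becomes unreliable near the edge (assumption).
    """
    cx, cy = center
    total = 0.0
    for x, y in pixels:
        rho2 = ((x - cx) ** 2 + (y - cy) ** 2) / radius ** 2
        if rho2 >= 1.0:
            continue  # outside the silhouette
        cos_theta = math.sqrt(1.0 - rho2)
        if cos_theta < min_cos:
            continue  # too close to the edge to trust
        total += 1.0 / cos_theta
    return total


center, radius = (100, 100), 100
print(corrected_area([(100, 100)], center, radius))  # 1.0 at the center
print(round(corrected_area([(160, 100)], center, radius), 3))  # about 1.25
```

Under this model, the same one-pixel mark represents about 25 percent more peel when it sits 60 percent of the way to the edge.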

Still higher-performance components are available, but possible trade-offs include compatibility problems among the camera, frame grabber and software. If our citrus packer were willing to explore this option, Landman offered two suggestions for RGB color output cameras:

• Sony's DXC9000 progressive-scan analog camera, which provides 659 × 494-pixel resolution from three CCDs, or

• Hitachi's KPF-100C progressive-scan digital camera, which provides 1360 × 1034-pixel resolution from a single chip.

He noted that frame grabber choices compatible with high-performance RGB cameras are limited and that compatible color inspection software also may not be widely available. Because the compatibility of the camera with its processing platform is vital, Landman included several frame grabber options:

• For Sony's camera, he suggested the analog DT3154 Color PCI frame grabber from Data Translation Inc. in Marlborough, Mass., or the IC-RGB board from Imaging Technology in Bedford, Mass.

• For Hitachi's camera, he suggested National Instruments' Imaq PCI-1424 digital frame grabber.

Other components for Vision Machines' high-performance system include:

• An unwarp module to facilitate proper viewing of the rounded surface patches on the oranges.

• High-frequency fluorescent lights to provide soft, diffuse illumination at various color temperatures.

• Crossed polarizers to help minimize specular reflections from the conveyor surface.

Landman specified progressive-scan technology for both camera selections because it eliminates the need for strobed light sources, for which timing can be an issue. This, however, requires high-intensity lamps to provide sufficient light at the cameras' shutter speed. Three-chip cameras are preferable to single-chip cameras, he added, because they deliver better resolution and color rendition.

Landman also recommended RGB cameras and frame grabbers to provide a clean separation of color and spatial information. Alternatively, more widely available Y/C (S-video) systems could produce satisfactory results. He noted, however, that a composite color signal format should be avoided, because it produces images with substantially poorer color fidelity and reproducibility.

The inspection must detect small punctures without confusing them with the stem area. Because the citrus packer does not specify the size of the smallest allowable surface defect, Landman believes a standard 640 × 480-pixel resolution image should suffice. At this resolution, surface defects approximately 1 percent in size within a 4-in. field of view -- roughly 40 pixels in diameter -- would be clearly detectable if they possess sufficient contrast.

Inspection of multicolored fruit is possible as well, said Landman, but presents tougher challenges. Unlike inspection of dyed oranges, traditional color analysis methods based on thresholding and analysis of the individual color planes will not work well for multicolored fruit. These oranges require a system that will analyze the combined RGB color distributions. He recommended Way-2C software distributed by Ronald A. Massa & Associates in Cohasset, Mass., which provides pattern recognition algorithms designed to handle complex color distributions based on statistical histogram matching.
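To make the idea of matching combined RGB distributions concrete, the sketch below builds a coarse three-dimensional color histogram and compares two histograms by their intersection. This is a generic textbook technique chosen for illustration. It is not the Way-2C algorithm, and the bin count and sample pixels are hypothetical.

```python
from collections import Counter

BINS = 4  # bins per channel (assumption); 4 x 4 x 4 = 64 joint color cells


def rgb_histogram(pixels):
    """Normalized joint RGB histogram of a list of 8-bit (r, g, b) pixels."""
    if not pixels:
        raise ValueError("need at least one pixel")
    step = 256 // BINS
    counts = Counter((r // step, g // step, b // step) for r, g, b in pixels)
    n = len(pixels)
    return {cell: c / n for cell, c in counts.items()}


def intersection(h1, h2):
    """Histogram intersection: 1.0 for identical distributions, 0.0 for disjoint."""
    return sum(min(p, h2.get(cell, 0.0)) for cell, p in h1.items())


# A reference fruit that is mostly orange with a naturally green patch.
reference = rgb_histogram([(250, 140, 20)] * 3 + [(120, 180, 40)])
same_mix = rgb_histogram([(120, 180, 40)] + [(250, 140, 20)] * 3)
brown_patch = rgb_histogram([(250, 140, 20)] * 2 + [(60, 40, 20)] * 2)

print(intersection(reference, same_mix))     # 1.0
print(intersection(reference, brown_patch))  # 0.5
```

Because the red, green and blue values are binned jointly rather than plane by plane, a fruit with the expected mix of orange and green scores high while one with an unexpected color scores low.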

No assembly required

Colour Vision Systems is one of the few vision integrators targeting the citrus packing industry with complete turnkey systems able to provide any combination of weight, color, size, density and blemish sorting. Its systems singulate the fruit, rotate it beneath the camera, present it for weighing and deliver it to outlets for packaging. Software allows packers to deliver fruit to multiple drops by count, by weight and by volume.

A representative system from Colour Vision, designed to deliver stable color results across multiple lanes, integrates:

• Digital cameras based on Sony CCDs.

• High-frequency fluorescent lamps.

• Vision processors based on Coldfire technology from Motorola Semiconductor Products in Austin, Texas.

• Colour Vision's customized conveyor system.

• Colour Vision's proprietary Windows-based software, which includes macros that allow the program to evolve.

The conveyor enables six views of each rotating orange, four from overhead and two reflected by mirrors. Viewing 100 percent of an orange's surface area is not the hardest part, said Charles Esson, a manager of research and development for Colour Vision. The biggest issue is combining the images, which requires assumptions on how well the conveyor is rotating the fruit.

"It is a balancing act. Too few images, and you have to use the edges of the image; too many, and the errors caused by imperfect rotation increase," he said, adding that this approach precludes use of a line-scan camera or any other method that uses small sections out of many images.

The camera, able to supply pictures on demand at up to 10 fps, uses 8-bit RGB color. The human eye controls the intensity of light on the retina by expanding or contracting the iris, explained Esson. "Cameras have none of this. All we can do is improve its color stability, remove the analog errors and improve the accuracy," he said.

Colour Vision's camera transfers four channels of picture detail -- red, green, blue and near-infrared -- each 8 bits wide, packed into a single 32-bit "word." Practical use of near-infrared imaging applies to darker fruit, said Esson, but research may expand its applicability to other produce.
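Packing four 8-bit values into one 32-bit word is a standard bit-shifting exercise, sketched below. The byte order shown, with near-infrared in the top byte, is an assumption made for illustration. The article does not say how Colour Vision orders the channels.

```python
def pack_pixel(r: int, g: int, b: int, nir: int) -> int:
    """Pack four 8-bit channels into one 32-bit word (assumed order: NIR, R, G, B)."""
    for value in (r, g, b, nir):
        if not 0 <= value <= 255:
            raise ValueError("each channel must fit in 8 bits")
    return (nir << 24) | (r << 16) | (g << 8) | b


def unpack_pixel(word: int):
    """Recover (r, g, b, nir) from a word produced by pack_pixel."""
    return (
        (word >> 16) & 0xFF,
        (word >> 8) & 0xFF,
        word & 0xFF,
        (word >> 24) & 0xFF,
    )


word = pack_pixel(255, 140, 0, 64)
print(hex(word))           # 0x40ff8c00
print(unpack_pixel(word))  # (255, 140, 0, 64)
```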

Each camera delivers images to two 5307 Coldfire vision processors combined with hardware to perform color set lookup and color space translations. The devices use on-chip processing to generate millions of instructions per second at a low energy cost. "That is, it runs like the clappers, yet is as cool as a cucumber," said Esson. Motorola's chip also enables efficient encoding of neural nets to classify the type of blemish, for sizing fruit and for color sorting.

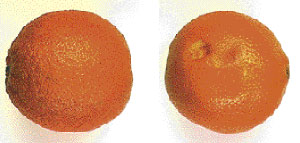

Like surface blemishes and bruises, punctures compromise an orange's shelf life and overall quality. But the minimal surface area these wounds can occupy makes automated detection difficult. One possible solution captures monochrome images under red light to increase contrast and highlight small flaws such as punctures.

Successful blemish sorting of oranges comes down to solving one big problem: the correct classification of the stem and navel. To develop its systems, the company captured thousands of images and had computer programs classify the marks. Researchers then reviewed each picture to check the software's accuracy and refine the system, which correctly classifies the stem about 90 percent of the time.

Esson, speaking from experience, concluded two things about automated blemish sorting: "It all costs a lot of money, and humans are very good at it -- well, we think we are, anyway -- and the computer has to perform how we think, right or wrong."