Real-time 3D volumetric displays are finding applications in the medical industry, but technical challenges abound and need to be addressed for both doctors and patients to benefit from this technology.

KRIŠS OSMANIS AND ILMARS OSMANIS, LIGHTSPACE TECHNOLOGIES INC.

Multi-planar volumetric imaging systems, which produce 3D volume renderings from 2D projections, are finding a number of applications in aerospace and defense. However, the medical industry is particularly ripe for this technology because of the commonplace production of 3D scans by volumetric systems, such as CT, magnetic resonance imaging (MRI) and ultrasound. While the technology is advancing, its value to medical professionals is diminished if technical challenges, such as those relating to multi-planar image generation and rendering, real-time volumetric video transfer and optical system architecture, are not overcome.

Fortunately, these technical challenges are not insurmountable. Overcoming them starts with an understanding of two key technology elements: a screen with real physical depth, also called a multi-planar optical element (MOE), and a projection system. An MOE consists of a stack of air-spaced optical shutters; the spacing between the shutters, combined with the number of shutters, sets the physical depth of the MOE.

Each optical shutter can switch between two states: transparent or scattering. The MOE for LightSpace Technologies Inc.’s volumetric display imaging system, for example, uses liquid crystal shutters that are based on an electrically induced, transient scattering mode technology (Figure 1). A dedicated electronic controller generates the required voltage waveforms and drives the MOE synchronously with the projection system.

Figure 1. A LightSpace Technologies Inc. real-time volumetric 3D imaging technology demonstration session. Courtesy of LightSpace Technologies.

The projection system projects 2D images into the MOE. Each 2D image is a slice of the volumetric scene to be shown inside the MOE. The projection is synchronized with the MOE so that at any given moment all but one of the optical shutters are in a transparent state, and the projected image can be seen on the one shutter that is in the scattering state. After a set interval, the next shutter is switched to its scattering state, the previous shutter returns to its transparent state, and the next 2D slice of the volumetric image is projected.
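The sequencing described above can be sketched as a simple schedule generator. This is only an illustrative model, not the LightSpace controller logic: the function name `shutter_schedule` and the state labels are assumptions made for this example.

```python
from typing import Iterator, List, Tuple


def shutter_schedule(num_layers: int,
                     num_volumes: int = 1) -> Iterator[Tuple[int, List[str]]]:
    """Yield (slice_index, shutter_states) for every projected 2D slice.

    Exactly one shutter is scattering at a time; all others are transparent.
    The names and state labels are hypothetical, chosen for illustration.
    """
    if num_layers < 1:
        raise ValueError("the MOE needs at least one shutter")
    for _ in range(num_volumes):
        for active in range(num_layers):
            states = ["scattering" if i == active else "transparent"
                      for i in range(num_layers)]
            # The projector would show slice `active` while this state holds.
            yield active, states


if __name__ == "__main__":
    for slice_index, states in shutter_schedule(num_layers=4):
        print(slice_index, states)
```

Running the generator for a four-layer MOE steps the scattering state through shutters 0 to 3 once per volume, which is the pattern the projector has to follow slice by slice.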

To achieve flicker-free perception of the volumetric image in the MOE, the projection system must be capable of refreshing the whole volumetric scene (one 3D frame, comprising all depth layers) at least 25 times per second. Consequently, it must show at least 25 × N 2D slices per second, where N is the number of depth layers. Some of the issues that have kept volumetric imaging technologies from being widely used relate to real-time integration into the system, such as flicker-free volumetric image reconstruction and a reasonable volume refresh rate.

Users are accustomed to the most common video resolution and refresh standards, such as the 1080p60 format (1920 × 1080 pixel resolution and 60-Hz transfer rate), in which no flickering is present and the image is updated smoothly. Such standards have also been adopted by the medical industry. For example, diagnostic displays with up to 5 MP (2560 × 2048 pixel resolution) are available. In contrast, the multi-planar volumetric imaging system can be characterized by three rates:

• 3D volumetric frame rendering rate: the volumetric image frame buffer update rate at the imaging workstation.

• 3D volumetric frame dataset transfer rate: how many volumetric image frames per second are transferred to the volumetric display.

• 3D volumetric image optical refresh rate: the rate at which the MOE and projection system optically refresh the reconstructed volumetric image.

Image generation and rendering

One of the visualization system’s technical challenges is the generation of multi-planar volumetric image slices. This challenge is acute in medical imaging, which tends to be slice-oriented. Multi-planar technology consists of not only the multiple optical shutters, referred to as the “multiple depth planes,” but also the image content, or prepared slices, that will be reconstructed in the multi-planar optical element. Rendering a 3D scene from vertices for display in conventional 2D form is a basic computer graphics problem that is solved by using DirectX or OpenGL, two competing application programming interfaces, and results in a 2D projection view of the 3D scene. This rendering step is important for obtaining naturally perceivable 3D volumetric images.

The reconstruction of a volumetric scene requires 2D slices of the 3D scene. Slices can be obtained by several methods. Examples include:

• Drawing a volumetric scene and slicing it at the required depth locations. The result is the extraction of 2D images that form a multi-planar volumetric image.

• Employing OpenGL to manipulate near- and far-plane placement within the volumetric scene. Near- and far-plane placement can be manipulated to overlap as well, resulting in a transfer of more “depth” information to the depth slices, and to the MOE.
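As a rough illustration of the near- and far-plane approach, the sketch below computes one (near, far) pair per depth layer, with an optional overlap between neighboring slabs. The function `slice_planes` and its `overlap` parameter are assumptions made for this example; in practice each pair would be fed to the near and far arguments of a projection call (for instance `glOrtho` or `glFrustum` in legacy OpenGL) before rendering that layer.

```python
from typing import List, Tuple


def slice_planes(scene_near: float, scene_far: float, num_layers: int,
                 overlap: float = 0.0) -> List[Tuple[float, float]]:
    """Split [scene_near, scene_far] into one clipping slab per depth layer.

    `overlap` is a hypothetical tuning knob: the fraction of a slab's
    thickness by which each slab is extended into its neighbors.
    """
    if num_layers < 1 or scene_far <= scene_near:
        raise ValueError("need at least one layer and far > near")
    thickness = (scene_far - scene_near) / num_layers
    margin = thickness * overlap
    planes = []
    for i in range(num_layers):
        near = scene_near + i * thickness - margin
        far = scene_near + (i + 1) * thickness + margin
        # Clamp so that no slab reaches outside the overall scene volume.
        planes.append((max(near, scene_near), min(far, scene_far)))
    return planes


if __name__ == "__main__":
    # Four layers, as in the teapot example of Figure 2.
    print(slice_planes(1.0, 5.0, 4))
    print(slice_planes(1.0, 5.0, 4, overlap=0.25))
```

With `overlap=0.0` the slabs tile the scene exactly; a positive overlap lets geometry near a slab boundary contribute to both adjacent layers, which is one way to carry more depth information into each slice.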

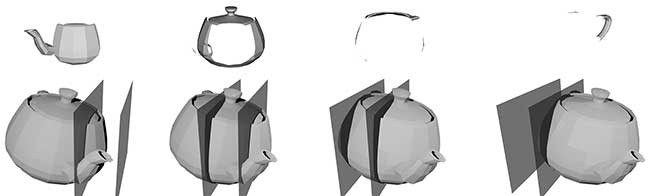

Depending on the slicing method, the reconstructed object might appear in the MOE with noticeable empty gaps between the physical depth layers. LightSpace Technologies has developed and patented specific multi-planar anti-aliasing algorithms to create a smooth volumetric image. The rendering and slicing of the volumetric scene is a time-consuming process if implemented using classic libraries and function calls. To improve the speed, additional parallel computing and processing power, such as Nvidia Corp.’s Compute Unified Device Architecture (CUDA) technology, can be used (Figure 2).

Figure 2. Volumetric image slicing using near-far plane manipulation. Here a teapot is sliced into four planes. The top row shows the 2D slices that form a volumetric object in a multi-planar optical element, while the bottom row shows a representation of near-far plane locations from which to extract the corresponding depth slice. Courtesy of LightSpace Technologies.

MRI and CT scans

Cross-sectional scanned images, or slices, such as MRI and CT scans, are some of the most natural image types to visualize in the multi-planar volumetric display. These scans capture actual physical volumes of a body that can be naturally reconstructed in a multi-planar volumetric image.

CT scans usually produce a high number of slices that have to be condensed to the number of depth layers in the multi-planar volumetric display. One way to achieve this is by defining the volume boundaries to be visualized and then the volume rendering methods to be applied. Another way is to first prepare the volumetric scene by extracting required information from the scanned slices, and then follow with volume rendering methods. One example of volumetric scene extraction is seen by applying a specific object recognition procedure, such as segmentation of the human lung CT scan to extract the blood vessel system (Figure 3).
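One simple way to condense many scan slices into a fixed number of depth layers is a slab-wise maximum intensity projection. This is a swapped-in, generic volume rendering technique used here for illustration, not the method the authors describe; the function name and the nested-list image format are assumptions.

```python
from typing import List

Image = List[List[int]]  # rows of pixel intensities


def condense_slices(slices: List[Image], num_layers: int) -> List[Image]:
    """Group consecutive scan slices into slabs and keep, per pixel,
    the maximum intensity found in each slab (slab-wise MIP)."""
    n = len(slices)
    if not 1 <= num_layers <= n:
        raise ValueError("need between 1 and len(slices) layers")
    layers = []
    for k in range(num_layers):
        # Integer arithmetic spreads the slices as evenly as possible.
        start = k * n // num_layers
        stop = (k + 1) * n // num_layers
        slab = slices[start:stop]
        # zip(*slab) pairs up matching rows; zip(*rows) pairs up matching pixels.
        layer = [[max(pixels) for pixels in zip(*rows)] for rows in zip(*slab)]
        layers.append(layer)
    return layers


if __name__ == "__main__":
    scan = [[[1, 0]], [[0, 2]], [[3, 3]], [[4, 1]]]  # four tiny 1 x 2 slices
    print(condense_slices(scan, 2))  # [[[1, 2]], [[4, 3]]]
```

Restricting the input list to a sub-range of the scan before calling the function corresponds to defining the volume boundaries to be visualized.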

Figure 3. A human lung CT scan with applied blood vessel system extraction algorithm. The object is rotated to show the depth. Courtesy of LightSpace Technologies.

Modern ultrasound systems that are capable of real-time 3D ultrasonic scans present a challenge for visualization because raw ultrasound data is difficult for a nonspecialist to interpret and requires specific ultrasound video processing algorithms. Medical doctors have acknowledged the usability of a volumetric image and expressed keen interest in acquiring such visualization devices in order to include them in their regular workflow for diagnostic and enhanced perception visualizations.

Real-time 3D volumetric image stream

A real-time 3D volumetric image stream allows the visualization of volumetric data sets with little to no delay on the volumetric visualization system. This feature can be beneficial in applications requiring real-time imaging and response, such as 3D image-guided surgeries. A real-time 3D volumetric image stream separates 3D volumetric data set preparation (pre-processing) from the visualization itself. Consequently, the volumetric display unit can connect to various kinds of image processing workstations, depending on the required application and rendering speed. This capability is helpful, for example, in a setup where a doctor reviewing an ultrasonic or MRI/CT scan result can manipulate the scanned volumetric data set to bring up the required detail and observation angle on the volumetric display.

To obtain a real-time volumetric display, the sliced volumetric image must first be sent to the display unit. The problem in implementing 3D volumetric imaging systems is that the 3D volumetric image stream contains considerably more pixel data than the single-plane 2D image data stream. Currently the fastest projection system can be built on a commercially available XGA (1024 × 768) resolution Texas Instruments Inc. digital light processing (DLP) chipset.
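To put the difference in scale, the following back-of-the-envelope calculation compares an uncompressed 1080p60 stream with an uncompressed XGA volumetric stream. The 20 depth layers, 50 volumes per second and 24 bits per pixel are assumptions taken from the flicker-free example given in the optical system section; the function name is made up for this sketch.

```python
def stream_rate_bytes(width: int, height: int, bits_per_pixel: int,
                      frames_per_second: int, depth_layers: int = 1) -> int:
    """Uncompressed pixel data rate in bytes per second."""
    bytes_per_slice = width * height * bits_per_pixel // 8
    return bytes_per_slice * depth_layers * frames_per_second


if __name__ == "__main__":
    flat = stream_rate_bytes(1920, 1080, 24, 60)
    volumetric = stream_rate_bytes(1024, 768, 24, 50, depth_layers=20)
    print(flat)               # 373248000 bytes/s, about 3.0 Gbit/s
    print(volumetric)         # 2359296000 bytes/s, about 18.9 Gbit/s
    print(volumetric / flat)  # roughly 6.3 times the 1080p60 payload
```

Under these assumptions the volumetric stream carries roughly six times the pixel data of 1080p60, even though each individual slice has fewer pixels than a Full HD frame.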

One of the key 3D volumetric data transfer problems is that such an image stream is not standardized. Standardization would allow the creation of medical apparatus that would seamlessly integrate 3D volumetric imaging data streams with one another, resulting in more functional devices in the market. One way to overcome this issue is to extend existing transfer standards, such as HDMI and DisplayPort, as a physical carrier and map a custom volumetric image data stream protocol on top of it. For a multi-planar volumetric video transfer application, this can be done by either modifying the transfer standard by embedding a depth layer identification into the data stream or by mapping volumetric image 2D slices inside a larger resolution image. Popular video transfer standards HDMI and DisplayPort have an option to embed additional data words into the data stream, such as the number of total depth planes and the current depth plane identification.
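The idea of tagging each slice with depth information can be illustrated with a tiny, purely hypothetical two-byte tag. The layout below is an assumption invented for this sketch; it is not part of HDMI, DisplayPort or any LightSpace protocol, and it says nothing about how those standards actually carry auxiliary data.

```python
import struct
from typing import Tuple

# Hypothetical tag layout: total depth planes (uint8), current plane (uint8).
_DEPTH_TAG = struct.Struct("<BB")


def pack_depth_tag(total_planes: int, current_plane: int) -> bytes:
    """Encode the slice's position in the volume as a two-byte tag."""
    if not 1 <= total_planes <= 255:
        raise ValueError("total_planes must fit in one byte and be nonzero")
    if not 0 <= current_plane < total_planes:
        raise ValueError("current_plane must be in range(total_planes)")
    return _DEPTH_TAG.pack(total_planes, current_plane)


def unpack_depth_tag(tag: bytes) -> Tuple[int, int]:
    """Decode (total_planes, current_plane) on the display side."""
    return _DEPTH_TAG.unpack(tag)


if __name__ == "__main__":
    tag = pack_depth_tag(total_planes=20, current_plane=7)
    print(tag.hex(), unpack_depth_tag(tag))
```

A receiver that reads such a tag with every slice can route the pixel data to the right depth layer without relying on slice order alone.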

Mapping volumetric image 2D slices inside a larger resolution image requires a specifically programmed data receiver that can extract the 2D slices from the multi-planar volumetric image. The number of slices that can be mapped in a larger resolution image depends on both the slice resolution and the chosen larger resolution.
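The dependence on both resolutions can be made concrete with a small tiling calculation. The row-major, non-overlapping layout used here is an assumption for illustration, as are the function names; a real receiver would follow whatever packing the sender defines.

```python
from typing import Tuple


def tile_grid(frame_w: int, frame_h: int,
              slice_w: int, slice_h: int) -> Tuple[int, int]:
    """Return (columns, rows) of whole slices that fit in the carrier frame."""
    return frame_w // slice_w, frame_h // slice_h


def slice_rect(index: int, frame_w: int, frame_h: int,
               slice_w: int, slice_h: int) -> Tuple[int, int, int, int]:
    """Return (x, y, width, height) of slice `index`, assuming row-major tiling."""
    cols, rows = tile_grid(frame_w, frame_h, slice_w, slice_h)
    if not 0 <= index < cols * rows:
        raise IndexError("slice index does not fit in the carrier frame")
    row, col = divmod(index, cols)
    return col * slice_w, row * slice_h, slice_w, slice_h


if __name__ == "__main__":
    # XGA slices inside a 3840 x 2160 carrier frame: 3 columns x 2 rows.
    print(tile_grid(3840, 2160, 1024, 768))      # (3, 2)
    print(slice_rect(4, 3840, 2160, 1024, 768))  # (1024, 768, 1024, 768)
```

In this example a 3840 × 2160 carrier frame holds six XGA slices, so a 20-layer volume would need four such frames per volume under this particular packing.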

Optical system architecture

LightSpace Technologies’ subjective testing of human perception of various optical refresh rates shows that a low volumetric refresh rate is perceived as image flickering, which creates eye discomfort. Image flicker disappears once the optical refresh rate is raised above 50 frames per second. Traditional image projectors cannot achieve the refresh rates necessary for flicker-free volumetric imaging, which for 20 depth layers would be a minimum of 1,000 full-color slices per second at a color depth of 24 bits per pixel. Consequently, a custom high-speed projection system must be developed.

Projection systems based on a spatial light modulator (SLM), such as Texas Instruments’ DLP chipset and the digital micromirror device (DMD), are capable of achieving the required refresh rates using advanced light modulation methods. The fastest Texas Instruments DLP chipset is the DLP 7000 DMD chipset, which is included in the DLP Discovery 4100 kit and is capable of displaying over 32,000 binary frames per second. The two common options for building an SLM-based projection system are single-chip and three-chip optical configurations.

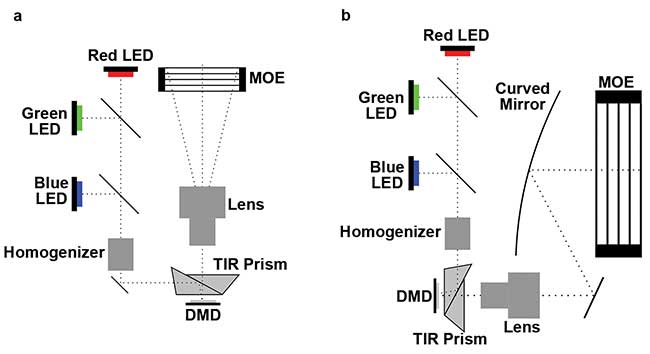

A single-chip architecture requires that each primary color (red, green and blue) be modulated time sequentially, resulting in a lower overall refresh rate. This option is simpler to implement. In contrast, a three-chip system can be used to increase the refresh rate in comparison to a single-chip system. In this case, all three primary colors are projected simultaneously, each onto its corresponding SLM, and the modulated images are superimposed by the collecting prism. This results in an overall higher refresh rate and brighter images than a single-chip DMD system, but it is more complex to implement.
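A quick budget check shows how the two configurations relate to the figures quoted above. The sketch assumes, as a simplification, that every bit plane of a slice occupies exactly one binary frame on the modulator and that shutter switching time is ignored; the function name is hypothetical.

```python
DMD_BINARY_FRAMES_PER_SECOND = 32_000  # lower bound quoted for the DLP 7000


def required_binary_frame_rate(volume_rate_hz: int, depth_layers: int,
                               bits_per_pixel: int = 24, chips: int = 1) -> int:
    """Binary frames per second each modulator must show.

    Simplifying assumption: one binary frame per bit plane. A three-chip
    system handles the three color channels in parallel, so each chip
    only shows one third of the bit planes.
    """
    if chips not in (1, 3):
        raise ValueError("only single-chip and three-chip systems are modeled")
    slices_per_second = volume_rate_hz * depth_layers
    bit_planes_per_chip = bits_per_pixel // chips
    return slices_per_second * bit_planes_per_chip


if __name__ == "__main__":
    single = required_binary_frame_rate(50, 20, chips=1)
    triple = required_binary_frame_rate(50, 20, chips=3)
    print(single, single <= DMD_BINARY_FRAMES_PER_SECOND)  # 24000 True
    print(triple, triple <= DMD_BINARY_FRAMES_PER_SECOND)  # 8000 True
```

Under this simplified model a single chip leaves little headroom at 50 volumes per second and 20 layers, while a three-chip system uses only a quarter of the available binary frame rate.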

Such an SLM produces only binary images. Consequently, the amount of light must be modulated by applying binary weighted pulse width modulation (PWM) to the SLM, by applying binary weighted light source intensity modulation (LSIM), or by combining both methods in a mixed modulation. The PWM method results in a lower refresh rate but higher output luminance, while the LSIM method results in a higher refresh rate but lower output luminance. A combination of both methods results in a better refresh rate than PWM and a higher luminance than LSIM.
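The trade-off can be illustrated with a simplified timing model for one 8-bit color channel. In this sketch, which is an assumption rather than the authors' implementation, PWM shows bit plane b at full light intensity for 2^b time units, LSIM shows every bit plane for one time unit with the light source dimmed in binary steps, and mixed modulation dims only the lowest `dimmed_bits` planes.

```python
from typing import Tuple


def modulation_budget(bits: int = 8, dimmed_bits: int = 0) -> Tuple[int, float]:
    """Return (time_units, relative_luminance) for a full-white channel.

    dimmed_bits = 0        -> pure binary weighted PWM
    dimmed_bits = bits - 1 -> pure LSIM (every plane lasts one time unit)
    anything in between    -> mixed modulation
    Luminance is relative to a light source held at peak intensity.
    """
    if not 0 <= dimmed_bits < bits:
        raise ValueError("dimmed_bits must be in range(bits)")
    time_units = 0
    energy = 0.0
    for b in range(bits):
        if b < dimmed_bits:
            # Short plane, light source dimmed to keep the binary weight.
            duration, intensity = 1, 2.0 ** (b - dimmed_bits)
        else:
            # Full intensity, binary weighted duration.
            duration, intensity = 2 ** (b - dimmed_bits), 1.0
        time_units += duration
        energy += duration * intensity
    return time_units, energy / time_units


if __name__ == "__main__":
    for name, dimmed in (("PWM", 0), ("mixed", 4), ("LSIM", 7)):
        units, luminance = modulation_budget(8, dimmed)
        print(f"{name}: {units} time units, relative luminance {luminance:.3f}")
```

In this model PWM needs 255 time units per channel at full luminance, LSIM needs only 8 time units at about a quarter of the luminance, and the mixed example needs 19 time units at about 84 percent, which matches the qualitative ordering described above.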

The light captured from the source must be modulated, and the beam must be expanded to the size of the MOE. The architecture of the optics system must therefore contain light sources (LEDs), collimating and homogenization elements, a spatial light modulator, a prism, lenses and relay mirrors. Increasing the beam size with a reasonable throw ratio requires a considerable physical light path length. Another option is to use a curved mirror-based system (Figure 4).

Figure 4. Multi-planar volumetric display optics system with telecentric (a) and curved mirror architecture (b). Courtesy of LightSpace Technologies.

Opportunities on display

Given how 3D viewing is crucial to limiting patient injury during invasive and noninvasive treatments, it is not surprising that the global volumetric display market is projected to grow from $38 million in 2014 to $402 million by 2022, according to Stratistics Market Research Consulting Pvt. Ltd. Illustrative of this technology’s advancement in medical settings is LightSpace Technologies’ plan to deliver in the second quarter of 2016 a 3D volumetric imaging workstation to Riga Children’s University Hospital in Riga, Latvia, for use in diagnostic examination of MRI/CT 3D data sets.

Meet the authors

Krišs Osmanis is a lead engineer with LightSpace Technologies in Riga, Latvia. Ilmars Osmanis is the CEO of LightSpace Technologies.