From picking the juiciest and most colorful cranberries for consumption to detecting one of the most elusive of cancers — brain cancer — imaging systems are gathering momentum, largely thanks to shrinking size and cost.

Every autumn — usually from mid-September until around mid-November in North America and March through May in South America — cranberries reach their peak of color and flavor and are ready for harvesting. It’s then the turn of hyperspectral imagers to ensure only the best grade cranberries make it through to production from the millions of kilos that are harvested.

In March 2015, spectral-imaging specialist Headwall Photonics Inc., based in Massachusetts, began collaborating with a well-known cranberry-products maker to test new spectral-imaging/machine vision technologies intended to give growers and farmers the tools needed to improve product quality and agricultural yield.

Courtesy of Headwall Photonics Inc.

But the imagers are not just spotting foreign material for extraction. Headwall’s hyperspectral imagers are now grading produce with a high degree of specificity so that food processors can inspect and classify inbound products and use different grades of crop for different end-use products.

“Rather than simply ‘good vs. bad,’ hyperspectral imaging allows for a more granular distinction across the inspected line of food products,” said David Bannon, CEO of Headwall Photonics. “Headwall’s sensors are based upon what is called a ‘push broom’ design, or a ‘line-scanning’ optical design simply using precise diffraction gratings and concentric mirrors.”
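To make the line-scanning idea concrete, here is a minimal Python sketch of how push-broom frames could be stacked into a hyperspectral data cube. It is an illustration only, not Headwall's software: the function names (`assemble_cube`, `pixel_spectrum`) and the tiny synthetic frames are assumptions made for this example.

```python
from typing import List

# One push-broom frame: a single spatial line, indexed [sample][band].
Frame = List[List[float]]


def assemble_cube(frames: List[Frame]) -> List[Frame]:
    """Stack successive line-scan frames into a cube indexed [line][sample][band]."""
    if not frames:
        return []
    n_samples = len(frames[0])
    n_bands = len(frames[0][0]) if n_samples else 0
    for frame in frames:
        if len(frame) != n_samples or any(len(px) != n_bands for px in frame):
            raise ValueError("every frame must have the same samples x bands shape")
    # Copy so later changes to the input frames do not alter the cube.
    return [[list(px) for px in frame] for frame in frames]


def pixel_spectrum(cube: List[Frame], line: int, sample: int) -> List[float]:
    """Return the full spectrum recorded at one ground position."""
    return cube[line][sample]


# Hypothetical scan: 3 lines along the direction of travel, 2 samples across, 4 bands.
frames = [
    [[float(line * 100 + sample * 10 + band) for band in range(4)] for sample in range(2)]
    for line in range(3)
]
cube = assemble_cube(frames)
print(len(cube), len(cube[0]), len(cube[0][0]))  # 3 2 4
print(pixel_spectrum(cube, 2, 1))  # [210.0, 211.0, 212.0, 213.0]
```

Each frame holds one line of the scene with a full spectrum per pixel, so the second spatial dimension comes only from motion, whether that is a conveyor belt under the sensor or an aircraft over a field.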

While the method may not be new (spectral imaging has been used by the military for many years), Bannon points out that applying this spectral instrumentation to commercial applications takes some extra effort to develop “actionable” data maps for commercial end users. It is the ability to apply spectral mapping to critical vision applications that is attracting industry and academia alike to the power of advanced machine vision sensors. One such example is the HELICoiD Project, a European collaboration funded by the Research Executive Agency through the Future and Emerging Technologies (FET-Open) program, under the 7th Framework Program of the European Union.

At the southeastern Massachusetts Cranberry Station, the X6 from German artificial intelligence and robotics specialist Aibotix GmbH is about to gather spectral data on a test flight, as seen in front image. Courtesy of Headwall Photonics Inc.

The HELICoiD project’s aim is to use hyperspectral imaging for real-time identification of tumor margins during neurosurgery, helping surgeons extract the entire tumor and spare as much of the healthy tissue as possible.

“Brain cancer is among the hardest of all to detect, with ‘good’ cells looking almost indistinguishable from cancerous ones. But the specificity of hyperspectral imaging to ‘see’ this cellular distinction represents a tremendous breakthrough,” Bannon said.

‘Payload-friendly’ systems

Reductions in sensor instrument size are another enabler pushing the faster adoption of hyperspectral and multispectral imagers into new applications. As sensors move down in size, weight and cost, they also move from traditional strategic platforms such as satellites and manned aircraft to more tactical craft such as hand-launched unmanned aerial vehicles (UAVs).

Such airborne (remote sensing) applications include everything from precision agriculture, geological exploration, environmental monitoring and infrastructure inspection to pollution mitigation, ISR (intelligence, surveillance and reconnaissance) and climatology.

More ground can be covered, and more actionable spectral data can be collected, when a fleet of equipped UAVs supplements a very costly satellite mission or high-flying reconnaissance aircraft.

“Precision agriculture is benefiting from the simple adoption of the UAV and sensors that are ‘payload-friendly,’” Bannon said. “By making the sensors smaller, lighter and more affordable, more users can take advantage of hyperspectral [imaging], which basically is a new set of eyes for the remote sensing community.”

At Barrington, N.J.-based Edmund Optics Inc., the trends of shrinking size and cost are also opening up new application spaces. Cameras are pushing toward smaller sensors with smaller pixels and larger sensors with higher resolutions. The result is a new take on lens design.

“Pixel sizes are getting smaller. Customers will require [a] higher-resolution imaging lens to fully utilize the smaller pixels,” said Lisa Tsang, product line manager of Imaging at Edmund Optics. “We’ve introduced a new family of high-resolution, small format fixed focal length lenses — our new UC Series Lenses.”

TECHSPEC Ultra-Compact (UC) Series Fixed Focal Length Lenses. Designed and optimized for sensors with smaller pixels. Courtesy of Edmund Optics Inc.

Designed for pixels that are ≤2.2 μm and optimized for 1/2.5-in. sensors, these lenses provide high levels of resolution (>200 lp/mm) across the sensor, making them ideal for inspection, factory automation, biomedical instrumentation and a broad range of other applications.
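The link between pixel size and lens resolution can be checked with a quick calculation. The sketch below uses the standard sampling rule that one line pair needs at least two pixels, which gives the sensor's Nyquist limit. The function name is hypothetical, and the calculation assumes square pixels and ignores color filter arrays.

```python
def nyquist_lp_per_mm(pixel_pitch_um: float) -> float:
    """Sensor Nyquist limit in line pairs per mm; one line pair spans two pixels."""
    if pixel_pitch_um <= 0:
        raise ValueError("pixel pitch must be positive")
    # 1 mm = 1000 um, and each line pair occupies two pixel widths.
    return 1000.0 / (2.0 * pixel_pitch_um)


print(round(nyquist_lp_per_mm(2.2), 1))  # 227.3
```

By this estimate, a 2.2-μm pixel can sample up to roughly 227 lp/mm, which is why a lens resolving more than 200 lp/mm is needed to make full use of such a sensor.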

At Flir Systems Inc., based in Wilsonville, Ore., Chief Technical Officer Pierre Boulanger points out that going forward, providing just a pretty thermal image may not be enough. Imagers that need a second subsystem to deliver the final functionality may not be competitive.

“Advancements and the availability of high-performing, yet inexpensive, sensors, optics and systems-on-chip are enabling the implementation of imagers as systems that execute beyond simply generating an image. They now solve problems,” he said.

System-on-chip enabling new capabilities

As showcased in April at the 2016 SPIE Defense and Commercial Sensing Conference in Baltimore, Flir’s Boson Thermal Camera is the first of its type to incorporate a low-power multicore vision processor. The tiny camera offers onboard processing capabilities and can be implemented into OEM devices such as cars, security systems, unmanned air systems or military equipment such as rifle scopes.

While Flir’s Boson camera remains in its early launch stage, these images represent the type of analytics that Boson will make available all within the core. The images show men walking in and out of alarm zones and a man walking in a parking lot. Courtesy of Flir Systems Inc.

“The system-on-chips are becoming more capable, enabling imagers to perform specific tasks for customers. More and more useful end-function systems based on image sensor data will become possible,” Boulanger said. “The video analytics algorithms still need to improve their performance. Several function very well today, such as for pedestrian detection in automotive markets, or virtual fence protection in security applications, but as uses become more diversified, we must design new algorithms.”

Demand for consolidation and integration is echoed by Bannon: “Consolidation comes from taking what were previously ‘connected’ elements and packing them inside the sensor housing.”

In the case of Headwall’s Nano-Hyperspec, 480 GB of embedded storage and a direct-attached GPS/IMU (inertial measurement unit) mean that an outboard computer (plus related cabling) is no longer necessary, freeing the payload budget for other related instruments such as lidar, a FODIS (fiber-optic downwelling irradiance sensor), RGB (red, green, blue) sensors/cameras and more.

“Integration is also key because putting specialized and sensitive instruments aboard a UAV can be a costly, drawn-out process. Headwall is providing turnkey packages to customers who need the UAV plus hyperspectral, lidar and full software control capability,” Bannon said.

While hyperspectral sensors collect literally hundreds of spectral bands of data, important decision-making in remote sensing also depends on collecting other data streams and on post-processing software, which adds the real value to the data collection process.

Another emerging trend to watch is the increasing connectivity to the cloud, which will allow users to execute advanced functions on images. For example, Flir’s cloud-based service, called RapidRecap, compresses a full day of video from its consumer Flir FX security camera into a single minute where only events of interest are displayed.

“In security applications, you can watch all that occurs at your house and expand on specific occurrences, like when the solicitor knocked at the front door,” Boulanger said. “The cloud offers limitless processing capability and more original services will become available through it; services that cannot yet be embedded.”

Targeting wavelengths

As the cost of sensors continues to decrease, it is now possible to blend different wavelengths into a single image or sensor system, providing the best that each wavelength has to offer or allowing concentration on a certain spectral range of interest.

For example, Headwall’s airborne chlorophyll fluorescence sensor targets the very narrow range where chlorophyll fluoresces (755 to 775 nm), which can allow the scientific research community to learn more about crop health and agricultural yield.

“It allows the precision agriculture people to make better decisions with respect to irrigation, fertilization, plant stress and the presence of crop diseases because they now have near real-time access to the underpinnings of the photosynthetic process through hyperspectral image data,” Bannon added.
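Targeting a narrow window such as 755 to 775 nm amounts to selecting only the bands that fall inside it. The following is a minimal sketch under stated assumptions: the function names, the 5-nm band spacing and the sample spectrum are all invented for illustration and do not describe Headwall's sensor or processing chain.

```python
from typing import List, Sequence


def band_indices(wavelengths_nm: Sequence[float],
                 low_nm: float = 755.0, high_nm: float = 775.0) -> List[int]:
    """Indices of the bands whose center wavelength lies inside the window."""
    return [i for i, w in enumerate(wavelengths_nm) if low_nm <= w <= high_nm]


def mean_in_window(spectrum: Sequence[float], wavelengths_nm: Sequence[float],
                   low_nm: float = 755.0, high_nm: float = 775.0) -> float:
    """Average signal over the bands inside the window."""
    idx = band_indices(wavelengths_nm, low_nm, high_nm)
    if not idx:
        raise ValueError("no bands fall inside the requested window")
    return sum(spectrum[i] for i in idx) / len(idx)


# Hypothetical band centers every 5 nm from 740 to 785 nm.
wavelengths = [740.0 + 5.0 * i for i in range(10)]
# Made-up signal values that peak inside the window.
spectrum = [1.0, 1.0, 1.0, 2.0, 3.0, 4.0, 3.0, 2.0, 1.0, 1.0]

print(band_indices(wavelengths))  # [3, 4, 5, 6, 7]
print(mean_in_window(spectrum, wavelengths))  # 2.8
```

Applied to every pixel of a scene, a per-pixel value like this would yield a map of signal strength in the fluorescence window.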

Alternatively, targeting a somewhat underutilized yet promising slice of the electromagnetic spectrum is the goal for companies focusing on the troublesome terahertz regime. Sitting between the microwave and optical frequencies is the part of the spectrum that is commonly referred to as the terahertz gap.

While technically the gap has been explored and mastered, the majority of devices employing T-rays (terahertz radiation) remain expensive. But just as in the case of the more well-utilized regimes, terahertz-based equipment is also becoming cheaper, raising its attractiveness for industrial applications.

“As far as we can see it, there are two major cost drivers influencing [the] THz imaging industry,” said Viacheslav Muravev, vice president of Terasense Group Inc. in San Jose, Calif. “First, it is the cost of crystals and chips that are used for production of pixels for our THz detectors and THz imaging cameras. We can make THz cameras with sensor arrays of almost any configuration. It’s like a Meccano for kids, where we simply add modules.”

Courtesy of TeraSense Group Inc.

When calculating the price for a custom-tailored solution (sensor array), estimates are based on the price per pixel, ranging from $7 to $10, depending on the size of array.
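As a rough illustration of per-pixel pricing, the sketch below brackets the sensor-array cost using the quoted $7 to $10 range. The function name and the 256-pixel example are assumptions for illustration only. The actual per-pixel price depends on array size, so this gives only the outer bounds.

```python
from typing import Tuple


def array_cost_range(n_pixels: int, low_per_pixel: float = 7.0,
                     high_per_pixel: float = 10.0) -> Tuple[float, float]:
    """Lower and upper cost estimate (USD) for a sensor array of n_pixels."""
    if n_pixels <= 0:
        raise ValueError("pixel count must be positive")
    return (n_pixels * low_per_pixel, n_pixels * high_per_pixel)


# Hypothetical 256-pixel linear array.
print(array_cost_range(256))  # (1792.0, 2560.0)
```

The same function applied to a hypothetical 32 × 32 array (1,024 pixels) would give a range of about $7,200 to $10,200 for the sensor array alone, before the cost of the terahertz source is added.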

“Second, it is the cost of [the] THz generator, and it’d better be powerful enough to support THz imaging. Power output of [the] THz emitter is one of the most critical factors determining the quality of THz image and penetration capability,” continued Muravev. “Our generators — IMPATT diodes — are capable of ensuring power output of 80 to 100 mW (at the frequency of 100 GHz) and cost over $5,000. Of course, many customers would love to see some improvement in this aspect.”

Since releasing its first terahertz imager in 2012, Terasense has developed its TeraFAST-256-HS high-speed linear camera, designed specifically to meet the needs of nondestructive testing and quality control in various industrial applications. But the company’s Holy Grail is to develop a marketable full-body screener system.

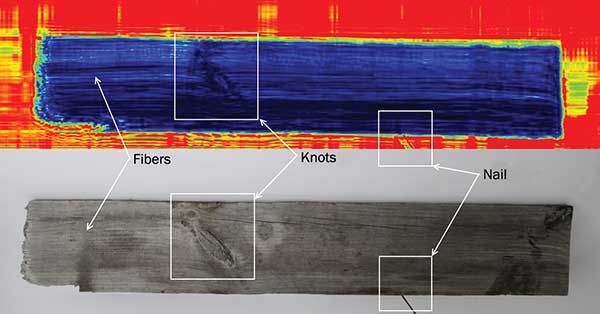

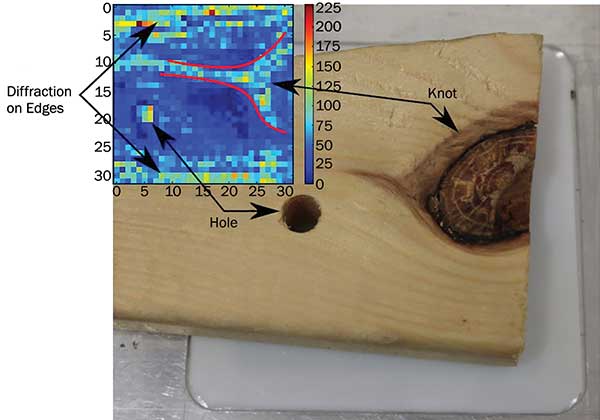

Terahertz imagers can be used to detect internal defects such as holes/knots in this 20-mm-thick piece of pine wood. Courtesy of TeraSense Group Inc.

While still at the proof-of-concept stage, Terasense is busy working toward building an entire people-screening system. Crucial to this is developing a powerful enough emitter to generate the penetration capability and image quality needed.

Today, its most powerful source offers a tunable frequency (within dedicated ranges from 80 to 360 GHz) at a power output of up to 1 W. But at a retail price of over $50,000, Muravev admits that sales may be slow.

“The first manufacturer who claims to have created a comprehensive solution for a safe and harmless full-body scanner for [security] screening or NDT [nondestructive testing] application will indisputably strike a bonanza,” Muravev said.