From generating crystal-clear images to making sense of huge reams of imaging data, processing power has never been more important than in today’s highly visual world.

From the moment we wake up and open our eyes, we are processing images. It is this image processing that enables us to complete tasks ranging from the mundane to those undertaken only by gifted professionals trained to make split-second decisions, such as the pro basketball player or the jet fighter pilot.

This image processing takes place in arguably the most complex and powerful computing device known to man: the human brain. Besides being a sophisticated processing center, the human brain is also versatile and creative, and it has engineered technologies capable of performing all kinds of image processing tasks for us.

From everyday camera-phone snapshots and playful image-enhancement apps to advanced biometric sensing, factory machine vision and driverless automobiles, image processing systems take visual information and convert it into usable data. Dedicated software then presents this data in a convenient form, or even analyzes it to make decisions.

“For centuries and certainly more, people have tried to visually represent things using drawings or paintings, later by taking photographs. Since the emergence of computers and particularly digital imaging, images now correspond to arrays of quantized values,” said William Guicquero, a Ph.D. research engineer at Grenoble, France-based Leti, a technology research institute at CEA Tech and one of the world’s largest organizations for applied research in microelectronics and nanotechnology.

Example of an inpainting process. This operation consists of finding the grayscale values of missing (or corrupted) pixels. Courtesy of CEA-Leti.
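The caption does not say which inpainting method was used. As one simple illustration, assuming the missing region is small and the image is smooth around it, each unknown pixel can be repeatedly replaced by the average of its four neighbors while the known pixels stay fixed (diffusion, or harmonic, inpainting). The function name `diffusion_inpaint` and its `n_iter` parameter are hypothetical.

```python
import numpy as np


def diffusion_inpaint(image, missing, n_iter=500):
    """Fill missing pixels by repeated 4-neighbor averaging (harmonic inpainting).

    image:   2D array of grayscale values
    missing: boolean mask, True where the pixel value is unknown or corrupted
    """
    filled = np.array(image, dtype=float)  # work on a float copy
    # Initialize unknown pixels with the mean of the known ones
    filled[missing] = filled[~missing].mean()
    for _ in range(n_iter):
        # Replicate edges so border pixels also have four neighbors
        p = np.pad(filled, 1, mode="edge")
        neighbor_avg = (p[:-2, 1:-1] + p[2:, 1:-1] + p[1:-1, :-2] + p[1:-1, 2:]) / 4.0
        # Update only the missing pixels; known pixels stay fixed
        filled[missing] = neighbor_avg[missing]
    return filled


# Usage: a horizontal intensity ramp with a 4x4 hole punched in the middle
image = np.tile(np.linspace(0.0, 1.0, 16), (16, 1))
missing = np.zeros(image.shape, dtype=bool)
missing[6:10, 6:10] = True
corrupted = np.where(missing, 0.0, image)
restored = diffusion_inpaint(corrupted, missing)
```

On a linear ramp the filled values converge back to the original, because neighbor averaging reproduces a ramp exactly; textured regions generally call for more sophisticated, for example sparsity-based, methods.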

“The amount of data that are needed to directly store such arrays is, most of the time, hardly affordable by computer systems in terms of storage capacity and/or processing capabilities,” he said. “It constitutes one of the most important limitations. For example, an uncompressed, RGB demosaiced video sequence of two seconds from a 16-MP image sensor at 30 fps would generate approximately 3 GB of data. This is where image processing comes into play by proposing to change the representations in which we have to deal with images.”
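The 3 GB figure can be checked with a little arithmetic. A minimal sketch, assuming 8 bits per color channel, three channels per pixel after demosaicing and no header or container overhead (the quote does not state the bit depth); `raw_video_bytes` is a hypothetical helper:

```python
def raw_video_bytes(megapixels, fps, seconds, channels=3, bits_per_channel=8):
    """Size in bytes of uncompressed, demosaiced video (no headers or padding)."""
    pixels_per_frame = megapixels * 1_000_000
    bytes_per_frame = pixels_per_frame * channels * bits_per_channel / 8
    return bytes_per_frame * fps * seconds


# 16 MP x 3 bytes per pixel = 48 MB per frame; 60 frames in two seconds at 30 fps
size = raw_video_bytes(megapixels=16, fps=30, seconds=2)
print(f"{size / 1e9:.2f} GB")  # prints 2.88 GB
```

At 8 bits per channel this gives about 2.9 GB, consistent with the quoted estimate; a 12-bit pipeline would raise it to 4.32 GB.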

Embedded and deported processing

Image processing can be divided into two branches: embedded and deported. Embedded processing occurs close to the sensing system and must cope with tighter constraints than deported computer vision, which can draw on far greater computational resources.

The current trend in acquisition systems is toward novel sensing approaches that relax constraints on power consumption, frame rate, dynamic range, spectral sensitivity and so on.

At the top of the list of alternative techniques that currently show the most promise are compressive sensing (CS) and event-based acquisition. In parallel, having the acquisition system take decisions directly, without either storing or transmitting the image, is also being investigated for specific applications such as food, product and land monitoring, mainly in the context of autonomous systems.
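Compressive sensing rests on the idea that a signal with few nonzero coefficients in some basis can be recovered from far fewer linear measurements than its length. The article does not name a reconstruction algorithm; as one illustration, the sketch below uses orthogonal matching pursuit (OMP), a standard greedy solver, and assumes a random Gaussian measurement matrix and a signal that is sparse in its own sample domain (real images are usually sparse only after a transform such as wavelets). The function `omp` and all sizes are illustrative choices.

```python
import numpy as np


def omp(A, y, sparsity):
    """Orthogonal matching pursuit: greedily recover a `sparsity`-sparse x from y = A @ x."""
    residual = y.copy()
    support = []
    coef = np.zeros(0)
    for _ in range(sparsity):
        # Select the column of A most correlated with the unexplained part of y
        j = int(np.argmax(np.abs(A.T @ residual)))
        if j not in support:
            support.append(j)
        # Least-squares fit on the selected columns, then update the residual
        coef, *_ = np.linalg.lstsq(A[:, support], y, rcond=None)
        residual = y - A[:, support] @ coef
    x = np.zeros(A.shape[1])
    x[support] = coef
    return x


rng = np.random.default_rng(0)
n, m, k = 256, 100, 5                         # signal length, measurements, nonzeros
x_true = np.zeros(n)
idx = rng.choice(n, size=k, replace=False)
x_true[idx] = rng.choice([-1.0, 1.0], size=k) * (1.0 + rng.random(k))
A = rng.standard_normal((m, n)) / np.sqrt(m)  # random Gaussian sensing matrix
y = A @ x_true                                # 100 measurements of a 256-sample signal
x_hat = omp(A, y, k)
```

With these sizes, 100 random measurements are typically enough to recover the five nonzero samples exactly; with fewer measurements or a less sparse signal, recovery becomes less likely.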

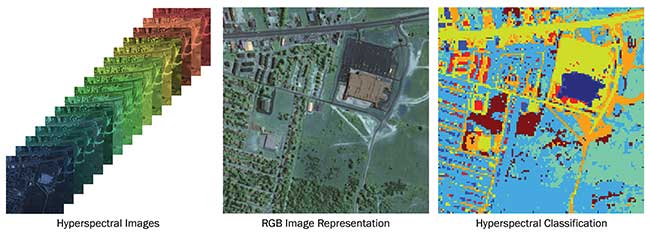

Classification of pixel patches in a hyperspectral image (URBAN test dataset) based on local spectral signatures. Courtesy of CEA-Leti.
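The caption does not specify the classifier. One common way to compare spectral signatures is the spectral angle: each spectrum is treated as a vector and assigned to the reference signature with which it makes the smallest angle, which makes the comparison insensitive to overall brightness. The sketch below, assuming a (rows, cols, bands) reflectance cube and a small set of known reference spectra, averages non-overlapping pixel patches and classifies each patch this way; the function names and the toy spectra are hypothetical.

```python
import numpy as np


def patch_spectra(cube, patch):
    """Mean spectrum of each non-overlapping patch x patch block of a (rows, cols, bands) cube.

    rows and cols must be multiples of `patch`.
    """
    r, c, b = cube.shape
    blocks = cube.reshape(r // patch, patch, c // patch, patch, b)
    return blocks.mean(axis=(1, 3))  # shape (r // patch, c // patch, bands)


def spectral_angle_classify(spectra, references):
    """Label each spectrum with the index of the reference at the smallest spectral angle."""
    s = spectra / np.linalg.norm(spectra, axis=-1, keepdims=True)
    ref = references / np.linalg.norm(references, axis=-1, keepdims=True)
    cos = np.clip(s @ ref.T, -1.0, 1.0)  # cosine similarity to every reference
    return np.argmin(np.arccos(cos), axis=-1)


# Toy 4-band spectra: vegetation is bright in the last (near-infrared) band
references = np.array([[0.05, 0.08, 0.04, 0.50],   # class 0: vegetation
                       [0.20, 0.25, 0.30, 0.35]])  # class 1: bare soil
cube = np.empty((4, 4, 4))
cube[:, :2] = 0.8 * references[0]   # left half: shaded vegetation
cube[:, 2:] = 1.3 * references[1]   # right half: brightly lit soil
labels = spectral_angle_classify(patch_spectra(cube, 2), references)
```

Scaling a spectrum up or down leaves its angle unchanged, which is why the shaded vegetation and the brightly lit soil are still labeled correctly.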

When it comes to deported processing, computer vision makes intensive use of advanced machine learning tools to let the computer understand the underlying meaning of images and videos. Recent results obtained with convolutional neural networks (CNNs) demonstrate that deep learning can be highly effective for image analysis in specific contexts, such as face detection.
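At the heart of a CNN is the convolution layer: a small kernel slides over the image, computing a weighted sum at each position, and a nonlinearity such as ReLU is applied to the result. In a trained network the kernel weights are learned from data; the minimal sketch below, assuming a single-channel image and one hand-picked kernel, shows only the forward computation. The function name `conv2d_relu` is hypothetical, and, as in most deep-learning frameworks, it computes a cross-correlation (the kernel is not flipped).

```python
import numpy as np


def conv2d_relu(image, kernel, bias=0.0):
    """'Valid' 2D cross-correlation of a single-channel image, followed by ReLU."""
    kh, kw = kernel.shape
    h, w = image.shape
    out = np.empty((h - kh + 1, w - kw + 1))
    for i in range(out.shape[0]):
        for j in range(out.shape[1]):
            # Weighted sum of the kh x kw window under the kernel
            out[i, j] = np.sum(image[i:i + kh, j:j + kw] * kernel) + bias
    return np.maximum(out, 0.0)  # ReLU: negative responses are set to zero


# A dark-to-bright vertical edge and a kernel that responds to left-to-right increases
image = np.array([[0, 0, 1, 1, 1]] * 5, dtype=float)
kernel = np.array([[-1, 0, 1]] * 3, dtype=float)
feature_map = conv2d_relu(image, kernel)
```

The feature map responds only where the window straddles the edge; a real CNN stacks many such learned kernels over several layers.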

“Robustness of state-of-the-art algorithms will be improved in the near future by the use of such ‘bio-inspired,’ nonlinear techniques, in the hope that they will not considerably increase the overall computational complexity,” Guicquero said. “However, it will surely have an impact on object detection and classification. Regression analysis and dimensionality reduction tools are also going to play a more important role, for instance for object pose estimation.”
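Principal component analysis (PCA) is one of the most common dimensionality reduction tools: it finds the orthogonal directions along which data vary most, so that high-dimensional feature vectors can be summarized by a few coordinates. The quote does not name a specific method; the sketch below, assuming the samples are the rows of a NumPy array, computes PCA through the singular value decomposition. The function name `pca` is hypothetical.

```python
import numpy as np


def pca(X, n_components):
    """Project the rows of X onto their top principal components, computed via SVD.

    Returns (scores, components, explained_variance_ratio).
    """
    mean = X.mean(axis=0)
    Xc = X - mean                                  # PCA works on centered data
    _, S, Vt = np.linalg.svd(Xc, full_matrices=False)
    components = Vt[:n_components]                 # principal directions, one per row
    ratio = S**2 / np.sum(S**2)                    # fraction of variance per direction
    return Xc @ components.T, components, ratio[:n_components]


# 3D points that actually lie along a single line: one component suffices
t = np.linspace(-1.0, 1.0, 50)
X = np.outer(t, [1.0, 2.0, 2.0]) + 5.0
scores, components, ratio = pca(X, n_components=1)
```

Here a single coordinate per sample captures essentially all of the variance, which is the kind of compression dimensionality reduction aims for.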

Processing power grows

“Image processing has always been about getting the job done with what was available,” said Yvon Bouchard, director of OEM Applications at digital imaging and machine vision specialist Teledyne Dalsa, Montreal. “There has always been a battle between the ideal way and the practical approach to getting the job done. This probably will never change since both the processing power and the amount of data to process keep on growing.”

As the sheer volume of imaging information captured by today’s vision systems continues to rise, image processing steps in to convert raw image arrays into more compact, manageable representations.

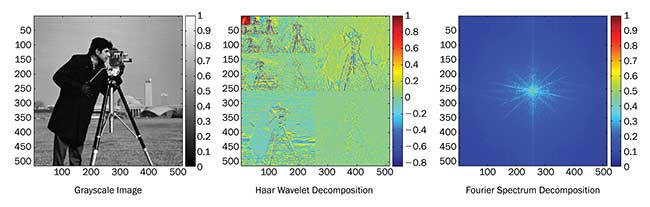

“Mathematical transforms based on Fourier analysis (for example, the discrete cosine transform) or on multiscale analysis (the discrete wavelet transform) have been widely used for image compression purposes,” Guicquero said. “Without those kinds of mathematical tools there would hardly be portable devices with embedded high-resolution cameras or even internet video-streaming websites.”

Examples of two commonly used signal representation changes based on multiscale and spectrum decompositions. Courtesy of CEA-Leti.
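Both representation changes can be sketched in a few lines. The version below is a simplified illustration rather than any particular codec: it builds an orthonormal DCT-II matrix, transforms a square block, keeps only the largest coefficients and inverts the transform (JPEG applies the DCT to 8×8 blocks but quantizes the coefficients rather than simply keeping the largest). `haar2d` computes one level of the orthonormal 2D Haar wavelet transform, the simplest multiscale decomposition. All function names are hypothetical.

```python
import numpy as np


def dct_matrix(n=8):
    """Orthonormal DCT-II matrix: row k is the k-th cosine basis vector."""
    k = np.arange(n)[:, None]
    i = np.arange(n)[None, :]
    C = np.sqrt(2.0 / n) * np.cos(np.pi * (2 * i + 1) * k / (2 * n))
    C[0, :] = np.sqrt(1.0 / n)
    return C


def compress_block(block, keep):
    """2D DCT of a square block, zero all but the `keep` largest coefficients, invert."""
    C = dct_matrix(block.shape[0])
    coeffs = C @ block @ C.T                       # forward 2D DCT
    threshold = np.sort(np.abs(coeffs), axis=None)[-keep]
    coeffs[np.abs(coeffs) < threshold] = 0.0       # drop small coefficients (ties are kept)
    return C.T @ coeffs @ C                        # inverse 2D DCT


def haar2d(image):
    """One level of the orthonormal 2D Haar transform (both sides must be even).

    Top-left quadrant: coarse approximation; the other quadrants: detail coefficients.
    """
    s = np.sqrt(2.0)
    rows = np.vstack([image[0::2] + image[1::2], image[0::2] - image[1::2]]) / s
    return np.hstack([rows[:, 0::2] + rows[:, 1::2], rows[:, 0::2] - rows[:, 1::2]]) / s


# A smooth 8x8 block: nearly all of its energy lands in a few DCT coefficients
block = np.add.outer(np.arange(8.0), np.arange(8.0))
approx = compress_block(block, keep=3)
rel_error = np.linalg.norm(approx - block) / np.linalg.norm(block)
```

For this ramp-shaped block, three of the 64 DCT coefficients reconstruct it with under 5% relative error; the same energy compaction is what makes transform-based compression effective on natural images.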

Indeed, some of the biggest improvements in optical systems come from image processing software at the sensor level.

The image from an imperfect optical system can sometimes be corrected via image processing. Examples include removing distortion from an image and refocusing an image after capture using a technique referred to as light-field imaging.
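Distortion removal typically starts from a lens model. As a minimal sketch, assuming the simplest one-coefficient radial model, in which an ideal point at normalized coordinates x_u is imaged at x_d = x_u (1 + k1 r²) with r = |x_u|, the correction inverts that mapping by fixed-point iteration. The model, the coefficient `k1` and the function names are illustrative assumptions; libraries such as OpenCV (`cv2.undistort`) use a fuller model with several radial and tangential coefficients, and a complete correction also resamples the image onto the corrected grid.

```python
import numpy as np


def distort(points, k1):
    """One-coefficient radial model: x_d = x_u * (1 + k1 * r_u**2), normalized coordinates."""
    r2 = np.sum(points**2, axis=-1, keepdims=True)
    return points * (1.0 + k1 * r2)


def undistort(points_d, k1, n_iter=50):
    """Invert the radial model by fixed-point iteration on x_u = x_d / (1 + k1 * r_u**2)."""
    points_u = points_d.copy()               # initial guess: the distorted positions
    for _ in range(n_iter):
        r2 = np.sum(points_u**2, axis=-1, keepdims=True)
        points_u = points_d / (1.0 + k1 * r2)
    return points_u


# Barrel distortion (k1 < 0) applied to a 5x5 grid of points, then removed
axis = np.linspace(-0.5, 0.5, 5)
grid = np.stack(np.meshgrid(axis, axis), axis=-1).reshape(-1, 2)
k1 = -0.2
recovered = undistort(distort(grid, k1), k1)
```

For moderate distortion and points within the normalized field of view, the iteration converges quickly; strong distortion near the image corners may need a better initial guess or a different solver.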

“The real benefit of image processing has been to allow computer algorithms to replace human eyes in the interpretation of images where decisions need to be made. The advantages here are the same as in all areas where computers have impacted society: speed, efficiency/automation, accuracy and consistency,” said Akash Arora, product manager at software and services specialist Zemax of Kirkland, Wash.

Improvements in image processing have enabled major advances in a wide range of technical areas, including biometric sensing, remote sensing, factory machine vision, driver assistance/driverless automobiles, facial recognition, virtual reality/augmented reality and many others.

“The optics used in these applications aren’t necessarily revolutionary,” Arora said. “Processing the image with a software algorithm that can make consistent, accurate decisions thousands of times a second is the true revolution.”

That’s not to say optical components aren’t contributing to progress in the field. Advances in sensors now make it possible to capture more than visible-light information.

“For example, [with] the thermal energy and the distance to objects (3D), combined with fusion technology, we can now examine scenes with new information about what is going on and intervene in a way which was impossible in the past,” said Bouchard. “The availability of low-cost sensing devices is now opening up markets which never existed before: the various applications for remote sensing using drone technology are just one example of this.”

Headwall Photonics, a hyperspectral imaging specialist based in Fitchburg, Mass., is well acquainted with the expanding market enabled by drone technology. Its sensors and software can be mounted on drones and flown over fields to provide key vegetative indices needed for precision agriculture and environmental mapping.

Hyperspectral data taken above cranberry bogs in southeastern Massachusetts is orthorectified and overlaid on top of a Google Maps image. Courtesy of Headwall Photonics.

“Users are essentially looking for answers to ‘How effective is my fertilization and irrigation?,’ ‘Is there plant stress I need to be aware of?’ and ‘Are there any early warning signs of diseases that may affect an upcoming harvest?’” said Christopher Van Veen of marketing communications at Headwall Photonics.

“The various vegetative indices we are keying in on represent the answers, and our ‘deliverable’ to customers is much more than simply a hyperspectral sensor and the software that drives it. The ‘big data’ coming from these sensors contains actionable information that the remote-sensing community clearly demands.”
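Headwall does not say which indices it computes. The best-known example is the normalized difference vegetation index, NDVI = (NIR - Red) / (NIR + Red), which exploits the fact that healthy vegetation reflects strongly in the near infrared and absorbs red light. A minimal sketch for a hyperspectral cube, assuming reflectance data and picking the bands closest to 670 nm (red) and 800 nm (near infrared), one common but not universal choice; the function names and toy pixel values are hypothetical:

```python
import numpy as np


def band_index(wavelengths_nm, target_nm):
    """Index of the spectral band whose center is closest to target_nm."""
    return int(np.argmin(np.abs(np.asarray(wavelengths_nm) - target_nm)))


def ndvi(cube, wavelengths_nm, red_nm=670.0, nir_nm=800.0):
    """Normalized difference vegetation index from a (rows, cols, bands) reflectance cube."""
    red = cube[..., band_index(wavelengths_nm, red_nm)].astype(float)
    nir = cube[..., band_index(wavelengths_nm, nir_nm)].astype(float)
    denom = nir + red
    # Guard against division by zero on completely dark pixels
    return np.divide(nir - red, denom, out=np.zeros_like(denom), where=denom != 0)


wavelengths = np.arange(400, 1001, 10)         # 61 bands from 400 to 1000 nm
r, n = band_index(wavelengths, 670.0), band_index(wavelengths, 800.0)
cube = np.zeros((1, 2, wavelengths.size))
cube[0, 0, [r, n]] = [0.05, 0.45]              # toy pixel: low red, high NIR
cube[0, 1, [r, n]] = [0.20, 0.25]              # toy pixel: red and NIR similar
index = ndvi(cube, wavelengths)
```

Higher NDVI values generally indicate denser, healthier vegetation, so per-pixel maps like this one can flag areas that may need closer inspection.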

Rethinking signal investigation

A major sector benefiting from enhanced processing is medical imaging. In the case of magnetic resonance imaging (MRI), for example, its use has been revolutionized not only by compressive sensing but also by a rethinking of traditional signal analysis and interpretation, framed as “inverse problems.”

“Traditional techniques are mostly based on signal analysis, whereas new techniques capitalize on synthesis using structural assumptions about the signal,” Guicquero said. “Most image processing problems can now be stated as inverse problems, assuming an acquisition model of hidden variables. Thanks to recent algorithmic advances, those inverse problems can now be solved at the expense of an acceptable increase in algorithm complexity.”

Solving inverse problems has reduced MRI scan times while also reducing motion blur: the system acquires only part of the data that conventional MRI systems commonly acquire, and the image is reconstructed by processing that subset.
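The article does not describe the reconstruction algorithm. As a toy, one-dimensional illustration of the inverse-problem view, assume a real signal made of a few isolated spikes, a scanner that samples only a random half of its Fourier coefficients (the "k-space" data), and recovery by the iterative shrinkage-thresholding algorithm (ISTA), which minimizes 0.5 * ||M F x - y||^2 + lam * ||x||_1, where F is the orthonormal discrete Fourier transform and M keeps only the sampled coefficients. Clinical compressed-sensing MRI works in two or three dimensions, typically enforces sparsity in a wavelet or similar transform and models coil sensitivities; the function name, `lam` and the sizes are illustrative assumptions.

```python
import numpy as np


def ista_partial_fourier(y, mask, lam=0.01, n_iter=2000):
    """Recover a real, sparse signal x from the Fourier coefficients selected by `mask`.

    Minimizes 0.5 * ||M F x - y||^2 + lam * ||x||_1 with ISTA. F is the orthonormal DFT,
    so ||M F|| <= 1 and a step size of 1 is safe.
    """
    x = np.zeros(mask.size)
    for _ in range(n_iter):
        residual = np.zeros(mask.size, dtype=complex)
        residual[mask] = np.fft.fft(x, norm="ortho")[mask] - y
        grad = np.real(np.fft.ifft(residual, norm="ortho"))  # gradient of the data term
        z = x - grad
        x = np.sign(z) * np.maximum(np.abs(z) - lam, 0.0)    # soft thresholding
    return x


rng = np.random.default_rng(0)
n = 128
x_true = np.zeros(n)
x_true[rng.choice(n, size=5, replace=False)] = rng.choice([-1.0, 1.0], size=5)
mask = rng.random(n) < 0.5                      # sample roughly half of k-space
y = np.fft.fft(x_true, norm="ortho")[mask]
x_rec = ista_partial_fourier(y, mask)
# Naive "zero-filled" reconstruction for comparison: unsampled coefficients set to zero
x_zero = np.real(np.fft.ifft(np.where(mask, np.fft.fft(x_true, norm="ortho"), 0), norm="ortho"))
```

The zero-filled estimate attenuates the spikes and spreads aliasing artifacts across the signal, whereas the sparsity-regularized solution should recover them closely, up to a small shrinkage bias set by `lam`.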

This shorter examination time helps physicians scan torsos, especially in pediatrics, removing the need to anesthetize children to limit movements of the lungs and abdomen.

Another sector that stands to benefit is consumer electronics, in particular augmented reality devices. Such systems seem to gain the most from advanced image processing because they rely on an optimized combination of sensing devices, computer vision programs, computer graphics rendering and display devices, all of which involve image processing.

“The image processing community seems to be deeply motivated to be a driving force in the technology race,” Guicquero said. “The introduction of reproducible research allows the scientific community to progress even faster by communicating more efficiently with code sharing. Open source libraries — for example, OpenCV — that are collectively maintained by the community help students, engineers and researchers to reduce the required time for coding new ideas.”