Nondefense applications such as agriculture inspection are placing new demands on optics and image processing software.

JARED TALBOT, EDMUND OPTICS INC. AND RYAN NELSON, SENTERA LLC

For decades, many of the most impactful commercial technologies to hit the market have had their development either started or aided by the military. Among the most notable examples are personal computers, the internet and wireless network communications. One of the newer technologies making this transition into the commercial arena is the unmanned aerial vehicle (UAV). The use of UAVs in combat dates back to 1849, when Austria deployed unmanned air balloons to deliver remotely triggered bombs¹. Many of the earliest UAVs used in battle were assault drones rather than reconnaissance systems, as seen in the radio-controlled warplanes used during World War I. It was not until the mid-1950s, during the Cold War, that the use of drones for reconnaissance really began to develop².

The Sentera AgVault software interface uses QuickTile software and georeference data to compile composite images on site within minutes, allowing immediate viewing of the entire area. Courtesy of Sentera LLC.

While UAV technology is at the forefront of some of the most cutting-edge military applications (for more details, see “Defense Drones Take Sensing to New Heights” in the April 2017 issue of Photonics Spectra), UAVs are now relied on for commercial applications such as infrastructure assessment, agricultural inspection, and land surveillance and mapping.

Minimizing distortion & motion blur

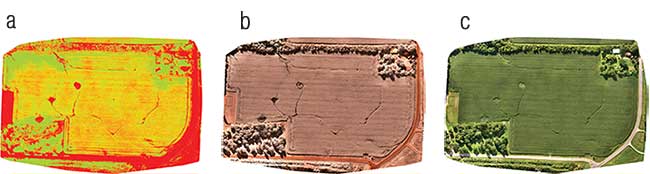

Most mapping and agricultural applications involve a UAV flying over a large area and taking incremental photographs, which are later combined to view the entire area. Many software tools allow users to stitch the images together to view and monitor the status of the entire field, construction site or area of interest. Without the right software, however, these processes can take hours to complete and consume significant processing power. Alternatively, a few software packages can tile the images together on site within minutes to allow immediate viewing of the entire area. Low lens distortion improves the overall appearance of the final images produced by these software packages. With this in mind, imaging lenses with two percent or less geometric distortion across the field of view are ideal for these applications.
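To make the two percent figure concrete, geometric distortion is commonly expressed as the percentage difference between where an image point actually lands and where a distortion-free lens would place it. The sketch below assumes that common definition. The function name and the sample distances are hypothetical and are not taken from any lens datasheet.

```python
def distortion_percent(actual_distance, predicted_distance):
    """Geometric distortion (%) = (AD - PD) / PD * 100.

    actual_distance (AD): measured distance of a point from the image center.
    predicted_distance (PD): distance an ideal, distortion-free lens would give.
    Both values must use the same unit (mm, pixels, ...).
    """
    if predicted_distance == 0:
        raise ValueError("predicted_distance must be nonzero")
    return (actual_distance - predicted_distance) / predicted_distance * 100.0


# Hypothetical corner point: expected 4.00 mm from center, measured at 3.94 mm.
d = distortion_percent(3.94, 4.00)
print(f"distortion: {d:.2f} %")
print("within 2 % target" if abs(d) <= 2.0 else "outside 2 % target")
```

A negative result indicates barrel distortion (points pulled toward the center), and a positive result indicates pincushion distortion.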

For these software tools to work as intended, the images need to easily stitch together to generate 2D mosaic images or 3D point clouds. To simplify the programming required to stitch the images together, the motion blur needs to be minimized, as well as the distortion. The loss in resolution that motion blur introduces can reduce the accuracy of the stitching algorithm and, in turn, limit the accuracy of the final data set obtained. To control motion blur, the aperture setting for the lens must be considered — along with the dynamic range and sensitivity of the sensor — to ensure shorter exposure times. All specifications relating to minimizing motion blur should be considered when optimizing the exposure time for any given application.
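The link between exposure time and motion blur can be estimated with simple geometry. The following is a minimal sketch, assuming a nadir-pointing camera over flat ground and forward motion as the only source of blur (no vibration or rotation). The function names and the flight parameters are hypothetical.

```python
def ground_sample_distance(altitude_m, pixel_size_um, focal_length_mm):
    """Ground footprint of one pixel in meters (nadir view, flat ground)."""
    return altitude_m * (pixel_size_um * 1e-6) / (focal_length_mm * 1e-3)


def motion_blur_pixels(ground_speed_m_s, exposure_s, gsd_m):
    """Number of pixels the scene smears across during one exposure."""
    return ground_speed_m_s * exposure_s / gsd_m


def max_exposure_s(ground_speed_m_s, gsd_m, max_blur_px=1.0):
    """Longest exposure that keeps forward-motion blur under max_blur_px."""
    return max_blur_px * gsd_m / ground_speed_m_s


# Hypothetical flight: 100 m altitude, 3.45 um pixels, 8 mm lens, 15 m/s.
gsd = ground_sample_distance(100.0, 3.45, 8.0)
blur = motion_blur_pixels(15.0, 1.0 / 1000.0, gsd)
limit = max_exposure_s(15.0, gsd)
print(f"GSD: {gsd * 100:.2f} cm/pixel")
print(f"blur at 1/1000 s: {blur:.2f} pixels")
print(f"exposure limit for 1 pixel of blur: {limit * 1000:.2f} ms")
```

If the exposure limit is shorter than the sensor can support at the available light level, the remaining options are a faster aperture, a more sensitive sensor, or a slower or higher flight.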

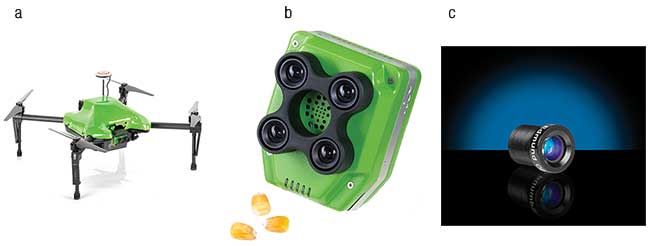

The Sentera Omni AG (a). The Sentera Quad Sensor (b) is an example of the type of multisensor imager used by agricultural researchers, allowing data for four different OVIs (optical vegetation indices) to be captured in a single flight. The Edmund Optics 8-mm NIR Harsh Environment μ-Video Lens (c). Courtesy of Sentera LLC.

Stability in the aperture setting itself is also crucial to UAV performance; therefore, fixed aperture lenses are preferable to traditional irises for UAV applications. Depending on how sensitive the system and image-processing algorithm are to fluctuations in light intensity, even small variations in aperture size from a variable iris can be problematic. Additionally, a lens with a fixed aperture offers the added benefit of reducing the size and weight of the overall lens assembly. In UAV applications, where payload weight and geometric dimensions determine how aerodynamic the system is and how long it can stay in the air, the weight and size of the imaging lens take on greater importance. Particularly when a camera with a large sensor format is used, the size and weight of the imaging lens can become untenable, and the ability to drop subcomponents from a fixed aperture lens can be invaluable in meeting these requirements. Simplicity is paramount in UAVs; anything that makes the equipment smaller, lighter and less reliant on moving components is preferred. A fixed aperture lens design addresses all of these requirements.

Spectral signatures tell all

In addition to aperture stability, the coatings and glass types chosen can impact the light throughput of the system. Specifically for agriculture applications, the near-infrared (NIR) region is of particular interest. Lenses with high transmission in the NIR wavelength range are required³ because in many applications, the Normalized Difference Vegetation Index (NDVI) must be quantified. The NDVI is a method of determining the density of green on a patch of land by comparing the amount of visible and NIR light reflected by the plants within it⁴.
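The comparison described above is conventionally computed as NDVI = (NIR - VIS) / (NIR + VIS), which yields a value between -1 and 1. The sketch below applies that standard formula per pixel. The function names, the handling of zero-signal pixels and the sample reflectance values are assumptions for illustration only.

```python
def ndvi(nir, vis):
    """NDVI for one pixel from NIR and visible reflectance (or intensity)."""
    total = nir + vis
    if total == 0:
        return 0.0  # assumption: report no-signal pixels as 0
    return (nir - vis) / total


def ndvi_image(nir_band, vis_band):
    """Apply ndvi() element-wise to two equally sized 2D lists."""
    return [
        [ndvi(n, v) for n, v in zip(nir_row, vis_row)]
        for nir_row, vis_row in zip(nir_band, vis_band)
    ]


# Hypothetical reflectances: dense canopy vs. sparse or stressed vegetation.
print(f"dense canopy:    {ndvi(0.50, 0.08):.2f}")
print(f"stressed plants: {ndvi(0.40, 0.30):.2f}")

# Hypothetical 2 x 2 pair of co-registered bands.
nir_band = [[0.50, 0.40], [0.30, 0.10]]
vis_band = [[0.08, 0.30], [0.30, 0.20]]
for row in ndvi_image(nir_band, vis_band):
    print([round(value, 2) for value in row])
```

Because the index is a ratio of the two bands, any transmission loss or spectral leakage that affects one channel more than the other shifts the result directly, which is why lens transmission in the NIR matters.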

Comparison of an NDVI-weighted (a), a near-infrared (b), and a visible (c) image of a farm field captured with the Sentera Double 4K Sensor. Courtesy of Sentera LLC.

When taking a true NDVI measurement, raw data is collected in both the visible and NIR spectrums. In some circumstances, different imaging lenses with their own separate imagers can be used so that each channel can be optimized for its particular spectral region. To ensure this, the coatings of each imaging lens for each spectral channel are optimized for the specified wavelength range to increase the transmission. Shortpass and longpass filters can also be incorporated to create an exact spectral separation for each channel. In some cases, different lenses designed for the best resolution in each wavelength range are used. Conversely, there are some companies that offer NDVI systems that only capture a synthetic NDVI measurement. Instead of collecting raw data in both the visible and NIR, these systems estimate the nonvisible portion of the spectrum using the visible data. But this does not guarantee a true NDVI.

Sentera DJI Phantom 4 Pro performing a crop inspection. Courtesy of Sentera LLC.

Narrow bandpass filters with high transmission in the passband are also often used in multispectral imaging. In agriculture, the optical vegetation index (OVI) is the vegetation’s spectral signature used for identifying specific types of weeds, pests and crop deficiencies. Most of the time, researchers need multiple cameras with unique spectral bands for the various OVIs. Being able to pack as many imagers and spectral filters as possible into one system is advantageous because it increases the number of OVIs that can be measured in a single flight. Multisensor platforms allow researchers to easily accomplish this, as well as customize their systems to cover a variety of spectral wavebands. Ideally, custom fabrication for each configuration should be avoided, so working with companies with large catalog offerings of bandpass, longpass and shortpass filters is ideal. This allows for a wide range of spectral options without a huge investment in custom components.

Temps affect optical performance

As previously mentioned, glass choices in lens assembly designs can impact transmission. In some cases, however, the thermal properties of the glass should also be considered. In applications such as land surveillance, where a constant altitude above ground level needs to be maintained, the altitude with respect to sea level can vary⁵. When a system is required to operate over a wide range of altitudes, it can experience large swings in temperature. Maintaining the optical performance of the system across a wide temperature range can be very difficult. The fit of the components in the lens assembly can itself be problematic. Tight fits can offer good stability at room temperature but can induce stress on the lens elements as the temperature decreases, because the metal contracts more than the glass components. The stress can cause polarization effects as well as chips or fractures in the glass. Conversely, if the temperature rises above room temperature, the fit of the components can loosen due to the difference in thermal expansion between the metal and glass, decreasing the stability of the lens assembly.
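The size of this effect can be estimated with the linear expansion relation, change in length = CTE x length x temperature change. The following sketch is a rough illustration only. It assumes the standard-atmosphere lapse rate of about 6.5 °C per kilometer, and it uses typical handbook expansion coefficients for aluminum and a common crown glass as placeholder values. The function names and the lens diameter are hypothetical.

```python
ISA_LAPSE_RATE_C_PER_M = 0.0065  # standard-atmosphere estimate, lower atmosphere

CTE_ALUMINUM = 23.6e-6     # 1/degC, typical handbook value (assumption)
CTE_CROWN_GLASS = 7.1e-6   # 1/degC, typical handbook value (assumption)


def temperature_drop_c(altitude_gain_m):
    """Approximate air temperature drop for a given climb in altitude."""
    return ISA_LAPSE_RATE_C_PER_M * altitude_gain_m


def clearance_change_um(diameter_mm, cte_housing, cte_glass, delta_t_c):
    """Change in diametral clearance between housing bore and lens (um).

    Negative: the housing shrinks more than the glass and squeezes the lens.
    Positive: the housing grows more than the glass and the fit loosens.
    """
    return diameter_mm * 1e3 * (cte_housing - cte_glass) * delta_t_c


# Hypothetical 25 mm lens element in an aluminum barrel.
print(f"temperature drop over a 3000 m climb: {temperature_drop_c(3000.0):.1f} degC")
for delta_t in (-40.0, 40.0):
    change = clearance_change_um(25.0, CTE_ALUMINUM, CTE_CROWN_GLASS, delta_t)
    print(f"delta T = {delta_t:+.0f} degC -> clearance change {change:+.1f} um")
```

A clearance change of this order is comparable to typical mounting tolerances, which is why a fit that is stable at room temperature can either stress the glass or loosen at the temperature extremes.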

In addition to the effect temperature has on the mechanical dimensions of components, the index of refraction of the glasses in the lens assembly also varies with temperature. This leads to changes in the optical performance of the imaging lens. One of the primary parameters affected is the focal length of the lens, as it experiences what is called a thermal focal shift — the variation of best focus with temperature. One way to correct for this is via active athermalization where the system will compensate by mechanically adjusting the focus of the lens as temperature varies. Thermal focal shift can also be overcome through passive athermalization. If the required operating temperature range is known up front when considering a custom lens design, the imaging lens can be designed so that the thermal focal shift over that range is corrected for specific criteria. Here, the glass types and metal materials incorporated into the design are chosen and optimized to passively compensate for the thermal focal shift.
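For a first-order feel for thermal focal shift, a single thin lens in air can be modeled as changing focal length by f x (CTE of the glass - (dn/dT) / (n - 1)) x temperature change. The sketch below uses that thin-lens approximation and assumes the housing that sets the lens-to-sensor spacing is about one focal length long. Real multi-element designs need a full optical model. The function names and material values are illustrative assumptions, not datasheet figures.

```python
def focal_shift_mm(focal_length_mm, cte_glass, dn_dt, n, delta_t_c):
    """Thin-lens thermal focal shift: f * (cte_glass - dn_dt / (n - 1)) * dT."""
    return focal_length_mm * (cte_glass - dn_dt / (n - 1.0)) * delta_t_c


def defocus_mm(focal_length_mm, cte_glass, dn_dt, n, cte_housing, delta_t_c):
    """Focal shift minus the change in lens-to-sensor spacing.

    Assumes the spacing equals the focal length and follows the housing CTE.
    A result of zero means the design is passively athermal in this model.
    """
    lens_shift = focal_shift_mm(focal_length_mm, cte_glass, dn_dt, n, delta_t_c)
    housing_shift = focal_length_mm * cte_housing * delta_t_c
    return lens_shift - housing_shift


# Illustrative values (assumptions): crown-like glass, aluminum housing.
f_mm, cte_glass, dn_dt, n = 8.0, 7.1e-6, 2.4e-6, 1.517
cte_housing = 23.6e-6
delta_t = -40.0

shift_um = focal_shift_mm(f_mm, cte_glass, dn_dt, n, delta_t) * 1e3
defocus_um = defocus_mm(f_mm, cte_glass, dn_dt, n, cte_housing, delta_t) * 1e3
print(f"lens focal shift: {shift_um:+.2f} um")
print(f"net defocus with housing: {defocus_um:+.2f} um")

# Housing CTE that would cancel the focal shift in this simplified model.
athermal_cte = cte_glass - dn_dt / (n - 1.0)
print(f"athermal housing CTE: {athermal_cte * 1e6:.2f} ppm/degC")
```

In this simplified picture, passive athermalization amounts to choosing glass and housing materials so the two terms cancel over the operating range, while active athermalization measures temperature and refocuses to remove the residual.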

Lastly, for systems where multiple imagers are used simultaneously, the pointing stability of the lenses can be critical⁶. Each imager has a slightly different point of view because the imagers are positioned side by side rather than superimposed. As a result, the accuracy with which the data from each imager can be registered is limited by how well the imagers are calibrated with respect to one another and how well that calibration is maintained. The stability of the calibration during use is therefore critical to maintaining the resolution of the data. In many land surveying and 3D mapping applications, geographical reference points within the images are primarily used to stitch the images together. However, if the system is able to maintain calibration of the central pixel offset for all the imagers, the resolution of the data from the individual images can be better maintained. This is essential for extracting depth information via triangulation of the images captured on a set of imagers.
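The sensitivity of triangulation to calibration drift can be illustrated with the standard pinhole relation for two parallel imagers, disparity = focal length x baseline / distance. The following sketch assumes ideal, distortion-free, parallel cameras. The function names, the 50 mm baseline and the other parameters are hypothetical.

```python
def disparity_px(focal_length_mm, baseline_mm, distance_m, pixel_size_um):
    """Pixel offset of the same ground point between two parallel imagers."""
    f_m = focal_length_mm * 1e-3
    b_m = baseline_mm * 1e-3
    p_m = pixel_size_um * 1e-6
    return f_m * b_m / (distance_m * p_m)


def depth_m(focal_length_mm, baseline_mm, disparity, pixel_size_um):
    """Distance recovered from a measured disparity (inverse of disparity_px)."""
    f_m = focal_length_mm * 1e-3
    b_m = baseline_mm * 1e-3
    p_m = pixel_size_um * 1e-6
    return f_m * b_m / (disparity * p_m)


def depth_error_m(focal_length_mm, baseline_mm, distance_m, pixel_size_um,
                  offset_error_px):
    """First-order depth error from an uncorrected pixel-offset error."""
    f_m = focal_length_mm * 1e-3
    b_m = baseline_mm * 1e-3
    p_m = pixel_size_um * 1e-6
    return distance_m ** 2 * p_m * offset_error_px / (f_m * b_m)


# Hypothetical rig: 8 mm lenses, 50 mm apart, 3.45 um pixels, 100 m range.
d = disparity_px(8.0, 50.0, 100.0, 3.45)
err = depth_error_m(8.0, 50.0, 100.0, 3.45, 0.1)
print(f"disparity at 100 m: {d:.2f} pixels")
print(f"depth error for a 0.1 pixel calibration drift: {err:.2f} m")
```

Because the depth error grows with the square of the distance, even a sub-pixel shift in the relative pointing of the lenses can dominate the error budget at typical flight altitudes when the baseline is short.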

In practice, most applications will not require the system to be optimized for each and every optical and software parameter mentioned. Often, only one or two of the parameters must be maximized in terms of performance to meet the system requirements, without over-designing the system and increasing costs unnecessarily. The key is to make sure each parameter is thoroughly examined during development to determine which are the most critical and need to be weighted more heavily in the design. This ensures the end product meets all the requirements and does so as economically as possible. As new application spaces continue to emerge in these fields with increased performance and complexity required, the need for experts able to navigate through these parameters will only grow in the future.

Meet the authors

Jared Talbot is a project manager at Edmund Optics Inc., having previously been a product support engineer and solutions engineer. He is completing his master’s degree in optical sciences from the University of Arizona; email: [email protected].

Ryan Nelson is the chief mechanical engineer for Sentera LLC, responsible for the design and manufacturing of advanced precision agriculture sensor technology at Sentera. Ryan holds a bachelor’s degree in mechanical engineering from Iowa State University; email: [email protected]

References

1. C. Leu (2015). The secret history of World War II-era Drones. Wired. https://www.wired.com/2015/12/the-secret-history-of-world-war-ii-era-drones/.

2. United States Air Force (2015). Radioplane/Northrop MQM-57 Falconer. http://www.nationalmuseum.af.mil/Visit/Museum-Exhibits/Fact-Sheets/Display/Article/195784/radioplanenorthrop-mqm-57-falconer/.

3. Sentera LLC (2017). Sentera agriculture solutions. https://sentera.com/agriculture-solutions/.

4. P. Przyborski (2017). Measuring vegetation (NDVI & EVI). https://earthobservatory.nasa.gov/Features/MeasuringVegetation/measuring_vegetation_2.php.

5. SPH Engineering (2017). UgCS photogrammetry tool for UAV land surveying. https://www.ugcs.com/files/photogrammetry_tool/UgCS-for_Land_surveying-with-photogrammetry.pdf.

6. Y. Yang et al. (2016). Stable imaging and accuracy issues of low-altitude unmanned aerial vehicle photogrammetry systems. Remote Sens, Vol. 8, p. 316.