Patrick Myles, Teledyne Dalsa

Imaging technology is a real game changer for Dallas Cowboys fans and refs at AT&T Stadium.

Figuring out which team came up with the football during a postfumble player pileup has gotten a whole lot easier – at least, it has for Dallas Cowboys home games. At AT&T Stadium in Arlington, Texas, referees and fans alike can review the action from any angle with complete 3-D freedom, thanks to the freeD (free-dimensional video) sports replay system from Replay Technologies.

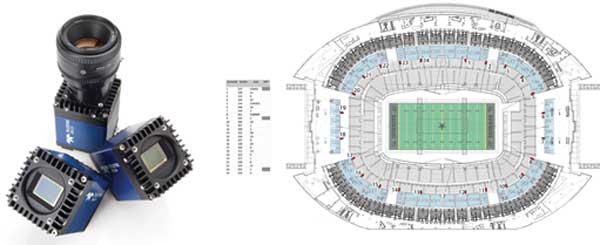

The system produces real-time scenes using proprietary algorithms that create photorealistic-quality model databases and rendered output. The system leverages two dozen Falcon2 12-MP CMOS color cameras (with rates up to 58 fps) connected to Xcelera frame grabbers (two in each of 12 computers).

The freeD replay system from Replay Technologies brings football fans up close and personal with real-time replays at Dallas Cowboys games at AT&T Stadium in Arlington, Texas.

“We are crossing several different technological fields to create a new type of media with infinite imaging possibilities,” said Matteo Shapira, co-founder and chief technical and creative officer of Replay Technologies. The Israeli company, which also has operations in the US, combines visual-effects technology with algorithms rooted in classic image processing and computer vision.

“Using advanced computer vision systems, image processing and experience from the automated avionics industry, our system can create images with sharp, brilliant colors – all the while withstanding different physical conditions, such as moving clouds or severe camera shakes,” Shapira said.

The three R’s of freeD

The key to freeD’s lifelike imaging is the way in which the technology acquires data and translates it back into real-world information. Optical signals are captured synchronously from the 24 cameras, then transferred via fiber optics to Camera Link and into the frame grabbers. The configuration itself isn’t extraordinary, but unusually demanding requirements for speed, illumination, resolution and size (the stadium screen is 159 × 71 ft) made this application anything but ordinary. Having one of the world’s largest high-definition screens as a display medium required scrutinizing every aspect of the images for photorealistic likeness and vibrant color.

The freeD stadium setup features 24 Teledyne Dalsa Falcon2 cameras. Photo courtesy of Teledyne Dalsa.

Data recording, reconstruction and rendering are the three vital steps. During development, the cameras were pushed to the maximum to achieve a quality equal to that of current broadcasting cameras. The Falcon2 cameras allowed true 10-bit imaging to drive the process, from configuring the correct filtering parameters to developing a unique method called pre-acquisition LUT (look-up table).

Dvir Besserglick, chief engineer of Replay Technologies, led the two teams’ collaboration on enabling the 10-bit sensor to conform to an 8-bit pipeline. This was accomplished by allowing the cameras to shoot 10-bit raw footage, expanding the histogram right after the sampling stage and outputting the final 8-bit images with a more robust histogram range.
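The idea of stretching the histogram before reducing bit depth can be illustrated with a short sketch. This is not Replay's or Teledyne Dalsa's implementation; the function names, the linear stretch and the sample values are all assumptions chosen for illustration. It builds a look-up table that maps the occupied part of the 10-bit range onto the full 8-bit range, and compares the result with simply dropping the two lowest bits.

```python
def build_stretch_lut(low, high, in_bits=10, out_bits=8):
    """Build a look-up table mapping in_bits codes to out_bits codes.

    Codes at or below `low` map to 0, codes at or above `high` map to the
    maximum output code, and codes in between are stretched linearly.
    (Hypothetical helper; a linear stretch is an assumption.)
    """
    if not 0 <= low < high < (1 << in_bits):
        raise ValueError("need 0 <= low < high < 2**in_bits")
    out_max = (1 << out_bits) - 1
    span = high - low
    lut = []
    for code in range(1 << in_bits):
        clamped = min(max(code, low), high)
        # Integer arithmetic with round-half-up keeps the table deterministic.
        lut.append(((clamped - low) * out_max + span // 2) // span)
    return lut


def apply_lut(pixels, lut):
    """Map every raw pixel code through the table."""
    return [lut[p] for p in pixels]


# Made-up raw 10-bit samples that occupy only part of the 0..1023 range.
raw = [200, 264, 328, 456, 584, 712]
lut = build_stretch_lut(low=200, high=712)
stretched = apply_lut(raw, lut)
truncated = [p >> 2 for p in raw]  # naive 10-to-8-bit conversion for comparison
```

With these sample values, plain truncation leaves the 8-bit output spanning only codes 50 to 178, while the stretched output spans the full 0 to 255 range, which is the "more robust histogram range" the paragraph above describes.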

Recording: To produce photorealistic 3-D images, the cameras must, of course, provide high-quality images. To find cameras that were up to the task – or that could be brought up to the task – Shapira worked with Yvon Bouchard, Asia-Pacific technology director of Teledyne Dalsa. In the freeD system, the 12 megapixels are cropped down to 9.3, or the equivalent of 4K technology, to provide the horizontal resolution required to capture the entire field in full color. As a result, the amount of data processed increased sixfold.
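To get a feel for the data volumes involved, the figures quoted in this article can be combined into a rough throughput estimate. The sketch below is back-of-the-envelope arithmetic only: it assumes the cameras run at their 58-fps maximum, that each pixel is delivered as one 8-bit byte, and that each frame grabber serves one camera. None of these operating details is confirmed here, and the estimate does not attempt to reproduce the sixfold figure.

```python
CAMERAS = 24              # from the article
PIXELS_PER_FRAME = 9.3e6  # cropped frame size, from the article
FRAMES_PER_SECOND = 58    # camera maximum per the article; assumed as the operating rate
BYTES_PER_PIXEL = 1       # assumption: 8-bit raw output, one byte per pixel
CAMERAS_PER_COMPUTER = 2  # assumption: one camera per frame grabber, two grabbers per computer


def data_rate_gb_per_s(cameras):
    """Raw pixel throughput in gigabytes per second (1 GB = 1e9 bytes)."""
    return cameras * PIXELS_PER_FRAME * FRAMES_PER_SECOND * BYTES_PER_PIXEL / 1e9


per_camera = data_rate_gb_per_s(1)
per_computer = data_rate_gb_per_s(CAMERAS_PER_COMPUTER)
whole_system = data_rate_gb_per_s(CAMERAS)
print(f"{per_camera:.2f} GB/s per camera, "
      f"{per_computer:.2f} GB/s per computer, "
      f"{whole_system:.2f} GB/s in total")
```

Under these assumptions, each camera produces roughly 0.54 GB/s and the 24 cameras together about 12.9 GB/s of raw pixel data; a lower operating frame rate would scale the numbers down proportionally.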

The technology enables broadcast-quality, high-definition replays in real time. Photo courtesy of Teledyne Dalsa.

External illumination was another challenge. All 24 cameras are synced to shoot the exact same play at the same time. To ensure that images from each camera have the same light intensity, the exposure controls must adjust dynamically to external illumination while recording is in process. Because this capability is not innate to the cameras, Bouchard performed a firmware upgrade that enables the cameras to adjust images continually as a background task while acquiring images continually as a foreground task. “We pushed these already powerful cameras to the edge so that Replay can obtain the raw footage needed to generate 3-D imagery.”
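The general principle of adjusting exposure while frames keep arriving can be sketched as a simple feedback loop. The code below is not the camera firmware; it is a minimal proportional controller with hypothetical names, gains and limits, run against a toy sensor model in which mean brightness is proportional to scene brightness times exposure time.

```python
def next_exposure(exposure_us, frame_mean, target_mean=128.0,
                  gain=0.5, min_us=20.0, max_us=10000.0):
    """Return an exposure time that nudges the frame mean toward the target.

    All parameter values are illustrative assumptions.
    """
    if frame_mean <= 0:
        return max_us  # completely dark frame: open up fully
    # For a linear sensor, mean brightness scales roughly with exposure time,
    # so target / frame_mean is the ideal correction ratio.
    ratio = target_mean / frame_mean
    # Apply only a fraction of the correction per frame to avoid visible jumps.
    damped = 1.0 + gain * (ratio - 1.0)
    return min(max(exposure_us * damped, min_us), max_us)


def simulate(scene_brightness, exposure_us=1000.0, frames=20):
    """Toy loop: the sensor mean is scene_brightness * exposure, clipped at 255."""
    for _ in range(frames):
        frame_mean = min(255.0, scene_brightness * exposure_us)
        exposure_us = next_exposure(exposure_us, frame_mean)
    return exposure_us, min(255.0, scene_brightness * exposure_us)


# A dim scene and a bright scene both settle near the same target mean.
dim_exposure, dim_mean = simulate(scene_brightness=0.064)
bright_exposure, bright_mean = simulate(scene_brightness=0.256)
```

Driving every camera toward the same target mean is one way images from different viewpoints could end up with matching light intensity; a real system would also need to coordinate the adjustment across all 24 synchronized cameras.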

Replay Technologies had previously developed strong calibration techniques and algorithms to ensure that camera positions and scene structures remain accurate to the subpixel, even if the scenery completely changes due to adverse weather conditions or the shaking of stadium concrete after exciting plays.

Reconstruction: Replay’s proprietary algorithm extrapolates the images when moving from one camera to the next to create 3-D pixels that represent the fine details of a scene. “In fine-tuning the ability to build 2-D raw images into 3-D data from multiple images, extensive work went into resolving issues of occlusion – areas that are either too dark or too light,” Bouchard said. One solution was to set up a processing path for gamma functions that manipulate the images to make darker objects brighter and more visually appealing.

“In straight-ahead machine-vision applications,” he said, “images are rarely manipulated in a nonlinear fashion as they are in visualization applications like this one, where the tiniest nuances play such a large role in the visual experience.”
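A gamma function of the kind mentioned above is a standard nonlinear mapping, and a generic version is easy to sketch. The table below is the textbook power-law curve, not Replay's actual processing path; the function name and the gamma value of 2.2 are assumptions made for illustration.

```python
def build_gamma_lut(gamma, bits=8):
    """Look-up table for out = max * (in / max) ** (1 / gamma).

    With gamma > 1 the curve lifts dark codes more than bright ones.
    """
    if gamma <= 0:
        raise ValueError("gamma must be positive")
    max_code = (1 << bits) - 1
    return [round(max_code * (code / max_code) ** (1.0 / gamma))
            for code in range(max_code + 1)]


def apply_gamma(pixels, lut):
    """Map each pixel code through the gamma table."""
    return [lut[p] for p in pixels]


gamma_lut = build_gamma_lut(2.2)          # 2.2 is an illustrative choice
shadows = apply_gamma([16, 32, 64], gamma_lut)
```

Black and white stay fixed at 0 and 255, while dark codes such as 16, 32 and 64 are raised well above their input values, which is the sense in which such a curve makes darker objects brighter.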

The result of the reconstruction phase is a freeD database composed of 3-D points in space that allow for the next phase, which is rendering.

Rendering: Once captured and reconstructed data has been stored as a freeD database, users can employ it to produce any viewing angle. Replay uses a proprietary rendering and shading engine that provides a real-time, photorealistic “synthetic” view: essentially, any given camera perspective, lens type and optical parameter from within the reconstruction grid, that is, the area covered by the camera sensors. This rendering allows for a true representation of physical phenomena such as highlights, reflections and light scattering, all of which are derived from real-world camera data (there is no human manipulation of the rendering process).

The freeD technique works by sampling each of the freeD points, known as voxels, to create a novel view with photorealistic material qualities. Based completely on a GPU (graphics processing unit), the method allows complex scenes to be rendered in real time. This capability will enable the evolution of the product into the consumer arena, including applications in mobile devices and smart televisions.
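Replay's renderer is proprietary and GPU-based, so the following is only a generic illustration of how colored 3-D points can be turned into a view from a virtual camera. It assumes a simple pinhole camera at the origin looking along the positive z axis, with points already expressed in that camera's coordinates; the function name, focal length and sample points are all hypothetical.

```python
import math


def render_points(voxels, focal_px, width, height):
    """Project colored 3-D points into a pinhole camera and keep the nearest per pixel.

    voxels: iterable of (x, y, z, color) in camera coordinates.
    Returns a dict {(u, v): color} covering only the pixels that were hit.
    """
    depth = {}
    image = {}
    cx, cy = width / 2.0, height / 2.0
    for x, y, z, color in voxels:
        if z <= 0:
            continue  # point is behind the camera
        # Perspective projection: image offset shrinks with distance z.
        u = math.floor(cx + focal_px * x / z)
        v = math.floor(cy + focal_px * y / z)
        if not (0 <= u < width and 0 <= v < height):
            continue  # point falls outside the frame
        # Depth test: a nearer point hides a farther one at the same pixel.
        if (u, v) not in depth or z < depth[(u, v)]:
            depth[(u, v)] = z
            image[(u, v)] = color
    return image


points = [
    (0.0, 0.0, 2.0, "near"),
    (0.0, 0.0, 5.0, "far"),      # hidden behind "near"
    (1.0, 0.0, 1.0, "right"),
    (0.0, 0.0, -1.0, "behind"),  # discarded
]
view = render_points(points, focal_px=100.0, width=640, height=480)
```

Choosing a different viewing angle amounts to transforming the points into the new camera's coordinate frame before projecting them. Because each point is handled independently, this kind of work maps naturally onto a GPU.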

Replay’s CGI (computer-generated imagery) team, headed by Diego Prilusky, has devised a system called Camera-Engine that allows the director to create a smooth, unlimited camera travel path in the physical world within seconds. The team already is working on a consumer-based interactive version of the offering, which will allow sports fans to roam around the action with great ease.

With only three months to get the freeD football application operational in time for a September 2013 Dallas Cowboys vs. New York Giants game at the stadium, the firmware had to be brought up to the point where data was usable; the cameras also had to be synced properly, and images had to be guaranteed to be of broadcast quality – and fast.

The system had to be developed and installed in about three months to be ready for a September debut at a Cowboys game. Photo courtesy of Teledyne Dalsa.

NBC and the Cowboys helped in making sure the technology was installed and ready for the premiere “Sunday Night Football” game, Shapira noted.

Long-term vision

“From a technology standpoint, an application like this could have been cobbled together 10 years ago; however, the costs would have been exorbitant,” Bouchard said. “Advances in machine-vision technology, as well as key enabling technologies during this time, made a powerful, yet affordable, solution possible.”

It’s those ongoing technological advances that Replay Technologies is counting on – and already harnessing – to help evolve freeD into something that sounds like it’s right out of “Star Trek.”

Currently, freeD technology can create static replays of specific areas or moments of an activity; for example, the moment that a touchdown occurs. When such an event happens, the freeD servers use a complete database of raw files to process the exact moment into a freeD database.

Shapira anticipates that, by the end of 2014, the technology will have moved from being a “still stutter” to a fully dynamic offering. As a result, besides giving producers and directors a way to roam freely around a fixed moment in time, the technology eventually will allow them to create a completely unconstrained camera move, as if taken from the world of video games.

“By next year, we plan to give consumers the ability to move around a sequence of video without being bounded by one moment in time,” Shapira said. “About three years down the road, we aim to make the technology completely streaming and dynamic, allowing not only an entire production to run within a virtual environment, but also to allow the home consumer to choose how to watch the media.

“Imagine how different it will be to experience a football game when you can not only watch it, but when production teams and viewers alike can freely roam around a captured scene using a computer or tablet and view a particular scene from infinite angles.”

Although future versions of the freeD technology promise to be true game changers in how viewers experience sporting events, upcoming iterations of freeD, in tandem with enabling technologies such as holographic displays and Google 3-D glasses, also will open up applications that once were confined to science fiction shows and video game screens.

By connecting Google 3-D glasses, for example, to a continually streaming freeD database, users will experience a “holodeck”-like reality without something actually occurring at that specific place and time.

Or think of a Skype-like experience in which, instead of seeing each other through computer panels, people would perceive one another as being together in the same space, in a combined reality.

“We believe that, ultimately, this will be another social breakthrough in that you will be able to fully interact with other people while not sharing the same physical space,” Shapira said. As an example, he cited a yoga master class with a yogi located in India observing the form and giving notes to a student practicing in Los Angeles.

“Ultimately,” Shapira said, “our vision is to change the concept of the static and moving image from something which is two-dimensional to a true three-dimensional representation.”

Meet the author

Patrick Myles is vice president of business development at Teledyne Dalsa Inc. in Waterloo, Ontario, Canada.