In its U.S.-based refineries, multinational energy company Chevron processes almost 1 million barrels of transportation fuels per day (pre-COVID), producing gasoline, diesel, propane and butane, fuel oils, coke, and certain chemicals for industrial use.

Because the company manufactures a range of fuels, it accordingly operates plants tailored for various types of feedstock — including both light and heavy crudes, which are then converted to jet fuels, coke, and gasoline. Its refining or manufacturing plants, where Chevron converts incoming crude oil to fuels, dot the North American continent, functioning in downstream capacities — and teaming with midstream and upstream — to maximize its value chain.


When production involves the processing of about a million barrels of transportation fuel each day, any hiccup could become a costly imperfection. A malfunctioning piece of equipment, such as an overheating panel, could knock a compressor or a pump offline, which could kick-start a domino effect of potential problems.

To combat such a blip, and to achieve the levels of scale and functionality that the company must maintain to meet its production goals, Chevron institutes detailed protocols aimed at maximizing efficiency and safety.

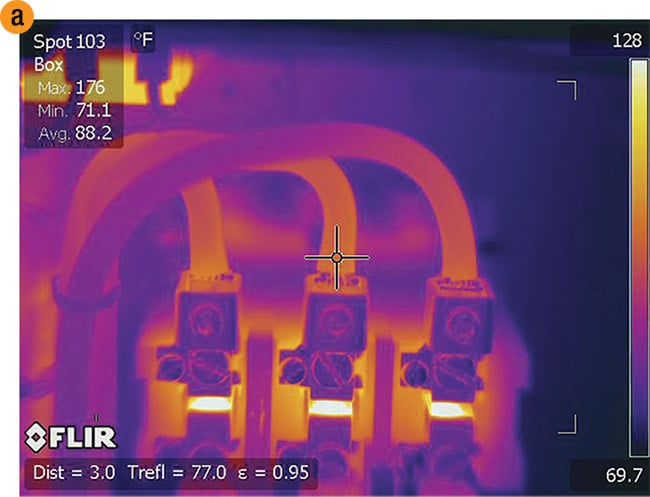

In the refining and manufacturing segment of its operation, one of the safety mechanisms involves electrical panels. The company uses FLIR cameras to capture the point at which a panel hooks up to three hose-like cables, which power essential pumps and compressors found in every refining and manufacturing facility.

The thermographic cameras pair with a custom-trained vision model using Microsoft Cognitive Services. After a set of images is obtained, it is processed using the computer vision AI model on Azure. Each image contains data and metadata that indicate potential irregularities in the temperatures of connection points. The system then uploads images to Azure storage (Blob Storage).

The custom AI model is trained to recognize optimal connections (“good” data) and, conversely, suboptimal conditions (“bad” data). If connection-point temperatures fall outside the expected range or exceed a 7° threshold, an alert is triggered, and a mechanic, as well as a person certified in thermographic imaging, can be dispatched. An image of a potentially faulty piece of equipment prompts the system to send a corresponding email, containing information about temperature differentials, to a human analyst.
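The alerting rule described above can be sketched in a few lines. This is a minimal illustration, not Chevron's implementation: it assumes the 7° threshold is compared against the spread between the hottest and coolest of a panel's three connection points, and names such as `ConnectionReading`, `needs_review`, and `alert_body` are hypothetical.

```python
from dataclasses import dataclass
from typing import Tuple

# Assumption: the 7-degree threshold is compared against the spread between
# the hottest and coolest of a panel's three connection points.
THRESHOLD_DEG = 7.0


@dataclass
class ConnectionReading:
    """Hypothetical record for one thermographic image of a panel."""
    panel_id: str
    temps: Tuple[float, float, float]  # one value per cable connection


def temperature_differential(reading: ConnectionReading) -> float:
    """Return the spread between the hottest and coolest connection."""
    return max(reading.temps) - min(reading.temps)


def needs_review(reading: ConnectionReading, threshold: float = THRESHOLD_DEG) -> bool:
    """Return True when the differential exceeds the threshold."""
    return temperature_differential(reading) > threshold


def alert_body(reading: ConnectionReading) -> str:
    """Build the text of the email sent to the human analyst."""
    diff = temperature_differential(reading)
    return (
        f"Panel {reading.panel_id}: differential {diff:.1f} deg "
        f"exceeds {THRESHOLD_DEG:.1f} deg"
    )
```

A reading that `needs_review` flags would be passed to `alert_body`, and the resulting text would go to the analyst rather than directly to a technician, matching the human-in-the-loop flow the article describes.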

Based on the pixelation of each image, compared with the images on which the AI model is trained and with images of all other connection points, an analyst then makes a data-driven determination on whether to intervene and how much time can be spared before doing so. With Blob Storage, the analyst also has near-immediate access to the images.

Chevron’s larger manufacturing facilities rely on upward of 100 panels to power machinery. A single plant may have more than 300 connection points. This means that Chevron obtains hundreds of unique images to ensure the data-delivery process is consistent from connection to connection, panel to panel, and facility to facility. The step is performed manually to feed the model with optimal data and allow it to be trained to recognize and diagnose abnormalities, and to determine to what extent they may pose a safety concern.

Though a manual component remains, the system represents a digital shift from an all-manual panel-monitoring method.

Thermographic analysis

Chevron uses FLIR T1020 thermal cameras for the application. The cameras feature a focal plane array detector and preset capabilities that allow users to select and combine measurements from any number of spots, boxes, or other profile indicators. The T1020 model detector delivers a spectral range of 7.5 to 14 μm and produces full-color infrared images with an IR resolution of 1024 × 768.

This resolution, paired with FLIR’s UltraMax image enhancement technology, delivers a total of 3.1 MP.
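The 3.1 MP figure follows from simple arithmetic on the detector resolution. The short check below assumes, based on FLIR's general description of UltraMax, that the enhanced image has four times as many pixels as the native frame; the factor is an assumption here, not something the article states.

```python
# IR resolution of the FLIR T1020 detector, as stated in the text.
WIDTH, HEIGHT = 1024, 768

# Assumption: UltraMax output has four times as many pixels as the native frame.
ULTRAMAX_FACTOR = 4

native_pixels = WIDTH * HEIGHT                      # pixels in one native frame
enhanced_pixels = native_pixels * ULTRAMAX_FACTOR   # pixels after enhancement
enhanced_megapixels = enhanced_pixels / 1_000_000

print(f"{enhanced_megapixels:.1f} MP")  # prints "3.1 MP"
```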

Thermographic images of the three-connector architecture located in Chevron’s refining and manufacturing facilities (a, b, c). Pixelation of the obtained images, compared with images on which the AI model is trained, informs analysts of connection-point temperatures and any abnormalities.

Numerical values shown at the top left of (a), (b), and (c) correspond to the pixel range contained anywhere in the scene of each image. The values on the right of each image provide the model with the data that it processes to make determinations about connection-point temperatures. Examples (b) and (c) show a pixel range that would result in an email being sent to an analyst to trigger their further review. Courtesy of Chevron.

The visual information from the thermographic images comes in the form of data and metadata via the solution’s custom object-detection capability and optical character recognition (OCR) on Azure. These features quantify factors that determine whether a threshold has been crossed. Too much of one color in an image, for example, may indicate a low temperature or malfunctioning equipment. Not enough of a certain color in a location may signify a faulty connection.
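One simple way to quantify "too much" or "not enough" of a color is to measure the fraction of pixels that match it. The sketch below is purely illustrative and uses only the standard library: the image is a nested list of RGB tuples, and the `is_hot` rule is a hypothetical stand-in for whatever palette mapping the actual solution uses.

```python
def color_fraction(pixels, is_match):
    """Return the share of pixels (0.0 to 1.0) for which is_match is True."""
    total = 0
    matched = 0
    for row in pixels:
        for px in row:
            total += 1
            if is_match(px):
                matched += 1
    return matched / total if total else 0.0


def is_hot(px):
    """Hypothetical rule: strong red with little blue counts as 'hot'."""
    r, g, b = px
    return r > 200 and b < 100


# Tiny 2 x 2 example image: one hot pixel out of four.
image = [
    [(255, 40, 10), (20, 20, 200)],
    [(30, 30, 180), (10, 10, 220)],
]
hot_share = color_fraction(image, is_hot)
```

A fraction computed this way could then be compared against a limit to decide whether a threshold has been crossed.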

A logical combination

Ana Auz-Law, a digital transformation analyst and program manager with Chevron, said the company’s effort to pair a thermographic imaging system with a logic model began in 2018.


What type of system to adopt, what equipment to use, and what physical features to use as a gauge in an attempt to receive a useful return on data were not immediately obvious.

“From a digital fluency perspective, and what we were trying to get to, we did look at a couple of other things,” Auz-Law said. “Corrosion is one of the other aspects that is a heavy issue at refineries.” She said training a model on images of corrosion is a time-consuming undertaking.

One of the primary factors in determining trainability — specifically, around what types of image features to train the system on — is making sure the system has enough “bad” data to weigh against the “good.”

The right model

A separate innovation involved developing a logic and vision analytics model.

Chevron turned to a custom analytics approach. Microsoft Azure Cognitive Services and Azure Custom Vision allow end users to optimize computer vision for tailored applications. Chevron and Microsoft teamed up to develop an imaging system that adequately processed the type of infrared images that the FLIR cameras delivered.

The system also needed to move as quickly as possible from image to insight. This could only commence after training the AI model on proper images.

“What we are telling this model, in almost a supervised way, is, ‘Here are some examples of what a hot wire or a faulty connection looks like,’” said Ashish Bhatia, a principal program manager for Azure Global Engineering at Microsoft. Bhatia likened the deep learning training process to a hot bowl of soup, and to assessing how and why not to physically touch it.

“It is almost like how we teach the human brain — when you teach a child that the soup is hot,” he said. “You can see vapors coming out of it, or air coming out of it. That means the soup is hot. Sometimes they burn their fingers. Sometimes they burn their lips. But they figure out how you are going to make out what is a hot soup versus a not-so-hot soup.”

A breakthrough in the solution came when the team shifted from a classic image-classification model to an object-detection model. “Image classification is when the model is trying to determine if the image is ‘a dog’ or ‘a cat.’ An object-detection model determines the ‘presence of a dog’ in an image and draws a bounding box around the object of interest,” Bhatia said.

The difference between classification and detection is not so subtle. From the FLIR cameras, Chevron received images of connectors and panels. The company’s goal was straightforward: to determine the presence of a faulty wire connection. Though a classification system may differentiate between A and B classifications, a more effective model for Chevron would be one trained to assess a range of different wire connections using multiple, unique images.

“We showed examples of exactly hot connectors, so that [the model] is more targeted,” Bhatia said. Chevron had enough examples of what faulty wire connections looked like visually that system developers could place a bounding box around the images. This allowed the model to work from an object-detection point of view. The more data on which a model trains, the more precise it can learn to become.
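The practical difference shows up in the training labels. A classification label is a single tag per image, whereas a detection label pairs a tag with a bounding box. The sketch below converts a pixel-space box into coordinates normalized to the 0-1 range, a common convention for object-detection training data; the `Region` class, the `hot_connector` tag, and the box values are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Region:
    """Hypothetical labeled box, with coordinates normalized to 0-1."""
    tag: str
    left: float
    top: float
    width: float
    height: float


def to_region(tag, x, y, w, h, image_width, image_height):
    """Convert a pixel-space box (x, y, w, h) into a normalized Region."""
    return Region(
        tag=tag,
        left=x / image_width,
        top=y / image_height,
        width=w / image_width,
        height=h / image_height,
    )


# Classification: one label for the whole image.
classification_label = "faulty"

# Detection: a tag plus the location of the object of interest.
# Box values are made up; the image size matches the 1024 x 768 IR resolution.
detection_label = to_region("hot_connector", 256, 192, 512, 384, 1024, 768)
```

Normalizing the coordinates keeps a label valid if the image is later resized, which matters when images from different cameras or export settings are mixed in one training set.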

The fully functional system performs in just minutes, proceeding from image capture to the cloud, to digital analysis, to human analyst, and, if necessary, to a technician. An alternative, outsourcing the manually obtained images for analysis and awaiting a return, is a time-consuming and potentially dangerous practice.

Refining the model

Having enough data helped expedite the model-refining process. Solving the problem technically using AI is only one piece of the puzzle. System deployment is another, Bhatia said.

“Microsoft and Chevron teams collaborated in training the AI models, designing the end-to-end solution and deploying it in production environment,” he said. “The close collaboration helped Chevron deploy the solution in about a month and a half.”


There were speedbumps. The FLIR cameras that Chevron uses take infrared images. The collaborators needed to tweak the custom model to better perceive images in the IR format. This involved grayscaling the output images that Chevron had been processing directly from the FLIR cameras, making them amenable to the AI model.
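Grayscaling a color image amounts to collapsing each pixel's three channels into a single intensity. The article does not say which conversion the team used; the sketch below assumes the widely used ITU-R BT.601 luma weights and represents the image as a nested list of RGB tuples.

```python
# Assumption: ITU-R BT.601 luma weights; the actual conversion is not specified.
R_WEIGHT, G_WEIGHT, B_WEIGHT = 0.299, 0.587, 0.114


def to_gray(px):
    """Collapse one (r, g, b) pixel into a single 0-255 intensity."""
    r, g, b = px
    value = round(R_WEIGHT * r + G_WEIGHT * g + B_WEIGHT * b)
    return max(0, min(255, value))  # clamp to the valid 8-bit range


def grayscale(pixels):
    """Convert a nested list of RGB tuples into a nested list of intensities."""
    return [[to_gray(px) for px in row] for row in pixels]


gray = grayscale([[(0, 0, 0), (255, 255, 255)], [(255, 0, 0), (0, 0, 255)]])
```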

The significance of using an object-detection approach along with OCR is that it allowed the system to make determinations with varying levels of certainty. Rather than having the system indicate a faulty or sound connection, engineers conceived the model to process images with a level of confidence: What it ultimately delivers is a confidence score.
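A confidence score lets downstream logic decide how to act instead of receiving a hard yes or no. The sketch below is one hypothetical way to route a result: the `Detection` tuple, the `hot_connector` tag, and the 0.5 cutoff are all assumptions for illustration, not values from the deployed system.

```python
from typing import List, NamedTuple


class Detection(NamedTuple):
    """Hypothetical model output: a tag and its confidence (0.0 to 1.0)."""
    tag: str
    confidence: float


REVIEW_AT = 0.5  # hypothetical cutoff for notifying the analyst


def top_confidence(detections: List[Detection], tag: str = "hot_connector") -> float:
    """Return the highest confidence for the given tag, or 0.0 if absent."""
    return max((d.confidence for d in detections if d.tag == tag), default=0.0)


def route(detections: List[Detection], review_at: float = REVIEW_AT) -> str:
    """Decide whether an image should be emailed to the analyst."""
    if top_confidence(detections) >= review_at:
        return "email_analyst"
    return "no_action"
```

Because the cutoff is a parameter, it can be tightened or loosened as the team learns how often low-confidence detections turn out to be real faults.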

Industry agnostic

Auz-Law and Bhatia both said the temptation to attempt to design a model capable of addressing multiple problems detracts from the ability to procure the proper data in the necessary amounts for an effective rollout.

“To properly train the model, you have to hone in on that specific problem that you are trying to solve, and solve for that specific problem, and then move on to the next problem. You can’t combine the problems together,” Auz-Law said.

From an image recognition perspective, the Chevron project is one of many potential applications for the AI system.

More challenging problems may involve recognizing whether a person is wearing a hard hat in a hard hat zone or smoking a cigarette near a gas pump.

“Those are harder problems to solve,” Bhatia said. “The way we do that with AI is to take as many of those ‘bad’ examples as we can get, and then also try to do some transformation — flip the images, do certain tricks with the images.”
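Flipping is one of the simplest of these transformations. The sketch below mirrors an image, stored as a nested list of pixel values, left to right. Because object-detection labels carry a location, it also shows how a normalized bounding box would have to be mirrored to stay aligned. Function names are hypothetical.

```python
def flip_horizontal(pixels):
    """Return a left-to-right mirrored copy of a nested-list image."""
    return [list(reversed(row)) for row in pixels]


def flip_box_horizontal(left, width):
    """Return the new left edge of a normalized (0-1) box after mirroring."""
    return 1.0 - left - width


original = [[1, 2, 3], [4, 5, 6]]
mirrored = flip_horizontal(original)

# A box hugging the left edge ends up hugging the right edge.
new_left = flip_box_horizontal(left=0.0, width=0.25)
```

Each flipped copy counts as an additional “bad” example, which helps when real faulty images are scarce.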

Opportunities remain open to Chevron after the success of the first implementation of its system. What the company has, Auz-Law said, is an industry-agnostic model. Though the system is customized for manufacturing and refining, Chevron could employ it in upstream, midstream, and downstream operations. Upstream and downstream differ considerably in both their functions and their equipment. However, when it comes to electricity and panels, she said the two operations are very much alike.