Face Recognition Systems and Consumer Devices

3D depth mapping of the human face is already familiar to users of smartphones, but how widely applicable is it to other smart consumer devices?

GILLES FLOREY, AMS AG

Many forms of biometric authentication are technically feasible, but one in particular has won acceptance in use cases that call for high security and minimal intrusiveness for the user: face recognition.

Today, most people’s interactions with face recognition will occur in one of two ways: They’ll use it either to unlock their smartphones or to support authentication in applications such as mobile payments, or they’ll use it at automated passport control machines when crossing a national border (Figure 1).

Figure 1. The automatic passport gates at Heathrow Airport’s terminal 5 use face recognition technology. Courtesy of Home Office under CC.

In both cases, users have been quick to adopt the technology and to feel confident in its ability to correctly identify a person. This is leading manufacturers to explore the possibilities of face recognition for a much wider range of use cases and products, such as for authentication and personalization in passenger cars, and for personalization and access control in home and building automation systems.

Implementing the technology in a normal consumer environment, however, is difficult, no matter how smooth the end process may appear to the user. The engineering challenge arises largely from the complexity of the system, which requires integration of IR emitters, detectors, optical components, and software in a solution that must work reliably in widely varying environmental conditions. The extensive development resources available to smartphone manufacturers help them to overcome these challenges. Manufacturers of other types of devices should take care not to underestimate the difficulty of implementing the technology, nor the benefits of buying a complete solution for face recognition from a third-party specialist.

The complexity of real life

Many people’s first experiences of face recognition will occur in automated passport control lanes. This experience is unrepresentative of the conditions in which a smartphone typically performs face recognition, however.

In a passport control lane, users are required to:

• Stand still while an image of their face is captured.

• Remove obstructions from their face, such as a hat, long hair, or eyeglasses.

• Look directly at the camera for several seconds.

• Maintain a fixed, unsmiling facial expression.

These directives enable an image to be captured under ideal and consistent lighting conditions and then compared to a reference image that was captured previously under similar conditions.

In this use case, a conventional camera may be used to take a flat, 2D image of the user. Because the conditions under which the two images are taken are so tightly controlled, the images are guaranteed to have enough common features that a true match can almost always be recognized. And since a manual (human) backup is always available — in the form of immigration officials or border police — the software can be configured conservatively to avoid any risk of false acceptances, at the cost of a small number of false rejections.
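The trade-off between false acceptances and false rejections can be illustrated with a short sketch. It assumes that the matching software produces a similarity score for each comparison and accepts the user when the score reaches a threshold. The function name, the scores, and the thresholds are hypothetical values chosen for illustration, not measured data.

```python
def error_rates(genuine_scores, impostor_scores, threshold):
    """Return (false_acceptance_rate, false_rejection_rate) for a threshold.

    A comparison is accepted when its similarity score is >= threshold.
    """
    false_accepts = sum(1 for s in impostor_scores if s >= threshold)
    false_rejects = sum(1 for s in genuine_scores if s < threshold)
    far = false_accepts / len(impostor_scores)
    frr = false_rejects / len(genuine_scores)
    return far, frr


# Hypothetical similarity scores in [0, 1]; illustrative only.
genuine = [0.91, 0.88, 0.95, 0.79, 0.93, 0.86, 0.97, 0.90]
impostor = [0.35, 0.52, 0.61, 0.44, 0.81, 0.29, 0.58, 0.47]

for threshold in (0.60, 0.80, 0.85):
    far, frr = error_rates(genuine, impostor, threshold)
    print(f"threshold={threshold:.2f}  FAR={far:.3f}  FRR={frr:.3f}")
```

With these example scores, raising the threshold to 0.85 removes every false acceptance while one genuine attempt in eight is rejected, which is the conservative configuration that a staffed passport lane can afford.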

In the consumer world, the conditions of use are quite different, and 2D face recognition simply does not work.

In everyday life, the user wants biometric authentication to be quick, convenient, and secure. The face recognition function in a smartphone has to cope with habits of normal use. Users will:

• Glance at the phone rather than look directly at the display.

• Attempt face recognition in poorly lit rooms.

• Attempt face recognition when half their face is lit and half is in shadow.

• Use the face recognition function while walking.

The smartphone’s face recognition technology has to cope with all of these factors that can impair operation. Yet consumers will have low tolerance for repeated false rejection events.

Smartphone owners also expect the phone’s face recognition system to remain secure; if it is broken or works improperly, it leaves the user’s phone and data vulnerable to theft or misuse. This means the false acceptance rate must be extremely low, and that the face recognition system must resist spoofing or attempts to fool the system by showing it a picture or even a mask of an authorized user.

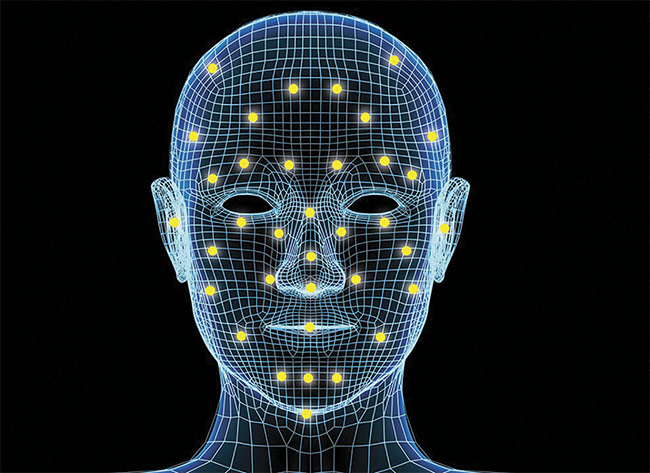

The technological solution to these multiple challenges is 3D face recognition, in which a depth map rather than a photograph of the user’s face is generated. A facial depth map is a set of thousands of coordinates mapped in 3D space showing the surface contours of the user’s head relative to a single point of view in front of the user (Figure 2). This depth map may be compared with a reference depth map of the user’s face to authenticate the user. Several known techniques are available for generating a facial depth map, including:

Figure 2. A depth map has no requirement for visible light phenomena such as color or shade. Courtesy of ams.

• Time-of-flight sensing. This technique uses highly accurate distance measurements across the field of view of the photosensor. It measures distance by timing the flight of IR light from the emitter to the user’s face and back to the photosensor.

• Stereo imaging. Like human vision, this technique uses the differences in perspective between two spaced photosensors to infer depth.

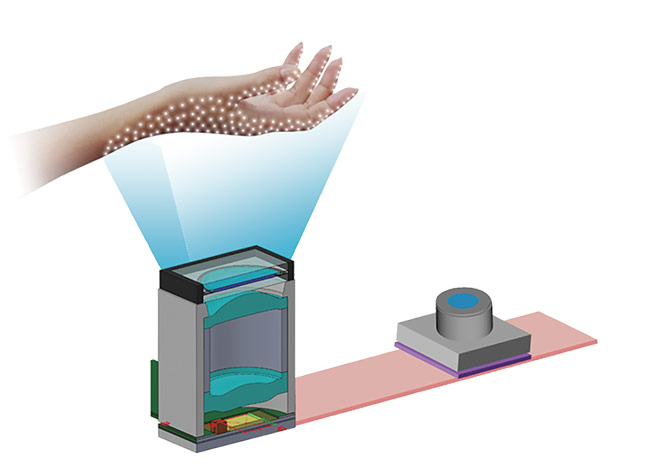

• Structured light. Structured light systems project random patterns of dots — sometimes more than 30,000 — onto the target. The miniature projection systems consist of VCSEL arrays as the light source, along with sophisticated optical and micro-optical elements. A camera optimized for NIR wavelengths captures images of the dot pattern (Figure 3). Algorithms generate depth maps by analyzing the distortions of the pattern caused by the profile of the user’s face.

Figure 3. A structured lighting system analyzes distortions of an emitted pattern of lights. Courtesy of ams.
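The distance relationships behind time-of-flight sensing and stereo imaging can be written down compactly. The sketch below uses the textbook formulas: distance equals the speed of light multiplied by half the round-trip time, and depth equals focal length multiplied by baseline and divided by disparity for an idealized, rectified pair of sensors. The function names and the example values are assumptions for illustration and do not describe any particular product.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def tof_distance_m(round_trip_time_s):
    """Distance to the target from the round-trip time of an IR pulse."""
    # The light travels to the face and back, so halve the path length.
    return SPEED_OF_LIGHT_M_PER_S * round_trip_time_s / 2.0


def stereo_depth_m(focal_length_px, baseline_m, disparity_px):
    """Depth of a point seen by two parallel sensors (idealized pinhole model).

    disparity_px is the horizontal shift of the same point between the
    two images, in pixels.
    """
    if disparity_px <= 0:
        raise ValueError("disparity must be positive")
    return focal_length_px * baseline_m / disparity_px


# Hypothetical values: a face roughly 0.4 m from the device.
print(tof_distance_m(2.668e-9))             # round trip of about 2.67 ns
print(stereo_depth_m(1400.0, 0.025, 87.5))  # 25 mm baseline, 87.5 px disparity
```

A depth difference of 1 mm changes the round-trip time by only about 6.7 ps, which gives a sense of why time-of-flight sensing depends on highly accurate timing.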

These techniques may be known, but this does not mean that they are easy to implement. The hardware — emitters, micro-optics, and photosensors — is available in various modules from suppliers, and may readily be integrated into the design of a smartphone’s assembly.

But the hardware alone is not the solution to face recognition. Software is required to: 1) convert the signals from the photosensor or image sensor into a depth map, and 2) match the depth map to a reference depth map for recognition of the user’s face.
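The second software task, matching a depth map against a reference, can be sketched in a deliberately simplified form. The example below assumes that both maps are already aligned to the same pose and stored as small grids of distances in meters, with None marking pixels that have no valid reading. The root-mean-square comparison, the function names, and the 3-mm threshold are illustrative assumptions; production systems use far more elaborate matching.

```python
import math


def rms_depth_difference(depth_map, reference_map):
    """RMS difference in meters over pixels that are valid in both maps."""
    squared = []
    for row, ref_row in zip(depth_map, reference_map):
        for d, r in zip(row, ref_row):
            if d is not None and r is not None:
                squared.append((d - r) ** 2)
    if not squared:
        raise ValueError("no overlapping valid pixels")
    return math.sqrt(sum(squared) / len(squared))


def matches(depth_map, reference_map, max_rms_m=0.003):
    """Accept when the maps differ by no more than max_rms_m (hypothetical)."""
    return rms_depth_difference(depth_map, reference_map) <= max_rms_m


# Hypothetical 3 x 3 maps: the center pixel (the nose) is closest to the sensor.
reference = [[0.420, 0.410, 0.420],
             [0.410, 0.390, 0.410],
             [0.420, 0.410, 0.420]]
same_user = [[0.421, 0.409, None],
             [0.411, 0.391, 0.410],
             [0.419, 0.410, 0.421]]
flat_photo = [[0.410] * 3 for _ in range(3)]  # a printed picture has no relief

print(matches(same_user, reference))
print(matches(flat_photo, reference))
```

In this toy example the flat photograph fails the comparison because it lacks the surface contours of the enrolled face, which is the property that lets a depth map resist a simple picture-based spoof.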

The software must also compensate for the various use factors that impair the raw image on which the depth map is based, such as a varying angle of incidence of emitted light, or any motion of the user’s head. NIR-based systems also have to cancel out interference from ambient light sources, such as sunlight. In addition, the software may have to compensate for imperfect placement or assembly of the depth-mapping module in the case of a phone.
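One common way to reduce ambient-light interference is to capture one frame with the emitter on and one with it off, then subtract the second from the first so that only the emitter’s contribution remains. The sketch below illustrates that idea on tiny grids of pixel intensities. It is an assumed approach shown for illustration, and the function name and values are hypothetical.

```python
def subtract_ambient(frame_emitter_on, frame_emitter_off):
    """Remove ambient light by differencing two frames of equal size.

    Each frame is a list of rows of pixel intensities. Negative results,
    which can arise from noise, are clamped to zero.
    """
    return [
        [max(on - off, 0) for on, off in zip(row_on, row_off)]
        for row_on, row_off in zip(frame_emitter_on, frame_emitter_off)
    ]


# Hypothetical 2 x 2 frames of 8-bit intensities.
emitter_on = [[120, 200], [90, 255]]
emitter_off = [[100, 100], [95, 60]]

print(subtract_ambient(emitter_on, emitter_off))
```

The approach assumes that the scene and the ambient light change little between the two captures, so it works best when the frames are taken in quick succession.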

The implementation of a face recognition system will differ from one smartphone design to the next. Factors such as the positioning of the illuminator and sensor modules relative to each other, the type and thickness of the cover glass, and the shape and size of the aperture all affect depth-mapping performance. And because hardware components and assemblies are never exactly the same from one production unit to another, the face recognition system requires testing and calibration to ensure that its operation meets the specified performance standard.
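As a simplified illustration of per-unit calibration, the sketch below fits a gain and an offset that map a unit’s measured distances onto known target distances, using an ordinary least-squares line fit. It assumes that the unit’s error is approximately linear. The function name and the measurement values are hypothetical, and a real calibration procedure would cover many more parameters.

```python
def fit_linear_correction(measured_m, true_m):
    """Least-squares fit of true = gain * measured + offset."""
    n = len(measured_m)
    if n < 2 or n != len(true_m):
        raise ValueError("need at least two paired measurements")
    mean_x = sum(measured_m) / n
    mean_y = sum(true_m) / n
    sxx = sum((x - mean_x) ** 2 for x in measured_m)
    if sxx == 0:
        raise ValueError("measured distances must not all be equal")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(measured_m, true_m))
    gain = sxy / sxx
    offset = mean_y - gain * mean_x
    return gain, offset


# Hypothetical test-station data: a flat target placed at known distances,
# and the distances reported by one production unit.
true_distances = [0.30, 0.40, 0.50, 0.60]
measured = [0.311, 0.413, 0.515, 0.617]

gain, offset = fit_linear_correction(measured, true_distances)
corrected = [gain * m + offset for m in measured]
print(gain, offset)
print(corrected)
```

The fitted gain and offset would then be stored for that unit and applied to every subsequent measurement.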

The complexity of the hardware and software design challenge is formidable, as is that of the calibration process, and the cost of implementing the technology is correspondingly high. This has been no bar to the progress of its adoption in the smartphone, however, where booming consumer demand has driven manufacturers to introduce face recognition as an important feature in high-end phones since 2018, and no doubt they will implement it in mid-range and budget phones at a later date.

Most phone manufacturers will choose to source their solution for face recognition from a single integrated supplier. Such suppliers provide both the hardware and the software for depth mapping and face matching. This combination can optimize the system’s operation in any phone assembly and spares the OEM the task of integrating discrete hardware and software components, which shortens time to market and reduces design risk.

The cost and complexity of 3D face recognition systems, however, also constrain their application beyond the smartphone. Carmakers and manufacturers of smart door locks do not want to spend the development time required to integrate face recognition capabilities into their products and thereby inflate their own bill-of-materials expenses.

In consumer applications, product manufacturers have an alternative option: to piggyback on an implementation of face recognition in a smartphone. The smartphone is already the hub of almost every consumer’s digital life. Both the Android and iOS platforms provide familiar developer interfaces for integrating third-party applications. In the automotive world, systems such as Ford’s SYNC technology already provide a high level of integration between the smartphone and the car’s infotainment system.

This means that the fastest and least risky route to the adoption of face recognition across a huge range of consumer-oriented applications is likely to be reliance on a master face recognition system hosted on the user’s smartphone, whose form factor and use model are best suited to operation of the face recognition function.

Face recognition is the most natural way to recognize people; it is fast and accurate and does not require a person to learn or do anything differently. User identification should be hands-free, intuitive, and natural. Friends and family easily recognize people visually. Technology should work the same way.

Meet the author

Gilles Florey is director of 3D software partnership management and marketing at ams AG.


Published: September 2019

Glossary

- structured light

- The projection of a plane or grid pattern of light onto an object. It can be used for the determination of three-dimensional characteristics of the object from the observed deflections that result.

- micro-optics

- Micro-optics refers to the design, fabrication, and application of optical components and systems at a microscale level. These components are miniaturized optical elements that manipulate light at a microscopic level, providing functionalities such as focusing, collimating, splitting, and shaping light beams. Micro-optics play a crucial role in various fields, including telecommunications, imaging systems, medical devices, sensors, and consumer electronics.

