Hyperspectral Machine Vision Enables Smart Automation

ADAM STERN, RESONON INC.

Conventional vision systems often fail to sort items that have similar colors or appearances. In these cases, hyperspectral data combined with spatial pattern recognition algorithms can detect a wide range of materials, patterns, coatings, defects and contaminants. Hyperspectral vision systems generate data for quality control and also transmit information to robotic actuators, enabling automated picking and sorting of commodities.

Hyperspectral imaging involves measuring high-resolution spectral data at every pixel in a 2D image. At each pixel in a hyperspectral image, the material’s reflectance spectrum is a continuous curve with hundreds of spectral data points. In contrast, standard cameras provide three spectral data points at each pixel: red, green and blue (RGB). The viewer’s brain combines these three values and interprets the mixture as a single color.

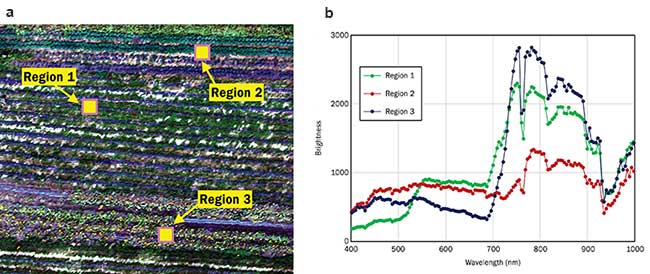

An RGB image rendered from aerial hyperspectral data of a farm, accompanied by reflectance spectra from the scene, gives an easy-to-understand illustration of automated plant detection (Figure 1). The spectra vary across the scene because each plant species has a different reflectance spectrum.

Figure 1. True-color image from aerial hyperspectral data of a farm (a), and reflectance spectra from the specified regions (b). Courtesy of Resonon Inc.

Hyperspectral imaging is a combination of both spectroscopy and imaging. A standard spectrometer provides only one pixel per measurement — there is no imaging. A standard color camera provides only three broad spectral data points — there is no spectroscopy. Hyperspectral imaging provides both.

Focusing an image in both spatial and spectral dimensions is a difficult optical problem, and the manufacturing tolerances required to obtain high-precision data can be quite challenging. Furthermore, hyperspectral cameras often require specialized hardware and software, and complete system integration is both a significant business obstacle and a prerequisite for this technology to become widely accepted.

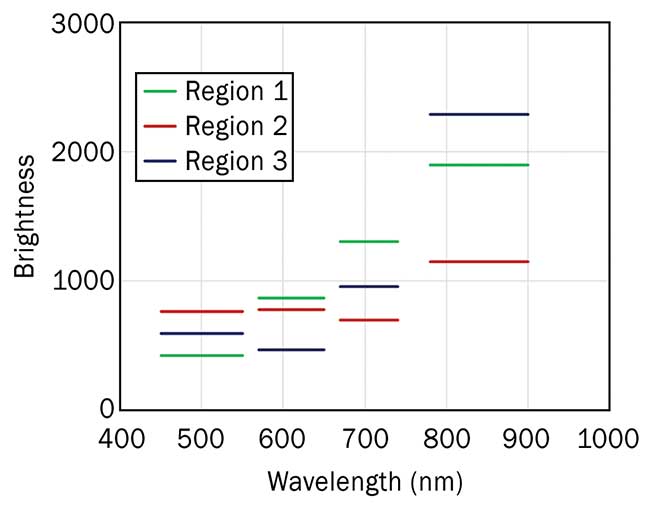

A less expensive alternative technology is multispectral imaging, which typically delivers between four and 12 spectral data points. Hyperspectral data contains much more information than multispectral data, enabling more detailed analyses and robust classifications (Figure 2).

Figure 2. Multispectral data of the same regions from the image in Figure 1. Spectral bands correspond to those used in Landsat satellites. Courtesy of Resonon Inc.
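The relationship between the two data types can be sketched in a few lines of Python. This is a minimal illustration, not the processing used for Figure 2: it assumes a simple unweighted average inside each band (a real sensor has a non-rectangular spectral response), the band edges are approximate values loosely modeled on Landsat 8, and the spectrum is synthetic.

```python
# Approximate band edges in nanometers, loosely modeled on Landsat 8 OLI.
# These are assumed values for illustration; consult the sensor specification.
MULTISPECTRAL_BANDS = {
    "blue": (450, 510),
    "green": (530, 590),
    "red": (640, 670),
    "nir": (850, 880),
}


def resample_to_bands(wavelengths, reflectance, bands=MULTISPECTRAL_BANDS):
    """Average a finely sampled spectrum inside each broad band."""
    result = {}
    for name, (low, high) in bands.items():
        inside = [r for w, r in zip(wavelengths, reflectance) if low <= w <= high]
        if not inside:
            raise ValueError(f"no samples fall inside band {name!r}")
        result[name] = sum(inside) / len(inside)
    return result


# Synthetic vegetation-like spectrum sampled every 5 nm from 400 to 1000 nm:
# low reflectance in the visible and high reflectance above 700 nm.
wavelengths = list(range(400, 1001, 5))
reflectance = [0.05 if w < 700 else 0.50 for w in wavelengths]

multispectral = resample_to_bands(wavelengths, reflectance)
print(len(wavelengths), "hyperspectral samples ->", len(multispectral), "band values")
```

Any spectral feature narrower than a band, or lying between bands, is averaged away or missed entirely in the multispectral result, which is why the hyperspectral curve supports more detailed analysis.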

The potential value of hyperspectral imaging in automated sorting is suggested by the results of recent

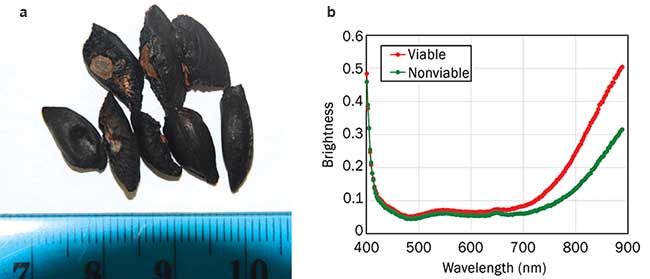

research at the University of California, Davis1. In this study, hyperspectral data of corymbia tree seeds (similar to eucalyptus) was obtained, and the seeds were planted. The seeds’ viability was correlated with different reflectance spectra (Figure 3). The red curve represents seeds that are alive, and the green curve represents those that are dead. The spectra are very similar in the visible spectral region (roughly 400 to 700 nm), but spectral differences are only evident in the NIR (wavelengths >700 nm), which is invisible to human eyes.

Figure 3. RGB image (a) and hyperspectral data (b) of corymbia seeds, showing a difference in reflectance correlated with seed viability. Courtesy of C. Nansen et al. and Resonon Inc.

Two categories of objects that are troublesome for conventional vision systems are materials with similar colors, such as seeds and husks, and materials that require infrared spectroscopy to differentiate, such as plastics. When standard vision systems fail, these sorting tasks fall to humans. This process is costly, slow and prone to mistakes.

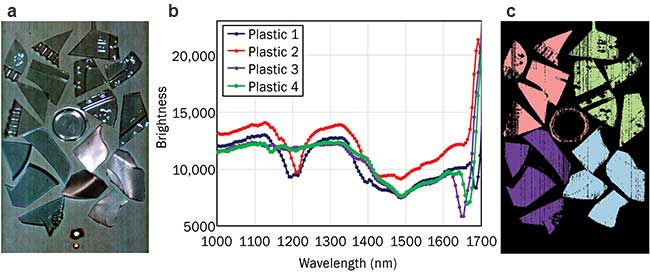

Hyperspectral data provides faster and more accurate results than human sorting and more reliable classifications than conventional vision systems. It can distinguish similarly colored materials and can access information outside the visible range, in both the infrared and ultraviolet. Plastics come in different colors and compositions, and a standard color camera cannot tell the underlying materials apart. In the IR, however, each type of material has a unique reflectance spectrum, regardless of the color perceived by human eyes. From these spectra, automated classification algorithms can generate false-color images (Figure 4).

Figure 4. False-color RGB image of plastics, generated from infrared hyperspectral data (a). Reflectance spectra of different plastics in the image (b). False-color image showing automated classification (c). Courtesy of Resonon Inc.

There are a few limitations for hyperspectral machine vision applications. A critical factor is speed, which is limited by the large data volumes inherent in hyperspectral data, numerical computation, available sensors and illumination. The analyses and communications must be optimized for speed and efficiency. To accomplish this, it is important to choose a sensor that is reliable, supports adequate frame rates and has good quantum efficiency across the desired spectral range.
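A rough sense of the data volumes involved comes from simple arithmetic. The sketch below assumes a line-scan camera that records one spatial line across all spectral bands in each frame; the pixel counts, bit depths and frame rate are hypothetical and do not describe any particular product.

```python
def raw_data_rate_mb_per_s(spatial_pixels, spectral_bands, frames_per_s, bits_per_sample):
    """Uncompressed data rate in megabytes per second (1 MB = 1e6 bytes)."""
    bytes_per_frame = spatial_pixels * spectral_bands * bits_per_sample / 8
    return bytes_per_frame * frames_per_s / 1e6


# Hypothetical hyperspectral camera: 640 spatial pixels x 240 bands,
# samples stored in 16-bit words, 200 frames (scan lines) per second.
hyperspectral_rate = raw_data_rate_mb_per_s(640, 240, 200, 16)

# Hypothetical RGB line-scan camera of the same width, 8 bits per channel.
rgb_rate = raw_data_rate_mb_per_s(640, 3, 200, 8)

print(f"hyperspectral: {hyperspectral_rate:.1f} MB/s")
print(f"RGB:           {rgb_rate:.2f} MB/s")
print(f"ratio:         {hyperspectral_rate / rgb_rate:.0f}x")
```

Under these assumptions the hyperspectral stream is more than two orders of magnitude larger than the color stream at the same line rate, and every byte must be classified before the product reaches the actuator.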

Ensuring that enough light is available also can be an issue. Hyperspectral cameras separate a signal into its spectral components and project each component to a single pixel, so the total amount of energy that falls on a single pixel can be quite low. Furthermore, faster frame rates mean each frame has less time to collect light, so extra illumination is required to maintain an adequate signal. The maximum speed is thus a compromise between cost (through sensor and lighting choices), computational performance and product throughput.
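The trade-off between frame rate and lighting can be estimated with a first-order model. The sketch assumes that the collected signal is proportional to the light reaching the sensor multiplied by the exposure time, and that the camera exposes for the full frame period; readout time, sensor noise and saturation are ignored, and the frame rates are hypothetical.

```python
def max_exposure_s(frames_per_s):
    """Upper bound on exposure time: a frame cannot integrate longer than its period."""
    return 1.0 / frames_per_s


def illumination_scale(old_fps, new_fps):
    """Factor by which light on the sensor must grow to keep the signal constant.

    Assumes signal is proportional to irradiance x exposure time and that
    the exposure always fills the whole frame period.
    """
    return max_exposure_s(old_fps) / max_exposure_s(new_fps)


# Hypothetical upgrade from 100 to 400 frames per second.
scale = illumination_scale(100, 400)
print(f"light required: {scale:.1f}x the original level")
```

In this simplified model the required light grows in direct proportion to the frame rate, which is why lighting cost appears alongside sensor cost in the speed compromise.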

New sensor materials are becoming commercially available, opening new spectral ranges for investigation, especially in the IR. Traditional IR sensors are made of InGaAs (indium gallium arsenide), cover a spectral range from 930 to 1700 nm, and are readily available. Recently, the development of sensors made from HgCdTe (mercury cadmium telluride), InSb (indium antimonide), and type-II superlattices has made it possible to measure IR signals up to 2500 nm. There are many interesting spectral features between 1700 and 2500 nm, for both organic and inorganic materials. This is an exciting development for many industrial applications.

Traditional methods of analyzing spectral imaging data use the concept of hyperspectral indices [2], more commonly called band math. Although indices are useful tools, they incorporate only a very small portion of the information available in broad continuous spectra. Hyperspectral data carries much more information than a few selected spectral bands and can yield any index upon request. Employing the entire spectrum along with statistical methods yields more reliable classifications and can detect small variations, providing much more information than a simple pass-fail criterion.
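As a concrete instance of band math, the widely used normalized difference vegetation index (NDVI) is computed as (NIR - red) / (NIR + red). The sketch below derives it from a full spectrum; the band limits and the two spectra are assumed values chosen for illustration, not measured data.

```python
def band_mean(wavelengths, reflectance, low_nm, high_nm):
    """Mean reflectance of the samples whose wavelength lies in [low_nm, high_nm]."""
    values = [r for w, r in zip(wavelengths, reflectance) if low_nm <= w <= high_nm]
    if not values:
        raise ValueError("no samples inside the requested band")
    return sum(values) / len(values)


def ndvi(wavelengths, reflectance, red=(640, 670), nir=(850, 880)):
    """Normalized difference vegetation index: (NIR - red) / (NIR + red)."""
    red_value = band_mean(wavelengths, reflectance, *red)
    nir_value = band_mean(wavelengths, reflectance, *nir)
    return (nir_value - red_value) / (nir_value + red_value)


# Synthetic spectra sampled every 5 nm from 400 to 1000 nm.
wavelengths = list(range(400, 1001, 5))
vegetation = [0.05 if w < 700 else 0.50 for w in wavelengths]  # strong NIR rise
bare_soil = [0.25 for _ in wavelengths]                        # spectrally flat

print(f"vegetation NDVI: {ndvi(wavelengths, vegetation):.2f}")
print(f"bare soil NDVI:  {ndvi(wavelengths, bare_soil):.2f}")
```

The index reduces more than a hundred samples to one number built from two narrow regions. Every sample outside those regions is ignored, which is the limitation described above.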

Machine learning algorithms, which use rigorous statistics to analyze entire spectra, can more accurately classify plants and other materials, such as plastics, sewage and algae, than mere indices. Techniques such as support vector machines and convolutional neural networks have proven successful in hyperspectral sorting applications when spatial-pattern recognition is required in addition to spectral classification, such as grading food quality and identifying surface coatings. When combined with spatial recognition algorithms, hyperspectral machine vision can make very smart computers with very sensitive eyes.
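Classification on entire spectra can be illustrated with a much simpler technique than the support vector machines and neural networks mentioned above. The sketch below uses a spectral angle comparison, which assigns each pixel to the reference spectrum whose shape it most closely matches. It is a stand-in chosen for brevity, and the five-band reference spectra and class names are made up for illustration.

```python
import math


def spectral_angle(a, b):
    """Angle in radians between two spectra treated as vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    # Clamp to guard against rounding slightly outside the valid acos domain.
    cosine = max(-1.0, min(1.0, dot / (norm_a * norm_b)))
    return math.acos(cosine)


def classify(spectrum, references):
    """Return the label of the reference spectrum with the smallest angle."""
    return min(references, key=lambda label: spectral_angle(spectrum, references[label]))


# Hypothetical reference spectra (not measured data).
references = {
    "plastic_a": [0.60, 0.55, 0.30, 0.50, 0.58],
    "plastic_b": [0.60, 0.35, 0.55, 0.30, 0.52],
}

# A dimly lit pixel with the shape of plastic_a at about half the brightness.
pixel = [0.31, 0.27, 0.15, 0.26, 0.29]
print(classify(pixel, references))
```

Because the angle depends on the shape of the whole curve rather than its overall brightness, the dim pixel is still matched to the correct reference. Trained classifiers follow the same principle of using every band, with decision boundaries learned from labeled examples.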

Despite the clear advantage of providing more detailed data than conventional imaging systems, hyperspectral imaging is still in its infancy. Speed limitations have only recently been overcome for real-world applications, and further research and development are required before this technology is widely installed. Nonetheless, the future of hyperspectral imaging appears bright.

Meet the author

Adam Stern is senior scientist at Resonon Inc., a designer and manufacturer of hyperspectral imaging cameras located in Bozeman, Mont.

References

1. C. Nansen et al. (2015). Using hyperspectral imaging to determine germination of native Australian plant seeds. J Photochem and Photobio B, Vol. 145, pp. 19-24. This dataset, along with hyperspectral analysis software, can be downloaded for free from https://downloads.resonon.com.

2. For a good discussion of hyperspectral indices applied to agriculture, see P.S. Thenkabail et al. (Eds.) (2012). Hyperspectral Remote Sensing of Vegetation. New York: CRC Press.
