Advanced analytics, along with improvements in lighting and sensor technology, is making inspections faster and less costly, yet more powerful.

HANK HOGAN, CONTRIBUTING EDITOR

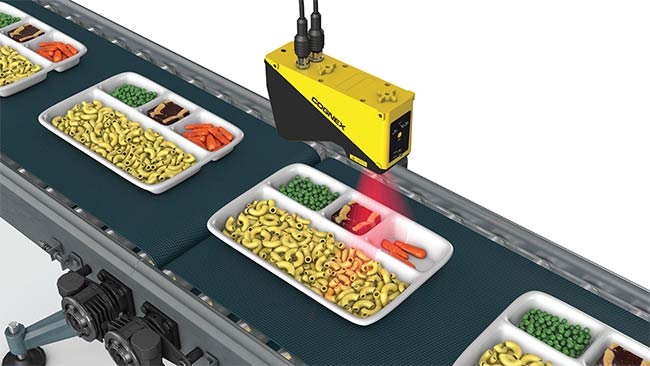

Machine vision has long been employed effectively in packaging inspection, where barcode readers and cameras verify that boxes are properly labeled. Increasingly, vision is playing a critical role in food processing.

Automated inspection systems can examine an apple, for instance, to reliably and repeatedly distinguish between a disqualifying bruise and a more benign spot. People routinely perform this role, but it’s difficult to codify the rules they follow.

“How do they describe a bruise? They say ‘Well, I know it when I see it,’” said Robb Robles, senior manager of product marketing at vision solutions supplier Cognex Corp. of Natick, Mass.

Such machine vision inspections and their associated quality judgments are now possible because of advanced analytics. Innovations in lighting and sensors have expanded the spectrum that can be imaged, and added depth information. Inspections that were once impractical are now feasible. Further improvements promise to make inspections even faster and less costly, yet more powerful. Doing so within constraints, however, will continue to be a challenge.

Neural nets

Today, analytics and image processing are increasingly performed by neural nets. These mimic the function of neurons, allowing a machine to learn to recognize acceptable or unacceptable quality from images of each in a training set.

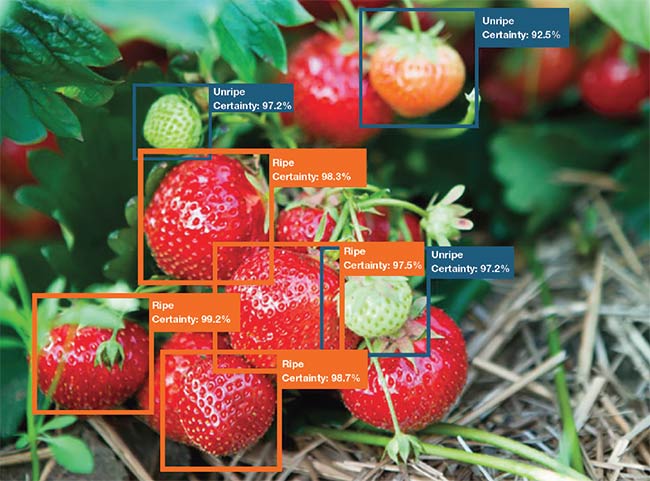

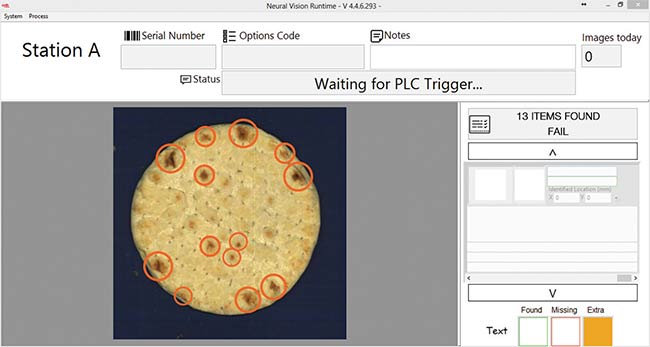

This simulation of a biological system is known as deep learning and adds human-like capabilities to a vision inspection system. “In many ways, deep learning technology really emulates the decision-making humans implicitly do when they make a decision about quality,” Robles said.

At present, deep learning requires considerable computing horsepower and so is a challenge for simple vision sensors. If it follows the trajectory that technologies often take, deep learning should eventually work its way into inexpensive vision solutions.

Food and beverage inspection analysis is already benefiting from the work of companies in other industries, said Andy Long, CEO of San Diego-based technology integrator Cyth Systems Inc. Google and Facebook, for instance, have developed tools that make the application of deep learning and artificial intelligence easier.

Neural net technology makes vision a more powerful tool, enabling inspection equipment to deal with the variations in size and color that are common among food and beverage products. It can also be used to improve the price a product will fetch.

“For every food you can imagine, there is a perfect specimen of what the supermarkets want. And they know which ones will sell at a higher price,” Long said.

The training set of images used for deep learning is important and must be carefully selected, he added. It may be that produce of one color, say green, is all marked good while another color, for example yellow, is marked bad. The system may erroneously then decide color is the key differentiator.
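One way to catch this kind of bias before training is to check whether a single attribute predicts the label on its own. The sketch below is a minimal illustration, assuming each training image has already been tagged with a dominant color. The sample data and the `is_confounded` helper are hypothetical and are not drawn from Cyth's tooling.

```python
from collections import defaultdict


def labels_by_value(samples, attribute):
    """Map each value of `attribute` to the set of labels seen with it."""
    seen = defaultdict(set)
    for sample in samples:
        seen[sample[attribute]].add(sample["label"])
    return dict(seen)


def is_confounded(samples, attribute):
    """Return True if every attribute value carries exactly one label
    while the set as a whole contains more than one label."""
    by_value = labels_by_value(samples, attribute)
    all_labels = set().union(*by_value.values()) if by_value else set()
    return len(all_labels) > 1 and all(len(v) == 1 for v in by_value.values())


# Hypothetical training sets: every green item good, every yellow item bad.
biased_set = [
    {"color": "green", "label": "good"},
    {"color": "green", "label": "good"},
    {"color": "yellow", "label": "bad"},
]
# Adding counterexamples breaks the link between color and label.
balanced_set = biased_set + [
    {"color": "green", "label": "bad"},
    {"color": "yellow", "label": "good"},
]

print(is_confounded(biased_set, "color"))    # True
print(is_confounded(balanced_set, "color"))  # False
```

A check like this only flags the simplest case, where one attribute separates the labels perfectly. It does not replace a careful review of the training images.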

According to Long, four out of five vision systems that Cyth currently offers involve artificial intelligence and deep learning. Many are proof-of-concept solutions, indicating the technology has considerable momentum behind it and strong growth ahead.

However, Long noted that the fundamentals still apply. For vision inspection of food and beverage, lighting and sensors play a critical role. According to Xing-Fei He, senior product manager at Teledyne DALSA of Waterloo, Canada, the vision solution cannot have a high cost, even though volumes may be high.

A compact, image-based code reader allows food processors to track products for improved inventory and risk mitigation in the event of allergens, recalls, and health warnings. Courtesy of Cognex Corp.

An example can be seen in rice sorting, He said. A commodity, rice is graded by its appearance as kernels drop from a chute. A sensor images the rice, providing information that helps a system decide whether the object passing by meets proper specifications. If it fails, or if the system detects an unwanted contaminant, then a puff of air blows the grain or object into a downgrade or reject bin.
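The decision step in such a sorter can be sketched in a few lines. This is an illustration only: the `Kernel` fields, the threshold values, and the rule that contaminants go to the reject bin while off-spec grains are downgraded are all assumptions, not details supplied by Teledyne DALSA.

```python
from dataclasses import dataclass


@dataclass
class Kernel:
    """Measurements for one imaged object (hypothetical fields)."""
    length_mm: float
    whiteness: float          # 0.0 (dark) to 1.0 (white)
    is_foreign: bool = False  # True if classified as a contaminant


# Hypothetical specification limits, chosen only for illustration.
MIN_LENGTH_MM = 5.0
MIN_WHITENESS = 0.8


def sort_action(kernel):
    """Return 'pass', 'downgrade', or 'reject' for one object."""
    if kernel.is_foreign:
        return "reject"
    if kernel.length_mm < MIN_LENGTH_MM or kernel.whiteness < MIN_WHITENESS:
        return "downgrade"
    return "pass"


def fire_air_jet(action):
    """The air jet fires for anything that does not pass."""
    return action != "pass"


print(sort_action(Kernel(6.2, 0.9)))                   # pass
print(sort_action(Kernel(4.1, 0.9)))                   # downgrade
print(sort_action(Kernel(6.0, 0.9, is_foreign=True)))  # reject
```

In a real machine, this decision has to finish in the time it takes the kernel to fall from the sensor to the air nozzle.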

This sorting happens at high speed, and the processing must add little to the cost of the rice, He said. A single sorting machine may have as many as 20 sensors, often specifically designed for this type of application.

Expanding to NIR

He pointed to the growing trend of widening the spectrum captured by sensors. Food and beverage applications have long used RGB color sensors. Now there is a push to include information from 700 to 1100 nm, the near-infrared (NIR) band.

“The near-IR channel provides useful information on food,” He said.

The NIR also happens to be a spectral region where silicon sensors can still work, which is important because such technology is less expensive than imagers based on other materials. Working in the NIR does require the use of specialized filters, because filtering is the only way to keep the NIR signal from being overwhelmed. NIR lighting may also be required. The addition of this new band can be thought of as adding a new color, thereby increasing the amount of data generated and giving the analytics more information to work with.
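The "new color" idea can be made concrete with a small sketch. Each pixel's (R, G, B) triple gains a fourth value, and the data per pixel grows by a third. The pixel values below are invented for illustration, and `add_nir_band` is a hypothetical helper rather than part of any camera SDK.

```python
def add_nir_band(rgb_pixels, nir_pixels):
    """Append the NIR reading to each (R, G, B) pixel, giving (R, G, B, NIR)."""
    if len(rgb_pixels) != len(nir_pixels):
        raise ValueError("RGB and NIR images must have the same pixel count")
    return [(*rgb, nir) for rgb, nir in zip(rgb_pixels, nir_pixels)]


def data_growth(bands_before, bands_after):
    """Fractional increase in data when the band count changes."""
    return bands_after / bands_before - 1.0


# Two invented pixels that look almost identical in RGB but differ in the NIR.
rgb = [(120, 200, 80), (118, 198, 79)]
nir = [210, 95]

four_band = add_nir_band(rgb, nir)
print(four_band)                    # [(120, 200, 80, 210), (118, 198, 79, 95)]
print(f"{data_growth(3, 4):.0%}")   # 33%
```

The extra value gives the analytics a way to separate pixels that the three visible channels alone cannot tell apart, at the cost of more data to move and process.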

The next step may be hyperspectral imaging. Indium gallium arsenide (InGaAs) sensors respond up to about 2 µm (2000 nm) wavelength, and other materials can work even further in the IR. On the other end of the spectrum are ultraviolet and even x-ray solutions. However, these sensors and systems tend to be more expensive.

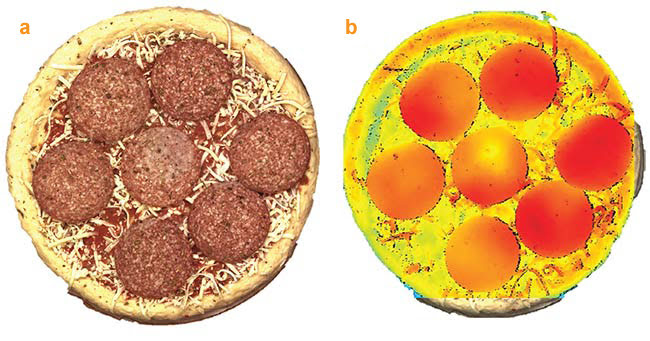

Another dimension exists, literally, for the future of food and beverage inspection. Chromasens GmbH of Konstanz, Germany, supplies products that can simultaneously provide color and 3D information, said Klaus Riemer, product manager for Chromasens. The company makes two-color line-scan cameras that generate a stereo image. When used with optimized illumination, this setup can detect small structures on shiny surfaces.

The 3D approach provides data that can be used to determine food quality. For instance, it can tell the location and orientation of a piece of cut meat, as well as provide information about shape and fat percentage. Another application measures cookie or pizza size and shape, or other parameters of quality. Such inspection requires high resolution in X, Y, and Z over a wide field of view, as well as fast acquisition and analysis of images.

A neural net vision system inspects the ripeness of strawberries. Courtesy of Cyth Systems Inc.

Chromasens takes care to ensure that its camera technology can handle these requirements. “The 3D calculation, the height image and point cloud, is calculated on a GPU (graphics processing unit) to get the 3D image and data very fast,” Riemer said.

Integration of 3D

As technology continues to improve, Riemer added, operations that are performed by a computer today will in the future run on an embedded system. Integration of 3D technology will then be simpler and solutions involving it will be easier to use.

According to Donal Waide, director of sales at BitFlow Inc., a Woburn, Mass.-based manufacturer of industrial-grade frame grabbers and software, the increase in data due to the implementation of 3D, or the addition of spectral bands, may mandate the use of a frame grabber.

When data rates top 400 MB/s and decisions must be made in real time, a frame grabber can handle the data transfer from a camera to the rest of the system, Waide said. Such high data rates can arise even when only a small amount of image information is collected per object, if the number of objects is large.

A neural net vision system looks for burn marks on a piece of pita bread and grades it for acceptability. Courtesy of Cyth Systems Inc.

One example involves sorting frozen peas that are moving by an inspection point at a rate of 100,000 per minute. Several images of each pea may be needed to determine whether the quality of a pea is good or bad, as well as to weed out foreign objects and contaminants.
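A back-of-the-envelope calculation shows how quickly small images add up. The pea rate and the 400 MB/s figure come from the article. The number of images per pea and the image size are assumptions chosen only to illustrate the arithmetic.

```python
FRAME_GRABBER_THRESHOLD_MB_S = 400  # data rate cited in the article


def data_rate_mb_per_s(objects_per_minute, images_per_object, bytes_per_image):
    """Sustained data rate in MB/s (1 MB = 1,000,000 bytes)."""
    objects_per_second = objects_per_minute / 60
    return objects_per_second * images_per_object * bytes_per_image / 1_000_000


PEAS_PER_MINUTE = 100_000        # from the article
IMAGES_PER_PEA = 4               # assumption: "several" images per pea
BYTES_PER_IMAGE = 128 * 128 * 4  # assumption: 128 x 128 pixels, 4 bands, 1 byte each

rate = data_rate_mb_per_s(PEAS_PER_MINUTE, IMAGES_PER_PEA, BYTES_PER_IMAGE)
print(f"{rate:.1f} MB/s")                   # 436.9 MB/s
print(rate > FRAME_GRABBER_THRESHOLD_MB_S)  # True
```

Under these assumptions, each image is only 64 KB, yet the stream exceeds the 400 MB/s mark because of the sheer number of peas.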

If a system takes too long to spot a problem in such a situation of high data rates, the delay can lead to a large amount of ruined product. Waide said a frame grabber could cut the detection time from 10 s to 1 s, saving product and thereby making the frame grabber a cost-effective solution.
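The effect of detection time on product at risk can be estimated with simple arithmetic. The sketch below combines the 10 s and 1 s detection times with the pea line's rate of 100,000 per minute. Pairing those figures, and treating everything that passes during the delay as affected, are assumptions made for illustration.

```python
def objects_passed_during(detection_time_s, objects_per_minute):
    """Number of objects that pass the inspection point before a problem is flagged."""
    return round(objects_per_minute / 60 * detection_time_s)


PEAS_PER_MINUTE = 100_000  # rate from the frozen pea example

slow = objects_passed_during(10, PEAS_PER_MINUTE)
fast = objects_passed_during(1, PEAS_PER_MINUTE)

print(slow)         # 16667
print(fast)         # 1667
print(slow - fast)  # 15000
```

On these assumptions, cutting the detection time from 10 s to 1 s keeps roughly 15,000 peas per incident out of the affected batch.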

Pizza imaged by a stereo 3D color camera for quality and adherence to specifications (a, b). A false color image generated to highlight potential problems (b). Courtesy of Chromasens GmbH.

High-speed connectivity, such as that offered by CoaXPress, he added, means processing can take place at a distance from the sensor. This saves space in a possibly crowded food processing unit and means the analysis system can be contained in an enclosure to protect it from dust, water, and other environmental challenges.

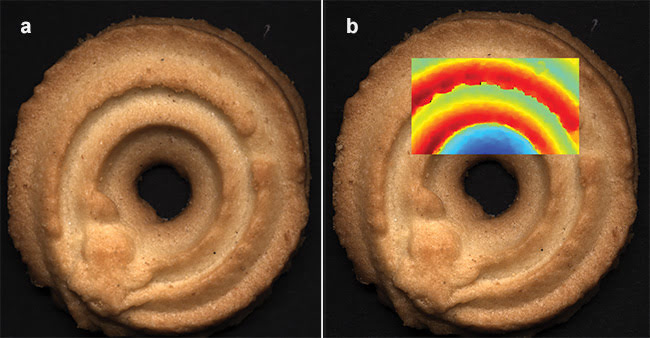

Cookies captured by a stereo 3D color camera and examined for quality and adherence to specifications (a, b). A false color image generated to highlight potential problems (b). Courtesy of Chromasens GmbH.

Waide pointed to hyperspectral imaging as an emerging trend. “If you take a McIntosh apple, which is red and green, you have to be able to figure out which is the green spot versus which is the bruise. You’re using a hyperspectral camera to actually see the tissue that’s underneath the skin and what condition it’s in,” he said.

Raghava Kashyapa, managing director of Bangalore, India-based machine vision solution supplier Qualitas Technologies, predicted a bright future for vision in food-and-beverage applications. Qualitas specializes in deep learning technology, but Kashyapa noted that it always helps to add information from more spectral bands to an image, or to add height information.

“I see machine vision powered by deep learning to be the main driving force in the next two to five years, followed by hyperspectral technologies,” Kashyapa said. “The two are going to be a very potent combination.”