Machine vision is finding applications in food processing, from inspecting processed chicken to helping give mass-produced cookies the slightly imperfect look of the ones Grandma used to make.

Hank Hogan, Contributing Editor

John Stewart would like to make your next meal of chicken strips or nuggets cheaper and healthier. Stewart, a research engineer at Georgia Tech Research Institute in Atlanta, is working on a machine vision system that he hopes will be used to spot foreign objects in finished poultry and other food products on the processing line.

In the manufacture of processed chicken, the meat is diced into the appropriate size and moved along a conveyor belt to the tune of 6000 pounds an hour. Foreign objects, which can include plastic from the processing machinery, usually are caught during traditional, human-based inspection. Stewart noted that there isn’t much chance of these objects ending up in a consumer’s mouth, but that doesn’t mean the contamination doesn’t cause pain. The manufacturer must warehouse the suspect food and decide whether it should be reprocessed or scrapped.

Researchers developing a machine vision system to detect foreign objects in processed poultry are focusing on finding blue and green objects. Blue has become the standard color for plastic in food processing. Courtesy of Georgia Tech Research Institute. Photo by Gary W. Meek.

“More than anything else, it costs a lot of money to a producer when that happens,” Stewart said.

Food producers would like to keep people away during the final processing stages to reduce the chance of bacterial contamination. Additionally, people aren’t good at scanning a moving conveyor belt for hours on end. Thus, manufacturers would like to find a way to minimize the presence of humans and rely on other inspection methods.

Production lines may include metal detectors to monitor the food stream for foreign objects, but this approach won’t work with stray pieces of plastic from the machinery. Food processing equipment typically is a bright blue or another easy-to-spot color so that any wayward piece will stand out against the meat.

It’s that color difference, Stewart noted, that makes it possible to tackle the plastics problem with machine vision. The solution developed at the institute involves an inexpensive vision system with low-wattage lighting designed to sit between the metal detectors that already may be present.

The poultry inspection system sits above the production line, adjacent to metal detectors. Courtesy of Georgia Tech Research Institute. Photo by Gary W. Meek.

The vision system has to be low-cost but rugged because of its location. “It’s a wet environment, with a lot of sanitizers and stuff that’s worst-case for an imaging system,” he said.

In their prototype, the researchers used a Sony CCD color camera with 640 × 480-pixel resolution that can operate at 30 frames a second. That frame rate allows the system to scan the belt twice, thereby facilitating the detection of foreign objects. A computer algorithm processes the images, classifying each pixel as containing chicken, the conveyor belt or a foreign object.
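The article does not describe the classification algorithm itself, but the idea of labeling each pixel by color can be sketched roughly as follows. This is a minimal illustration, not the institute's method: the thresholds, the assumption of a pale, nearly colorless belt, and the function names `classify_pixel` and `classify_image` are all hypothetical.

```python
import colorsys

# Hypothetical thresholds for illustration only; a real system would be
# calibrated against images from the actual production line.
BELT_MAX_SATURATION = 0.15         # assumes a pale, nearly colorless belt
FOREIGN_HUE_RANGE = (0.25, 0.75)   # green through blue on the 0..1 hue wheel
FOREIGN_MIN_SATURATION = 0.40      # plastic is assumed to be strongly colored


def classify_pixel(r, g, b):
    """Label one 8-bit RGB pixel as 'belt', 'foreign' or 'chicken'."""
    h, s, _v = colorsys.rgb_to_hsv(r / 255.0, g / 255.0, b / 255.0)
    in_foreign_hue = FOREIGN_HUE_RANGE[0] <= h <= FOREIGN_HUE_RANGE[1]
    if in_foreign_hue and s >= FOREIGN_MIN_SATURATION:
        return "foreign"
    if s <= BELT_MAX_SATURATION:
        return "belt"
    # Everything else is treated as meat in this simplified sketch.
    return "chicken"


def classify_image(pixels):
    """Classify every pixel of an image given as rows of (r, g, b) tuples."""
    return [[classify_pixel(*px) for px in row] for row in pixels]
```

Working in hue and saturation rather than raw RGB is one plausible way to exploit the color difference Stewart describes, because saturated blue and green sit far from the pinkish tones of meat on the hue wheel.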

Lighting is often critically important in machine vision applications. For food inspection, the scientists had to come up with a solution that would produce enough light without producing a lot of heat. One solution they employed was a bank of white Luxeon LEDs from Lumileds Lighting LLC of San Jose, Calif. By turning the lights on for 1.2 ms in every frame, they cut the duty cycle to 3.6 percent and similarly reduced the power consumption and the heat generated.
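The 3.6 percent figure follows directly from the numbers given: at 30 frames a second, each frame lasts about 33.3 ms, and the LEDs are lit for 1.2 ms of that. A short check of the arithmetic, assuming one pulse per frame as the article states:

```python
FRAME_RATE_HZ = 30.0   # camera frame rate reported in the article
PULSE_MS = 1.2         # LED on-time per frame reported in the article

frame_period_ms = 1000.0 / FRAME_RATE_HZ    # about 33.3 ms per frame
duty_cycle = PULSE_MS / frame_period_ms     # fraction of time the LEDs are lit

print(f"frame period: {frame_period_ms:.1f} ms")
print(f"duty cycle:   {duty_cycle * 100:.1f} percent")
```

If the LEDs are driven at the same current while on, average power and heat scale roughly with this fraction, which is the saving the researchers describe.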

In test situations, the prototype has displayed a detection rate of 100 percent for pieces of glove 1.5 mm in size and of 70 percent for colored pieces of tubing measuring 1 mm. There were some problems, the researchers noted, when shadows from the chicken chunks obscured the test objects. They presented results of experimental trials of the system at the July meeting of the American Society of Agricultural Engineers in Tampa, Fla.

The institute plans to continue testing and refining the technique, and an industrial partner, Gainco Inc. of Gainesville, Ga., is working to transform the prototype into something suitable for a factory.

Machine vision systems already in use in food processing fall into two main application areas: the inspection of labels and packages, and the inspection of the food itself. Cognex Corp. of Natick, Mass., makes systems and products for both categories, said Steve Cruickshank, principal product marketing manager for the company’s PC Vision Systems Div.

Verifying labels

Label verification is particularly important for those who are allergic to foods such as peanuts. Food and Drug Administration regulations require labels to accurately disclose product content. Cognex recently introduced ProofRead, a product intended to help with label verification.

Originally a custom vision solution, the optical character verification system was converted to a standard product. Cruickshank pointed to two technical innovations in the system, which currently uses CCD cameras.

The first is that the system isn’t trained from an image of the printed output but rather directly from the printer font, which eases the burden on the end user. All that must be entered is the font type. Cruickshank said this approach also improves the system’s accuracy because printing isn’t a perfect process.

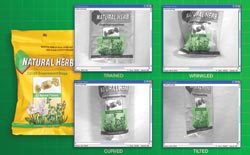

The second is an ability to recognize highly distorted character strings, using a tool the company calls PatFlex. These distortions result from wrapping a label around a cylindrical bottle, for example, or from filling a printed bag full of potato chips and air.

The PatFlex tool enables optical character recognition systems to locate targets regardless of deformations or changes in perspective. The green mesh illustrates how the deformation grid tracks pattern geometry on an object’s surface as it changes shape. Courtesy of Cognex Corp.

Banner Engineering Corp. of Minneapolis also has machine vision sensors that verify packaging and inspect food, said Jeff Schmitz, corporate business manager for vision sensors at the company. He noted that food inspection years ago might have been performed using only photoelectric point sensors. Today, inspections that require groups of photoelectric sensors could be done more cost-effectively with vision sensors or smart cameras. Schmitz gave as an example mass-produced cookies, which may be machine-made but which lose consumer appeal if they are too uniform.

Manufacturers of mass-produced baked goods desire variation in their products so that they look homemade, but the variation must be tightly controlled to satisfy other consumer requirements. Increases in the pixel count of the sensors in these systems enable the detection of smaller defects than previously was possible. Courtesy of Banner Engineering Corp.

“They need to have some variation in the size, shape, texture and so forth, so they look like Grandma made them,” he said.

On the other hand, cookies can be too nonuniform. If they’re too big, they may not fit in the package. If they’re too small, the customer may feel cheated.

Manufacturers of mass-produced baked goods, therefore, strive for tightly controlled variation and monitor the process with sensors. In the case of cookies, that might mean one vision sensor above the cookie and another on the side. By combining the two images, it’s possible to ensure that the length, width and height of the cookie fall within acceptable limits. Because this involves blob analysis of the image, the system can address other needs with just a software change. For example, the setup can check for burn spots on a tortilla or muffin.
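To make the two-camera measurement concrete, the sketch below finds the largest blob in a binary top view and a binary side view, converts its bounding box to millimeters and checks the result against limits. It is only an illustration of blob analysis in general, not Banner's implementation; the function names, the `mm_per_pixel` calibration and the limit values are hypothetical.

```python
from collections import deque


def largest_blob_bbox(mask):
    """Return (rows, cols) spanned by the largest 4-connected blob of 1s.

    `mask` is a non-empty list of equal-length rows of 0/1 values.
    Returns (0, 0) if the mask contains no foreground pixels.
    """
    rows, cols = len(mask), len(mask[0])
    seen = [[False] * cols for _ in range(rows)]
    best_area, best_size = 0, (0, 0)
    for r0 in range(rows):
        for c0 in range(cols):
            if not mask[r0][c0] or seen[r0][c0]:
                continue
            # Breadth-first flood fill collects one connected blob.
            queue = deque([(r0, c0)])
            seen[r0][c0] = True
            area = 0
            rmin = rmax = r0
            cmin = cmax = c0
            while queue:
                r, c = queue.popleft()
                area += 1
                rmin, rmax = min(rmin, r), max(rmax, r)
                cmin, cmax = min(cmin, c), max(cmax, c)
                for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
                    if (0 <= nr < rows and 0 <= nc < cols
                            and mask[nr][nc] and not seen[nr][nc]):
                        seen[nr][nc] = True
                        queue.append((nr, nc))
            if area > best_area:
                best_area = area
                best_size = (rmax - rmin + 1, cmax - cmin + 1)
    return best_size


def cookie_in_spec(top_mask, side_mask, mm_per_pixel, limits):
    """Check length, width and height in mm against (low, high) limits."""
    length_px, width_px = largest_blob_bbox(top_mask)   # view from above
    height_px, _ = largest_blob_bbox(side_mask)         # view from the side
    measured = {
        "length": length_px * mm_per_pixel,
        "width": width_px * mm_per_pixel,
        "height": height_px * mm_per_pixel,
    }
    ok = all(limits[k][0] <= v <= limits[k][1] for k, v in measured.items())
    return ok, measured
```

Because the measurement step is separate from how the mask is produced, the same blob routine could in principle be pointed at a mask of dark pixels to look for burn spots, which is the kind of software-only change described above.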

More pixels, more imagers

Schmitz noted two trends in his company’s vision sensors, both of which affect their use in food processing. The first is an increase in resolution. Three or more years ago, the resolution was on the order of 640 × 480 pixels. The company’s latest product, the PresencePlus P4, has a 1280 × 1024-pixel resolution. This increase in pixels means that smaller defects can be spotted.
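A rough calculation shows why the extra pixels matter. For a fixed field of view, doubling the pixel count across the image halves the width of belt that each pixel covers. The 320-mm field of view below is a hypothetical value chosen for round numbers, and `pixel_footprint_mm` is an illustrative helper, not part of any Banner product.

```python
def pixel_footprint_mm(field_of_view_mm, pixels_across):
    """Width of the scene covered by a single pixel, in millimeters."""
    return field_of_view_mm / pixels_across


FIELD_OF_VIEW_MM = 320.0   # hypothetical width of the inspected area

older = pixel_footprint_mm(FIELD_OF_VIEW_MM, 640)    # 640 x 480 sensor
newer = pixel_footprint_mm(FIELD_OF_VIEW_MM, 1280)   # 1280 x 1024 sensor

print(f"640 pixels across:  {older:.2f} mm per pixel")
print(f"1280 pixels across: {newer:.2f} mm per pixel")
```

The smallest defect a system can reliably detect also depends on optics, contrast and how many pixels a defect must cover, so the footprint is only a lower bound.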

The second trend lies in the type of sensor incorporated into these systems. Years ago, the only choice would have been a CCD imager. The latest products are based on CMOS imagers, an indication that the newer sensing technology has caught up with the old standard.

Schmitz pointed out that Banner’s products are all gray-scale sensors. The lack of color doesn’t present a problem when measuring size and doing blob analysis, but it makes the sensors unsuitable for grading fruit or inspecting chicken parts.

With machine vision in color and at the right price, vision systems could be looking at fruit, to weed out pieces with bad spots, and checking to make sure that only chicken — and not some foreign object — ends up in your meal.

Fourier Transform IR Spectroscopy Samples Meats

Researchers at the University of Manchester in the UK are solving meaty food processing problems using photonic techniques that do not employ machine vision. The first problem is that there is no quick way to accurately detect whether beef has been spoiled by or contaminated with bacteria. Another involves the fact that it is hard to automatically distinguish between types or even cuts of meat.

According to Royston Goodacre, a professor of biological chemistry at the university, the research group has met with some success on both fronts through the use of Fourier transform infrared (FTIR) spectroscopy. In FTIR, which is widely used in the biosciences, the absorbance spectrum of an infrared beam acts as a biochemical fingerprint because the absorbance at particular spectral wave numbers is due to specific chemical bonds in the sample.

In their investigation into spoilage, the researchers pulverized a small piece of beef, compressed it into a thin film and used a horizontal attenuated total reflectance accessory mounted on an infrared spectrometer to measure the chemical changes that occurred as it went bad. The accessory, a ZnSe crystal, internally reflected incoming radiation many times, resulting in an evanescent field that penetrated the sample to a depth of about 1 μm, producing the infrared absorbance that they measured.

The scientists collected data hourly and compared them with the results of standard bacterial tests, which take hours to complete. In contrast, the FTIR spectra were collected in minutes. They then correlated the bacterial load and the absorbance through the use of genetic algorithms and got good agreement. They found the carbon-nitrogen bonds of two biochemicals, amides and amines, particularly significant.

In an extension of the technique, the group has developed an approach in which specific wave numbers could be used to discriminate between different species and muscle types of meat. This could help rapidly identify meat products and spot food adulteration.

The research on meat spoilage, performed in conjunction with investigators at the University of Wales in Aberystwyth, UK, was reported in the July 1, 2004, issue of Analytica Chimica Acta. The Manchester group is in the process of publishing its results on identifying types and cuts of meat using FTIR spectroscopy.