Machine Vision Makes the Move to IoT

The introduction of the GigE Vision standard in 2006 brought new levels of product interoperability and networking connectivity for machine vision system designers, paving the way for the emergence of IoT.

By Jonathan Hou

One of the most hyped technologies in recent years has been the Internet of Things (IoT), a trend that has entered our consumer lives via home monitoring systems, wearable devices, connected cars, and remote health care. These advancements have been, in large part, attributed to two factors: the expansion of networking capabilities and the availability of lower-cost devices. In the vision market, by comparison, these same factors have been key challenges that have instead slowed the adoption of IoT.

The increasing number of imaging and data devices within an inspection system, combined with more advanced sensor capabilities, poses a growing bandwidth crunch in the vision market. Cost is also a significant barrier for the adoption of IoT, particularly when considering an evolutionary path toward incorporating machine learning in inspection systems.

Evolving toward IoT and AI

IoT promises to bring new cost and process benefits to machine vision and to pave the path toward integrating artificial intelligence (AI) and machine learning into inspection systems.

The proliferation of consumer IoT devices has been significantly aided by the availability of lightweight communication protocols (Bluetooth, MQTT, and Zigbee, to name just a few) for sharing low-bandwidth messaging or beacon data. These protocols provide “good enough” connectivity in applications where delays are acceptable or unnoticeable. For example, you likely wouldn’t notice if your air conditioner took a few seconds to start when you got home.

In comparison, imaging relies on low-latency, uncompressed data to make a real-time decision. Poor data quality or delivery can translate into costly production halts or secondary inspections — or worse, a product recall that does irreparable brand harm.

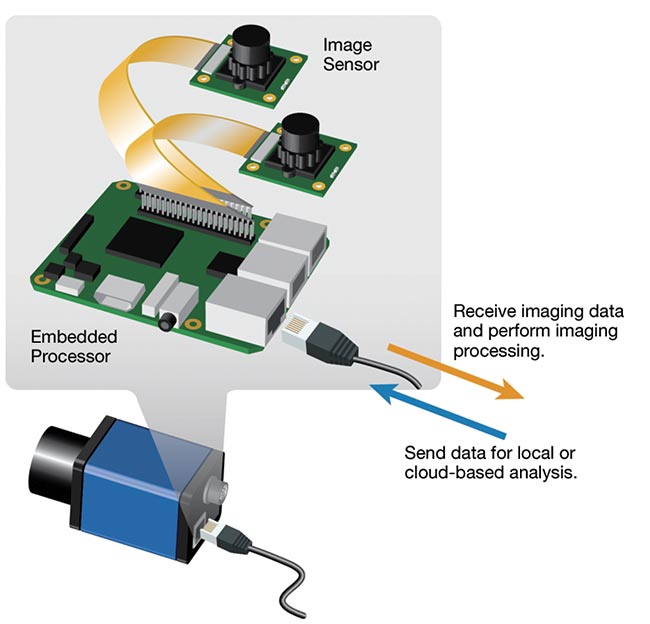

Embedded smart devices integrate off-the-shelf sensors and processing platforms to enable compact, lower-power devices that can be more easily networked in IoT applications. Courtesy of Pleora Technologies.

Traditionally, inspection has relied on a camera or sensor transmitting data back to a central processor for analysis. For new IoT applications that integrate numerous image and data sources, including hyperspectral and 3D sensors outputting data in various formats, this approach poses a bandwidth challenge.

To help solve the impending bandwidth crunch, designers are investigating smart devices that process data and make decisions at the edge of the inspection network. These devices can take the form of a smart frame grabber that integrates directly into an existing inspection network or a compact sensor and embedded processing board that bypass a traditional camera.

Smart devices receive and process data, make a decision, and then send the data to other devices and local or cloud-based processing. Local decision-making significantly reduces the amount of data required to be transmitted back to a central processor. This lowers bandwidth demands while also reserving centralized processing power for more complex analysis tasks. The compact devices also allow intelligence to be placed at various points within the network.
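To put rough numbers on the bandwidth savings from local decision-making, the following sketch compares an uncompressed video stream with a stream of compact decision records. The camera resolution, frame rate, and 64-byte record size are illustrative assumptions, not figures from any particular product.

```python
def raw_stream_mbps(width, height, bits_per_pixel, fps):
    """Bandwidth of an uncompressed video stream in megabits per second."""
    return width * height * bits_per_pixel * fps / 1_000_000


def result_stream_mbps(bytes_per_result, results_per_second):
    """Bandwidth of compact per-frame decision messages in megabits per second."""
    return bytes_per_result * 8 * results_per_second / 1_000_000


# Assumed camera: 1920 x 1080 pixels, 8-bit monochrome, 60 frames per second.
raw = raw_stream_mbps(1920, 1080, 8, 60)
# Assumed edge output: one 64-byte pass/fail record per frame.
processed = result_stream_mbps(64, 60)

print(f"raw video:    {raw:.3f} Mb/s")
print(f"edge results: {processed:.5f} Mb/s")
```

Under these assumptions the raw stream comes to roughly 995 Mb/s, close to the capacity of a single 1 Gb/s Ethernet link, while the decision records need about 0.03 Mb/s.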

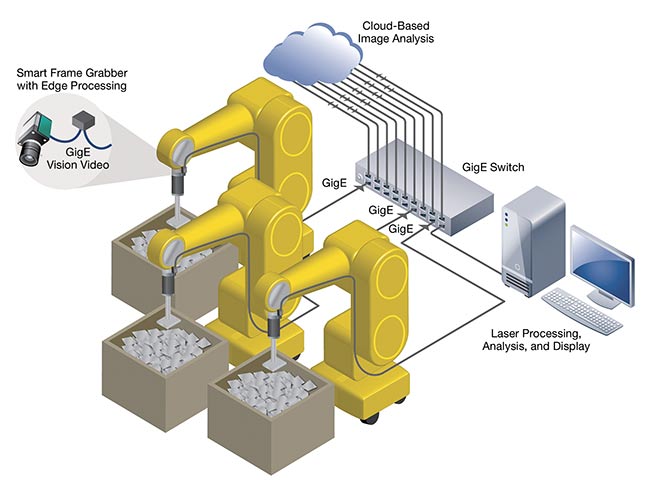

A smart frame grabber, for example, can be integrated directly into a quality inspection line to receive data from an existing camera and make a decision. Lower-bandwidth processed data, instead of raw video data, can then be shared with the rest of the system. The smart frame grabber also converts all sensors into GigE Vision devices, providing a uniform data set to use across the application. This means advanced inspection capabilities, such as hyperspectral imaging and 3D scanning, can be integrated into an existing inspection system.
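As a minimal sketch of the "receive, decide, share a result" flow described above, the example below counts dark pixels in a grayscale frame and produces a small pass/fail record in place of the raw image. The function name `inspect_frame`, the thresholds, and the JSON record format are all hypothetical; a real smart frame grabber would run vendor-specific processing on full-size frames.

```python
import json


def inspect_frame(frame, dark_threshold=50, max_dark_pixels=3):
    """Count dark pixels in a grayscale frame and return a compact pass/fail record."""
    dark = sum(1 for row in frame for pixel in row if pixel < dark_threshold)
    return {"dark_pixels": dark, "passed": dark <= max_dark_pixels}


# Tiny 4 x 4 stand-in for a camera frame (values 0-255); a real frame is far larger.
frame = [
    [200, 210, 205, 198],
    [202,  20,  25, 207],
    [199,  30,  22, 201],
    [204, 206, 203, 200],
]

result = inspect_frame(frame)
# The record, not the frame, is what would be shared with the rest of the system.
message = json.dumps(result).encode("utf-8")
print(result)
```

The size of the record stays fixed regardless of sensor resolution, which is where the bandwidth reduction comes from.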

The smart frame grabber approach provides an economical way to implement edge processing compared with upgrading expensive installed cameras and processing systems. This also provides a fast avenue toward adding preliminary AI functionality to an existing inspection line.

Classic computer vision analysis excels at finding defects or matching patterns once it is tuned with a known data set. In comparison, AI is trainable, and as it gains access to a wider data set it’s able to locate, identify, and segment a wider range of objects or faults. New AI algorithms can be added to a smart frame grabber to perform more sophisticated analysis, with the camera and AI-processed video stream transmitted from the device to existing machine vision software.

Software techniques enable the design of virtual GigE Vision sensors that can be networked to share data with other devices and local or cloud-based processing. Courtesy of Pleora Technologies.

Embedded smart devices enable more sophisticated processing at the sensor level. Key to this has been the introduction of lower-cost, compact embedded boards with processing power required for real-time image analysis. Embedded smart devices are ideal for repeated and automated robotic processes, such as edge detection in a pick-and-place system.

Like the smart frame grabber, embedded smart devices offer a straightforward path to integrating AI into vision applications. With local and cloud-based processing and the ability to share data between multiple smart devices, AI techniques can help train the computer model to identify objects, defects, and flaws while supporting a migration toward self-learning robotics systems.

Solving the connectivity challenge

The introduction of the GigE Vision standard in 2006 was a game changer that brought new levels of product interoperability and networking connectivity for machine vision system designers. Today’s designers contemplating IoT and AI face similar challenges, while also dealing with an increasing number of imaging and nonimaging sensors and new uses for data beyond traditional inspection. Once again, GigE Vision is providing the path forward to more sophisticated analysis.

Industrial IoT promises the ability to leverage various types of sensors and edge processing to increase inspection speed and quality. However, 3D, hyperspectral, and infrared (IR) capabilities that would power high-definition inspection each have their own interface and data format. This means designers can’t easily create a mix-and-match inspection system to take advantage of advanced sensor capabilities or device-to-device networking. High-bandwidth sensors and an increased number of data sources also pose a bandwidth challenge.

Novel software techniques that convert any imaging or data sensor into a GigE Vision device ensure that each sensor speaks a common language. This enables seamless device-to-device communication across the network and back to local or cloud processing. Edge processing also significantly reduces the amount of data that needs to be transported, making wireless transmission for real-time vision applications a reality.
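The idea of giving every sensor a "common language" can be illustrated with a simple adapter pattern. The sketch below wraps two differently shaped sensor outputs in one uniform record. `CommonFrame` and both adapter functions are hypothetical names invented for this illustration; this is not the GigE Vision wire format or any vendor's API, only a picture of why a shared representation simplifies downstream code.

```python
from dataclasses import dataclass
import struct


@dataclass
class CommonFrame:
    """Hypothetical uniform record; not the GigE Vision wire format."""
    source: str
    width: int
    height: int
    channels: int
    payload: bytes


def from_depth_map(source, depth_rows):
    """Wrap a 3D sensor's depth map (rows of millimeter values) as a CommonFrame."""
    height, width = len(depth_rows), len(depth_rows[0])
    flat = [value for row in depth_rows for value in row]
    # Pack each depth value as an unsigned 16-bit little-endian integer.
    return CommonFrame(source, width, height, 1, struct.pack(f"<{len(flat)}H", *flat))


def from_spectral_cube(source, bands):
    """Wrap a hyperspectral cube (a list of 8-bit band images) as a CommonFrame."""
    height, width = len(bands[0]), len(bands[0][0])
    flat = [value for band in bands for row in band for value in row]
    return CommonFrame(source, width, height, len(bands), bytes(flat))


frames = [
    from_depth_map("depth-1", [[500, 502], [498, 1200]]),
    from_spectral_cube("hyper-1", [[[10, 20], [30, 40]],
                                   [[11, 21], [31, 41]],
                                   [[12, 22], [32, 42]]]),
]

# Downstream code handles every source the same way.
for f in frames:
    print(f.source, f.width, f.height, f.channels, len(f.payload))
```

Once each source is wrapped this way, the consuming loop needs no sensor-specific branches, which is the property the article attributes to converting sensors into GigE Vision devices.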

For real-time decision-making and cloud-based analysis, smart devices can share data with local processors to enable learning systems that access a wider pool of data and thereby improve processes that use machine learning techniques. Courtesy of Pleora Technologies.

This approach is already being adopted in the 3D inspection market. Designers are using software to convert existing sensors that identify surface defects into virtual GigE Vision devices. These 3D sensors are compact and low power, and are often part of a mobile inspection system, with no room for additional hardware. With a software approach, these devices can appear as “virtual GigE sensors” to create a seamlessly integrated network.

GigE Vision

GigE Vision, ratified in 2006 and managed by the Automated Imaging Association (AIA), is a global standard for video transfer and device control over Ethernet. The standard reduces costs for the design, deployment, and maintenance of high-speed imaging applications by enabling products from various vendors to interoperate seamlessly, while making it easier to leverage the long-distance reach, networking flexibility, scalability, and high throughput of Ethernet.


Today, designers are converting the image feeds from these sensors into GigE Vision to use traditional machine vision processing for analysis. Looking ahead, there will be obvious value in fully integrating the output from all of the sensors within an application to provide a complete data set for analysis and eventually AI.

Machine vision and the cloud

The cloud and having access to a wider data set will play important roles in bringing IoT to the vision market. Traditionally, production data has been limited to a facility. There is now an evolution toward cloud-based data analysis, where a wider data set from a number of global facilities can be used to improve inspection processes.

Instead of relying on rules-based programming, vision systems can be trained to make decisions using algorithms extracted from the collected data. With a scalable cloud-based approach to learning from new data sets, AI and machine learning processing algorithms can be continually updated and improved to drive efficiency. Smart frame grabbers and embedded imaging devices provide a straightforward entry point to integrating preliminary AI capabilities within a vision system.

Inexpensive cloud computing also means algorithms that were once computationally too expensive because of dedicated infrastructure requirements are now affordable. For applications such as object recognition, detection, and classification, the learning portion of the process that once required vast computing resources can now happen in the cloud rather than on dedicated, owned, and expensive infrastructure. The processing power required for imaging systems to accurately and repeatedly simulate human understanding, learn new processes, and identify and even correct flaws is now within reach for any system designer.

Providing this data is a first step toward machine learning, AI, and eventually deep learning for vision applications that leverage a deeper set of information to improve processing and analysis. Traditional rules-based inspection excels at identifying defects based on a known set of variables, such as whether a part is present or located too far from the next part.
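A rules-based check of the kind just described (is a part present, and is it too far from the next one) might look like the sketch below. The function name, the millimeter positions, and the tolerance values are assumptions chosen for illustration.

```python
def rules_based_check(part_positions_mm, expected_count, max_gap_mm):
    """Return a list of rule violations for parts detected along a conveyor."""
    problems = []
    # Rule 1: every expected part must be present.
    if len(part_positions_mm) < expected_count:
        problems.append("part missing")
    # Rule 2: neighboring parts must not be farther apart than max_gap_mm.
    ordered = sorted(part_positions_mm)
    for left, right in zip(ordered, ordered[1:]):
        if right - left > max_gap_mm:
            problems.append(f"gap of {right - left} mm after part at {left} mm")
    return problems


good_line = rules_based_check([0, 50, 100, 150], expected_count=4, max_gap_mm=60)
bad_line = rules_based_check([0, 50, 130], expected_count=4, max_gap_mm=60)
print(good_line)
print(bad_line)
```

Every criterion here is fixed in code, so the check is fast and predictable but has to be rewritten whenever the definition of a defect changes.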

In comparison, a machine learning-based system can better solve inspection challenges when defect criteria change, for example, when the system needs to identify and classify scratches that differ in size or location, or across various materials on an inspected device. With a new reference data set, the system can be easily trained to identify scratches on various types of devices or pass/fail tolerances based on requirements for various customers.

Beyond identifying objects, a robotic vision system designed for parts assembly can use a proven data set to program itself to understand patterns, know what its next action should be, and execute the action.

The need to transport and share large amounts of potentially sensitive, high-bandwidth sensor data to the cloud will also help drive technology development in new areas for the vision industry, including lossless compression, encryption, security, and higher-bandwidth wireless sensor interfaces.
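Returning to the scratch example, the idea of retraining on a new reference data set to obtain different pass/fail tolerances for different customers can be sketched with a deliberately simple model. The code below is a one-feature nearest-centroid classifier, swapped in here purely for illustration; production systems would typically use far richer models and image features. The sample data, customer names, and the assumption that defects are the longer scratches are all hypothetical.

```python
from statistics import mean


def train_threshold(samples):
    """Learn a cut-off from labeled (scratch_length_mm, defective) pairs.

    The cut-off is the midpoint between the mean length of acceptable
    examples and the mean length of defective examples.
    """
    ok = [length for length, defective in samples if not defective]
    bad = [length for length, defective in samples if defective]
    return (mean(ok) + mean(bad)) / 2


def is_defect(length_mm, threshold):
    """Classify a scratch, assuming defects are the longer scratches."""
    return length_mm > threshold


# Hypothetical reference data sets reflecting two customers' tolerances.
customer_a = [(0.1, False), (0.3, False), (1.1, True), (1.3, True)]
customer_b = [(0.5, False), (1.5, False), (3.0, True), (4.0, True)]

threshold_a = train_threshold(customer_a)
threshold_b = train_threshold(customer_b)

# The same 1.0 mm scratch is judged differently under each trained tolerance.
print(is_defect(1.0, threshold_a), is_defect(1.0, threshold_b))
```

No inspection logic changes between the two customers; only the reference data does, which is the contrast with a fixed rule set.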

With some incremental technology shifts toward bandwidth-efficient devices integrating preliminary AI functionality, IoT will be a reality for the vision industry. The first key step is to bring processing to the edge of the network via smart devices. These devices must then be able to communicate and share data, without adding costs or complexity to existing or new vision systems. Finally, while the concept of shared data is fundamentally new for the vision industry, it is the key to ensuring processes can be continually updated and improved to drive efficiencies.

Meet the author

Jonathan Hou is chief technology officer of Pleora Technologies Inc., a provider of sensor networking solutions for the vision market.


Published: September 2019

Glossary

- machine vision

- Machine vision, also known as computer vision or computer sight, refers to the technology that enables machines, typically computers, to interpret and understand visual information from the world, much like the human visual system. It involves the development and application of algorithms and systems that allow machines to acquire, process, analyze, and make decisions based on visual data.

Key aspects of machine vision include:

Image acquisition: Machine vision systems use various...

- deep learning

- Deep learning is a subset of machine learning that involves the use of artificial neural networks to model and solve complex problems. The term "deep" in deep learning refers to the use of deep neural networks, which are neural networks with multiple layers (deep architectures). These networks, often called deep neural networks or deep neural architectures, have the ability to automatically learn hierarchical representations of data.

Key concepts and components of deep learning include:

...

- internet of things

- The internet of things (IoT) refers to a network of interconnected physical devices, vehicles, appliances, and other objects embedded with sensors, actuators, software, and network connectivity. These devices collect and exchange data with each other through the internet, enabling them to communicate, share information, and perform various tasks without the need for direct human intervention.

Key characteristics and components of the internet of things include:

Connectivity: IoT...
