Smart Perception is the Next Stage for Machine Vision

An innovation in hyperspectral imaging combines the high resolution of a linescan sensor with the capabilities of a snapshot camera.

JULIEN PICHETTE, IMEC

Intelligent robotics is one of the technological disruptions that will fundamentally alter society, from both an industrial and a social perspective. And “smart perception” is a key concept that will drive this evolution toward intelligent robotics. Indeed, in the next 20 years, micro-sized cameras will look at every angle, depth, mode or change in any given scene and report back with all relevant information. Think of precision agriculture. Or a surgeon’s operating theater enhanced by real-time visualization of critical tissue parameters imaged from inside the patient’s body. Or a car that hums through dense traffic based on an understanding of its surroundings, including what’s around the corner and what will happen next.

The camera system as a prototype (left) and in an exploded view (right). Courtesy of Imec.

Yet, to equip tomorrow’s robots with smart perception capabilities, we’ll need new technologies: new hardware and new software. What’s needed is mass-produced, single-chip, high-quality hyperspectral vision technology that replaces today’s expensive and complex equipment. And there’s a need for hyperspectral cameras that combine the high spectral and spatial resolution of hyperspectral linescan cameras with the ability to acquire data sets as easily as with a digital snapshot camera. With such technology, acquiring and processing full hyperspectral images takes only seconds, which opens up many new opportunities, especially in the life sciences, medical applications and diagnostics, domains that are not served by existing hyperspectral camera solutions.

Evolution in hyperspectral imaging

Machine vision enables a computing device to inspect, evaluate and identify still or moving images. As such, it is actually quite similar to the use of surveillance cameras but provides automatic image capturing, evaluation and processing capabilities. A machine vision system typically consists of digital cameras and back-end image processing hardware and software. The camera at the front end captures images from the environment or from a focused object and then sends them to the processing system where the images are either stored or processed.

Equipping machines and robots with smart perception capabilities goes a step further. These perceptive systems include smart cameras that integrate various viewing and sensing modalities into a single compact and cheap system: cameras that can recognize and scan a surface through radar technology, look below the surface with an x-ray component, analyze material through a hyperspectral view and have the necessary on-board intelligence to acquire and merge the various data streams into exactly the view needed by the application. Surgeons, for instance, ask for as much “live” visual information as possible while they are operating. Today, they often rely solely on stills of scans (x-ray, MRI, etc.) and live RGB camera footage. With all new technology coming online, however, they will soon be equipped with live visual information enriched with spectral and ultrasound data to visualize oxygen levels and blood circulation, 3D surface information, depth imaging, tissue type recognition and anomaly detection, all in real time and with the ability to zoom into microscopic detail.

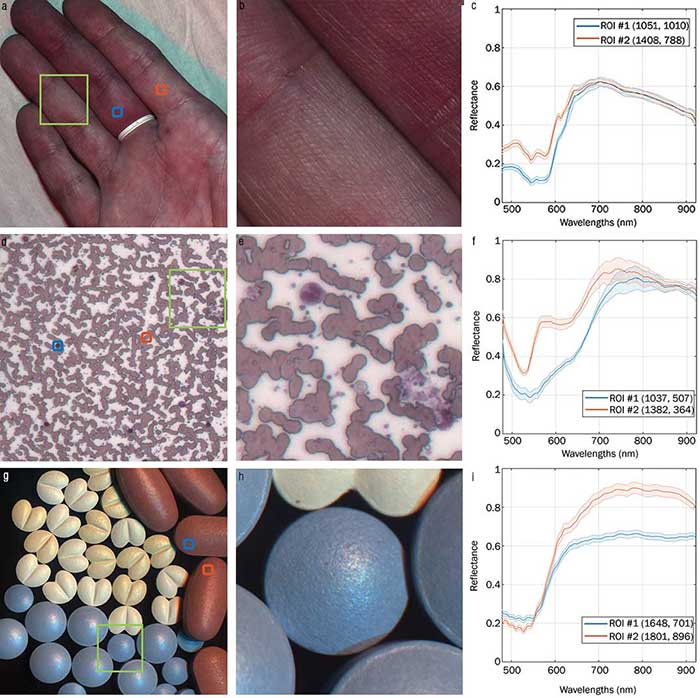

Full view of the datacubes (left), with a zoom over a region of interest (middle). Blue and red spectra correspond to the blue and red selection on the left-hand figures (right). Displayed spectra are an average over a 5 × 5 region with the corresponding standard deviation displayed in transparency. The datasets are a hand (a,b,c), a blood smear (d,e,f) and pills (g,h,i). Courtesy of Imec.

Hyperspectral cameras will be at the basis of this evolution. They split the light reflected by an object into many spectral bands, which they capture and process separately. For each pixel in a scene, a complete spectral signature is recorded; as such, much more information is captured than what can be achieved through standard RGB imaging, which mimics what the human eye can see.
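To make the idea of a per-pixel spectral signature concrete, the short Python sketch below treats a hyperspectral image as a data cube indexed by row, column and band, and averages the spectra inside a small window, in the spirit of the 5 × 5 averages shown in the figure. The function name `region_spectrum`, the nested-list layout and the use of the population standard deviation are illustrative assumptions, not a description of Imec’s software.

```python
from statistics import mean, pstdev


def region_spectrum(cube, row, col, half=2):
    """Mean and standard deviation per band over a (2*half+1) x (2*half+1) window.

    `cube` is a nested list indexed as cube[row][col][band] (assumed layout).
    The window must lie fully inside the image.
    """
    rows = range(row - half, row + half + 1)
    cols = range(col - half, col + half + 1)
    n_bands = len(cube[row][col])
    means, stds = [], []
    for b in range(n_bands):
        values = [cube[r][c][b] for r in rows for c in cols]
        means.append(mean(values))
        stds.append(pstdev(values))  # population std; the article does not say which was used
    return means, stds


# Synthetic 8 x 8 scene with 4 bands; every pixel carries the same made-up spectrum.
cube = [[[0.1, 0.4, 0.7, 0.2] for _ in range(8)] for _ in range(8)]
means, stds = region_spectrum(cube, row=4, col=4)
```

An RGB camera would keep only three such values per pixel; a hyperspectral cube keeps one value for every band, which is why the per-pixel list here can be arbitrarily long.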

So far, the primary disadvantages of hyperspectral imaging have been cost and complexity. Traditional hyperspectral cameras are based on expensive, heavy and large specialized optics. Moreover, it is typically very challenging to get them calibrated and running properly.

To overcome those limitations, Imec developed a hyperspectral imager that makes use of optical filters on top of CMOS image sensors. This has resulted in a number of compact, cost-efficient and fast hyperspectral cameras that enable a broad range of exciting new applications.

Mosaic sensors

Linescan hyperspectral cameras scan scenes line by line. This technique is well-suited for applications that need a high spatial and spectral resolution, and that involve scenes that pass by the camera, such as remote sensing or conveyor belt applications. Concrete examples include aerial observation with planes or satellites and food sorting applications.

Linescan technology, however, is less suited for 2D scenes with a freely moving camera or scene. Think of inspection cameras in robots, free-flying drones, movement-activated security cameras, microscopic imaging or real-time imaging during surgery. For those types of applications, you need whole-frame acquisition — with an entire three-dimensional multispectral image being sensed at once.

That type of capability can be provided by snapshot hyperspectral imaging solutions. Snapshot hyperspectral technology uses filters that are organized in a mosaic or tiled configuration instead of a linescan’s staircase approach. Each tile is responsible for sensing one narrow band of the spectrum, and it does so for the whole scene. This approach has already resulted in the creation of the world’s smallest and ultralight industrial hyperspectral cameras, which are especially suited for use in small UAVs and drones (where power use and thermal management are key and payload weights critical, but where high spatial resolution is not vital). One important application domain for this technology is precision agriculture.
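The resolution trade-off of a mosaic layout can be illustrated with a few lines of Python. The sketch assumes a repeating 4 × 4 per-pixel filter pattern (16 bands) and a raw frame stored as a nested list; both are hypothetical choices for illustration, and `mosaic_to_cube` is not an Imec function.

```python
def mosaic_to_cube(raw, pattern=4):
    """Split a raw mosaic frame into pattern*pattern band images.

    raw[y][x] is one sensor pixel; the filter at (y % pattern, x % pattern)
    determines its band (assumed layout).
    """
    height, width = len(raw), len(raw[0])
    if height % pattern or width % pattern:
        raise ValueError("frame size must be a multiple of the pattern size")
    bands = []
    for dy in range(pattern):
        for dx in range(pattern):
            # Keep every pattern-th pixel, starting at this filter position.
            bands.append([row[dx::pattern] for row in raw[dy::pattern]])
    return bands  # indexed as bands[band][row][col]


# Synthetic 8 x 16 frame in which each pixel stores its own band index.
raw = [[(y % 4) * 4 + (x % 4) for x in range(16)] for y in range(8)]
bands = mosaic_to_cube(raw)
```

Each of the 16 band images here is only 2 × 4 pixels: all bands are captured in a single exposure, but each at a fraction of the sensor’s spatial resolution.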

In other words: Snapshot (mosaic) sensors can offer a good alternative to linescan imagers because of their real-time capabilities. However, their lower resolution can be a problem in, for example, medical applications such as endoscopy, brain surgery or wound imaging. Those higher resolutions are offered by hyperspectral linescan imagers; but that technology is typically not suited for medical applications either, because it intrinsically needs a scene/object to move through the imaging field along the scanning direction.

To fill that gap, researchers at Imec developed a novel, patent-pending hyperspectral camera. The Snapscan camera uses a sensor that moves behind the lens to scan the projected image. Scanning is handled internally by a miniaturized scanning stage, so from a user’s point of view no external translation or speed synchronization is required. Hence, the camera combines the high-resolution capabilities of linescan sensors (up to 2048 × 3652 × 150 in the spectral range of 475 to 900 nm) with the convenience of a snapshot camera. Flat signal-to-noise ratios, as high as 200 over the full spectral range, have already been demonstrated thanks to software features that optimize the reconstruction and correction of hyperspectral data cubes.
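How frames from a moving linescan sensor can be reassembled into a data cube is sketched below in Python. This is a toy model under stated assumptions: the sensor carries exactly one row per spectral band, it advances one image line per frame, and the helper names `scan` and `reconstruct` are hypothetical. The actual sensor layout and Imec’s reconstruction and correction software are not described in this article.

```python
def scan(scene, n_bands):
    """Simulate frames from a sensor whose row b carries the filter for band b.

    scene[y][x][b] is the ideal data cube projected by the lens.
    Returns {offset: frame}, where frame[b] is the line seen by sensor row b.
    """
    height = len(scene)
    frames = {}
    for offset in range(-(n_bands - 1), height):
        frame = []
        for b in range(n_bands):
            y = offset + b  # image line currently under sensor row b
            # Sensor rows outside the image record nothing.
            frame.append([px[b] for px in scene[y]] if 0 <= y < height else None)
        frames[offset] = frame
    return frames


def reconstruct(frames, height, n_bands):
    """Reassemble the cube: band b of line y was recorded at offset y - b."""
    cube = []
    for y in range(height):
        lines = [frames[y - b][b] for b in range(n_bands)]
        # Transpose from band-major lines to per-pixel spectra.
        cube.append([list(spectrum) for spectrum in zip(*lines)])
    return cube


# Synthetic 5 x 3 scene with 4 bands; each value encodes its own coordinates.
scene = [[[y * 100 + x * 10 + b for b in range(4)] for x in range(3)] for y in range(5)]
cube = reconstruct(scan(scene, 4), height=5, n_bands=4)
```

In this model every image line is visited once by every band row, so the full spatial resolution is kept for all bands, at the cost of a scan that takes several frames instead of a single exposure.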

High-resolution, full hyperspectral images are acquired in a matter of seconds. This widens the market for hyperspectral imaging research and development significantly — enabling many additional opportunities in domains such as digital microscopy for pathology and cytogenetics as well as medical imaging for endoscopy, wound diagnostics and guided surgery.

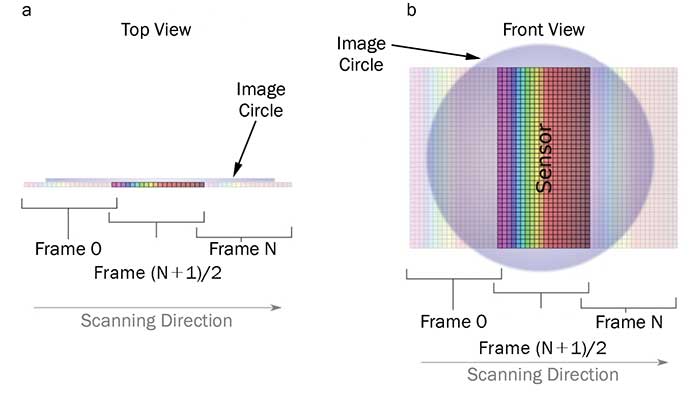

A hyperspectral linescan sensor is displaced behind the lens using a piezoelectric translation stage, allowing controlled scanning of the lens image circle. The scene is thus scanned without moving the object or the camera. Courtesy of Imec.

As a result, objects that cannot be placed on a translation stage, such as an outdoor scene or a patient’s brain, can still be scanned. Although image acquisition does not happen in real time, a scan can take less than two seconds. If necessary, image registration can be applied to correct small movement artifacts from the imaged target. The sensor board is light (<25 grams), so a piezo stage can easily achieve the level of precision required for this application (for other hyperspectral cameras weighing multiple kilograms, this would be a real challenge).

Promising medical applications

To demonstrate the applicability of the hyperspectral camera in life sciences, medical applications and diagnostics, Imec performed experiments on three different real-life targets.

First, the hyperspectral camera imaged a person’s hand, with one finger tied with a rubber band. In the image of the fingers, the blue spectra are taken from the finger with the restricted blood flow, whereas the red spectra are taken from a normally oxygenated finger. With the naked eye, there is hardly any difference to be observed between the deoxygenated and the oxygenated fingers. However, the hyperspectral curves show a clear difference in the spectral response of the two fingers, related to the presence of oxygenated versus deoxygenated blood. Because oximetry is at the basis of many medical applications, these results indicate that the camera could be used, for example, to monitor wound healing (where oxygenation is essential) or during surgery (to follow up in real time on the condition of the organs).

In a second experiment, a blood smear was imaged, with the hyperspectral camera attached to an optical port of a microscope. Snapscan delivered high spatial and spectral resolution, allowing users to see details of fine structures in the sample. Snapscan thus proved promising for microscopy applications as well, where automatic counting or identification of certain types of cells could be performed.

In a third experiment, six types of pharmaceutical pills were imaged. Whereas some pills could not be differentiated using only RGB imaging, the hyperspectral curves in the NIR spectrum clearly allowed researchers to distinguish the individual pills. This example shows that Snapscan technology could be used by the pharmaceutical industry for detecting pill defects and contaminants.
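One common way to compare spectra automatically is the spectral angle, which measures the difference in shape between two curves while ignoring overall brightness. The Python sketch below uses it to assign a measured spectrum to the closest reference. It is offered only as an illustration: the article does not state which method the researchers used, and the pill names and reflectance values are invented for the example.

```python
import math


def spectral_angle(a, b):
    """Angle in radians between two spectra treated as vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    # Clamp to guard against rounding slightly outside [-1, 1].
    return math.acos(max(-1.0, min(1.0, dot / norm)))


def classify(spectrum, references):
    """Return the name of the reference spectrum with the smallest angle."""
    return min(references, key=lambda name: spectral_angle(spectrum, references[name]))


# Invented spectra: similar in the first three (visible) bands, different in the last (NIR).
references = {
    "pill_a": [0.80, 0.78, 0.75, 0.40],
    "pill_b": [0.80, 0.78, 0.75, 0.70],
}
measured = [0.40, 0.39, 0.38, 0.21]  # dimmer, but shaped like pill_a
label = classify(measured, references)
```

Because the two invented references differ only in the last band, a comparison restricted to the first three values could not separate them, which mirrors the RGB-versus-NIR observation above.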

By combining a compact hyperspectral CMOS-based sensor with a high-precision and equally compact translation stage, a new hyperspectral imaging technology has been developed. Sensors that were typically used in remote sensing or for conveyor belt applications can now image static scenes without the use of external translation, thus simplifying data acquisition for applications such as wound imaging, microscopy and surgery. Imec demonstrated the capabilities of the Snapscan hyperspectral system during preliminary experiments and plans to conduct feasibility studies for many more applications. Because samples can be characterized at very high resolution and with specific band selection, the technology can be targeted at very specific application fields.

Meet the author

Julien Pichette obtained his Ph.D. in electrical engineering from the University of Sherbrooke in Québec, Canada, in 2014. He joined Imec’s hyperspectral imaging team as an R&D engineer in 2015, after completing postdoctoral studies at Polytechnique Montréal in the Laboratory of Radiological Optics (LRO).
