Surgical robots perform increasingly delicate, minimally invasive surgeries — guided by OCT and other optical imaging techniques.

JOEL WILLIAMS, ASSOCIATE EDITOR

The first robotic surgery took place in 1985, when the PUMA 560 was used in a stereotaxic operation in which computed tomography (CT) was used intraoperatively to guide a robot as it inserted a needle into the brain for biopsy. In the late 1980s and early 1990s, robotic systems began to be used for laparoscopic surgery, in which a flexible optical instrument was inserted into the body and used to guide surgeons through hard-to-reach areas, from the pelvis to the chest cavity.

By the turn of the century, Intuitive Surgical Inc.’s da Vinci surgical system, which was approved by the FDA in 2000, was used for general laparoscopic surgical procedures and even cardiovascular surgeries, a high-water mark in the early years. The system is still in wide use today.

Intuitive Surgical Inc.’s da Vinci is among the most well-known surgical robotic systems. Courtesy of Intuitive Surgical Inc.

Fast forward two decades, and today’s surgical robots are more precise and user-friendly, and capable of highly delicate microsurgeries, thanks to advancements in optical technology that have allowed for safe and effective intraoperative imaging. From a patient’s perspective, one of the biggest benefits of robot-assisted minimally invasive surgery is the reduced trauma, which enables quicker recovery times when compared to open surgery.

In minimally invasive surgery, typically only two to three small incisions are made: one or two for surgical tools and one for an imaging tool. In past open procedures, a surgeon might have needed to make an incision a foot or more long and then crack open the rib cage to be able to access and view the surgical site. Recovery from such a procedure would take much longer than recovery from a modern minimally invasive procedure. A gallbladder surgery, for example, was once a major undertaking, but today it’s often a same-day procedure.

The three pillars

If anyone can speak to the evolution of robotic surgery, it’s Russell H. Taylor, the John C. Malone Professor of Computer Science at Johns Hopkins University, who is known by many as the father of medical robotics. He is credited with breakthroughs in computer-assisted brain, spinal, eye, ear, nose, and throat surgeries.

Taylor characterized surgical robotics as a three-way partnership between people, technology, and imaging devices that works like legs on a stool. The removal of any of those pillars would render a robot useless.

Roughly speaking, there are two types of imaging when it comes to surgery, according to Taylor. The first is preoperative imaging, performed prior to surgery. This includes x-rays, MRIs, PET scans, and so on. The images are loaded into a computer and used to guide and inform the surgeon’s movements. With preoperative imaging alone, a surgeon or robot is unable to see what’s happening within the patient’s body during the procedure. Seeing that requires the second type, intraoperative imaging, which enables real-time viewing. However, this type of imaging also has limitations.

“Usually you use an endoscopic imaging system,” said Jin Kang, a professor of electrical and computer engineering at Johns Hopkins Whiting School of Engineering, who has collaborated with Taylor. “The problem is when you do that, you have a limited visualization. But more than that, you have a limited depth perception. And so that becomes an issue.”

Kang, whose expertise encompasses optical intraoperative imaging and image-guided microsurgical robotics, pointed to the importance of 3D imaging, which enables greater precision of movement in surgery and can help prevent damage of critical tissues.

One of the most common 3D methods, and probably the best known, is stereoscopic imaging, which is used in many surgical robots, including the da Vinci. Two cameras are used to produce an image, which simulates human binocular vision and enables depth perception. A limitation of this technique is that, to create a clear image, the cameras need to be a certain distance from the target. The depth resolution, while better than a single-camera setup, is less than perfect.
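The link between two camera views and depth can be illustrated with a minimal sketch. It assumes an idealized pair of parallel pinhole cameras, which is a textbook simplification and not a description of the da Vinci's optics; the function names and the numbers in the usage note are hypothetical. Depth follows from the pixel shift (disparity) of a feature between the two views, and the second function shows why depth resolution is limited: a one-pixel disparity error costs more depth accuracy the farther away the target is.

```python
def depth_from_disparity(focal_length_px, baseline_mm, disparity_px):
    """Depth (mm) of a point seen by two parallel pinhole cameras.

    Assumed model: Z = f * B / d, with f in pixels, B (camera spacing)
    in millimeters, and d (disparity) in pixels.
    """
    if disparity_px <= 0:
        raise ValueError("disparity must be positive")
    return focal_length_px * baseline_mm / disparity_px


def depth_step_per_pixel(focal_length_px, baseline_mm, depth_mm):
    """Approximate depth change (mm) caused by a one-pixel disparity error.

    Derived from |dZ/dd| = Z**2 / (f * B) for the same assumed model.
    """
    return depth_mm ** 2 / (focal_length_px * baseline_mm)
```

With hypothetical values of a 1000-pixel focal length and a 5-mm camera spacing, a 100-pixel disparity corresponds to a depth of 50 mm, and a one-pixel matching error at that depth shifts the estimate by about 0.5 mm.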

Surgery at the micron level

Microsurgery is increasingly becoming an area of interest for the application of surgical robotics. Because of the limits of human fine motor control, open surgery becomes extraordinarily difficult at a small scale. For robots, which don’t have to deal with conditions such as fatigue or hand tremor, microsurgery is the same as any surgery. The biggest obstacle is obtaining a clear view of the surgical site.

Optical coherence tomography (OCT) is proving to be an effective modality of imaging for microsurgery, and robots can enhance its effectiveness. OCT has traditionally been used for diagnostics in ophthalmology, where it has proven to be a valuable tool. And Kang sees great potential for the technique in intraoperative imaging.

“The nice thing about OCT is that it’s really fast and it’s very high resolution,” Kang said. “So you can use it to get a very clear image of a small volume and then you can control your robotic tools very accurately. It provides very accurate dimensions of the tissue that you’re performing surgery on.”

Robot-guided OCT is well suited for micron-level imaging and shows promise for ocular surgery, neurosurgery, ear surgery, and vascular surgery.

According to Kang, OCT works similarly to ultrasound. Near-IR light is sent into tissue, where most of it scatters into various layers. Some is reflected and backscattered. At the core of a typical OCT system is a Michelson interferometer, which detects the reflected and backscattered light. This data is then used to construct a depth-resolved image. Because the technique uses light rather than sound or radio frequency to create an image, it is able to construct a much higher-resolution image compared with other techniques (such as ultrasound).

Kang has been experimenting with OCT for about 15 years to create a system suitable for guiding microsurgeries. “But in order to do that,” Kang said, “the system has to be fast, it needs to provide high-resolution images at high speeds because the surgical tools that you use are moving very fast. And then you have to be able to track the robotic tools, and guide them precisely to the target.”

Surgeons use Synaptive Medical’s Modus V to perform a neurosurgery. Preoperative imaging is displayed on the right, while a real-time image is displayed on a monitor to the left. Courtesy of Synaptive Medical.

Kang said he worked with different processing and imaging techniques, and on making the system compact enough to be integrated directly into a surgical tool.

With the robot-guided imaging system integrated directly into the surgical tools, the surgeon is afforded a first-person view of the action. As the imaging system moves around, the surgeon has a fuller view of the surgical site right in front of the tool being used.

“I think probably the first OCT-guided microsurgical robotic will be in ophthalmology,” Kang said. “OCT systems for eye diagnostics is such an accepted technology, and doctors are very used to using OCT.”

OCT may be ideally suited for ocular surgery because it can obtain a clear 3D image of the eye due to the largely transparent ocular tissue. The modality is effective in many microsurgical settings, and by varying the wavelength of the light, the range of effectiveness can be expanded.

“So, for example, in ophthalmology, typical wavelengths people use [are] something like 850 nm and one micron. For dermatology, we use about 1.3 microns, and then for something like brain and other tissue that is highly scattering, we can use something like 1.7 microns to reduce scattering and be able to image deeper,” Kang said.

OCT can also be used for spectral analysis (of oxygen saturation levels, for example) and for functional imaging to determine the location of blood vessels.

Instruments at the end of the da Vinci SP (single-port) surgical robot, which uses an endoscopic imaging system. Courtesy of Intuitive Surgical Inc.

The technique is not a catchall, though. For surgical sites with high blood volume, such as in cardiology, OCT will be unable to get a clear image due to blood’s light-absorbing qualities. With very little light being reflected, the interferometer has too little information to produce an image.

Extending microscopy’s reach

More traditional optical technologies, such as microscopy, have been in the surgical setting for generations. Surgical microscopes have become more sophisticated, integrating augmented reality, various spectral ranges, and more, but they can be cumbersome to move.

“What we saw was the surgeon spending a lot of time manipulating the microscope during the surgery,” said Cameron Piron, president of Synaptive Medical. “[A traditional microscope’s] optics are such that it’s focused down on a point, so it’s up to the surgeon to have the grand perspective of the anatomy during surgery.”

Enter the Modus V, Synaptive Medical’s robotically controlled microscope. “It was launched to be able to address the issue of workflow improvements and efficiency of optics in surgery,” Piron said. “It was really developed hand in hand, watching surgeons and how they’re doing their operations.”

The microscope’s first iteration combined a lens system called Vision with a robotic arm called Drive. A fixed-magnification lensing system suitable for microscopy was attached to a sterilizable camera head, and the entire setup was manipulated with a robotic arm. Because of its fixed magnification, the system covered a smaller field than the current model, but it did provide deep depth of field and hands-free control.

“We really looked at the inputs and the comments from our customers and realized that there’s an opportunity for a much more expansive system,” Piron said. When designing the robot’s current iteration, the company looked at increasing the field of coverage, improving resolution, and adding an adjustable magnification to the lensing system.

The current iteration of the technology consists of a robot arm with a high-definition camera system that has an adaptive optical lens array that provides 12.5× resolution, enabling the system to image structures 10 µm in diameter. The camera system is connected to a digital processing architecture that interprets and processes the information gathered from the optics and instruments.

“What’s very important is that [the information] is processed in parallel so it’s [in] real time, so you don’t see any perceptible lag as you move tools and instruments through the field. That’s an important requirement for surgeons,” Piron said.

Kang expressed the same sentiment. “Real-time imaging is critical. You can’t really have latency; otherwise it would be a really slow robot. So it would see, interpret, move a little bit, see, interpret — go, stop, go, stop. That’s not ideal,” he said.

In neurosurgery in particular, high speed is key, Piron said. It’s important not only for imaging, but also in terms of the surgery’s duration.

“It’s really critical to get that surgery done as quickly as you can and be as effective in your time during surgery as possible,” he said. “We’re always looking at ways we can reduce that surgical time and keep the surgeon’s attention most focused on the information, so that they can do the best possible job.”

Part of that process, Piron said, is ensuring that the doctor has all the relevant information about the patient, which means bringing in data from a variety of technologies, including preoperative imaging. In the same way that surgery is a team effort between surgeons and nurses — and now, robots — the delivery of information can be viewed in a collaborative context.

“We’re looking at better integration with other tools and instruments and other companies’ products as well,” Piron said. “We’re looking at emerging technologies for performing high-resolution cross-sectional imaging, to be able to look at other modalities and how they can better integrate into the surgical landscape.”

As robots evolve, the public will see more technologies being integrated into medicine. Machine learning, better processing power, finer motion capabilities, and faster and higher-resolution optical technologies will lead the way toward more efficient and effective surgeries.

Synaptive Medical’s Modus V robotic microscope can be manipulated quickly and with minimal effort using the handle seen here. Courtesy of Synaptive Medical.

Delineating a ‘Forbidden Region’ in an Area Designated for Surgery

One way to bolster the effectiveness of an imaging modality is through virtual fixtures, which bring augmented reality into the mix. Virtual fixtures are digital overlays that can be used to delineate a target or a “forbidden region” for both people and robots. They allow an increased level of spatial awareness, similar to the way humans interpret and assign value to visual data.

“When you walk into a room, what you do is actually, unconsciously, scan the room quickly so you know where the back wall is, where the doors are. So you build a 3D model of the room that you’re in,” said Jin Kang, a professor of electrical and computer engineering at Johns Hopkins Whiting School of Engineering. The trick is to get robots to do the same.

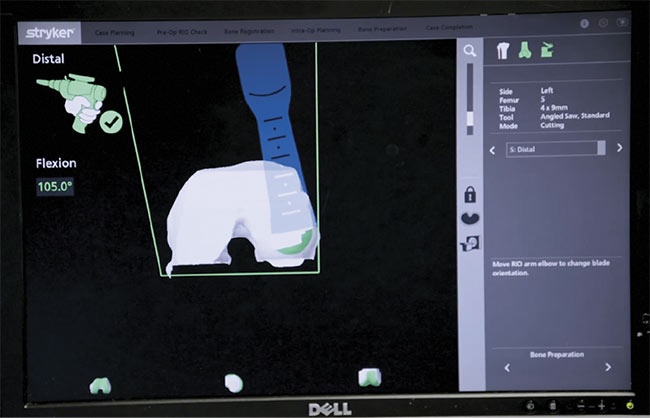

Stryker’s combination of preoperative and intraoperative imaging enables precise cutting to ensure reduced recovery time and an optimal fit for joint replacements. Here, the saw is nearly finished with the cutting plan, displayed in green toward the bottom of the 3D model on the monitor. Courtesy of Stryker.

Just as a human would know not to sit in a chair that someone else is sitting in, a virtual fixture can serve to delineate a forbidden region. For example, in neurosurgery, a virtual fixture can mark the carotid artery as a region not to be touched.
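As a rough illustration of how a forbidden region might be enforced in software, the sketch below models the region as a sphere and rejects any commanded tool motion that would end inside it or within a safety margin. The spherical shape, the margin, and the function name are assumptions made for illustration; the article does not describe how any particular system represents its virtual fixtures.

```python
import math


def filter_step(tip, step, center, radius, margin=1.0):
    """Return the commanded step, or a zero step if it would violate the region.

    tip, step, center: (x, y, z) tuples in millimeters.
    radius: radius of the hypothetical spherical forbidden region (mm).
    margin: extra clearance to keep around the region (mm).
    Only the end point of the step is checked, not the path to it.
    """
    target = tuple(t + s for t, s in zip(tip, step))
    if math.dist(target, center) < radius + margin:
        return (0.0, 0.0, 0.0)  # block the motion entirely
    return tuple(step)
```

For a tool tip 10 mm above a 3-mm forbidden sphere, a 2-mm advance passes through unchanged, while a 7-mm advance that would end 3 mm from the center is blocked. A real controller would more likely slow or redirect the tool than stop it outright; the hard stop here only keeps the example short.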

Stryker, the manufacturer of Mako Robotic-Arm Assisted Surgery systems used for joint replacements, ensures patient safety (and precise joint replacement fittings) using a combination of preoperative and intraoperative imaging. Preoperatively, CT scans of the patient’s joint are stitched together into a 3D model and loaded into the computer. After the patient is given anesthesia, an incision is made and pins are placed on the bone, with arrays located at the pin ends. The arrays are fitted with little reflectors that signal to the optical imaging system which bones are being looked at. The process is similar to the motion capture techniques used in filmmaking.

The surgeon then takes a probe and, according to prompts appearing on the 3D model displayed on the monitor, registers points on the patient’s bone that correspond with those displayed in the 3D image. The robot is then able to accurately follow the surgical plan loaded into the computer.

“If the patient moves, the camera captures this immediately and constantly signals the software of the specific bone location,” said Robert Cohen, vice president of global research and development at Stryker’s Joint Replacement Division.

Cohen explained that in knee replacement procedures, the surgeon registers about 20 points. With that data, the robot is able to know exactly where the bone resection saw is in relation to the bone. This level of accuracy, combined with the robot’s haptic boundaries, reduces trauma to soft tissues, which reduces recovery time, and also ensures that the new joint has the best possible fit.
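The idea behind matching probed points to a preoperative model can be sketched with generic paired-point rigid registration. The example below is a deliberately simplified 2D version that uses a closed-form least-squares fit; it is not Stryker's method, which the article does not detail, and a real system would work in 3D. The function names are hypothetical.

```python
import math


def register_2d(model_pts, patient_pts):
    """Least-squares rotation and translation mapping model points onto patient points.

    Both arguments are equal-length lists of paired (x, y) tuples.
    Returns (theta, (tx, ty)) such that patient ~= R(theta) * model + t.
    """
    n = len(model_pts)
    if n != len(patient_pts) or n < 2:
        raise ValueError("need at least two paired points")
    # Centroids of both point sets.
    mcx = sum(p[0] for p in model_pts) / n
    mcy = sum(p[1] for p in model_pts) / n
    pcx = sum(p[0] for p in patient_pts) / n
    pcy = sum(p[1] for p in patient_pts) / n
    # Accumulate dot and cross products of the centered pairs.
    s_dot = s_cross = 0.0
    for (mx, my), (px, py) in zip(model_pts, patient_pts):
        ax, ay = mx - mcx, my - mcy
        bx, by = px - pcx, py - pcy
        s_dot += ax * bx + ay * by
        s_cross += ax * by - ay * bx
    theta = math.atan2(s_cross, s_dot)
    c, s = math.cos(theta), math.sin(theta)
    # Translation that carries the rotated model centroid onto the patient centroid.
    tx = pcx - (c * mcx - s * mcy)
    ty = pcy - (s * mcx + c * mcy)
    return theta, (tx, ty)


def transform(theta, t, pt):
    """Apply the rotation theta and translation t to a single (x, y) point."""
    c, s = math.cos(theta), math.sin(theta)
    return (c * pt[0] - s * pt[1] + t[0], s * pt[0] + c * pt[1] + t[1])
```

Once the transform is known, any location in the preoperative model, such as a planned cut, can be mapped into the patient's current position with `transform`. Registering more points than the minimum, as with the roughly 20 points Cohen mentioned, helps average out probing error in a least-squares fit of this kind.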

According to the professor known as the father of medical robotics, Russell H. Taylor of Johns Hopkins, another optical technology that can help ensure patient safety is the interferometric strain gauge. When performing ophthalmologic surgery in particular, a light touch is key, he said. A force of just 7 millinewtons can tear the retina. (For reference, a human might be able to feel a force of 100 millinewtons.) Incorporating an interferometric strain gauge into a surgical tool enables the tool to sense and limit how much force is being applied. So, to preserve patient safety, a robot can be programmed to limit the amount of force it exerts on an eye.
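A force limit of this kind might be sketched as follows. The 7-millinewton figure comes from Taylor's example above; the safety factor, the linear slow-down, and the function name are assumptions made for illustration and do not describe any specific surgical system.

```python
def limit_advance(measured_force_mn, commanded_step_um,
                  force_limit_mn=7.0, safety_factor=0.5):
    """Scale a commanded tool advance according to the sensed tip force.

    measured_force_mn: force reported by the strain gauge (millinewtons).
    commanded_step_um: requested advance (micrometers); values <= 0 mean retract.
    force_limit_mn: force at which tissue damage is expected (7 mN per the article).
    safety_factor: hypothetical fraction of the limit at which advancing stops.
    """
    if commanded_step_um <= 0:
        return commanded_step_um  # always allow retraction
    threshold = force_limit_mn * safety_factor
    if measured_force_mn >= threshold:
        return 0.0  # too close to the limit: stop advancing
    # Slow the advance linearly as the force approaches the threshold.
    scale = 1.0 - measured_force_mn / threshold
    return commanded_step_um * scale
```

With these assumed settings, a 10-micrometer advance is passed through unchanged at zero force, halved at 1.75 millinewtons, and blocked at 3.5 millinewtons, well below the level at which the retina could tear.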