Robotics applications in the past were limited because motion planning and machine vision were applied in isolation.

NEIL TARDELLA AND JAMES ENGLISH, ENERGID TECHNOLOGIES CORP.

Historically, robots have improved manufacturing processes through mechanical, repetitive motion. In a typical operation, the arm of an industrial robot followed a preprogrammed sequence of movements, sensing or reasoning only as needed for motor control. It blindly reached toward a location and mechanically grasped whatever was there, and then moved and released the object at another location. Where efficiencies could be realized through such repetitive mechanical processes, traditional robotics increased the speed of production and yielded cost savings.

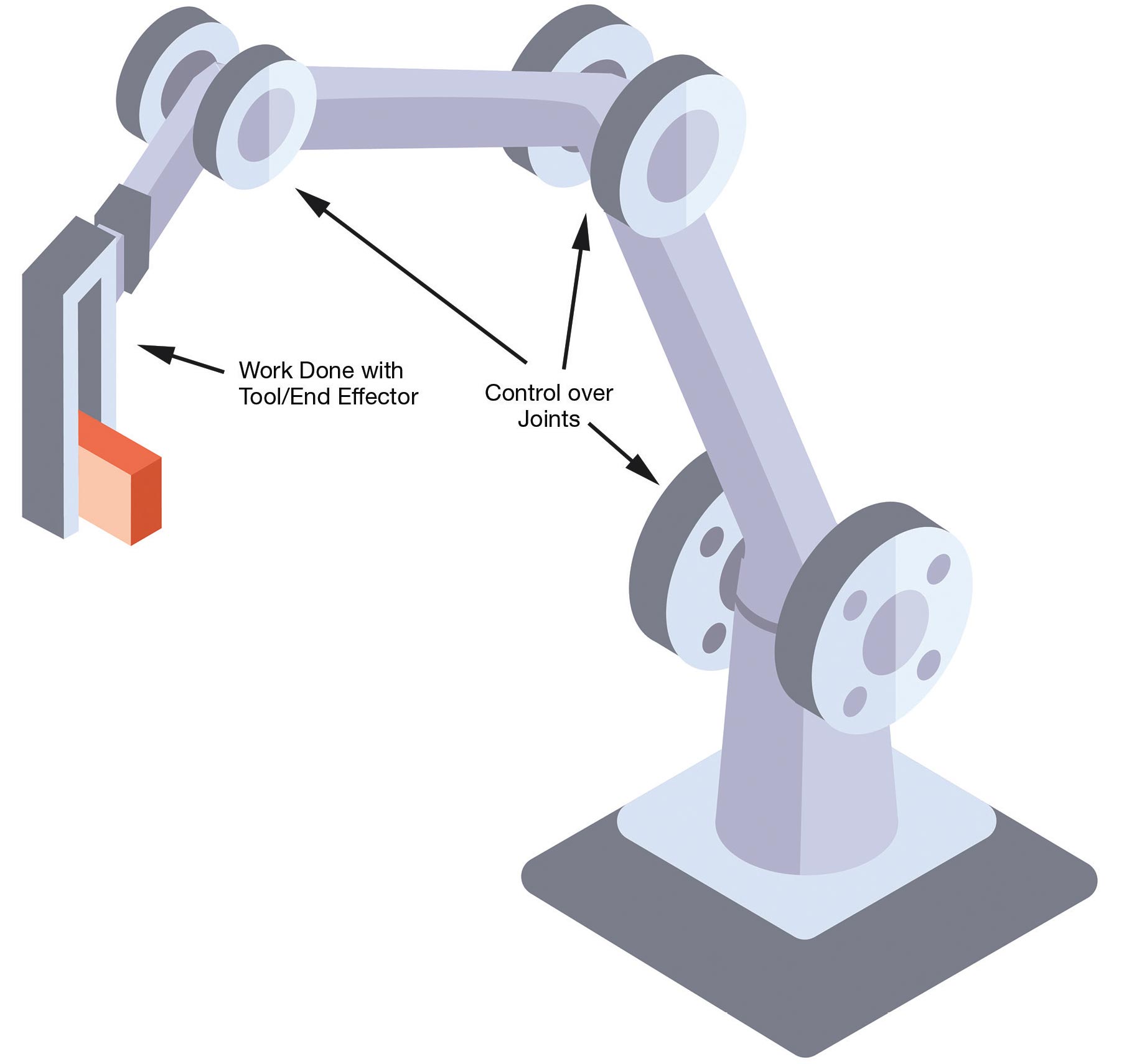

The motion control problem involves how the joints are moved to achieve desired motion of the tool (or end effector). Courtesy of Energid Technologies Corp.

For more advanced applications today, machine vision is required for inspection and localization of accessible, often planar, scenes. A typical machine vision application inspects a simple surface of a part to detect basic features or flaws. If flaws are discovered, the part is rejected. If basic features are examined, those features are used for localization or analysis. Machine vision has provided significant efficiency improvements in controlled, easy-to-configure, repetitive industrial tasks.

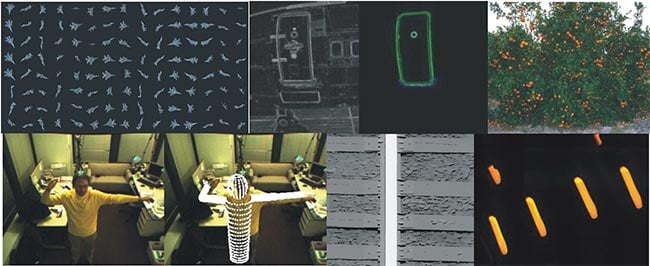

Most robotics problems — related to cleaning, manufacturing, and harvesting, for example — require integration of machine vision with manipulation so they work together.

But past robotics applications were limited because machine vision and motion planning were often applied in isolation to problems with limited scope. Practical solutions to most robotics problems (related to cleaning, manufacturing, and harvesting, for example) require integrating machine vision and manipulation. These functions work together to make robots useful, just as network communications and touchscreens, say, work together to make smartphones useful. The new ways of integrating machine vision and motion control will lead to rapid progress in robotics.

Motion control

There are two types of motion control. The first, local control, addresses how a robot should shift so a tool, or end effector, moves by small increments. This is important for interactive tasks such as insertion and grasping; it’s also critical for refining the end-effector position, such as when correcting for errors or taking on a payload. The second type is global control, which involves larger changes in motion for the end effector. Finding a path that is free from collisions, singularities, and joint limits during a big move can be hard, as it requires the exploration and comparison of many paths.

The quality of the motion and the ability to calculate results quickly have benefitted from conceptual changes. Local control tasks have been redefined from comprehensive end-effector position and orientation to lesser constraints, including point placement and angular pitch. This so-called natural tasking is easier for robot operators to define, and it gives extra degrees of freedom in robot movement that can be exploited to improve motion quality. When there are more degrees of freedom in motion than degrees of constraint in the task, the extra freedom translates into a continuum of ways to implement local control.

In an outdoor environment, hanging fruit moves readily, and robotic harvesting movements must be based on real-time feedback. In the case shown, cameras are built into the frame moving at the end of the hydraulic arm. Harvesting is an example of visual servoing that cannot rely on tags attached to manipulated objects, such as oranges on a tree. Courtesy of Energid Technologies Corp.

Control algorithms exploit this continuum. Common algorithmic techniques optimize positive qualities of motion with the extra degrees of freedom. Motion may be optimized to avoid collisions and joint limits, optimize strength, minimize errors, or any combination of these. The algorithmic techniques for accomplishing this, such as augmented Jacobian methods, run very fast on available hardware. A modern local motion controller can calculate commands thousands of times per second on affordable embedded hardware.
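As a rough illustration of how extra degrees of freedom can be exploited, the sketch below controls a planar three-joint arm whose task constrains only the tool's x-y position, leaving one redundant degree of freedom. It uses gradient projection into the Jacobian null space, a simpler relative of the augmented Jacobian methods mentioned above, and is not taken from any particular product. The link lengths, gains, damping value, and the joint-centering objective (a stand-in for joint-limit avoidance) are all assumptions chosen for illustration.

```python
import math

LINKS = (1.0, 1.0, 1.0)   # hypothetical link lengths in meters
DAMPING = 1e-8            # small regularizer for the 2x2 inverse (assumption)

def forward(q):
    """Tool position (x, y) of a planar arm with three revolute joints."""
    x = y = angle = 0.0
    for length, qi in zip(LINKS, q):
        angle += qi
        x += length * math.cos(angle)
        y += length * math.sin(angle)
    return (x, y)

def jacobian(q):
    """2x3 Jacobian of the tool position with respect to the joint angles."""
    cum = [sum(q[:k + 1]) for k in range(3)]
    J = [[0.0] * 3, [0.0] * 3]
    for j in range(3):
        for k in range(j, 3):
            J[0][j] -= LINKS[k] * math.sin(cum[k])
            J[1][j] += LINKS[k] * math.cos(cum[k])
    return J

def pseudoinverse(J):
    """3x2 right pseudoinverse J^T (J J^T)^-1 using a closed-form 2x2 inverse."""
    a = sum(v * v for v in J[0]) + DAMPING
    b = sum(u * v for u, v in zip(J[0], J[1]))
    d = sum(v * v for v in J[1]) + DAMPING
    det = a * d - b * b
    inv = [[d / det, -b / det], [-b / det, a / det]]
    return [[J[0][j] * inv[0][c] + J[1][j] * inv[1][c] for c in range(2)]
            for j in range(3)]

def null_space_step(q, z):
    """Project a joint-space preference z so that, to first order, the tool stays put."""
    J = jacobian(q)
    Jp = pseudoinverse(J)
    Jz = [sum(J[r][j] * z[j] for j in range(3)) for r in range(2)]
    return [z[j] - sum(Jp[j][r] * Jz[r] for r in range(2)) for j in range(3)]

def control_step(q, target, task_gain=0.2, centering_gain=0.05):
    """One local-control update: move the tool toward target, center joints for free."""
    x, y = forward(q)
    error = (target[0] - x, target[1] - y)
    Jp = pseudoinverse(jacobian(q))
    task = [task_gain * sum(Jp[j][r] * error[r] for r in range(2)) for j in range(3)]
    preference = [-centering_gain * qi for qi in q]   # pull joints toward zero
    extra = null_space_step(q, preference)
    return [qi + t + e for qi, t, e in zip(q, task, extra)]
```

Calling `control_step` repeatedly from a starting configuration such as `[0.2, 1.0, 1.0]` with a reachable target drives the tool to the target while the redundant freedom is spent on the secondary objective. Other objectives named in the text, such as collision avoidance or strength, would replace the `preference` vector.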

Global motion control, however, has vast flexibility even when the degrees of freedom in motion equal the degrees of constraint in the end goal. This is because the constraint for global motion control lies in the starting and ending configurations and not in the path between them. The path can be chosen with many options. Historically, reducing the time required to calculate the best path has been a challenge with global motion control. But recent advancements, including techniques such as rapidly exploring random trees (RRTs) and probabilistic roadmaps, have enabled high-quality global paths to be calculated quickly, in some cases in a fraction of a second. Specialized hardware using field-programmable gate arrays (FPGAs) can speed up global path planning even more.
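To make the idea of a rapidly exploring random tree concrete, here is a minimal sketch of a basic RRT in a two-dimensional workspace with circular obstacles. A real planner would work in the robot's joint space and check the whole arm for collisions; the workspace bounds, step size, goal bias, and obstacle layout here are assumptions for illustration only.

```python
import math
import random

def segment_hits_circle(a, b, center, radius):
    """True if the straight segment a-b comes within radius of center."""
    ax, ay = a
    bx, by = b
    cx, cy = center
    dx, dy = bx - ax, by - ay
    length_sq = dx * dx + dy * dy
    if length_sq == 0.0:
        t = 0.0
    else:
        t = max(0.0, min(1.0, ((cx - ax) * dx + (cy - ay) * dy) / length_sq))
    return math.hypot(ax + t * dx - cx, ay + t * dy - cy) <= radius

def rrt(start, goal, obstacles, bounds=(0.0, 10.0), step=0.5,
        goal_bias=0.1, max_iters=5000, seed=0):
    """Grow a tree from start; return a list of waypoints to goal, or None."""
    rng = random.Random(seed)
    nodes = [start]
    parent = {0: None}

    def blocked(a, b):
        return any(segment_hits_circle(a, b, c, r) for c, r in obstacles)

    for _ in range(max_iters):
        # Occasionally sample the goal itself to pull the tree toward it.
        if rng.random() < goal_bias:
            sample = goal
        else:
            sample = (rng.uniform(*bounds), rng.uniform(*bounds))
        i_near = min(range(len(nodes)), key=lambda i: math.dist(nodes[i], sample))
        near = nodes[i_near]
        d = math.dist(near, sample)
        if d == 0.0:
            continue
        scale = min(step, d) / d
        new = (near[0] + scale * (sample[0] - near[0]),
               near[1] + scale * (sample[1] - near[1]))
        if blocked(near, new):
            continue
        nodes.append(new)
        parent[len(nodes) - 1] = i_near
        # Finish when the goal is one collision-free step away.
        if math.dist(new, goal) <= step and not blocked(new, goal):
            path = []
            i = len(nodes) - 1
            while i is not None:
                path.append(nodes[i])
                i = parent[i]
            path.reverse()
            if path[-1] != goal:
                path.append(goal)
            return path
    return None
```

For example, `rrt((1.0, 1.0), (9.0, 9.0), [((5.0, 5.0), 2.0)])` searches for a route around a single disk in the middle of the workspace. The returned path is feasible but not optimal; practical planners usually smooth or shorten it afterward.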

Evolution to 3D vision

Breathtaking advancements in machine vision have been made over the last several years. Traditional 2D machine vision systems are planar. Just as 2D computer graphics gave way to 3D graphics to enable modern computer games, 2D machine vision is now evolving into 3D vision. No longer are simple shapes required for analysis. Instead, algorithms can be applied to complex and cluttered scenes containing lots of distracting objects. This innovation is driven in part by advanced sensors and even more by advanced algorithms and computing hardware.

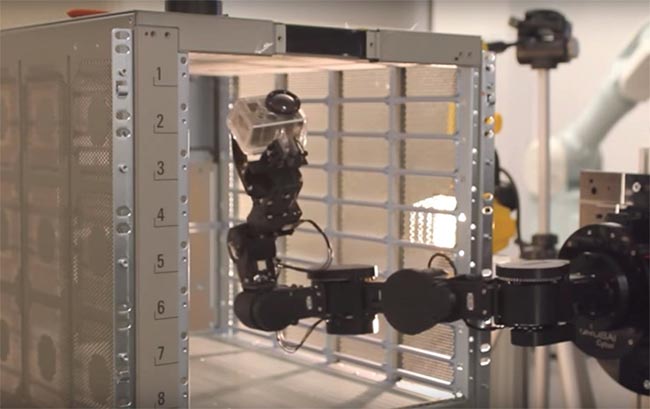

With complex part inspection, the robot must reach inside a server case to inspect the inner surface. This requires placement of the camera to avoid collision between the arm and the case. It also requires powerful algorithms to analyze the imagery and find flaws in the server case. Courtesy of Energid Technologies Corp.

Sensors for machine vision can be active or passive and can use visible light or a different sensing modality. Color cameras are popular because they are inexpensive and fast; other sensors — fundamentally 3D — are gaining in popularity because they offer unique capabilities for select applications. Sensors customized for robotic applications are continually advancing and being aggressively marketed.

New algorithms leverage these new sensors. Deep learning and other AI techniques assess sensor data based on training, rather than programming. Training allows nonprogrammers to use software for machine vision applications in an intuitive way, and allows for the creation and configuration of algorithmic techniques that could never be made by hand. Improvements also continue in nonlearning-type algorithms that perform assessments about edges, blobs, and other features.

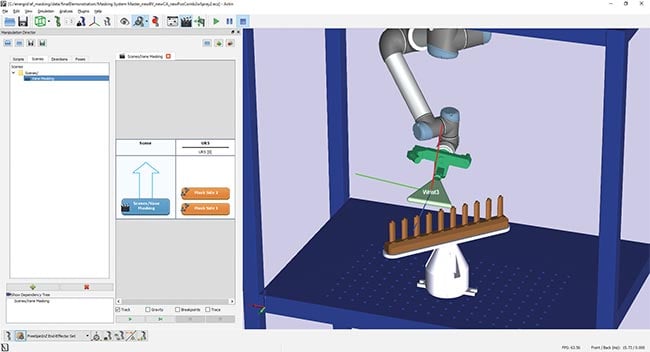

An example of software that integrates motion control with machine vision is Energid Technologies’ Actin SDK, which enables the simultaneous control of a six-degrees-of-freedom robot and a two-degrees-of-freedom platform integrated with a vision system using a graphical interface. Actin provides the graphical interface, accepts vision data as input, and issues motion commands to the robot and platform.

Bin picking

Bin picking is an important part of many manufacturing and machine-tending processes in which a variety of parts come randomly packed in bins. These parts have to be located and removed during an assembly or machining task. A part may be incorporated into a larger assembly or inserted into a computer numerical control (CNC) chuck for further processing. Historically, robotic bin picking has been difficult to perform because the parts are hard to identify when piled randomly, and once identified are hard to manipulate.

Examples of machine vision applications. (From upper left) Real-time 3D-model-based aircraft tracking; aircraft door detection for autonomous docking of boarding bridges to aircraft; orange detection for robotic citrus harvesting; gesture recognition for UAV landing guidance; automated railroad inspection; on-the-fly 3D reconstruction of glass gobs for glass-manufacture quality control. Courtesy of Energid Technologies Corp.

Efficient and effective robotic bin picking is now possible. New systems integrate motion control with machine vision and, only through this combination, are able to pick almost all parts from a bin. Motion control is critical because without it, failed pick attempts caused by collisions with other parts or bin walls leave too many parts in the bin, disrupting the efficiency of the system. Natural tasking provides freedom in the orientation of the suction cup about its primary axis, and this empowers the algorithms that ensure collision avoidance.
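The freedom about the suction cup's axis can be pictured with a small sketch. It assumes a hypothetical tool with a single protruding feature (a hose fitting, say) at a fixed distance from the cup axis, a rectangular bin seen from above, and neighboring parts approximated as circles. The function samples rotation angles about the cup axis and keeps the one that gives the protrusion the most clearance. The geometry, sample count, and safety margin are illustrative assumptions, not a description of any specific bin-picking system.

```python
import math

def protrusion_clearance(pick, yaw, bin_size, neighbors, arm=0.08):
    """Smallest gap (m) between the tool's protrusion and any wall or neighbor."""
    px = pick[0] + arm * math.cos(yaw)
    py = pick[1] + arm * math.sin(yaw)
    wall = min(px, bin_size[0] - px, py, bin_size[1] - py)
    parts = min((math.hypot(px - cx, py - cy) - r for (cx, cy), r in neighbors),
                default=math.inf)
    return min(wall, parts)

def best_yaw(pick, bin_size, neighbors, samples=36, margin=0.01):
    """Return (yaw, clearance) for the safest rotation, or None if none is safe."""
    candidates = [2.0 * math.pi * i / samples for i in range(samples)]
    yaw = max(candidates,
              key=lambda a: protrusion_clearance(pick, a, bin_size, neighbors))
    clearance = protrusion_clearance(pick, yaw, bin_size, neighbors)
    return (yaw, clearance) if clearance >= margin else None
```

A pick close to a wall, such as `best_yaw((0.05, 0.2), (0.4, 0.4), [])`, turns the protrusion toward the bin interior. If the task had fixed the full orientation of the tool instead, the same pick might have been rejected as a collision.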

Real-time feedback

Visual servoing refers to using machine vision feedback in real time to control a robot, where the machine vision cameras observe the robot itself or an object to be manipulated. The measuring camera can be either attached to the robot — placement at the tool, or hand, is common — or disconnected from the robot. The key element is that machine vision data is used to close the loop on error in end-effector placement. This approach is more accurate and comprehensive than so-called open-loop control, where joints are placed only through idealized mathematical calculations.
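The difference between open-loop placement and closing the loop with vision can be shown with a toy one-axis model. The arm below is hypothetical: it lands at a slightly scaled and offset position relative to what is commanded, standing in for calibration error. The camera measurement is simulated by reading the true position, and the correction is a plain proportional update. The error model, gain, and step count are assumptions for illustration.

```python
def actual_position(commanded, scale=1.02, offset=0.004):
    """Hypothetical imperfect arm: where the tool really ends up (meters)."""
    return scale * commanded + offset

def open_loop(target):
    """Command the target directly and trust the idealized model."""
    return actual_position(target)

def visual_servo(target, gain=0.5, steps=30):
    """Repeatedly measure the tool with the 'camera' and correct the command."""
    commanded = target
    for _ in range(steps):
        observed = actual_position(commanded)   # stand-in for a vision measurement
        error = target - observed
        commanded += gain * error               # proportional correction
    return actual_position(commanded)
```

For a target of 0.5 m, the open-loop result in this model is off by 14 mm, while the servoed result converges on the target because each pass shrinks the remaining error by roughly half.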

Visual servoing may rely on recognizing an object in its natural state, or it may use special attachments, or markers, that aid visual recognition. Markers may be lights, reflectors, or special tags that resemble QR codes.

Generally, markers make visual servoing faster, easier, and more accurate. They are commonly applied for identifying the location of parts of a robot or its environment. However, the use of markers is not always possible on objects to be manipulated, such as when working with a natural environment, when parts come from an uncontrolled source, or when parts cannot support the cost, weight, or visible presence of a tag.

Piles of identical parts sit inside a bin. They are selected by a machine vision system and picked with a robotic arm using pneumatic suction. The black object above the bin is the scanning sensor used to find and discriminate the parts in the box. After a part is identified, a path must be found for picking it that avoids collisions with the other parts and with the bin walls. Courtesy of Energid Technologies Corp.

Robotic arms under open-loop control (with feedback coming only from the motor controllers) are generally not highly accurate. They can be repeatable to within small fractions of a millimeter; that is, after joint motion that returns to the same joint values, they return the end effector to almost the same place. But they are not accurate in the sense of placing the end effector in a predictable place in the world. Visual servoing gives these robots accuracy. It can also compensate for lower-precision parts in a robot, allowing similar performance with a lower-cost arm.

Inspecting complex parts

A unique combination of vision and motion control is required for the robotic inspection of complex parts — those that require navigation in and around the part, where reasoning about collision is a dominant factor. This is needed, for example, during the manufacture of large items, such as cars, servers, washing machines, and industrial equipment. It is easy to take for granted how difficult it is to ensure that these items are assembled correctly in structure and appearance. In addition to reducing cost, robotic inspection can be more reliable than human inspection; humans are prone to distraction and error, and skill set varies from person to person.

Challenges lie in both the machine-vision and motion-control aspects of complex-object inspection. Some cosmetic flaws can be hard to detect with color cameras. Scratches and other flaws are often visible only with the right lighting and perspective. Detecting them requires both the right vision algorithms and the right motion control.

For motion control, CAD models of the complex shape of the manufactured part, when available, can be used in collision-avoidance algorithms. Alternatively, shape information captured from machine vision can be used. Motion control is required to find camera positioning that is both consistent with robot kinematics and free from collision with the complex part.

Worldwide, the job of complex-part inspection consumes the time of tens of thousands of people and is done imperfectly. It is a notorious problem. Better algorithms for image analysis and better motion control for the robot arms holding the cameras and lights are changing the nature of this work by allowing robotics to replace some or all human inspectors.

A screenshot of Actin software from Energid Technologies Corp. depicts graphical control of a robot, a moving base, and machine vision. Courtesy of Energid Technologies Corp.

Advanced automation that marries machine vision with robotics is transforming manufacturing and other areas of human endeavor by making robotic systems highly capable. Sophisticated control algorithms are ushering in a new wave of applications (where vision and motion planning complement each other and depend on each other for success), including bin picking, vision-based servoing, and complex-object inspection. The continued coordination of machine vision with robot motion planning will enable the advancement of next-generation robotic systems.

Meet the authors

Neil Tardella is the CEO of Energid Technologies Corp., which develops advanced real-time motion control software for robotics. In addition to providing leadership and strategic direction, he has spearheaded commercial initiatives in the energy, medical, and collaborative robotics sectors. Tardella has a B.S. in electrical engineering from the University of Hartford and an M.S. in computer science from the Polytechnic Institute of New York University.

James English is the president and chief technical officer of Energid. Specializing in automatic remote robotics, he leads project teams in the development of complex robotic, machine vision, and simulation systems. English holds a Ph.D. in electrical engineering from Purdue University.