Imaging in very low light requires extra sensitivity and image fusion.

Danny De Gaspari, Jan Veldeman, Patrick Lamerichs, Siegfried Herftijd, Patrick Merken and Jan Vermeiren, Xenics NV

The night sky is never absolutely dark, even after removing the influence of starlight and diffused sunlight from the other side of the globe.

Spectral irradiance from airglow is several times stronger in the 900- to 1700-nm band than in the visible range, which makes this near-IR band the best choice for night-vision applications. For handheld systems, the size and power consumption of the camera engine are almost as important as its performance.

The most natural-looking image is still an intensified image in the visible and near-IR range, although it is very grainy because so few photons arrive. Short-wavelength infrared (SWIR) imaging delivers very similar images from a larger number of incident photons. Both types of images allow not only the recognition but also the identification of objects or persons. We use the terms "detection," "recognition" and "identification" in the "military sense," following Johnson's criteria (Reference #1).
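Johnson's criteria express each task as a number of resolvable cycles (line pairs) across the target's critical dimension; the commonly quoted values for a 50 percent probability of success are about 1.0 cycle for detection, 4.0 for recognition and 6.4 for identification. The sketch below estimates the purely geometric maximum range for each task, assuming one cycle spans two pixels. The lens focal length, pixel pitch and target size are hypothetical illustration values, not Xenics specifications.

```python
# Johnson-criteria range estimate (geometry only; ignores noise, contrast, atmosphere).

# Cycles across the critical dimension for ~50% task probability (Johnson, 1958).
JOHNSON_CYCLES = {"detection": 1.0, "recognition": 4.0, "identification": 6.4}


def max_range_m(critical_dim_m: float, focal_length_m: float,
                pixel_pitch_m: float, cycles: float) -> float:
    """Range at which the target spans `cycles` line pairs (2 pixels per cycle)."""
    # Target size in pixels at range R is critical_dim * f / (R * pitch).
    # Setting that equal to 2 * cycles and solving for R gives:
    return critical_dim_m * focal_length_m / (2.0 * pixel_pitch_m * cycles)


if __name__ == "__main__":
    # Hypothetical values for illustration only.
    person_m = 0.75   # assumed critical dimension of a standing person
    focal_m = 0.050   # assumed 50 mm lens
    pitch_m = 20e-6   # assumed 20 um pixel pitch
    for task, n in JOHNSON_CYCLES.items():
        print(f"{task:>14}: {max_range_m(person_m, focal_m, pitch_m, n):7.1f} m")
```

In very low light these ranges are upper bounds: the usable range also depends on whether the image still has enough signal-to-noise ratio to resolve the required cycles, which is why sensor sensitivity matters.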

Airglow phenomenon

Airglow, sometimes called nightglow, is a very weak emission of light from the upper atmosphere, and it is the reason the night sky never goes completely dark. Three effects contribute most to airglow: recombination of particles photoionized by the sun, luminescence from cosmic rays striking the upper atmosphere, and chemiluminescence from reactions involving oxygen, nitrogen and hydroxyl (OH) radicals.

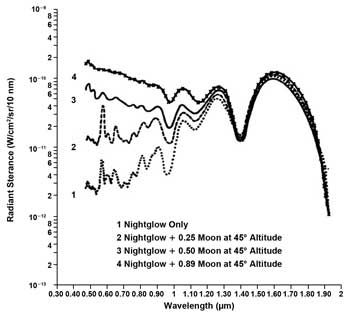

The airglow spectrum, depicted in Figure 1, reaches its maximum intensity in the SWIR range between 1 and 1.8 µm. Even when the moon is shining, the SWIR range is hardly affected, because moonlight contributes mainly to the visible range. At full moon, the radiation densities of moonlight and airglow are comparable (Figure 1), which indicates that airglow is strong enough for night-vision applications with suitable equipment.
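Figure 1 compares radiated energy, but a detector counts photons. Because each photon carries an energy of hc/λ, the same irradiance delivers proportionally more photons at longer wavelengths. A minimal sketch, using a single-wavelength approximation and a hypothetical irradiance value:

```python
# Convert irradiance (W/m^2) into photon flux (photons/s/m^2) at one wavelength.

H = 6.62607015e-34   # Planck constant, J*s (exact SI value)
C = 299_792_458.0    # speed of light, m/s (exact SI value)


def photon_energy_j(wavelength_m: float) -> float:
    """Energy of one photon, E = h*c/lambda."""
    return H * C / wavelength_m


def photon_flux(irradiance_w_m2: float, wavelength_m: float) -> float:
    """Photons per second per square meter carried by a given irradiance."""
    return irradiance_w_m2 / photon_energy_j(wavelength_m)


if __name__ == "__main__":
    e = 1e-8  # hypothetical irradiance in W/m^2, identical in both bands
    visible = photon_flux(e, 0.55e-6)
    swir = photon_flux(e, 1.55e-6)
    print(f"photons at 1.55 um / photons at 0.55 um = {swir / visible:.2f}")
```

For equal irradiance, the photon ratio is simply the wavelength ratio, so a 1.55-µm band yields roughly 2.8 times as many photons as a 0.55-µm band.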

Figure 1. Airglow lighting the sky in the near-IR spectrum compared with moonlight in the visible range. Source: Reference #2. Images courtesy of Xenics NV.

Low-light-level cameras

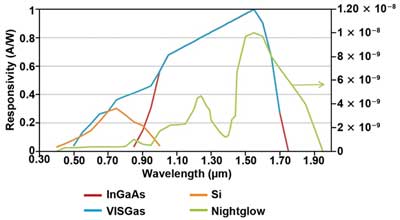

The sensor material for SWIR low-light-level cameras must offer high quantum efficiency (QE) in the near-IR range. InGaAs is an optimal material for this purpose because its QE exceeds 80 percent in the 0.9- to 1.65-µm range (Figure 2). For special purposes, such as combined visible and near-IR focal plane arrays (FPAs), InGaAs can also be made sensitive to visible light, with a QE of more than 50 percent in the 0.5- to 0.9-µm range.

When every photon counts, no sensitive area should be wasted. Unlike most CMOS and CCD imagers, InGaAs sensors have a fill factor of nearly 100 percent, compared with a typical 50 percent for interline CCDs and 20 to 30 percent for CMOS sensors.
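The effect of fill factor is a straight multiplication: only photons landing on the photosensitive fraction of a pixel can be detected, and only a fraction (the QE) of those generate an electron. In the sketch below, the QE is held equal across technologies purely to isolate the fill-factor effect; in reality, silicon CCD and CMOS sensors respond very little beyond about 1.1 µm, and microlenses can raise their effective fill factor. The photon count is hypothetical.

```python
# Signal electrons collected per pixel: photons * fill factor * QE.


def collected_electrons(photons_on_pixel: float, qe: float, fill_factor: float) -> float:
    """Electrons generated from photons falling on the pixel's full area."""
    # Only the photosensitive fraction (fill factor) of the pixel detects light,
    # and only a fraction (QE) of those photons produces an electron.
    return photons_on_pixel * fill_factor * qe


if __name__ == "__main__":
    photons = 1000.0  # hypothetical photons arriving on one pixel per frame
    qe = 0.8          # hypothetical QE, held equal to isolate the fill-factor effect
    for name, ff in [("InGaAs (~100%)", 1.0), ("interline CCD (~50%)", 0.5),
                     ("CMOS (~25%)", 0.25)]:
        print(f"{name:>22}: {collected_electrons(photons, qe, ff):6.0f} e-")
```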

Figure 2. The near-IR sensitivity of InGaAs focal plane arrays covers most of the nightglow spectrum. Source: Reference #3.

Low-light-level imagers call for the highest possible sensitivity. Under night conditions, large signal wells never fill and only add noise, so a small well with a high conversion gain is preferable. This way, the sensitivity of InGaAs sensors can easily reach 32 µV/e⁻.
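The sensitivity figure follows from V = Q/C at the pixel's sense node: 32 µV per electron implies a node capacitance of about 5 fF. The sketch below also shows why a small well helps at night: a fixed readout voltage noise, referred back to the input, corresponds to fewer electrons when the conversion gain is high, at the cost of a smaller full well. The voltage swing and noise values are hypothetical.

```python
# Relating conversion gain to sense-node capacitance: V = Q / C.

Q_E = 1.602176634e-19  # elementary charge, C (exact SI value)


def node_capacitance_f(gain_v_per_e: float) -> float:
    """Capacitance that yields the given conversion gain (volts per electron)."""
    return Q_E / gain_v_per_e


def full_well_e(voltage_swing_v: float, gain_v_per_e: float) -> float:
    """Electrons that fit before the output voltage swing is exhausted."""
    return voltage_swing_v / gain_v_per_e


def input_referred_noise_e(noise_v_rms: float, gain_v_per_e: float) -> float:
    """Readout voltage noise expressed as an equivalent number of electrons."""
    return noise_v_rms / gain_v_per_e


if __name__ == "__main__":
    g = 32e-6  # 32 uV/e-, the sensitivity quoted in the text
    print(f"node capacitance: {node_capacitance_f(g) * 1e15:.2f} fF")
    # Hypothetical 1.5 V swing and 1 mV rms readout noise for illustration.
    print(f"full well at 1.5 V swing: {full_well_e(1.5, g):.0f} e-")
    print(f"1 mV rms noise referred to input: {input_referred_noise_e(1e-3, g):.1f} e-")
```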

Noise generated by the sensor array and its readout circuitry must be kept as low as possible. At the high sensitivity mentioned above, imagers commonly have a noise level of about 100 to 120 e⁻, whereas image sensors using correlated double sampling (CDS) reduce their noise to the 30- to 60-e⁻ range. Noise levels as low as 5 to 10 e⁻ are expected for future commercial devices. Unlike other SWIR sensor technologies, InGaAs sensors do not need cooling to reach these low noise levels.
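Correlated double sampling reads each pixel twice, once right after reset and once after integration, and outputs the difference. The random reset (kTC) offset shared by both samples cancels, while the uncorrelated read noise of the two samples adds in quadrature. The Monte Carlo sketch below uses hypothetical noise values (not Xenics data) and ignores photon shot noise and dark current.

```python
import numpy as np


def simulate_readout(signal_e, reset_noise_e, read_noise_e, n_frames, seed=0):
    """Return (single-sample, CDS) outputs for one pixel over n_frames."""
    rng = np.random.default_rng(seed)
    # kTC reset noise: a random offset frozen onto the sense node at each reset.
    reset_level = rng.normal(0.0, reset_noise_e, n_frames)
    # Each sample also picks up independent white read noise.
    reset_sample = reset_level + rng.normal(0.0, read_noise_e, n_frames)
    signal_sample = reset_level + signal_e + rng.normal(0.0, read_noise_e, n_frames)
    single = signal_sample               # referenced to the nominal (zero) reset level
    cds = signal_sample - reset_sample   # the reset offset cancels in the difference
    return single, cds


if __name__ == "__main__":
    single, cds = simulate_readout(signal_e=50.0, reset_noise_e=80.0,
                                   read_noise_e=15.0, n_frames=200_000)
    print(f"noise without CDS: {single.std():.1f} e- rms")  # about sqrt(80^2 + 15^2)
    print(f"noise with CDS:    {cds.std():.1f} e- rms")     # about sqrt(2) * 15
```

CDS pays off whenever the reset noise is much larger than the per-sample read noise, since doubling the read-noise variance costs far less than removing the reset term saves.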

Integration time must be as long as possible. It is limited by the well capacity and, depending on the application, should not exceed 40 ms, which permits smooth visualization of movement at a 25-Hz frame rate. Long integration requires the lowest possible dark current and, hence, the lowest possible detector bias. A low bias, however, places stricter requirements on the input-referred nonuniformity of the readout circuit, because offset variations between readout inputs then become a larger fraction of the bias. Following these guidelines results in highly sensitive SWIR camera systems for low-light-level operation (Figure 3).
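The 40-ms limit is simply the frame period at 25 Hz, and the dark current determines how much of that time is usable: dark charge grows linearly with integration time and brings its own shot noise, equal to the square root of the dark electron count. A sketch with a hypothetical dark current and well size:

```python
# Integration-time budget: frame period and dark-current charge.
import math

Q_E = 1.602176634e-19  # elementary charge, C (exact SI value)


def max_integration_s(frame_rate_hz: float) -> float:
    """Integration cannot exceed one frame period."""
    return 1.0 / frame_rate_hz


def dark_electrons(dark_current_a: float, t_int_s: float) -> float:
    """Electrons accumulated from dark current during integration."""
    return dark_current_a * t_int_s / Q_E


def fits_in_well(signal_e: float, dark_e: float, full_well: float) -> bool:
    """True if signal plus dark charge stays within the well capacity."""
    return signal_e + dark_e <= full_well


if __name__ == "__main__":
    t = max_integration_s(25.0)          # 40 ms at 25 Hz
    n_dark = dark_electrons(10e-15, t)   # hypothetical 10 fA dark current per pixel
    print(f"t_int = {t * 1e3:.0f} ms, dark charge = {n_dark:.0f} e-, "
          f"dark shot noise = {math.sqrt(n_dark):.1f} e- rms")
    print("fits in hypothetical 50 ke- well:", fits_in_well(200.0, n_dark, 50_000))
```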

Figure 3. Night-vision images taken on a moonless night. Left: A person standing in the shadow of trees. Right: A country road at low contrast, with a farmhouse in the background.

Handheld or portable operation imposes additional requirements: small size, low weight and low power (SWaP), as well as high operating temperature (HOT). SWaP is essential for fatigue-free use and long battery life. HOT means that the InGaAs sensor can be operated near room temperature.

Camera engines

Xenics is developing a camera engine family that is well suited to meeting SWaP and HOT requirements. The engines are compact, lightweight and power efficient. An additional advantage is that InGaAs sensors and FPAs do not need cooling.

The XSW (Xenics Short Wave) camera engine family is built around a VGA-format FPA with 640 x 512 pixels. It will be complemented soon by an XGA-format 1280 x 1024 FPA, and a dedicated low-light-level version with 1/4-VGA resolution of 320 x 256 pixels.

The camera engine is available in two versions: a self-contained engine and a basic camera core. The basic core is very compact, measuring just 40 x 40 x 20 mm. It consists of an analog sensor board with a digital driver and a signal processing board. It consumes less than 2.5 W and weighs just 150 g. These properties give it the greatest flexibility for integration into customer setups such as helmet mounts, unmanned aerial vehicles (UAVs) and compact analysis equipment.

The self-contained camera core consists of the basic core plus a power conditioning unit and a communications module that supports two data transmission protocols: GigE Vision and Camera Link. Its robust construction uses a printed circuit board frame and measures 40 x 40 x 40 mm without the lens. Good thermal management ensures high reliability. Power can be supplied via the Ethernet connection, which is limited to 4.5 W. A C-mount adapter accommodates all conventional visible-NIR lenses and a wide variety of specialized SWIR lenses.

Figure 4. XSW camera module, Bobcat camera and Meerkat image-fusion system. Fusion covers the spectrum from visible through SWIR to LWIR.

Both versions are equipped with a 14-bit analog-to-digital converter. The dynamic range is close to 60 dB in high-gain mode and 66 dB in low-gain mode. Figure 4 shows the self-contained camera core, the Bobcat camera equipped with this core, and the Meerkat Fusion, which fuses images covering the visible, SWIR and long-wavelength infrared (LWIR) ranges.
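Dynamic range in decibels is 20·log10 of the ratio between the largest signal and the noise floor. Converting the quoted figures shows that 60 dB corresponds to a 1,000:1 ratio and 66 dB to roughly 2,000:1. Treating the converter as ideal (an assumption), a 14-bit ADC could represent about 84 dB, which suggests the sensor rather than the converter sets the limit.

```python
import math


def ratio_to_db(ratio: float) -> float:
    """Dynamic range in dB for an amplitude ratio (max signal / noise floor)."""
    return 20.0 * math.log10(ratio)


def db_to_ratio(db: float) -> float:
    """Amplitude ratio corresponding to a dynamic range in dB."""
    return 10.0 ** (db / 20.0)


if __name__ == "__main__":
    for mode, db in [("high gain", 60.0), ("low gain", 66.0)]:
        print(f"{mode}: {db:.0f} dB = {db_to_ratio(db):,.0f}:1")
    # An ideal 14-bit converter spans 2**14 codes.
    print(f"ideal 14-bit ADC: {ratio_to_db(2 ** 14):.1f} dB")
```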

Camera fusion

Even the best SWIR camera cannot deliver optimal results under all operating conditions, and under very low light levels most cameras fall short. Image fusion offers a solution: it merges two or three images from thermal, visible and SWIR cameras. The images are overlaid so that the parts with the highest contrast take precedence.
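The "highest contrast takes precedence" rule can be sketched as a per-pixel selection: measure local contrast in each image (here, the standard deviation in a small window) and take each output pixel from whichever source is most contrasty at that location. This is a simplified illustration that assumes co-registered, equally sized and normalized images; it is not the Meerkat algorithm, and practical systems often blend across several scales (for example, with Laplacian pyramids) to avoid visible seams.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view


def local_contrast(img: np.ndarray, win: int = 7) -> np.ndarray:
    """Standard deviation of each pixel's win x win neighborhood (win must be odd)."""
    pad = win // 2
    padded = np.pad(img, pad, mode="reflect")
    windows = sliding_window_view(padded, (win, win))  # shape (H, W, win, win)
    return windows.std(axis=(-2, -1))


def fuse_max_contrast(images, win: int = 7):
    """Take each output pixel from the source image with the highest local contrast."""
    stack = np.stack([np.asarray(im, dtype=np.float64) for im in images])  # (N, H, W)
    contrast = np.stack([local_contrast(im, win) for im in stack])
    winner = np.argmax(contrast, axis=0)  # index of the winning source, shape (H, W)
    fused = np.take_along_axis(stack, winner[np.newaxis], axis=0)[0]
    return fused, winner


if __name__ == "__main__":
    rng = np.random.default_rng(1)
    swir = np.zeros((64, 64))
    swir[:, :32] = rng.random((64, 32))  # hypothetical detail on the left
    lwir = np.zeros((64, 64))
    lwir[:, 32:] = rng.random((64, 32))  # hypothetical detail on the right
    fused, winner = fuse_max_contrast([swir, lwir])
    print("share of pixels taken from LWIR:", winner.mean())
```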

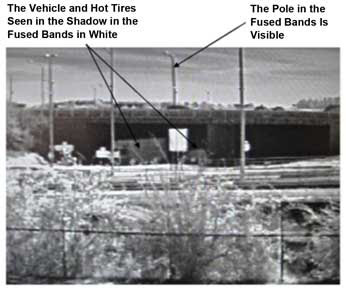

This technique considerably improves scene quality in terms of dynamic range (digitization depth), contrast, and detection and recognition. The method also visualizes other aspects of a scene, such as heat accumulation and flashover risk in fires, as well as skin diagnostics and vegetation detection. Figure 5 shows a fusion of LWIR and SWIR images: The truck in the shadow and its warm tires stand out clearly from the background, while the pole in the upper center shows more contrast against the background than in the SWIR image alone.

Figure 5. Fusing SWIR and LWIR images reveals structures hidden in shadow and in front of bright backgrounds.

The camera engine is well suited to image fusion because it can easily be operated together with the XTH companion thermal module for LWIR. Because both modules are small, they can be mounted close together, which minimizes parallax. In addition, the small size and the option of operating the sensor head detached allow parallax-free operation with a single lens and a beamsplitter, or integration with dual-in-line catadioptric optics.
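For two side-by-side cameras with parallel optical axes, a point at distance Z appears shifted between the images by f·B/Z on the focal plane, where B is the baseline between the lenses. A fixed image shift can only align the two views at one distance, so the residual misalignment scales with the baseline; this is why mounting small modules close together, or sharing one lens via a beamsplitter, helps. The sketch assumes equal focal length and pixel pitch for both cameras, with hypothetical values.

```python
# Parallax between two side-by-side cameras with parallel optical axes.


def parallax_pixels(baseline_m: float, focal_length_m: float,
                    distance_m: float, pixel_pitch_m: float) -> float:
    """Image offset (pixels) of a point at `distance_m` between the two views."""
    # Disparity on the focal plane is f * B / Z; divide by the pitch to get pixels.
    return focal_length_m * baseline_m / (distance_m * pixel_pitch_m)


if __name__ == "__main__":
    f, pitch = 0.025, 20e-6  # hypothetical 25 mm lens and 20 um pixel pitch
    for baseline in (0.10, 0.05):
        for z in (10.0, 100.0):
            px = parallax_pixels(baseline, f, z, pitch)
            print(f"B = {baseline * 100:.0f} cm, Z = {z:.0f} m: {px:.2f} px")
```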

SWIR camera modules enable new ways of building advanced night-vision equipment for search and rescue operations, as well as for autonomous flight of unmanned aerial vehicles in environments illuminated only by airglow. Together with cameras for other spectral ranges, they reveal scene details that cannot be detected with a single type of camera.

References

1. J. Johnson (1958). Analysis of image forming systems. Image Intensifier Symposium, AD 220160 (Warfare Electrical Engineering Department, US Army Research and Development Laboratories, Fort Belvoir, Va.), pp. 244-273.

2. M.L. Vatsia (September 1972). Atmospheric optical environment. Research and Development Technical Report ECOM-7023. http://www.dtic.mil/dtic/tr/fulltext/u2/750610.pdf.

3. M.P. Hansen and D.S. Malchow (March 2008). Overview of SWIR detectors, cameras, and applications. Proc. SPIE, Vol. 6939. http://lib.semi.ac.cn:8080/tsh/dzzy/wsqk/SPIE/vol6939/69390I.pdf.

Meet the authors

Danny De Gaspari, Jan Veldeman, Patrick Lamerichs, Siegfried Herftijd and Jan Vermeiren are employees of Xenics NV in Leuven, Belgium. Patrick Merken is an employee of Xenics NV and a professor at the Royal Military Academy in Brussels. The primary author is Jan Vermeiren.

Every photon counts

Because light levels are very low, the requirements for a SWIR camera for night vision or low-light-level imaging follow directly from the general principle "every photon counts":

• High quantum efficiency

• High fill factor

• High sensitivity

• Low noise

• Long integration times
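The five requirements can be tied together in the standard shot-noise model of a pixel: the signal is S = Φ·FF·QE·t electrons (photon flux per pixel, fill factor, quantum efficiency, integration time), and the noise is the square root of the summed variances of signal shot noise, dark shot noise and read noise. High sensitivity enters through a lower read noise in electrons. In the sketch below, the photon flux and dark rate are hypothetical; the read-noise levels are those quoted in the article.

```python
import math


def snr(photon_flux: float, qe: float, fill_factor: float, t_int_s: float,
        dark_e_per_s: float, read_noise_e: float) -> float:
    """Per-pixel, per-frame SNR with signal shot, dark shot and read noise."""
    signal = photon_flux * fill_factor * qe * t_int_s     # signal electrons
    dark = dark_e_per_s * t_int_s                         # dark electrons
    noise = math.sqrt(signal + dark + read_noise_e ** 2)  # variances add
    return signal / noise if noise > 0 else 0.0


if __name__ == "__main__":
    # Hypothetical scene and sensor values, not measured Xenics data.
    base = dict(photon_flux=1000.0, qe=0.8, fill_factor=1.0, t_int_s=0.04,
                dark_e_per_s=2500.0)
    for rn in (120.0, 30.0, 5.0):  # read-noise levels mentioned in the text
        print(f"read noise {rn:5.0f} e-: SNR = {snr(read_noise_e=rn, **base):.2f}")
```

With these illustrative numbers, reducing read noise from 120 to 5 e⁻ raises the SNR by roughly a factor of ten, which is why noise reduction matters as much as collecting more photons.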