With a range of newly available and already established imaging modalities, vision guidance is helping to drive a steady ascension of robot capabilities in the logistics sector.

JAKE SALTZMAN, NEWS EDITOR

Allied Market Research projected in the spring that the value of the global logistics automation market would roughly triple from its 2020 value to $147.4 billion by 2030. Even before such high growth was projected, this sector had made considerable advancements in vision-guided robotics (VGR) to support warehouse and distribution center functions such as sorting, palletization, and pick and place.

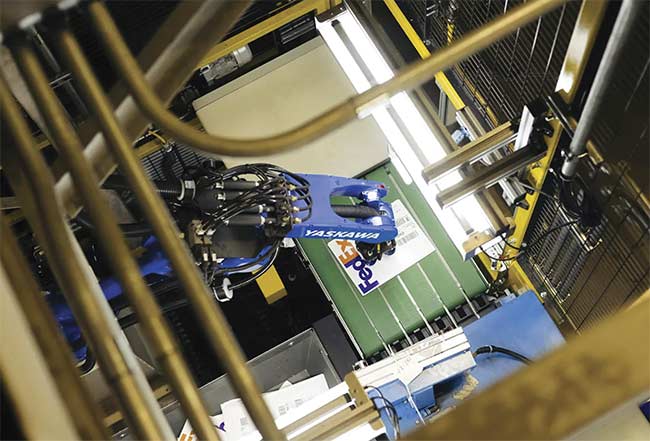

A sealed package, often with an attached barcode label, is a common item that robotic arms are required to process in logistics environments. Courtesy of Yaskawa Motoman.

In addition to the ascension of robot capabilities, the market research firm cited the rise of e-commerce, IoT, and Industry 4.0, as well as the continued demand for improved efficiency and a growing workforce, as forces moving the needle of progress for VGR solutions.

To accommodate robot movement in a three-dimensional space, many of these solutions have manifested as 3D imaging techniques that leverage active or passive illumination. These techniques include time-of-flight (TOF) and stereoscopic cameras, as well as certain structured light-based approaches.

Each 3D imaging technique offers comparative advantages and disadvantages, but the prevalence of vision-guided robots deployed in warehouses has opened the door for all modalities to see broader adoption in the years ahead. In picking and sorting alone, for example, 3D guidance enables a robotic arm to orient its end-of-arm effector to accommodate an object’s position and orientation.

Two-dimensional imaging is often enough to help guide a robot arm in a manufacturing environment in which items such as automotive drivetrains are stacked in an orderly or structured fashion, according to Frantisek Jakubec, a sales specialist and product manager at MATRIX VISION, a brand of Balluff Inc. “Two-dimensional imaging can be used effectively if you have all of the same items all of the time,” he said.

In a logistics environment, however, the items to be picked often vary in size, relative location, and orientation. This requires the use of a vision system to provide substantially more information and enable a robot arm to navigate accordingly.

Several 3D vision-guidance technologies have advanced to a point where they are helping to drive robotic picking and palletization applications, according to David Dechow, vice president of outreach and vision technology at Landing AI.

“What you have right now are multiple techniques that exist within the vision marketplace that can perform multiple applications,” he said. “The bottom line is that the 3D vision components in the marketplace are all very competent right now.”

Meeting speed expectations

Operational speed is a vital gauge of the success or failure of a VGR deployment. Speed is therefore a critical metric for the imaging technology applied to the logistics task, although different applications require different operational speeds.

“In logistics, your key measurables are things like picks per minute,” Jakubec said.

The selection of an imaging technology for VGR systems comes down to the space that the robot is working in and the speed required for the application, said David Bruce, an engineering and vision product manager at FANUC America.

TOF imaging satisfies many of the speed requirements for robot systems targeting logistics applications. TOF cameras provide point cloud data that is gathered by measuring the time it takes for a light signal to reflect off objects in the captured scene and return to the sensor.
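The round-trip principle described above reduces to a single formula: distance equals the speed of light multiplied by the travel time, divided by two. The Python sketch below illustrates that arithmetic only. The function names are hypothetical, and commercial TOF cameras typically infer the travel time indirectly (for example, from the phase shift of modulated light) rather than timing a pulse directly.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def tof_distance_m(round_trip_time_s: float) -> float:
    """Distance to a surface from the round-trip travel time of a light signal."""
    if round_trip_time_s < 0:
        raise ValueError("round-trip time must be non-negative")
    # The light travels to the object and back, so halve the total path.
    return SPEED_OF_LIGHT_M_PER_S * round_trip_time_s / 2.0


def depth_map_m(round_trip_times_s):
    """Convert a 2D grid of per-pixel round-trip times into per-pixel distances."""
    return [[tof_distance_m(t) for t in row] for row in round_trip_times_s]
```

A round trip of 10 ns corresponds to a distance of roughly 1.5 m, which shows why the sensor must resolve timing differences far below a nanosecond to reach millimeter-scale depth resolution.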

Alexis Teissie, product marketing manager at LUCID Vision Labs Inc., said TOF’s direct depth measurements differentiate the technology from other 3D scanning techniques used in VGR systems. “As a result, it can be a much more self-contained solution,” he said. “Also, due to the compact size of recent TOF cameras, a much more compact system can be implemented, without any field calibration.”

LUCID Vision Labs’ Helios2+ TOF camera offers two depth processing modes on the camera: high dynamic range (HDR) and high speed. The HDR mode combines multiple exposures in the phase domain to provide accurate depth information in high-contrast, complex scenes that contain both high- and low-reflectivity objects. The high-speed mode enables depth perception using a single-phase measurement to allow for the faster acquisition speeds and higher frame rates that are compatible with moving object perception.

The moving object perception capability is important in VGR and logistics applications in which the camera, the scene it captures, or both are often on the move.

“We see more time-of-flight systems for that reason — because they can deal with objects in motion,” said Tom Brennan, president of machine vision systems integrator Artemis Vision. “It can be hard to justify a robotic solution if the process is going to have to stop. In general, we’ve found things that prioritize speed and keep things flowing faster are better, from a standpoint of what is really solving a customer’s problem.”

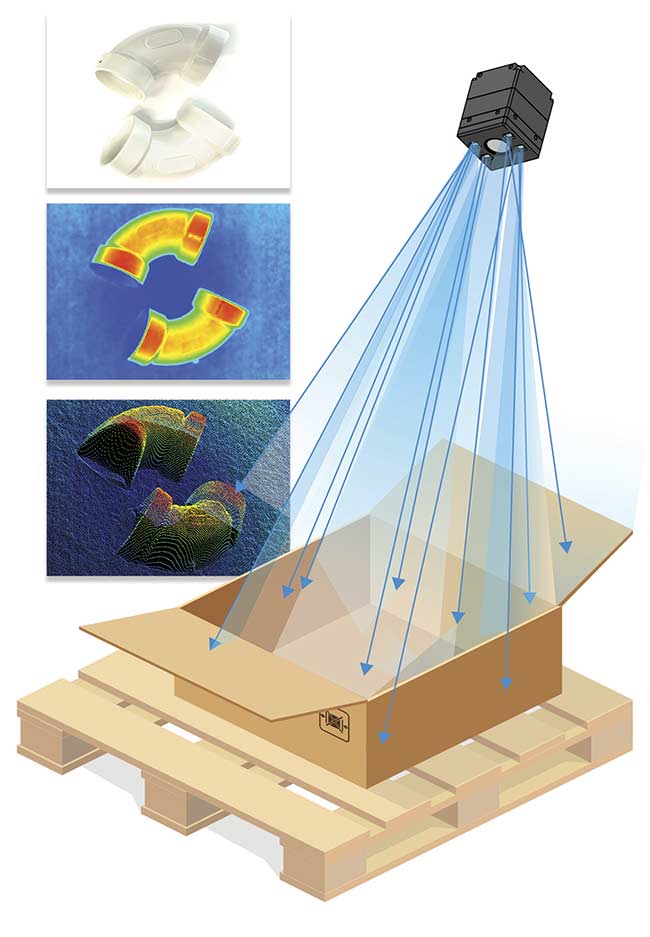

Point clouds are obtained from items varying in size, weight, and shape and located inside a package. The data sets deliver information that a robot can process to determine how to orient itself to sort the package or its contents. Time-of-flight imaging provides point cloud data that is gathered by determining the time it takes for a light signal to reflect off objects in the captured scene and return to the image sensor. Courtesy of LUCID Vision Labs.

Bringing goods into a warehouse or distribution center is one such application for which high imaging speed is key.

Induction of goods into a distribution center often involves a convergence of unstructured items that are typically mixed, said Chris Caldwell, product manager at Yaskawa Motoman Robotics. “You’ll see boxes, blister packs, bubble-wrapped packages, flats-like envelopes, and everything dumped together.”

Demand for higher throughput during induction has prompted the need for robotic arms to perform more bulk sorting at greater speeds. It is the job of these robots to select items from the mixed stack and sort common items onto corresponding conveyor belts for further sorting downstream.

“The real value of time of flight is that it has the ability to provide data at or near frame rate,” Landing AI’s Dechow said. “It is a frame rate application where we can watch movement and changes as the part moves, or as the camera moves. Even if we are taking a single frame, it is very fast.”

Time and space for precision tasks

Compared to robot-guided surgical procedures or factory automation, logistics applications are more forgiving of the accuracy of robotic movements. “With logistics, you don’t need submillimeter accuracy,” FANUC’s Bruce said. “With 5 mm of precision, or even less than that, you’re probably going to be able to pick the part.”

In today’s logistics settings, many automated tasks necessitate that a vision-guided robot’s imaging component guide it in three dimensions, which can require finer movements than a robot supported by 2D imaging is able to make. Even though accuracy tolerances are often within a range of 10 mm in logistics applications, inaccuracies in the motion of a vision-guided robot may impede the ability of the robot to optimally perform its task, Bruce said.

Unstructured bin picking is a common assignment for 3D vision-guided robots that requires greater accuracy to select the correct part. Structured illumination setups, particularly those based on stereoscopic vision, provide information on the texture of parts to help the robot differentiate among them and make the correct pick.

“If I want to pick an individual object out of a mixed bin that has been prepared for a shipping task, for example, I may need structured light imaging,” Dechow said. “I may need quite a precise structured light imaging to identify that part and provide the robot with the location.”

3D structured light systems project a pattern or grid of light onto a scene to superimpose coordinates that a camera can then incorporate into the image data that it collects.

3D imaging enables a robotic arm to operate at a range of distances and to perform tasks even when an object is located near the position of the arm. Functions such as palletization necessitate positioning the robot at a greater distance from the items to be handled. Courtesy of Balluff.

Structured light typically applies to the spatial domain, though suppliers such as Zivid incorporate a temporal component to overcome inherent challenges. Instead of projecting a strictly spatial pattern of light, Zivid’s temporal approach cycles through a series of projected patterns. This allows the camera to capture a time-coded series of images that leverage different levels of intensity at each pixel at different times.
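As a rough illustration of how a time-coded pattern sequence can be decoded one pixel at a time, the Python sketch below uses binary Gray-code stripes, a widely known temporal coding scheme. This is an assumption chosen for illustration and is not a description of Zivid’s method. Each pattern is assumed to be projected together with its inverse, and the function names are hypothetical.

```python
def gray_to_binary(gray: int) -> int:
    """Convert a Gray-coded integer to its ordinary binary value."""
    binary = 0
    while gray:
        binary ^= gray
        gray >>= 1
    return binary


def decode_pixel(intensities, inverse_intensities) -> int:
    """Recover the projector stripe index seen by a single camera pixel.

    intensities: brightness of this pixel under each pattern, coarsest first.
    inverse_intensities: brightness under the inverted version of each pattern.
    """
    gray = 0
    for lit, inverted in zip(intensities, inverse_intensities):
        # Comparing a pattern with its inverse avoids a fixed threshold,
        # so bright and dark surfaces are decoded the same way.
        bit = 1 if lit > inverted else 0
        gray = (gray << 1) | bit
    return gray_to_binary(gray)
```

Because each pixel is decoded from its own sequence of readings, no neighboring pixels are involved in the calculation.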

Raman Sharma, vice president of sales and marketing for the Americas at Zivid, said such integration of temporal information allows the system to perform all calculations at the pixel level, which eliminates any noise or loss in spatial resolution.

Zivid initially targeted bin-picking applications in which VGR systems often struggle to discern shiny or reflective objects. The glare of such objects acts much like occlusion does in image data, and the problem can be made worse by the active illumination of structured light systems.

“Cameras used in bin-picking operations often need to perform various types of filtrations,” Sharma said. “In some cases, in that same scene where you have a shiny metal object, you’ll have a matte, dark object that needs a totally different type of filter.”

Zivid’s solution pieces scenes together from several images and pattern projections to deliver overexposed and underexposed image data that provides the vision system a wide dynamic range with which to work.
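One generic way to combine overexposed and underexposed captures is sketched below in Python. It is a minimal illustration under stated assumptions (8-bit pixel values, known exposure times, hypothetical threshold values and function name), not the vendor’s algorithm: for each pixel, readings that are clipped or too dark are discarded, and the rest are scaled by exposure time and averaged.

```python
def merge_exposures(samples, low=10, high=245):
    """Estimate the relative brightness of one pixel from several exposures.

    samples: list of (pixel_value_0_to_255, exposure_time_ms) pairs.
    Returns the mean value per millisecond of the usable readings,
    or None if every reading is underexposed or overexposed.
    """
    usable = [
        value / exposure_ms
        for value, exposure_ms in samples
        if low <= value <= high  # skip clipped highlights and noisy shadows
    ]
    if not usable:
        return None
    return sum(usable) / len(usable)
```

A shiny pixel that saturates at long exposures is recovered from the shortest one, while a matte, dark pixel is recovered from the longer ones, so both can be represented in the same merged scene.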

Harnessing the power of stereo

Stereoscopic imaging mimics, and sometimes improves upon, the way human vision perceives depth. The technique uses two or more cameras positioned at fixed distances to perform a triangulation of image pixels captured from 2D planes. Each pixel collects light that extends back to the camera on a 3D ray. The location of pixels and the intersection of rays gives a three-dimensional profile of an object or a surface captured in an image.
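For a rectified pair of cameras, the ray intersection described above reduces to a short calculation: depth equals focal length times baseline divided by disparity, where disparity is the horizontal shift of the same point between the two views. The Python sketch below assumes an ideal pinhole model with identical, rectified cameras. The function and parameter names are hypothetical.

```python
def triangulate(u_left, v, u_right, focal_px, baseline_m, cx, cy):
    """Return the (x, y, z) position in meters of a point seen by both cameras.

    u_left, u_right: horizontal pixel coordinate of the point in each image.
    v: vertical pixel coordinate (the same in both images after rectification).
    focal_px: focal length in pixels; baseline_m: distance between the cameras.
    cx, cy: principal point of the left camera in pixels.
    """
    disparity = u_left - u_right
    if disparity <= 0:
        raise ValueError("disparity must be positive for a point in front of the cameras")
    z = focal_px * baseline_m / disparity
    # Back-project the left-image pixel along its ray to depth z.
    x = (u_left - cx) * z / focal_px
    y = (v - cy) * z / focal_px
    return (x, y, z)
```

With a 1000-pixel focal length and a 0.1-m baseline, a disparity of 50 pixels places the point 2 m from the cameras. Because depth is inversely proportional to disparity, depth resolution degrades as objects move farther away.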

Stereoscopic imaging is distinguished from TOF in that it can deliver both 2D and 3D image information.

The combination of 2D image data (which can be used to scan barcodes, for example) with 3D image data brings value to the technique. 2D data can be overlaid onto the 3D data to obtain both color and depth information, said Konstantin Schauwecker, CEO of Nerian Vision Technologies, a developer of 3D stereo vision solutions.

This technique is often used for object detection. “It is often very easy to make the detection on the 2D image, run a neural network, and then, once you have localized the object, you can use the 3D image to make precise dimensional measurements,” Schauwecker said.
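The detect-in-2D, measure-in-3D workflow that Schauwecker describes might look like the following Python sketch. It assumes a bounding box has already been produced by some 2D detector (not shown), that the depth map is pixel-aligned with the 2D image, and that the object face is roughly parallel to the sensor. All names are hypothetical.

```python
from statistics import median


def measure_box(depth_map_m, box, focal_px):
    """Estimate distance and physical size of a detected object.

    depth_map_m: 2D list of depths in meters, with 0 marking invalid pixels.
    box: (left, top, right, bottom) in pixels, right and bottom exclusive.
    focal_px: focal length of the camera in pixels.
    Returns (depth_m, width_m, height_m), or None if no valid depth exists.
    """
    left, top, right, bottom = box
    depths = [
        depth_map_m[row][col]
        for row in range(top, bottom)
        for col in range(left, right)
        if depth_map_m[row][col] > 0
    ]
    if not depths:
        return None
    # The median resists stray background pixels inside the box.
    z = median(depths)
    # Pinhole model: physical size = pixel size * depth / focal length.
    width_m = (right - left) * z / focal_px
    height_m = (bottom - top) * z / focal_px
    return (z, width_m, height_m)
```

The 2D step only has to localize the object, and the 3D data then converts the pixel extent into metric dimensions.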

Stereoscopic imaging can also capture data in ambient light, though, like conventional machine vision cameras, it often benefits from the use of active light sources. Such active 3D stereoscopic vision systems can incorporate structured light techniques to enhance their image data.

Despite these advantages, stereoscopic cameras can be a problematic solution for VGR due to the amount of image processing they must perform.

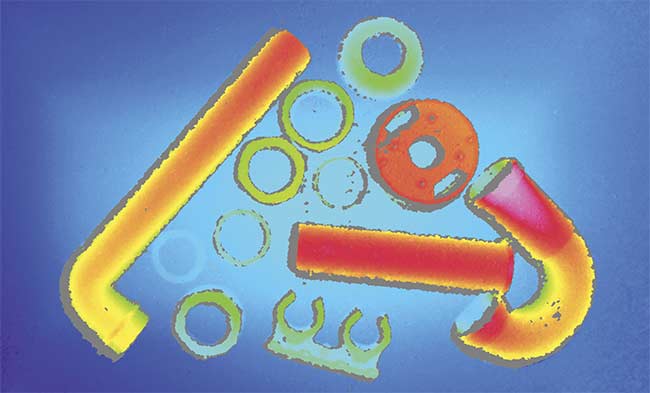

A stereoscopic image of assorted items used for plumbing. Stereoscopic imaging delivers 2D and 3D image data. Color and depth information are obtained by overlaying the 2D data onto the 3D data. Courtesy of Nerian Vision.

“The general problem with stereo vision is that because the camera outputs two ordinary images, you have to algorithmically make the depth measurement from the input images,” Schauwecker said. “Doing this effectively requires a lot of computing. I think this has hindered stereo vision from wider adoption in logistics.”

Nerian 3D stereo cameras use field-programmable gate arrays (FPGAs), which Schauwecker said are computationally efficient compared to GPUs, especially if the FPGA is tailored to specific tasks, such as ones that benefit from massively parallel processing. Processing a stereo image is considered one such task.

Despite the computations required to stereoscopically obtain, process, and deliver usable information from an image, the fast measurement rates of FPGA-based cameras are effective for mobile logistics.

The induction of goods into a warehouse often involves a convergence of items that vary in size, weight, shape, and texture. An imaging component allows a vision-guided robot to differentiate among the items. Courtesy of Yaskawa Motoman.

Nerian’s solutions find application in robot palletization and depalletization systems, which place more stringent requirements on accuracy of movement than do applications such as sorting and picking. If a robot is positioned too close to a pallet, for example, its effector will be unable to operate with the range of motion it needs to place the pallet in a desired location or even to remove it from a stack.

The 3D stereo image data can not only measure a robot’s proximity to a pallet but also guide the robot’s actions as it performs tasks, Schauwecker said.

“You are not relying on the ability to project light to a certain distance. And we are using lenses with a wider field of view than what is typically used for time-of-flight cameras.”

New directions

According to Bruce, many of the VGR systems that FANUC developed before 2015 or 2016 primarily leveraged 2D imaging and were constrained by the technology’s limitations in guiding robotic movement. The continued development of 3D imaging modalities, however, has rapidly changed this dynamic, as is evident today from their broad adoption and integration into automated systems.

This trend has prompted developers to explore combining various modalities into a single vision system.

Yaskawa Motoman’s Caldwell said that, for induction, many solutions use a combination of image sensor types — for example, 2D imaging combined with either TOF or 3D stereoscopic cameras. Alternatively, some developers have incorporated such a combination into a structured light system. The need for the robot to work quickly, to minimize double picks, and to sometimes rely on color as the only distinguishing trait necessitates a consolidation of imaging methods and approaches.

“Whether you’re using an active stereo system or a time-coded structured light system, or whatever other type of camera you might need to use, you will generally mix and match until you find the best fit for your application,” said Nick Longworth, a senior systems applications engineer at SICK Sensor Intelligence.

“Applications vary so much that it is hard to say that one modality is always best for random bin picking or best for piece picking,” he said. “It all depends on the specific task.”