Joint optimization of nontraditional, specialized optics and sophisticated signal processing improves the image formation process.

Edward R. Dowski Jr., CDM-Optics/OmniVision Technologies Inc.

Conventional imaging systems use signal processing essentially as an accessory to optics, but Wavefront Coding allows specialized optics and signal processing to be jointly optimized to form the best image. This relationship enables increased image quality, reduced size and an overall cost reduction that follows Moore's law.

Wavefront Coding is not a new concept, but it is now being applied in mobile phone cameras, where requirements for close-focusing, low-height optics and low-tolerance assembly are combined with the need for high target yields. In these systems, the technology enables optics, sensors and processors to be jointly optimized.

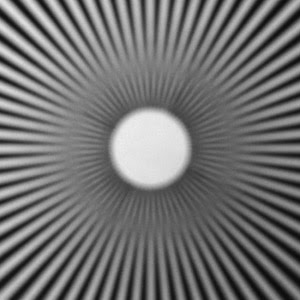

Figure 1. This image of a spoke pattern was taken 10 cm from the lens of a traditional miniature camera.

More than 30 years ago, Gerd Häusler described a method of breaking the trade-off between depth of field and light gathering by combining digital processing with a mechanical change of the imaging lens focus during exposure [1]. In 1995, W. Thomas Cathey and I described a nonmechanical method of handling the trade-off [2]. The technique improved on the former one by changing the optical wavefront so that, in effect, each spatial region of the lens system images a different region of the object volume, and all regions do so simultaneously. Without using moving parts and by acquiring only a single image, we collected image information over an increased object volume, achieving extended depth of field while controlling the effects of imaging aberrations.

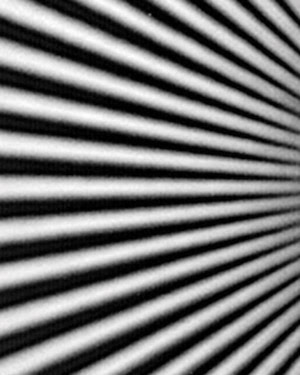

Figure 2. A cropped area from Figure 1 shows a phase change resulting from severe misfocus of a traditional lens at such a close object distance.

Conventional imaging systems are designed to generate the “best possible image” at the image plane relative to the eye. This type of system can be bulky and highly sensitive to optical and mechanical tolerances, all of which raises costs. These sensitivities cannot be overcome by changing only the optics. Wavefront Coding relies on specialized optical wavefronts in the imaging system that “code” the formed images. Signal processing then performs the “decoding” that renders all parts of the designed object volume sharp and clear.
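The code-then-decode idea can be sketched numerically in one dimension. The example below is only an illustration under stated assumptions, not the production design: it assumes a cubic phase term `alpha * x**3` in the pupil as the "code", a quadratic term `psi * x**2` as misfocus, and a Wiener filter built from the in-focus coded response as the "decoder". The function names, the test scene and all numeric values are hypothetical.

```python
import numpy as np

N_PUPIL = 512   # samples across the aperture (assumed)
N_FFT = 2048    # padded FFT length, also the length of the 1D test scene

def coded_psf(alpha, psi):
    """Incoherent PSF of a 1D pupil with phase alpha*x**3 + psi*x**2 (radians)."""
    x = np.linspace(-1.0, 1.0, N_PUPIL)
    pupil = np.exp(1j * (alpha * x**3 + psi * x**2))
    psf = np.abs(np.fft.fft(pupil, N_FFT)) ** 2
    return psf / psf.sum()

def capture(scene, psf):
    """Noise-free sensor model: circular convolution of scene and PSF."""
    return np.real(np.fft.ifft(np.fft.fft(scene) * np.fft.fft(psf)))

def wiener_decode(image, design_psf, k=1e-4):
    """Decode with a Wiener filter; k is an assumed regularization constant."""
    otf = np.fft.fft(design_psf)
    w = np.conj(otf) / (np.abs(otf) ** 2 + k)
    return np.real(np.fft.ifft(np.fft.fft(image) * w))

def rel_error(a, b):
    return float(np.linalg.norm(a - b) / np.linalg.norm(b))

def demo(alpha=90.0, psi=15.0):
    # Hypothetical scene: a few narrow Gaussian bars.
    t = np.arange(N_FFT)
    centers = [300, 340, 900, 1500, 1530]
    scene = sum(np.exp(-0.5 * ((t - c) / 6.0) ** 2) for c in centers)
    # One decoder, designed once from the in-focus coded PSF.
    design_psf = coded_psf(alpha, 0.0)
    results = {}
    for label, p in (("in_focus", 0.0), ("misfocused", psi)):
        raw = capture(scene, coded_psf(alpha, p))
        decoded = wiener_decode(raw, design_psf)
        # (error of the coded capture, error after decoding)
        results[label] = (rel_error(raw, scene), rel_error(decoded, scene))
    return results

results = demo()
```

The point of the sketch is that the same decoding filter is applied to both the in-focus and the misfocused capture; the raw coded image is blurred in either case, and decoding is what restores the detail.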

Improving focus

Wavefront Coding renders systems insensitive to a number of characteristics that commonly reduce image quality and increase costs. The code designed into the optics makes the captured images insensitive to object location, which allows, for example, a broad object volume to remain in focus in every image without the need to focus the lens. Focus using Wavefront Coding is independent of light level and also eliminates the time delay found with autofocus, during which the system finds the best focus. The specialized optics also can be designed to be insensitive to fabrication and assembly tolerances, allowing simpler and lower-cost optomechanical systems.
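The insensitivity to object location can be illustrated by comparing how much the modulation transfer function (MTF) changes with misfocus for a plain aperture and for a coded one. This is a minimal sketch, assuming a 1D pupil, a cubic phase code of strength `alpha` and a misfocus of `psi` radians at the pupil edge; those symbols and values are assumptions chosen for illustration and do not come from the article.

```python
import numpy as np

def mtf(alpha, psi, n=512, pad=2048):
    """MTF of a 1D pupil with phase alpha*x**3 + psi*x**2, from DC to cutoff."""
    x = np.linspace(-1.0, 1.0, n)
    pupil = np.exp(1j * (alpha * x**3 + psi * x**2))
    # PSF is the squared magnitude of the pupil's Fourier transform;
    # transforming the PSF gives the pupil autocorrelation, i.e. the OTF.
    psf = np.abs(np.fft.fft(pupil, pad)) ** 2
    otf = np.fft.fft(psf)
    return np.abs(otf[:n]) / np.abs(otf[0])

def misfocus_change(alpha, psi):
    """Largest MTF change, over all spatial frequencies, caused by misfocus."""
    return float(np.max(np.abs(mtf(alpha, psi) - mtf(alpha, 0.0))))

conventional = misfocus_change(alpha=0.0, psi=15.0)   # plain aperture
coded = misfocus_change(alpha=90.0, psi=15.0)         # assumed cubic code
print(f"conventional: {conventional:.3f}, coded: {coded:.3f}")
```

In this model the coded MTF is lower than the in-focus conventional MTF, but it stays nearly the same as `psi` varies, which is what lets a single fixed decoding step serve the whole object volume.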

A multidimensional advantage

In a perfect world that has perfect fabrication, an optimum number of lenses and absolutely zero signal-processing constraints, the design of wavefront-coded imaging systems with large depths of field would occur in a three-dimensional design environment. However, the realities of practical optical materials such as plastic, environmental conditions such as a wide temperature range, and a variety of assembly and fabrication tolerances yield a myriad of additional dimensions to address and control. This means that a practical design could be a multidimensional nightmare.

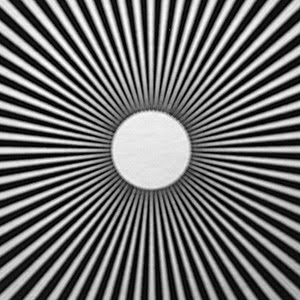

Figure 3. Another spoke pattern image was taken at 10 cm with a wavefront-coded miniature camera.

Wavefront Coding design operates within the space that represents the close connection among all the diverse dimensions of practical imaging systems. This allows all physical dimensions that influence image quality, or error space, to be manipulated and controlled. Jointly designing the lens, the signal processing and the sensor allows the error space to be mastered, achieving true joint optimization.

Design trade-offs

Because conventional imaging systems are designed to make the best possible image at the image plane (one plane of focus), aberrations occur and image quality diminishes if the object moves, if the temperature changes or if the assembly or fabrication is not just right.

However, the designer can gain freedom and flexibility by manipulating the optics through wavefront coding, so that the captured images are invariant to these changes in the error space.

With this capability, design trade-offs can achieve the maximum amount of information possible from an imaging system over a given error space. The error space also can be balanced dynamically so that physical tolerances, for example, are not treated equally. Control of tolerances that require little relative cost in production may be weighted less than other tolerances that demand a high cost to control through mechanical means. Wavefront Coding is then used to control the effect of aberrations that incur the greatest cost when controlled mechanically.
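One way to picture this unequal weighting is a merit function in which each tolerance's image-quality loss is weighted by what it would cost to control that tolerance mechanically. The sketch below is purely illustrative: the tolerance names, cost figures and loss values are hypothetical, and a real design tool would use a far richer model.

```python
def weighted_merit(quality_loss, control_cost):
    """Cost-weighted average of image-quality loss (lower is better).

    quality_loss: loss in image quality per tolerance for a candidate design.
    control_cost: relative cost of controlling each tolerance mechanically.
    """
    total = sum(control_cost.values())
    return sum(control_cost[name] / total * loss
               for name, loss in quality_loss.items())

# Hypothetical relative costs: decenter is expensive to hold mechanically.
control_cost = {"lens_decenter": 8.0, "barrel_tilt": 1.0, "thermal_focus_shift": 1.0}

# Two hypothetical candidate designs and their residual quality loss.
design_a = {"lens_decenter": 0.1, "barrel_tilt": 0.5, "thermal_focus_shift": 0.5}
design_b = {"lens_decenter": 0.5, "barrel_tilt": 0.1, "thermal_focus_shift": 0.1}

merit_a = weighted_merit(design_a, control_cost)   # 0.18
merit_b = weighted_merit(design_b, control_cost)   # 0.42
```

With equal weights, design B would look better because its average loss is smaller. Once the expensive tolerance dominates the weighting, design A wins, because it spends the coding on the aberration that is costliest to control by mechanical means.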

Focus errors

Most parameters that affect error space relate to focus. For example, in a traditional system, moving an object causes the image to blur, but Wavefront Coding allows the best image possible over a range of distances. Other aberrations can be decomposed into focuslike aberrations if one imagines that the effect of Wavefront Coding is a dynamically changing image surface.
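To put a number on a focus error, a common paraxial measure is the misfocus phase at the edge of the aperture, psi = (pi * L^2 / (4 * lambda)) * (1/f - 1/d_o - 1/d_i), where L is the aperture width, f the focal length, d_o the object distance and d_i the lens-to-sensor distance. The sketch below evaluates it for a hypothetical miniature lens; the focal length, f-number and wavelength are assumed values for illustration, not the parameters of any camera described here.

```python
import math

def misfocus_psi(aperture_m, wavelength_m, focal_m, object_m, image_m):
    """Misfocus phase (radians) at the aperture edge, paraxial approximation."""
    return (math.pi * aperture_m ** 2 / (4.0 * wavelength_m)
            * (1.0 / focal_m - 1.0 / object_m - 1.0 / image_m))

# Hypothetical fixed-focus lens: f = 4 mm at f/2.8, green light,
# sensor placed for best focus at infinity (image distance = focal length).
focal = 4e-3
aperture = focal / 2.8
wavelength = 550e-9

psi_far = misfocus_psi(aperture, wavelength, focal, math.inf, focal)
psi_near = misfocus_psi(aperture, wavelength, focal, 0.10, focal)  # object at 10 cm
waves_near = psi_near / (2.0 * math.pi)

print(f"far: {psi_far:.2f} rad, near: {psi_near:.2f} rad ({waves_near:.2f} waves)")
```

Under these assumptions an object at 10 cm produces roughly 29 radians of misfocus, or between four and five waves, which is severe for a traditional lens. Temperature drift or field curvature can be expressed in the same units by changing the effective image distance.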

Field curvature, for example, is misfocus as a function of image position and can be controlled by using a curved image plane. Aberrations in focus related to temperature can be dynamically controlled by changing the image plane without resorting to glass optics or sophisticated mounting materials. Even comatic aberrations, which are commonly produced through misalignment of optical elements during assembly, have a component related to focus and can be dealt with using Wavefront Coding. The trade-offs that are part of any design still exist, but this technology allows optimization of the optics and signal processing together.

Figure 4. A cropped area from Figure 3 shows high modulation of the transfer function for the wavefront-coded system.

The manner in which the system is optimized for each application and the trade-offs selected because of the application requirements are under the designer’s control. For example, a designer may make element alignment and form error less accurate and also use additional signal processing, resulting in an overall cost savings without a decrease in image quality. Any given level can be optimized when one considers how each dimension influences the system goals and costs.

For mobile phone cameras, lining up a subassembly is a challenge. The barrel, with its three or four resident lenses, must be attached to a sensor. Often a lens maker delivers the subassembly to a module maker, who creates and populates the board based on mechanical tolerances that may be many thousandths of an inch — an enormous measurement compared with mandatory optical precision. The result is the status quo in mobile phone imaging quality today — a situation that can be solved only with joint optimization.

Meet the author

Edward R. Dowski Jr. is co-founder and president of CDM Optics in Boulder, Colo.

References

1. Gerd Häusler (1972). A method to increase the depth of focus by two step image processing. OPT COMMUN, Vol. 6, pp. 38-42.

2. Edward R. Dowski Jr. and W. Thomas Cathey (1995). Extended depth of field through wavefront coding. APPL OPT, pp. 1859-1866.